While Mythos 5 remains largely unconstrained for restricted government and trusted enterprise partners, Fable 5 is wrapped in a sophisticated safety perimeter. If Fable 5 detects a prompt drifting toward high-risk topics (such as cyberwarfare exploits, advanced biology, or chemical synthesis), it doesn’t simply return a generic “I can’t answer that” refusal. Instead, the query falls back to Claude Opus 4.8, Anthropic’s next-most-capable model, which handles the response safely.

Today we’re launching Claude Fable 5: a Mythos-class¹ model that we’ve made safe for general use.

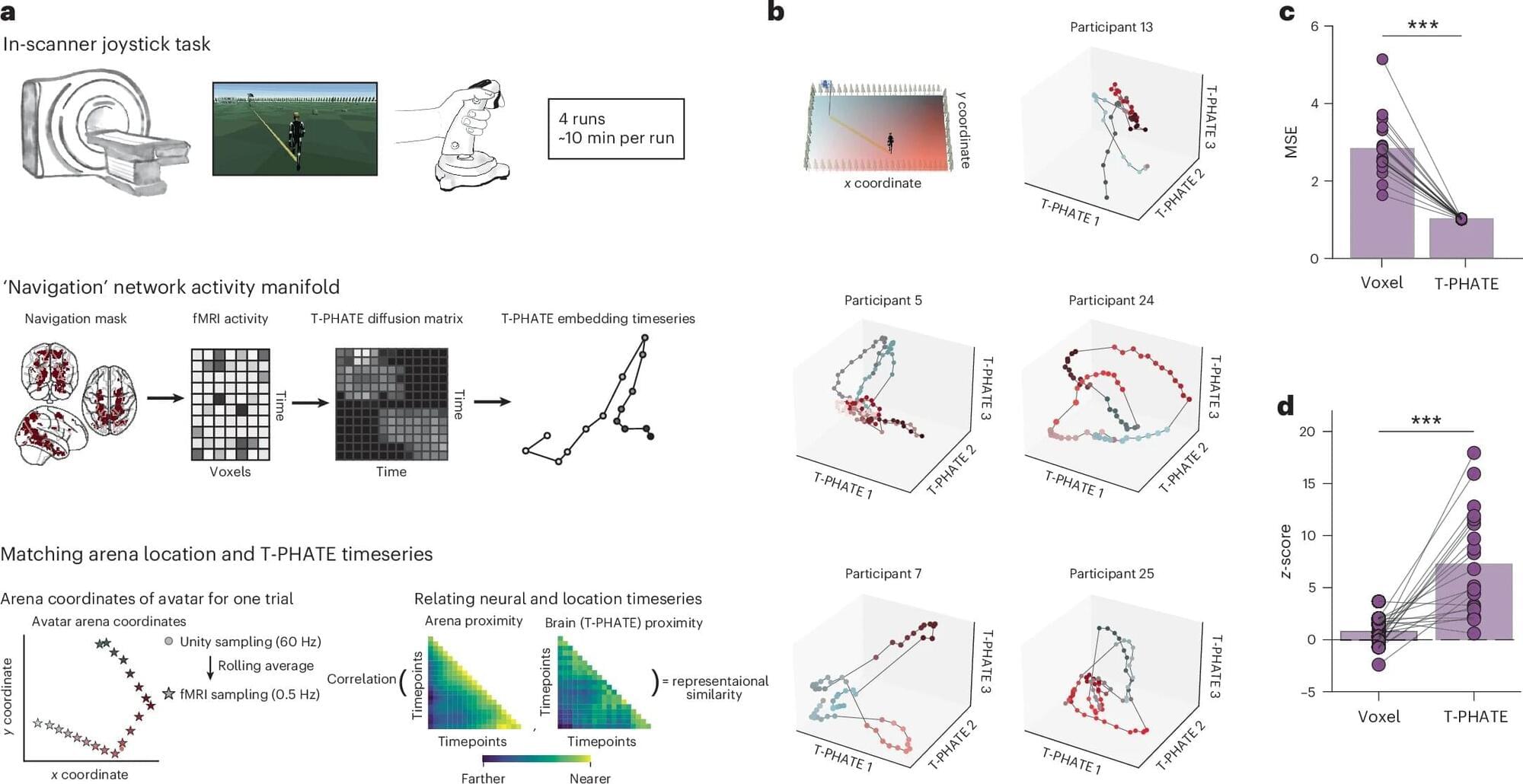

Fable 5’s capabilities exceed those of any model we’ve ever made generally available. It is state-of-the-art on nearly all tested benchmarks of AI capability, showing exceptional performance in software engineering, knowledge work, vision, scientific research, and many other areas. The longer and more complex the task, the larger Fable 5’s lead over our other models.

Releasing a model this capable comes with risks. Without safeguards, Fable 5’s capabilities in areas like cybersecurity could be misused to cause serious damage. We’ve therefore launched the model with safeguards that route queries on some topics to our next-most-capable model, Claude Opus 4.8, which responds instead. To release the model both safely and quickly, we’ve tuned these safeguards conservatively: they’ll sometimes catch harmless requests, though on average they trigger in fewer than 5% of sessions. With more capable models arriving in the coming months, we’re working to improve our safeguards and reduce false positives as quickly as we can.
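To make the fallback behavior concrete, here is a minimal sketch of how routing of this kind could look in principle. It is an illustration under stated assumptions, not a description of the actual safeguards: the model labels, the `route` function, and the keyword-based `toy_risk_check` are all hypothetical, and a real safeguard would be far more sophisticated than a keyword match.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical labels for illustration only; these are not real API model identifiers.
PRIMARY_MODEL = "fable-5"
FALLBACK_MODEL = "opus-4.8"


@dataclass(frozen=True)
class RoutingDecision:
    model: str     # which model label would answer the prompt
    flagged: bool  # whether the safeguard check fired


def toy_risk_check(prompt: str) -> bool:
    """Stand-in for a safeguard classifier: a naive, case-insensitive keyword match.

    Assumption: this exists only so the example runs end to end.
    """
    flagged_terms = ("exploit", "pathogen", "synthesis route")
    lowered = prompt.lower()
    return any(term in lowered for term in flagged_terms)


def route(
    prompt: str,
    is_high_risk: Callable[[str], bool] = toy_risk_check,
) -> RoutingDecision:
    """Send flagged prompts to the fallback model and all others to the primary model."""
    flagged = is_high_risk(prompt)
    model = FALLBACK_MODEL if flagged else PRIMARY_MODEL
    return RoutingDecision(model=model, flagged=flagged)
```

In this toy version, `route("Summarize this quarterly report")` selects the primary label, while `route("How do I exploit this tax deduction?")` is flagged and falls back even though the request is harmless. That second case is the kind of false positive a conservatively tuned check produces.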