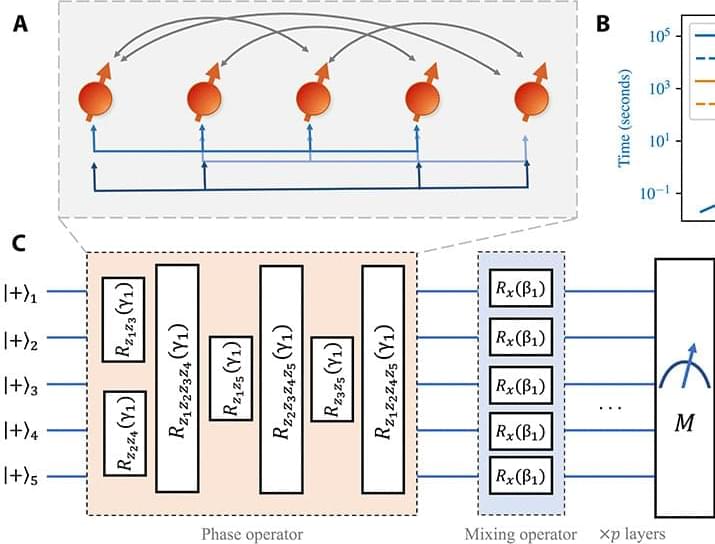

We study the scaling of QAOA TTS with the problem size on the low autocorrelation binary sequences (LABS) problem (15, 16), also known as the Bernasconi model in statistical physics (17, 18). The LABS problem has applications in communications engineering, where the low autocorrelation sequences are used for designing radar pulses (15, 19). To solve LABS, one has to produce a sequence of N bits that minimizes a specific quartic objective.

We choose LABS to study the scaling of QAOA TTS for the following three reasons. First, the complexity of LABS grows rapidly, with optimal solutions known only for N ≤ 66 and the best heuristics producing approximate solutions of quality decaying with N for N 200 (20, 21). This makes it a promising candidate problem, since only a few hundred qubits are required to tackle classically intractable instances. Second, the performance of classical solvers for LABS has been benchmarked (20, 21) in terms of the scaling of their TTS with problem size. Since optimal solutions are only known for N ≤ 66, the scaling of TTS for all classical solvers is obtained by fitting results for N ≤ 66. We reproduce these results and observe that that the scaling of classical solvers at N ≤ 40 matches the behavior for N up to 66 reported in the literature. This provides evidence that the scaling we observe for QAOA at N ≤ 40 will similarly extrapolate to larger N. Third, LABS has only one instance per problem size N. Combined with the hardness of LABS, this makes it possible to reliably study the scaling of QAOA at large problem sizes, where simulating tens or hundreds of random instances would be computationally infeasible.

We obtain the scaling by performing noiseless exact simulation of QAOA with fixed schedules. Our results are enabled by a custom algorithm-specific graphics processing unit (GPU) simulator (22), which we execute using up to 1,024 GPUs per simulation on the Polaris supercomputer accessed through the Argonne Leadership Computing Facility. We find that the TTS of QAOA with number of layers p = 12 grows as 1.46N, which is improved to 1.21N if combined with quantum minimum finding. This scaling is better than that of the best classical heuristic, which has a TTS that grows as 1.34N. We note that we do not propose any new quantum algorithms in this work. Instead, we study a general quantum optimization heuristic with broad applicability (namely, QAOA) and make no specific modifications to adapt it to the LABS problem.