One of the major challenges for nanotechnology is the diagnosis and treatment of BBB-related dysfunctions involving stroke, brain tumors, and cancer. Tight junction (TJ) barriers protect the CNS. They are found at three main sites within the CNS: the brain endothelium, the arachnoid epithelium, and the choroid plexus epithelium (Figure 3, Abbott et al., 2006). The BBB consists of endothelial cells connected by tight junctions that separate the circulating blood from the brain extracellular fluid. The BBB therefore controls the entry of biomolecules into the brain and protects it from many common bacterial infections. However, the BBB also limits nanomedicine approaches. For instance, because of the BBB, delivering drugs to the brain to treat tumors or neurodegenerative diseases such as Alzheimer’s is a crucial challenge. The majority of diagnosed brain tumors are currently treated with surgery, radiation, and chemotherapy; nanoscience and nanotechnology could offer a promising solution to this challenge. Several comprehensive reviews cover the interaction of the BBB with nanomaterials, focusing on various methods to transfer nanomaterials across the BBB (Chen and Liu, 2012; Khawli and Prabhu, 2013; Krol et al., 2013).

Figure 4 (Chen and Liu, 2012) presents the main well-recognized transport pathways across the BBB, which are commonly used for carrying solute molecules. Among the pathways shown in Figure 4, route “g” is the most suitable for drug delivery via liposomes or nanoparticles. A summary of the conventional methods used for BBB permeability assessment is given by Stam (2010).

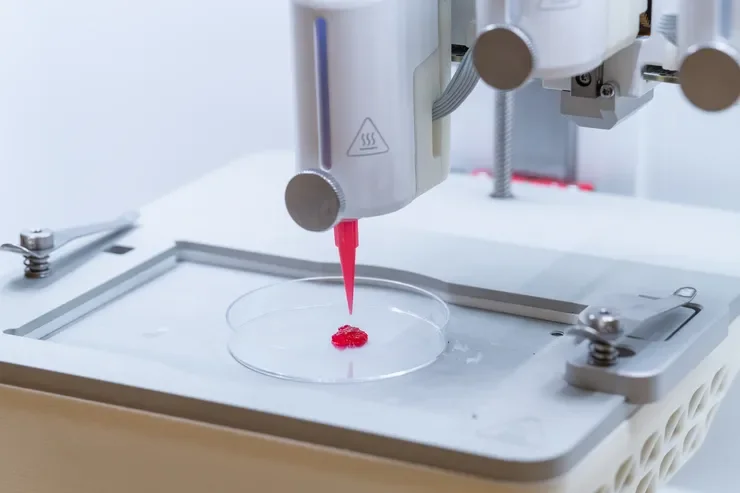

The different approaches and routes for transporting drugs across the BBB are summarized in Table 1. Biocompatible nanomaterials such as nanoparticles (NPs), liposomes, and supramolecular aggregates are promising drug carriers because their size can be tuned to suit transport across the BBB. In addition, their surfaces can be functionalized to facilitate that transport. The cytotoxicity of NPs must be precisely monitored, using well-recognized methodologies (Mahmoudi et al., 2010, 2011a; Mao et al., 2013), to ensure their biocompatibility. Surface functional groups enhance BBB permeability through mechanisms such as adsorptive-mediated transcytosis and receptor-mediated transcytosis. As an example, lactoferrin is a ligand whose receptor is located on cerebral endothelial cells; it facilitates the transport of NPs across the BBB by receptor-mediated transcytosis (Qiao et al., 2012).