Standardizing how researchers calculate the helium generated as a byproduct in the materials of advanced fission and fusion energy systems could improve reactor safety and longevity, according to a study led by University of Michigan Engineering with collaborators at Oak Ridge National Laboratory and its management contractor, UT-Battelle.

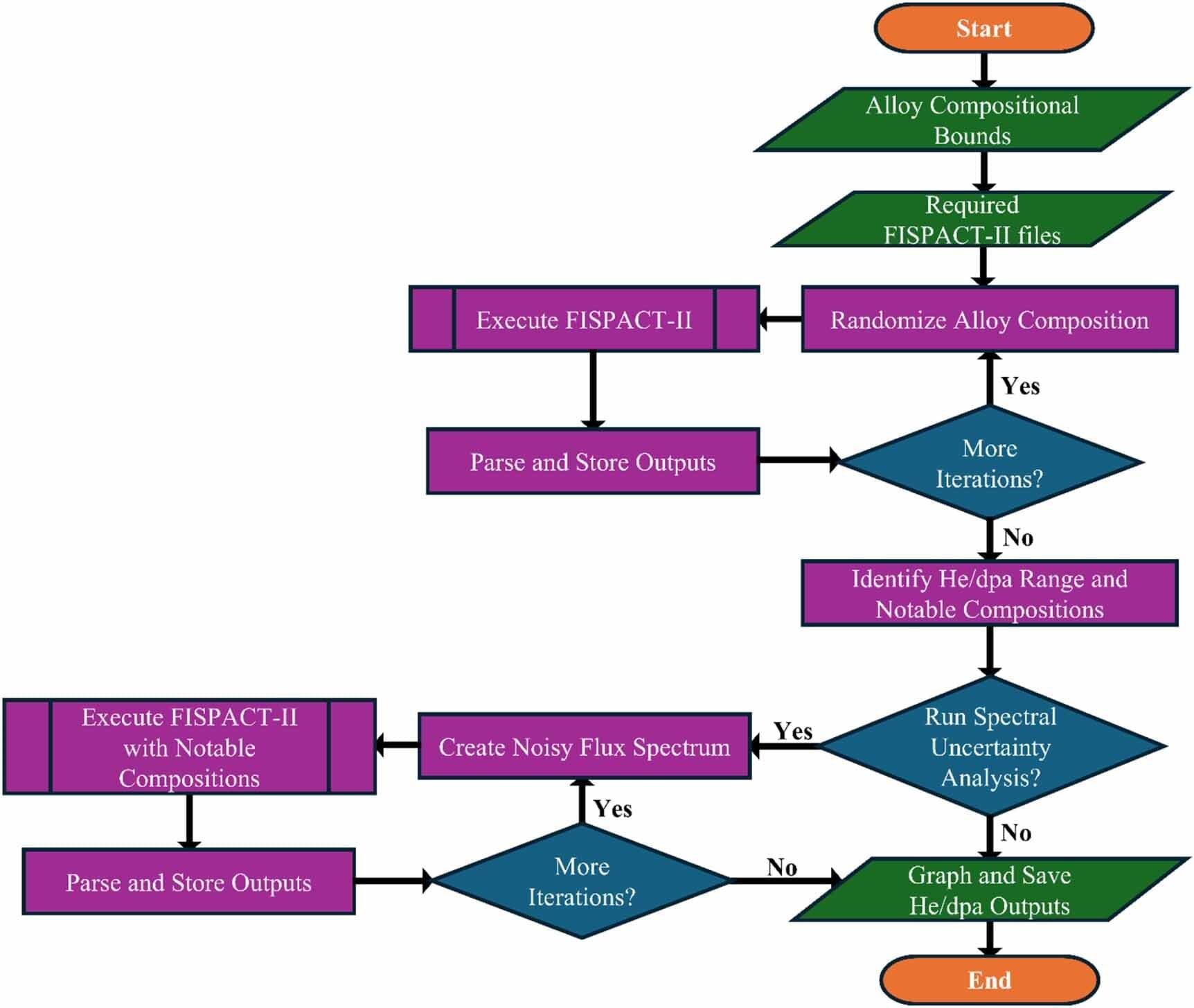

Through a series of simulations, the researchers found that both modeling assumptions and key alloying elements, such as carbon, nitrogen and nickel, significantly influence helium generation predictions. If these effects are not accounted for, real-world reactors could accumulate more helium than predicted, leading to faster component failure as materials swell and become brittle.

“If used, our reporting methods will improve the experimental and modeling fidelity of the nuclear materials databases being generated both domestically and internationally, driving the rapid deployment of advanced nuclear,” said Kevin Field, a professor of nuclear engineering and radiological sciences at U-M and corresponding author of the study, which was published in the Journal of Physics: Energy.