Cambium launches ApexShield 3000 coating that withstands temperatures up to 800°F for hypersonic and aerospace applications.

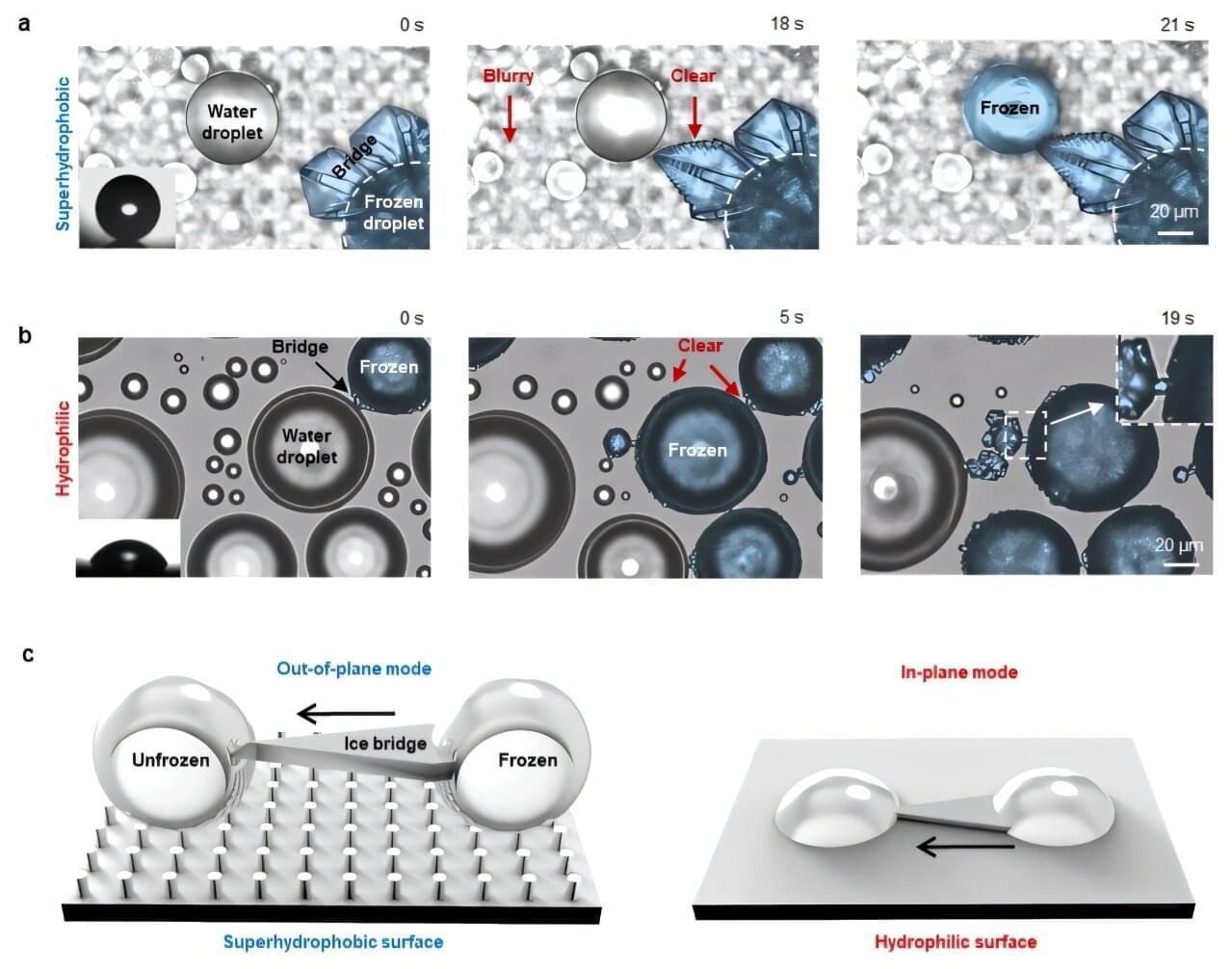

A research team led by Professor Nenad Miljkovic in The Grainger College of Engineering at the University of Illinois Urbana-Champaign has published a breakthrough study in Nature Physics. The work reports the first experimental discovery of a previously unknown frost propagation mechanism—a “suspended ice bridge”—offering new pathways for anti-frosting surface design.

Frost formation plays a critical role in many engineering systems, including air-source heat pumps, refrigeration systems and aerospace applications. At the microscopic level, frost mainly spreads through the formation of “ice bridges” that connect neighboring supercooled liquid droplets, enabling freezing to propagate rapidly across a surface. For decades, these ice bridges were widely assumed to grow along the solid surface.

This assumption, largely based on conventional top-view imaging, has shaped existing theoretical models and anti-frosting strategies. However, the Illinois team’s study reveals that this long-held view is incomplete.

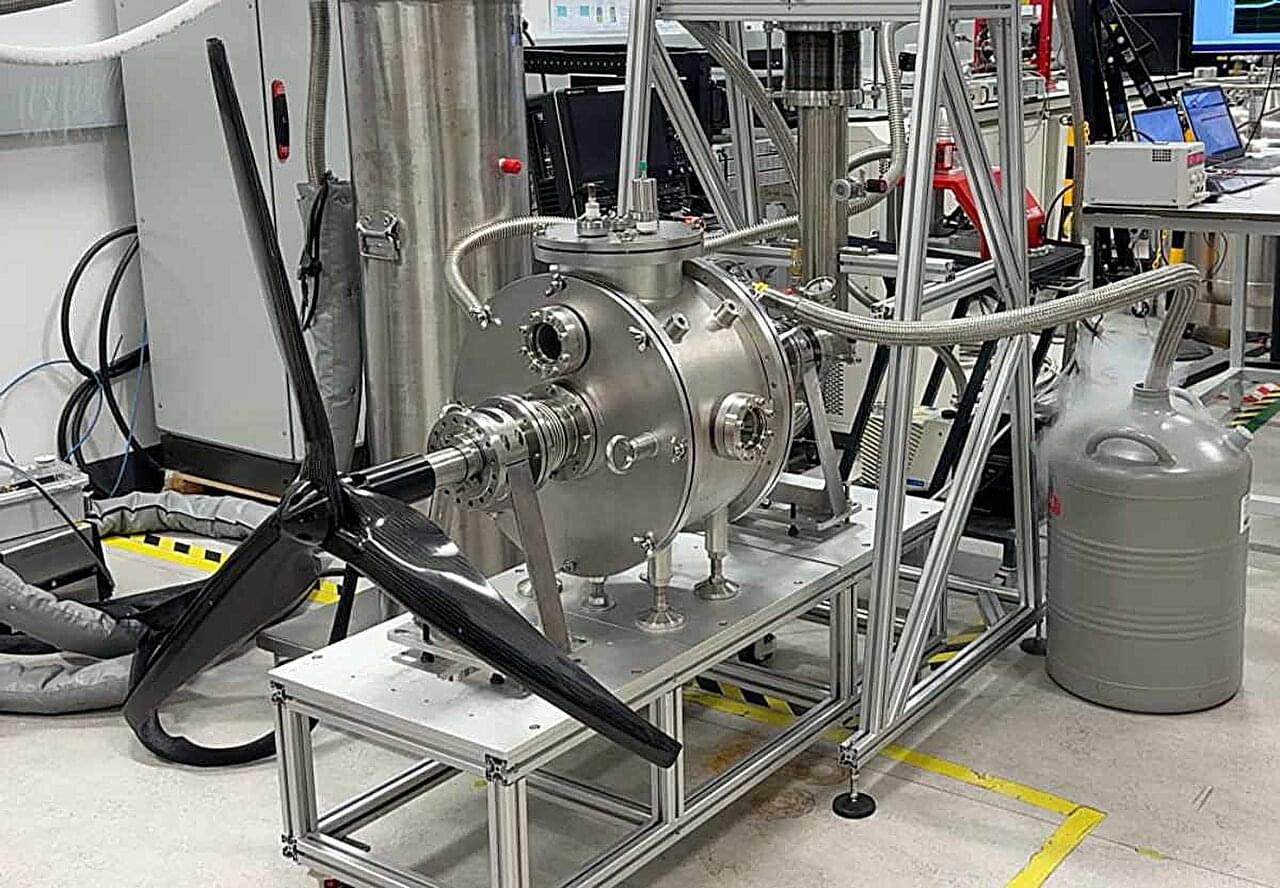

Researchers at a Scottish university have demonstrated a 100kW fully superconducting aviation motor that could help pave the way for electric aircraft.

The prototype system, created by the Applied Superconductivity Laboratory (ASL) at the University of Strathclyde in Glasgow, represents one of the first attempts in the world to develop a fully superconducting axial-flux motor for aviation.

The motor uses high-temperature superconducting (HTS) technology to carry very large electrical currents with almost no resistance when cooled to cryogenic temperatures: 20 kelvin (K), or −253 °C.
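The Celsius figure follows from the fixed 273.15-degree offset between the kelvin and Celsius scales (rounded to −253 °C in the text):

$$
T_{\mathrm{C}} = T_{\mathrm{K}} - 273.15 = 20 - 273.15 = -253.15\ {}^{\circ}\mathrm{C}
$$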

John Nash was born on June 13, 1928, in Bluefield, West Virginia, a former coal town nestled deep in the Appalachian Mountains. As a young boy, Nash was solitary, bookish, and introverted. His father, John Sr., was a quiet engineer with an incisive mind. His mother, Virginia, a former teacher who was just as sharp, had big dreams for her son, pushing him to read at four, learn Latin, and skip a grade at school.

The first hint of John Nash’s math talent came in fourth grade, when a teacher told Virginia that the boy couldn’t do the math. Virginia laughed: she knew her son was simply finding his own route to the answers, even on simple problems. In high school, John could redo his teachers’ clunky proofs in just a few elegant steps. He was one of ten national winners of the George Westinghouse Award, which gave him a full scholarship to the Carnegie Institute of Technology. He moved from engineering to chemistry before discovering his passion: mathematics.

He was accepted into Princeton University, which at the time was to mathematicians what Detroit was, and still is, to cars. Nash first wowed his peers with an elegantly playable board game, which they dubbed “Nash” and which later reached the market as Hex. He then immersed himself in one of the sexiest math fields of the day, game theory, which describes strategies in competition, whether in card games or business. His deceptively simple doctoral thesis would later reorient the field of economics, although no one, not even Nash, foresaw its potential.

I recorded this interview 13 years ago.

It should feel dated by now. It doesn’t. It feels like a prophecy.

Back in 2013, I sat down with Dr. Ann Cavoukian, then Information and Privacy Commissioner of Ontario and the mind behind Privacy by Design. She told me privacy was not dead. She told me security and freedom were not a trade-off. She told me metadata reveals more about you than the content ever could.

Then she said something I have never been able to shake:

“We have to protect privacy globally, or we protect it nowhere.”

Think about where we are now. Surveillance is the business model. Your data trains systems you will never see. The “nothing to hide” crowd got louder, and the borders she warned about got thinner. She saw all of it coming.

When it comes to snow groomers, excavators or crane vehicles, how can their operation be optimized even in difficult conditions and made safer for people in and around the vehicle? An international research team, including the Institute of Visual Computing at Graz University of Technology (TU Graz), investigated this question as part of the THEIA-XR project.

The researchers aimed to improve human-machine interaction through the use of extended reality technologies. The focus was on the operator, whose field of perception was to be expanded without negatively affecting control performance. The work is published in the journal Computers & Graphics.

When working with snow groomers, for example, the team from TU Graz found that data glasses or VR headsets tend to be counterproductive, while information projected via a repurposed disco laser proved very helpful.

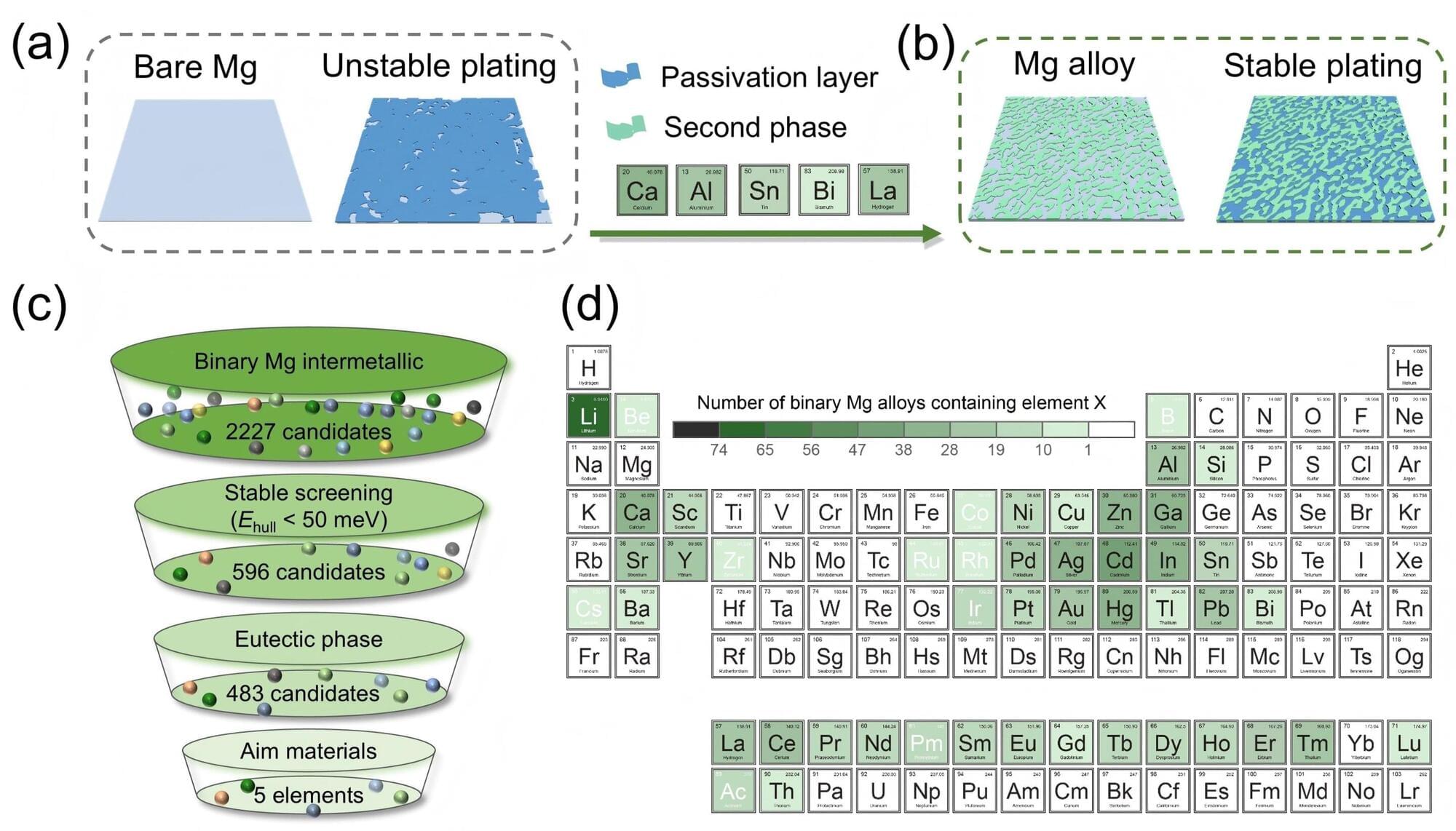

The modern world runs on invisible energy. Hidden inside smartphones, laptops, and electric vehicles are batteries that quietly power everyday life. As society becomes increasingly dependent on portable and sustainable energy, developing compact and reliable battery technology has become one of the most important technological challenges of our time.

Lithium-ion batteries currently dominate the battery industry, but alternatives that could offer improved safety, lower cost, and higher energy density are being actively explored. Solid-state magnesium batteries have long been considered a promising next-generation energy technology. However, instability inside these batteries remains a major obstacle to their development.

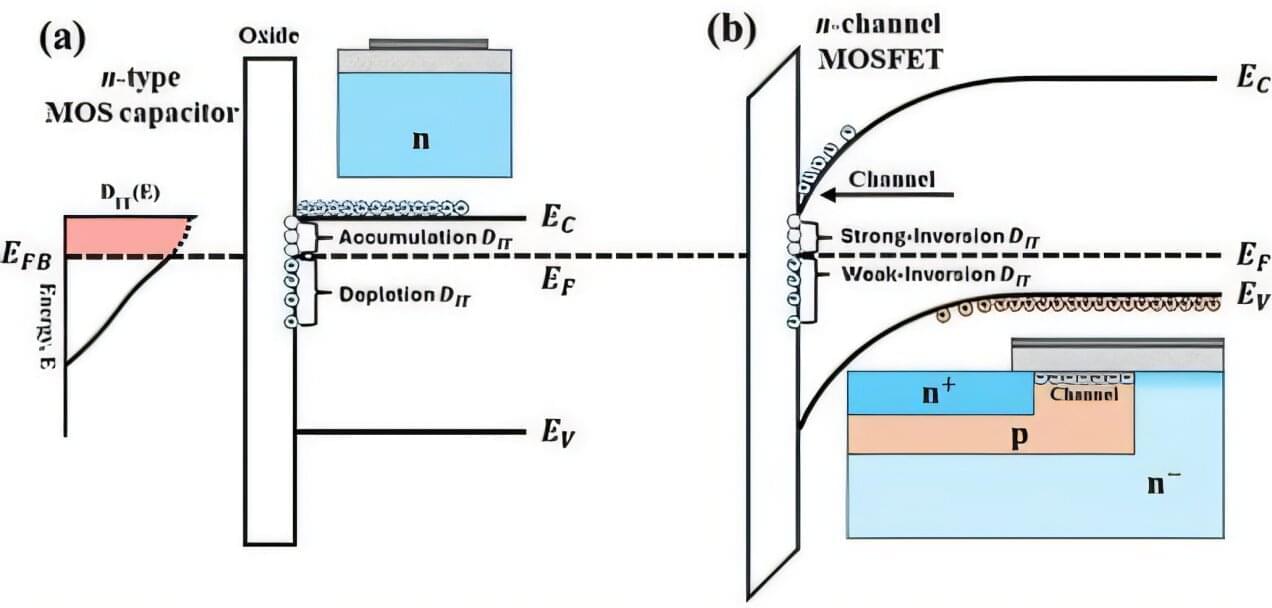

Researchers at Sandia National Laboratories and Auburn University have developed a new method to more accurately detect atomic-scale defects in electronic materials, an advance that could help improve technologies ranging from electric vehicles to high-power electronics. The study, appearing in the Journal of Applied Physics, addresses a longstanding challenge in understanding what happens at the critical boundary where a semiconductor meets an insulating layer.

At this interface, microscopic defects can trap electrical charge and quietly degrade a device, even when it otherwise appears to function normally. These defects can limit efficiency and increase electrical losses in advanced semiconductor devices.

Scientists commonly study these defects by comparing how a device responds to slow and fast electrical signals. However, the technique depends on knowing a key device property, the insulator capacitance, with very high accuracy. Even tiny errors can produce misleading results, sometimes making it appear that far more defects exist than are actually present.
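To see why the insulator capacitance matters so much, here is a minimal sketch of the classic high-low frequency capacitance method, which we assume is the "slow vs. fast signal" comparison described above; the source does not name the exact technique, and this is not the Sandia/Auburn method. The function name and all numbers are hypothetical, chosen only for illustration. Each measured capacitance is corrected for the series insulator capacitance `c_ox` before the difference is taken, so when a measurement sits close to `c_ox`, a small error in `c_ox` is strongly amplified.

```python
# Elementary charge (C), exact SI value
Q_E = 1.602176634e-19


def interface_trap_density(c_lf, c_hf, c_ox):
    """Estimate interface trap density D_it (cm^-2 eV^-1) with the
    high-low frequency capacitance method (assumed technique).

    All capacitances are per unit area, in F/cm^2:
      c_lf: low-frequency (slow-signal) capacitance
      c_hf: high-frequency (fast-signal) capacitance
      c_ox: insulator capacitance
    """
    if not (c_ox > c_lf > 0 and c_ox > c_hf > 0):
        raise ValueError("expected 0 < c_lf, c_hf < c_ox")
    # Remove the series insulator capacitance from each measurement
    # (1 / (1/C - 1/C_ox)); traps respond only to the slow signal, so the
    # difference between the two corrected values is the trap capacitance.
    c_it = (c_ox * c_lf / (c_ox - c_lf)) - (c_ox * c_hf / (c_ox - c_hf))
    return c_it / Q_E


if __name__ == "__main__":
    # Hypothetical measurement at a single gate bias (illustrative only)
    c_lf, c_hf = 3.0e-7, 2.5e-7
    c_ox_true = 3.45e-7  # roughly a 10 nm SiO2 layer

    d_true = interface_trap_density(c_lf, c_hf, c_ox_true)
    d_off = interface_trap_density(c_lf, c_hf, 0.98 * c_ox_true)  # C_ox 2% low
    print(f"D_it, correct C_ox: {d_true:.2e} cm^-2 eV^-1")
    print(f"D_it, C_ox 2% low:  {d_off:.2e} cm^-2 eV^-1")
    print(f"apparent excess:    {100 * (d_off / d_true - 1):.0f}%")
```

With these made-up values, underestimating the insulator capacitance by just 2% inflates the extracted trap density by more than 20%, the kind of misleading "extra defects" the paragraph describes.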

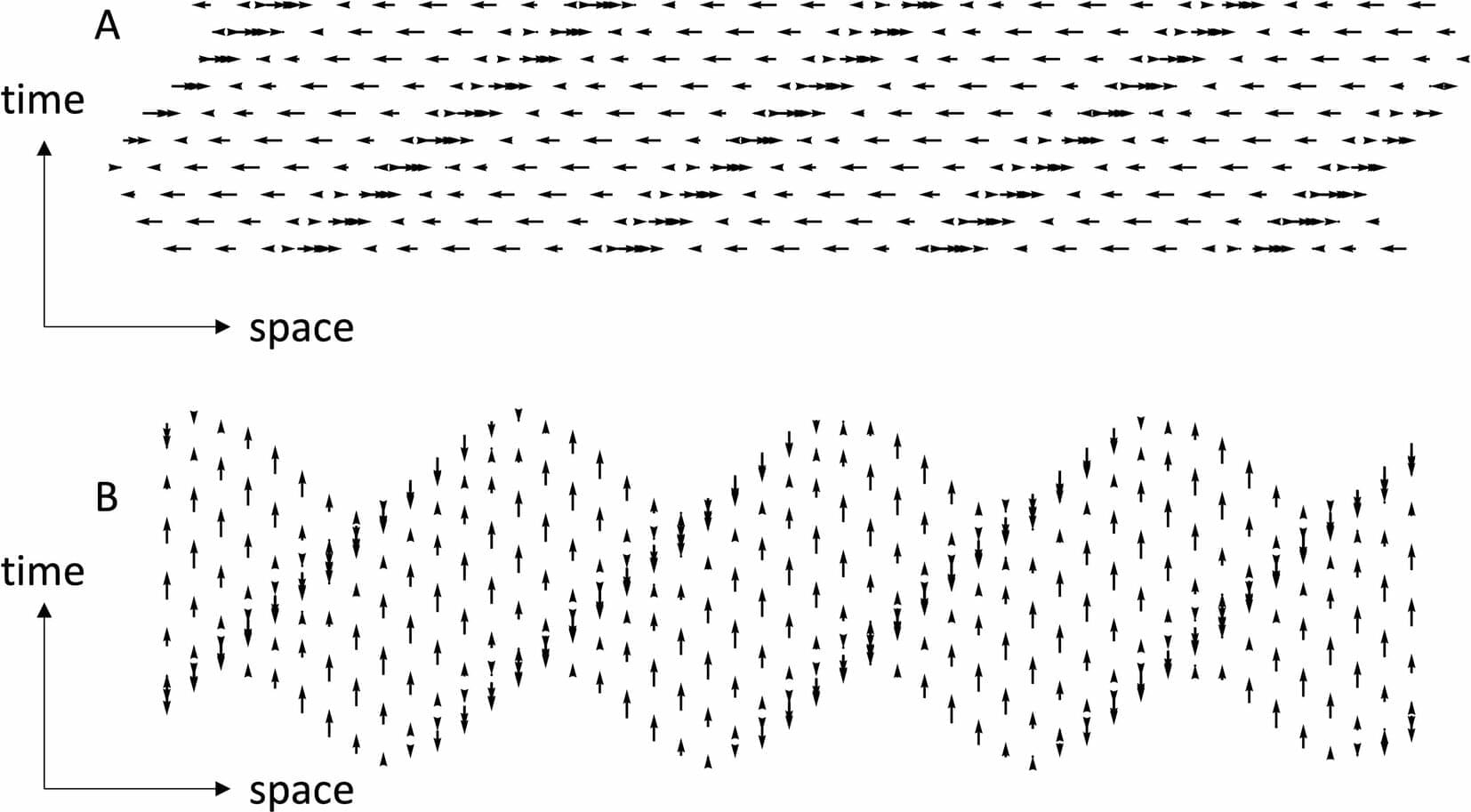

An international team of scientists has developed a new analysis of how sound waves behave, revealing surprising effects that have largely been overlooked for decades. In the new paper in Scientific Reports, which was led by researchers from City St George’s, University of London, the team explored how sound waves move through air and how those movements might be perceived visually.

Sound travels as a longitudinal wave: air molecules vibrate back and forth along the direction the wave travels, rather than side to side like a vibrating violin string. These vibrations are usually assumed to be smooth and regular, an assumption that underpins acoustics and the description of some types of seismic waves. However, the new theoretical analysis of longitudinal wave motion shows that sound waves behave very differently once they become strong enough (i.e., above 160 dB at 10 kHz, which is similar to the noise level inside a high-pitched jet engine), so the earlier assumptions hold only for moderate sounds.
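For a sense of scale at the quoted threshold, the sketch below applies the standard small-amplitude (linear) plane-wave relations, which are exactly the moderate-sound assumptions the paper questions, so treat the result as an order-of-magnitude estimate rather than the authors' analysis. It assumes the dB figure is an rms sound pressure level re 20 µPa, and it assumes air at about 20 °C (density 1.204 kg/m³, sound speed 343 m/s); the function name is hypothetical.

```python
import math

P_REF = 20e-6    # reference pressure for SPL in air (Pa)
RHO_AIR = 1.204  # assumed air density at ~20 °C (kg/m^3)
C_AIR = 343.0    # assumed speed of sound at ~20 °C (m/s)


def linear_plane_wave(spl_db, freq_hz, rho=RHO_AIR, c=C_AIR):
    """Small-amplitude estimates for a sinusoidal plane sound wave.

    Returns (peak pressure in Pa, peak particle velocity in m/s,
    peak particle displacement in m, wavelength in m).
    """
    p_rms = P_REF * 10 ** (spl_db / 20)  # SPL -> rms pressure
    p_peak = math.sqrt(2) * p_rms        # sinusoid: peak = sqrt(2) * rms
    omega = 2 * math.pi * freq_hz
    u_peak = p_peak / (rho * c)          # particle velocity amplitude
    xi_peak = u_peak / omega             # particle displacement amplitude
    wavelength = c / freq_hz
    return p_peak, u_peak, xi_peak, wavelength


if __name__ == "__main__":
    for level in (60, 160):
        p, u, xi, lam = linear_plane_wave(level, 10_000)
        print(f"{level} dB @ 10 kHz: p={p:.3g} Pa, u={u:.3g} m/s, "
              f"xi={xi:.3g} m, wavelength={lam:.3g} m")
```

Under these assumptions, 160 dB at 10 kHz corresponds to a peak molecular displacement of roughly 0.1 mm against a wavelength of about 34 mm, about 100,000 times larger than at a 60 dB conversational level.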

Using computer simulations, the researchers (Professor Christopher Tyler and Professor Joshua Solomon at City St George’s and Professor Stuart M. Anstis of the University of California, San Diego) created animations in which each dot represents an air molecule. Each dot moves back and forth in place, slightly out of step with its neighbors. This tiny delay between neighboring dots makes a wave appear to travel through space even though no dot leaves its place, just as with real sound.
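The phase-delay idea can be reproduced in a few lines. This is a minimal sketch assuming a simple sinusoidal (small-amplitude) wave on a line of dots; the function names and parameters are hypothetical and are not the researchers' simulation code. Each dot oscillates about its own rest position, and its phase lags its left neighbor's by a fixed amount, so the bunching of dots drifts to the right at speed `frequency * wavelength`.

```python
import math


def dot_positions(n_dots, spacing, amplitude, wavelength, frequency, t):
    """Positions at time t of dots ("air molecules") along a line.

    Dot i rests at x0 = i * spacing and oscillates about x0. Its phase lags
    its left neighbor's by k * spacing, so the pattern of compressions travels
    to the right, while each dot stays within `amplitude` of x0.
    """
    k = 2 * math.pi / wavelength     # wavenumber
    omega = 2 * math.pi * frequency  # angular frequency
    return [
        i * spacing + amplitude * math.sin(omega * t - k * i * spacing)
        for i in range(n_dots)
    ]


def render(positions, width):
    """Draw dots as '*' on a text line (overlapping dots mark compressions)."""
    row = [" "] * width
    for x in positions:
        col = int(round(x))
        if 0 <= col < width:
            row[col] = "*"
    return "".join(row)


if __name__ == "__main__":
    # Print successive frames: the dense and sparse regions drift rightward.
    for frame in range(8):
        t = frame * 0.125
        print(render(dot_positions(30, 2.0, 0.8, 16.0, 1.0, t), 62))
```

Running it prints eight frames in which clusters of dots shift to the right from line to line, although every individual dot only wobbles around its starting column.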