VIEWPOINT: DeepSouth could help unlock the secrets of the mind and advance AI.

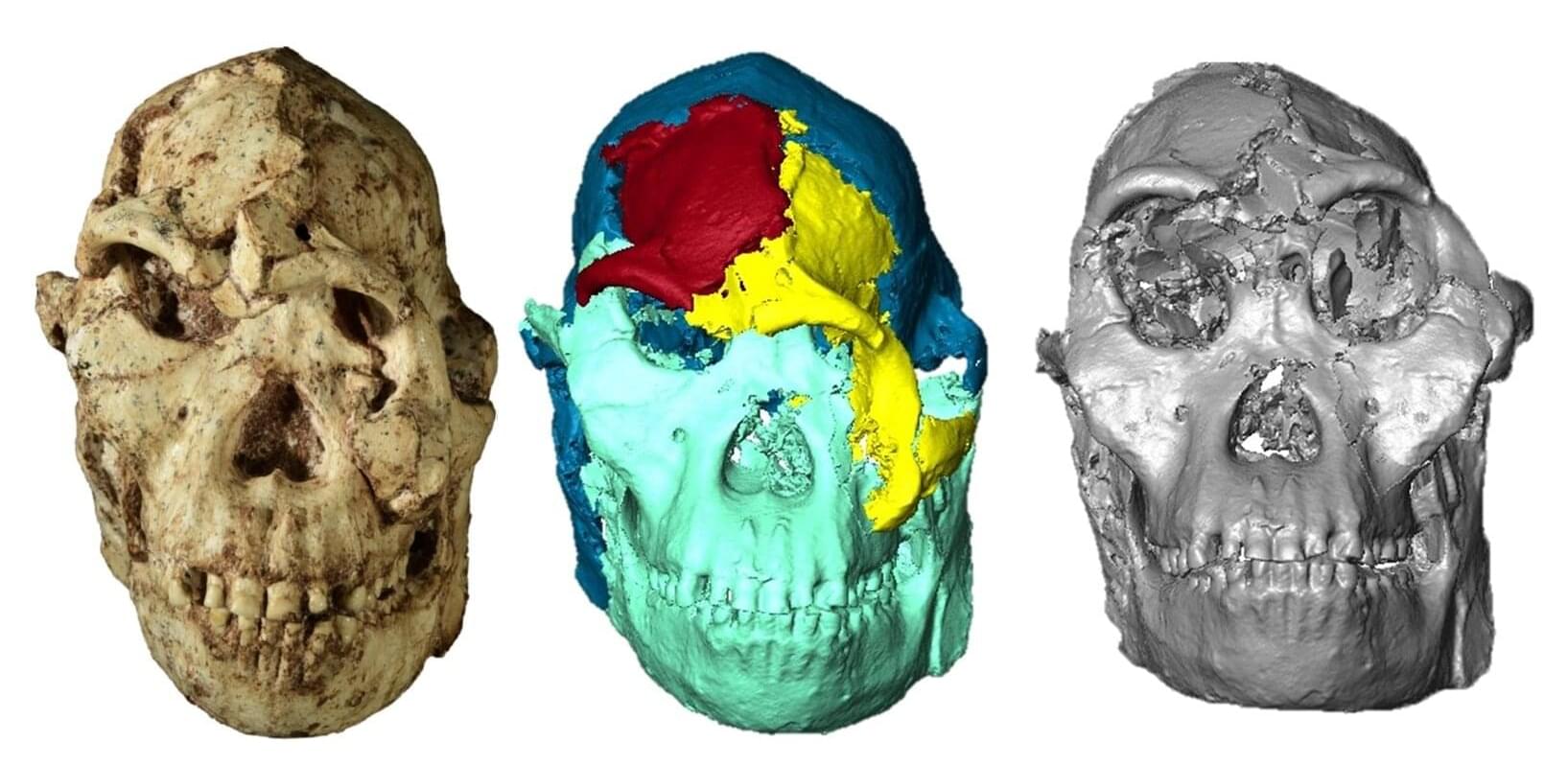

Identified as the most complete Australopithecus fossil discovered to date, “Little Foot” was buried in sediments whose movement and weight caused fractures and deformations, making analysis of its skull, and more particularly its face, difficult. This anatomical region, which is essential for understanding the adaptations of our ancestors and relatives to their environment, has now been virtually reconstructed for the first time by a CNRS researcher and her British and South African colleagues. The results are published in Comptes Rendus Palevol.

A comparative analysis of this reconstruction with several extant great apes and three other Australopithecus specimens reveals that the face of “Little Foot” is closer in terms of size and morphology to Australopithecus specimens from eastern Africa than to those from southern Africa. This finding raises questions about the relationships between these different populations and about the chronology of the evolutionary processes that reshaped the faces of these hominins, particularly the orbital region, which appears to have been subject to strong selective pressures.

The skull was first transported to the Diamond Light Source synchrotron (United Kingdom), where it was carefully digitized. The research team then virtually isolated the bone fragments using semi-automated methods and supercomputers. Their realignment resulted in a 3D reconstruction with a resolution of 21 microns. More than five years were required to complete this reconstruction.

Using the Perlmutter supercomputer, researchers achieved a record-scale simulation of a quantum microchip to refine and validate next-generation quantum hardware designs. Researchers from Lawrence Berkeley National Laboratory (Berkeley Lab) and the University of California, Berkeley have complete

Advances in supercomputing have made solving a long‐standing astronomical conundrum possible: How can we explain the changes in the chemical composition at the surface of red giant stars as they evolve?

For decades, researchers have been unsure exactly how the changing chemical composition at the center of a red giant star, caused by nuclear burning, connects to changes in composition at the surface. A stable layer acts as a barrier between the star’s interior and the outer convective envelope, and how elements cross that layer remained a mystery.

In a Nature Astronomy paper, researchers at the University of Victoria’s (UVic) Astronomy Research Center (ARC) and the University of Minnesota solved the problem.

#Quantum #CyberSecurity

Quantum computing is not merely a frontier of innovation; it is a countdown. Q-Day is the pivotal moment when scalable quantum computers undermine the cryptographic underpinnings of our digital realm. It is approaching more rapidly than many comprehend.

For corporations and governmental entities reliant on outdated encryption methods, Q-Day will not herald a smooth transition; it may signify a digital catastrophe.

Comprehending Q-Day: The Quantum Reckoning

Q-Day arrives when quantum machines using Shor’s algorithm can dismantle public-key encryption within minutes—a task that classical supercomputers would require billions of years to accomplish.
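To make the threat concrete, here is a minimal sketch of the classical half of Shor's algorithm: once the order r of a number a modulo n is known, a factor of n usually follows from a greatest-common-divisor computation. The function names are hypothetical, and `find_order` uses brute force as a stand-in for the quantum period-finding step, so this toy version only works for tiny numbers and says nothing about real key sizes.

```python
from math import gcd


def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This is the step a quantum computer
    would perform efficiently; here it is only a classical stand-in.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_via_order(n, a):
    """Try to split n using the order of a modulo n.

    Returns a pair of factors, or None if this choice of a fails
    (in which case one would retry with a different a).
    """
    shared = gcd(a, n)
    if shared != 1:
        # Lucky guess: a already shares a factor with n.
        return shared, n // shared
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # an odd order gives no usable square root
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1, no factor revealed
    p = gcd(half - 1, n)
    if p in (1, n):
        return None
    return p, n // p


print(factor_via_order(15, 7))
```

For n = 15 and a = 7 the order is 4, so the sketch computes gcd(7² mod 15 − 1, 15) = gcd(3, 15) and recovers the factors 3 and 5.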

Neuromorphic computers modeled after the human brain can now solve the complex equations behind physics simulations — something once thought possible only with energy-hungry supercomputers. The breakthrough could lead to powerful, low-energy supercomputers while revealing new secrets about how our brains process information.

Elon Musk Announces MAJOR Company Changes as XAI/SpaceX

Elon Musk is announcing significant changes and advancements across his companies, primarily focused on developing and integrating artificial intelligence (AI) to drive innovation, productivity, and growth.

Questions to inspire discussion.

Product Development & Market Position.

🚀 Q: How fast did xAI achieve market leadership compared to competitors?

A: xAI reached number one in voice, image, video generation, and forecasting with the Grok 4.20 model in just 2.5 years, outpacing competitors who are 5–20 years old with larger teams and more resources.

📱 Q: What scale did xAI’s everything app reach in one year?

A: In one year, xAI went from nothing to 2M Teslas using Grok, deployed a Grok voice agent API, and built an everything app handling legal questions, slide decks, and puzzles.

The unveiling by IBM of two new quantum supercomputers and Denmark’s plans to develop “the world’s most powerful commercial quantum computer” mark just two of the latest developments in quantum technology’s increasingly rapid transition from experimental breakthroughs to practical applications.

There is growing promise of quantum technology’s ability to solve problems that today’s systems struggle to overcome, or cannot even begin to tackle, with implications for industry, national security and everyday life.

So, what exactly is quantum technology? At its core, it harnesses the counterintuitive laws of quantum mechanics, the branch of physics describing how matter and energy behave at the smallest scales. In this strange realm, particles can exist in several states simultaneously (superposition) and can remain connected across vast distances (entanglement).
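Superposition and entanglement can be illustrated with a tiny two-qubit state-vector calculation. The sketch below is a classical toy simulation with hypothetical function names, not a description of how any real quantum device is programmed: it puts the first qubit into superposition with a Hadamard gate, then entangles it with the second using a CNOT gate.

```python
import math


def hadamard_on_first(state):
    """Apply a Hadamard gate to the first qubit.

    The state is a list of amplitudes [a00, a01, a10, a11].
    """
    a00, a01, a10, a11 = state
    s = 1 / math.sqrt(2)
    return [s * (a00 + a10), s * (a01 + a11),
            s * (a00 - a10), s * (a01 - a11)]


def cnot_first_controls_second(state):
    """Flip the second qubit whenever the first qubit is 1."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def bell_state():
    """Start in |00>, create superposition, then entangle."""
    state = [1.0, 0.0, 0.0, 0.0]
    state = hadamard_on_first(state)
    return cnot_first_controls_second(state)


def outcome_probabilities(state):
    """Probability of each measurement outcome (squared amplitude)."""
    labels = ["00", "01", "10", "11"]
    return {label: abs(amp) ** 2 for label, amp in zip(labels, state)}


for outcome, prob in outcome_probabilities(bell_state()).items():
    print(outcome, round(prob, 3))
```

The resulting state gives outcomes 00 and 11 with probability one half each and never 01 or 10: each qubit on its own looks random, yet the two results always agree.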

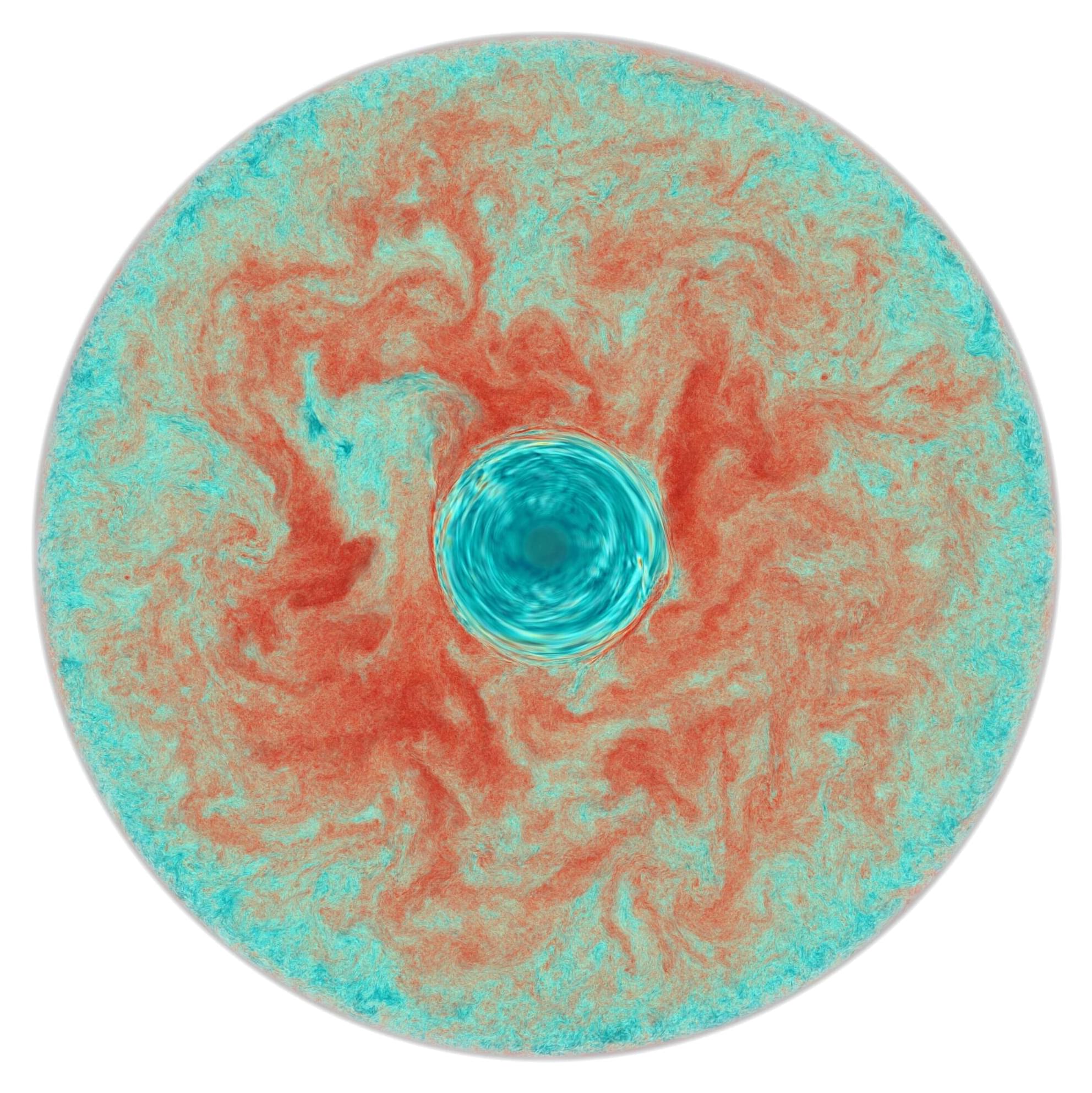

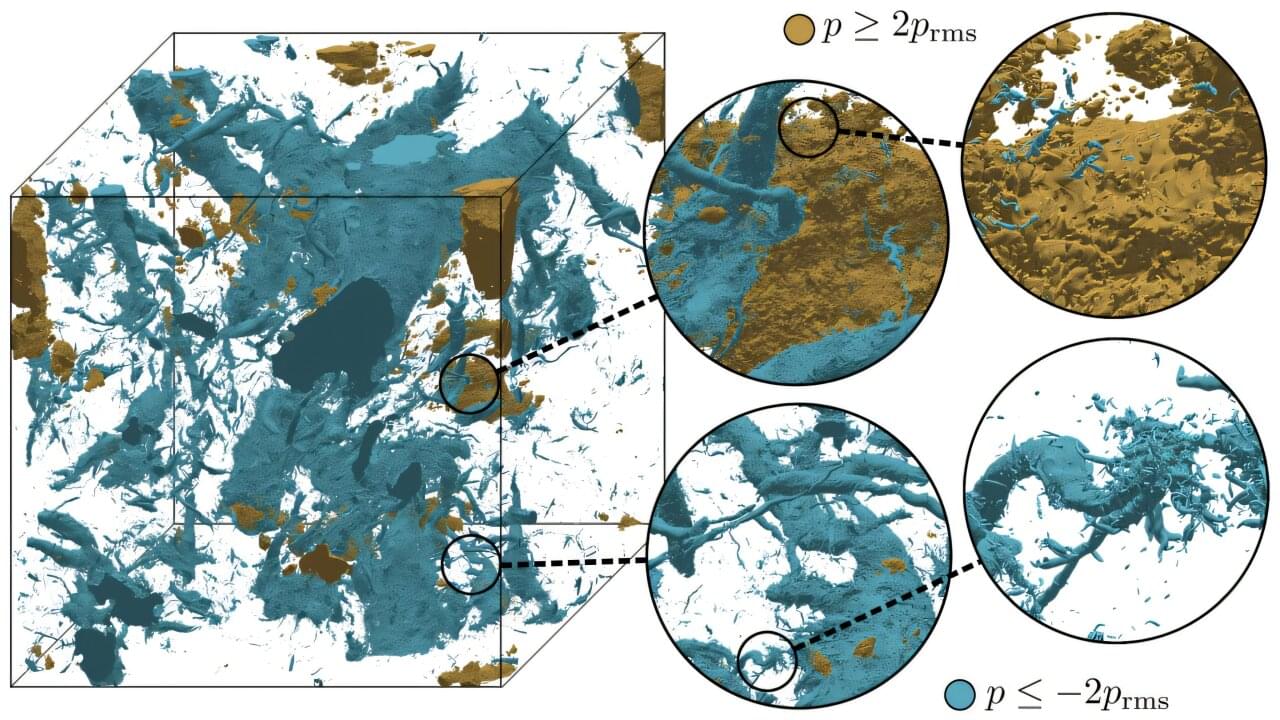

Using the Frontier supercomputer at the Department of Energy’s Oak Ridge National Laboratory, researchers from the Georgia Institute of Technology have performed the largest direct numerical simulation (DNS) of turbulence in three dimensions, attaining a record resolution of 35 trillion grid points. Tackling such a complex problem required the exascale (1 billion billion or more calculations per second) capabilities of Frontier, the world’s most powerful supercomputer for open science.
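For a sense of scale, the short calculation below estimates how many grid points per side a cubic domain would need to reach 35 trillion points in total. The cubic, power-of-two grid is an assumption made for illustration; the text above states only the total count.

```python
import math


def nearest_power_of_two_side(total_points):
    """Side length of a cubic grid, rounded to the nearest power of two.

    Assumes (hypothetically) a cube with equal resolution in all
    three directions.
    """
    side = total_points ** (1 / 3)
    exponent = round(math.log2(side))
    return 2 ** exponent


side = nearest_power_of_two_side(35e12)
print(side, side ** 3)
```

Under that assumption the side length comes out to 32,768 points, and 32,768 cubed is 35,184,372,088,832, which is consistent with the quoted figure of about 35 trillion.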

The team’s results offer new insights into the underlying properties of the turbulent fluid flows that govern the behaviors of a variety of natural and engineered phenomena—from ocean and air currents to combustion chambers and airfoils. Improving our understanding of turbulent fluctuations can lead to practical advancements in many areas, including more accurately predicting the weather and designing more efficient vehicles.

The work is published in the Journal of Fluid Mechanics.

Even supercomputers can stall out on problems where nature refuses to play by everyday rules. Predicting how complex molecules behave or testing the strength of modern encryption can demand calculations that grow too quickly for classical hardware to keep up. Quantum computers are designed to tackle that kind of complexity, but only if engineers can build systems that run with extremely low error rates.

One of the most promising routes to that reliability involves a rare class of materials called topological superconductors. In plain terms, these are superconductors that also have built-in “protected” quantum behavior, which researchers hope could help shield delicate quantum information from noise. The catch is that making materials with these properties is famously difficult.