Researchers found that centromeric proteins localize to telomeres in alternative lengthening of telomeres (ALT) tumors, offering a new biomarker target.

Thousands of small earthquakes, detected for the first time by a machine-learning process, reveal the distinct, razor-sharp edge of the Yakutat microplate as it subducts beneath the North American plate.

The Yakutat oceanic plateau is caught in the middle of a tectonic traffic jam with the Pacific plate as it subducts beneath the North American plate. The position and structure of the plates in this congested zone play a significant role in the earthquake and volcanic landscape of south-central Alaska.

The research, published by Meghan Miller of Australian National University and her colleagues in The Seismic Record, now shows the edge and extent of the Yakutat plate in astonishing detail.

As a philosopher and philosophical counselor, I research the connection between virtue and happiness. In particular, I’ve noticed a connection between sophrosyne and eudaimonia, the Greek philosophical concept of happiness, or living well.

Harmony of the soul

For the Greeks, sophrosyne represented excellence of character, moderation and self-control. It was connected to phronesis, or practical wisdom, and stood in marked contrast with hubris: excessive pride, dangerous overconfidence and lack of self-insight. Heraclitus, a philosopher who lived around 500 B.C.E., taught that sophrosyne was the most important virtue of all.

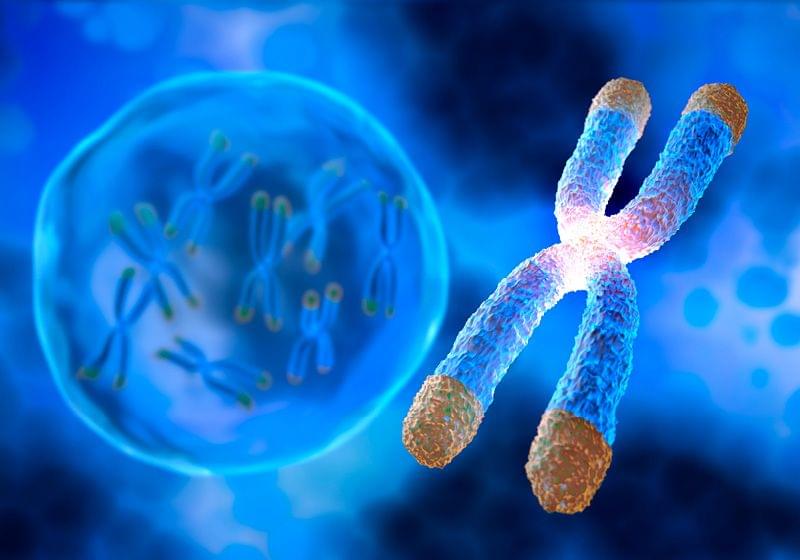

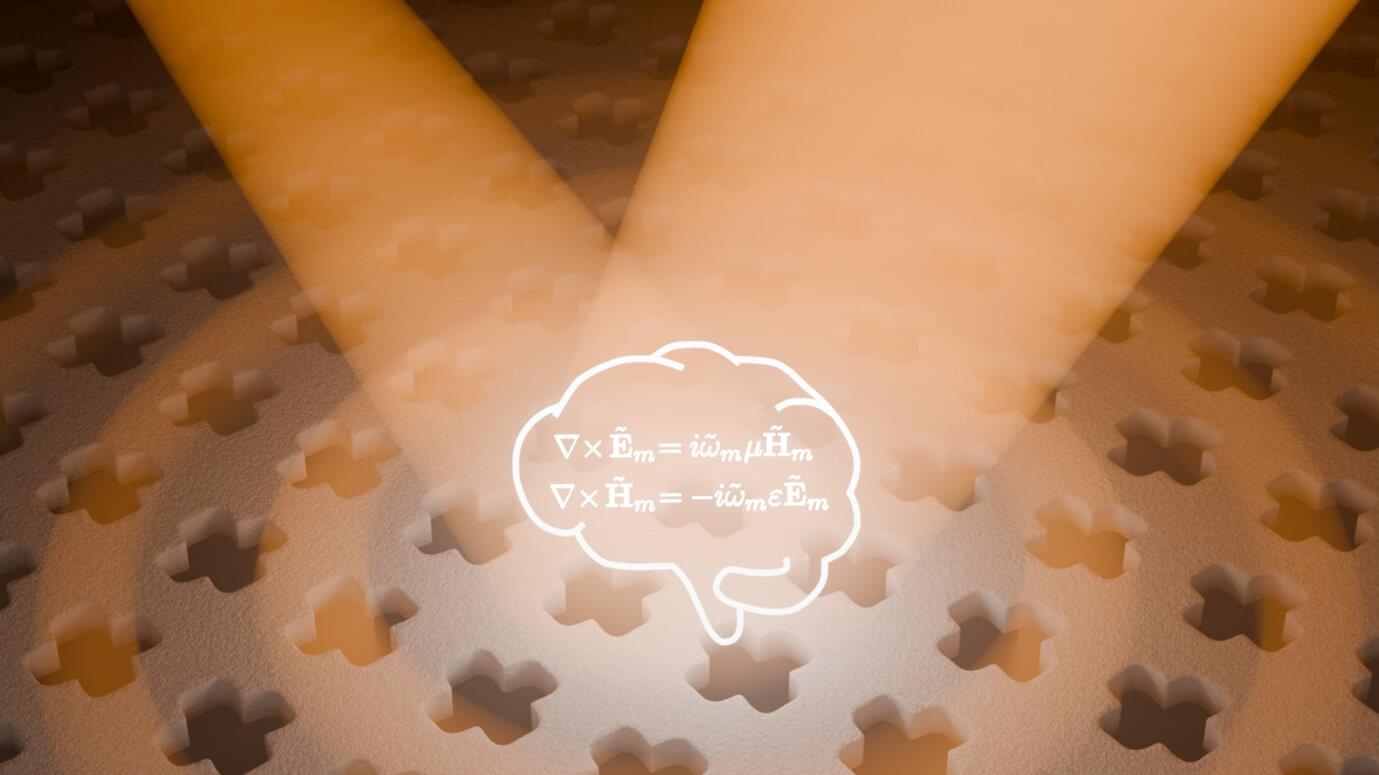

Studying physics can be very useful—even when it comes to machine learning. A digital “super-brain” with built-in knowledge of the fundamental laws of nature can speed up the development of optical components for everything from quantum computers to eyeglasses or camera lenses, according to a new study from Chalmers University of Technology in Sweden.

“When we fed the super-brain information about the laws of physics, it immediately got much smarter. Our calculations now take one tenth of the time previously required,” says Philippe Tassin, professor at the Department of Physics and Astronomy, Chalmers University of Technology.

The research team led by Tassin designs optical components in a field called nanophotonics. On a small scale—less than one wavelength—light can be controlled and manipulated in a completely different way than on larger scales. But there are also limitations on how light can be controlled in advanced ways in natural optical materials.

New research shows that specific types of brain cells become active after brain injuries and exhibit properties similar to those of neural stem cells. Astrocyte plasticity might correlate with upregulation of the Galectin 3 protein, a finding that may contribute significantly to the discovery of additional biomarkers. The study found that a specific protein regulates these cells; it could be a target for therapy and contribute to the development of better treatment options for brain injuries.

The loss of neurons, which subsequently impairs brain function, is caused by the onset and progression of neurological disorders such as strokes, spinal cord injuries and neurodegenerative diseases, including Parkinson’s, Alzheimer’s, dementia, ALS and MND. Treatment options still need to be improved. However, preclinical research has shown a promising response involving reactive astrocytes, a specific type of glial cell; glial cells are a crucial part of the nervous system alongside neurons. Microglia and other glial cells are regarded as a safeguard for neurons: they can resume cell proliferation, a mechanism essential for protecting the injury-affected brain from invasion by immune cells.[1]

Differentiation of Mesenchymal Stem Cells to Neuroglia.

Given the importance of astrocyte proliferation, these findings are relevant for understanding how changes in cerebrospinal fluid composition (upregulation of Galectin 3 protein) support the maintenance of astrocyte plasticity in the brain. Identifying Galectin 3 protein as an inducer of astrocyte plasticity has helped discover other biomarkers that offer beneficial modulation inside the injured brain parenchyma. These regulators of astrocyte proliferation after acute injury hold great promise for future clinical use, either as indicators of a beneficial response to glial stem cell therapy or as a way to identify other cells with stemness potential in an injured patient’s brain [5].


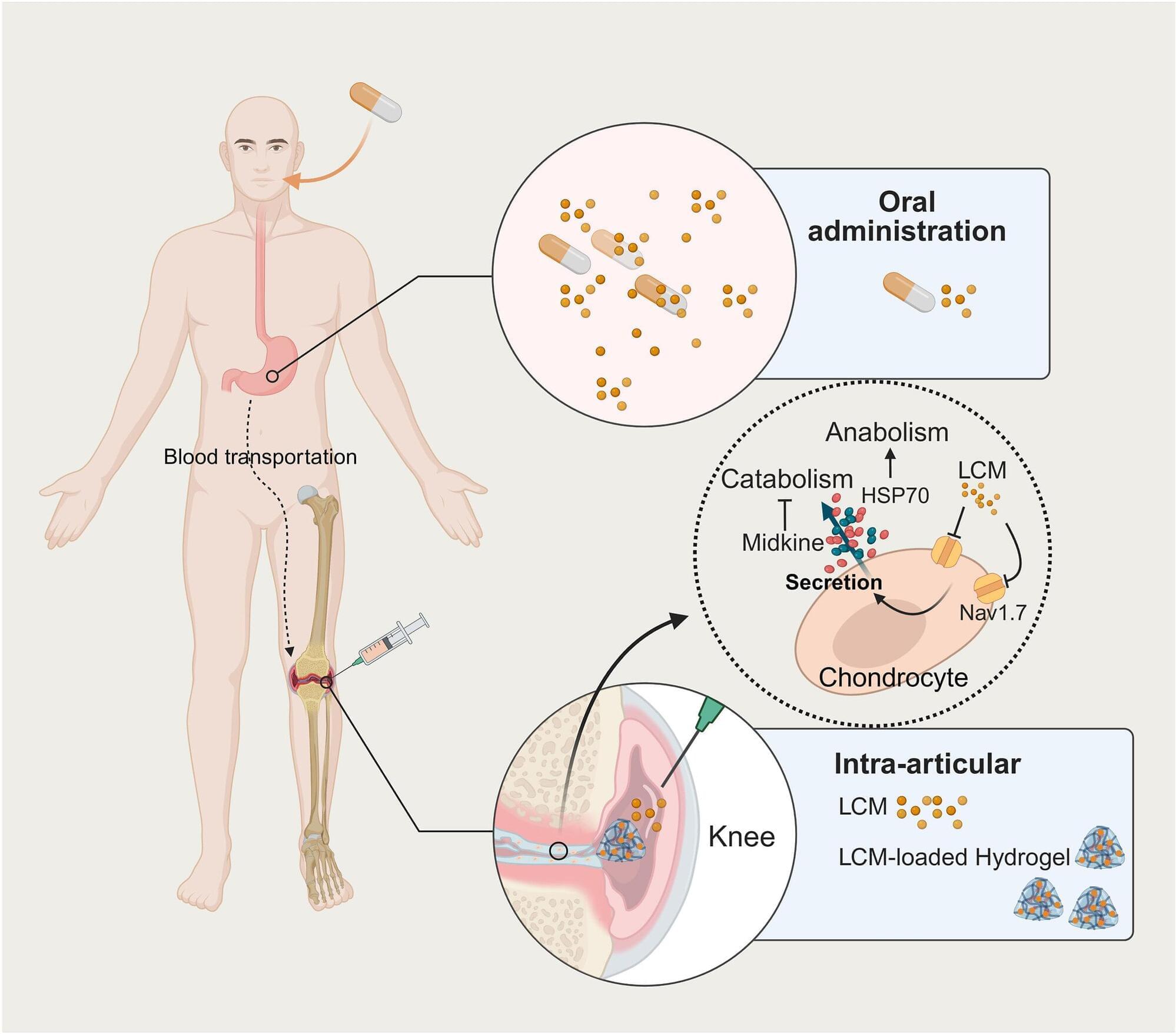

For millions of people living with osteoarthritis, daily life can involve a frustrating cycle of pain and stiffness. While current treatments like over-the-counter medications or steroid injections can temporarily dull the ache, they do not stop the joint from deteriorating. A Yale study published in the journal Bioactive Materials found that the medication lacosamide acts as a highly effective, dual-purpose treatment that relieves joint pain and reverses cartilage damage in osteoarthritis, especially when a specialized hydrogel delivers the drug directly into the joint.

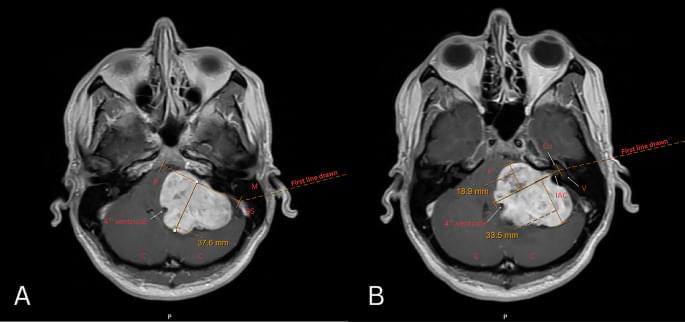

Large vestibular schwannomas (VS) often compress the brainstem and differ in their relation to the internal auditory canal (IAC); the significance of these radiographic features on postoperative outcomes remains unclear. This study quantifies the impact of brainstem compression (BSC) and position relative to the IAC on surgical outcomes in VS.

We retrospectively identified 116 patients with sporadic unilateral VS ≥ 3 centimeters (2017–2022). Neurofibromatosis 2 cases were excluded. BSC was quantified with MRI T1 post-contrast axial images as the perpendicular distance from the brainstem-cerebellum to the point of maximal compression. Anterior and posterior IAC extension were measured relative to a line bisecting the IAC from the porus to fundus. Outcomes included postoperative facial nerve (FN) function, extent of resection (EOR), and length of stay (LOS).
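The BSC measurement described above is, geometrically, a point-to-line distance. The following is a minimal sketch of that calculation, not the authors' measurement protocol: it assumes the brainstem-cerebellum reference is drawn as a straight line between two landmarks on the axial image, and the function name, coordinates, and millimetre units are all hypothetical.

```python
import math


def perpendicular_distance(point, line_start, line_end):
    """Return the distance from `point` to the infinite line through two landmarks.

    All arguments are (x, y) tuples in the same units (assumed here: mm).
    """
    (px, py), (ax, ay), (bx, by) = point, line_start, line_end
    dx, dy = bx - ax, by - ay
    length = math.hypot(dx, dy)
    if length == 0:
        raise ValueError("line_start and line_end must be distinct points")
    # |2D cross product| is twice the triangle area; dividing by the base
    # length gives the triangle height, i.e. the perpendicular distance.
    return abs(dx * (py - ay) - dy * (px - ax)) / length


# Hypothetical landmark coordinates (mm), for illustration only.
baseline_start = (0.0, 0.0)
baseline_end = (10.0, 0.0)
max_compression_point = (4.0, 3.0)

bsc_mm = perpendicular_distance(max_compression_point, baseline_start, baseline_end)
print(f"BSC = {bsc_mm:.1f} mm")
```

With these illustrative landmarks the point lies 3 mm from the reference line. On real images the coordinates would need to be converted from pixels to millimetres using the scan's pixel spacing.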

Greater anterior extension was associated with decreased EOR in univariate analysis (OR = 1.12, p = 0.03), but not after controlling for tumor size and age (OR = 1.09, p = 0.158). Greater BSC was associated with worse FN function at 2–3 weeks postoperatively in univariate analysis (OR = 1.08, p = 0.036) and approached significance in multivariate analysis (OR = 1.07, p = 0.08). Posterior extension was associated with increased LOS in univariate (β = 217.57 min, p = 0.024), but not multivariate, analysis. Neither anterior extension nor BSC was associated with LOS. Older age correlated with a lower rate of gross total resection (GTR) and longer LOS in multivariate analysis (EOR: OR = 1.05, p = 0.003; LOS: β = 79.84 min, p = 0.026).

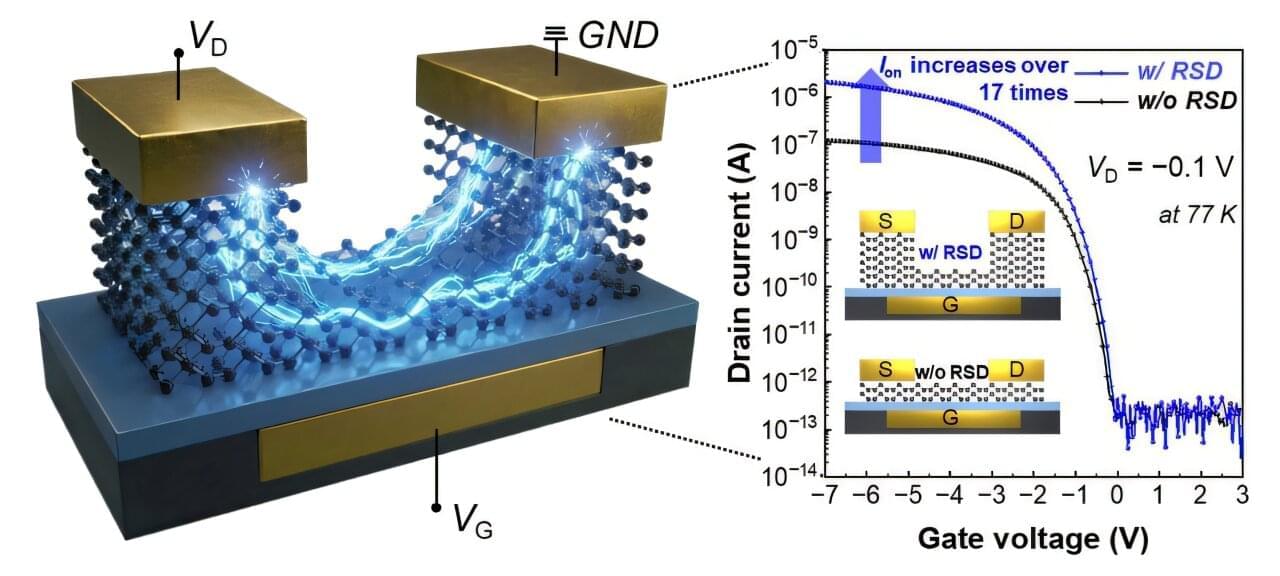

As semiconductor chips become increasingly thin, the components inside them are locked in a fierce race to achieve the ultimate ultra-thin state. However, this has presented a structural limitation: the thinner the device, the harder it is for electricity to flow.

Recently, a research team at POSTECH (Pohang University of Science and Technology) successfully resolved this issue through a simple yet innovative approach: “thickening only the necessary parts.”

The research team, led by Professor Byoung Hun Lee from POSTECH’s Department of Electrical Engineering and the Department of Semiconductor Engineering, has developed a technology that dramatically lowers contact resistance by redesigning the metal-semiconductor contact structure in ultra-thin tellurium (Te) transistors.