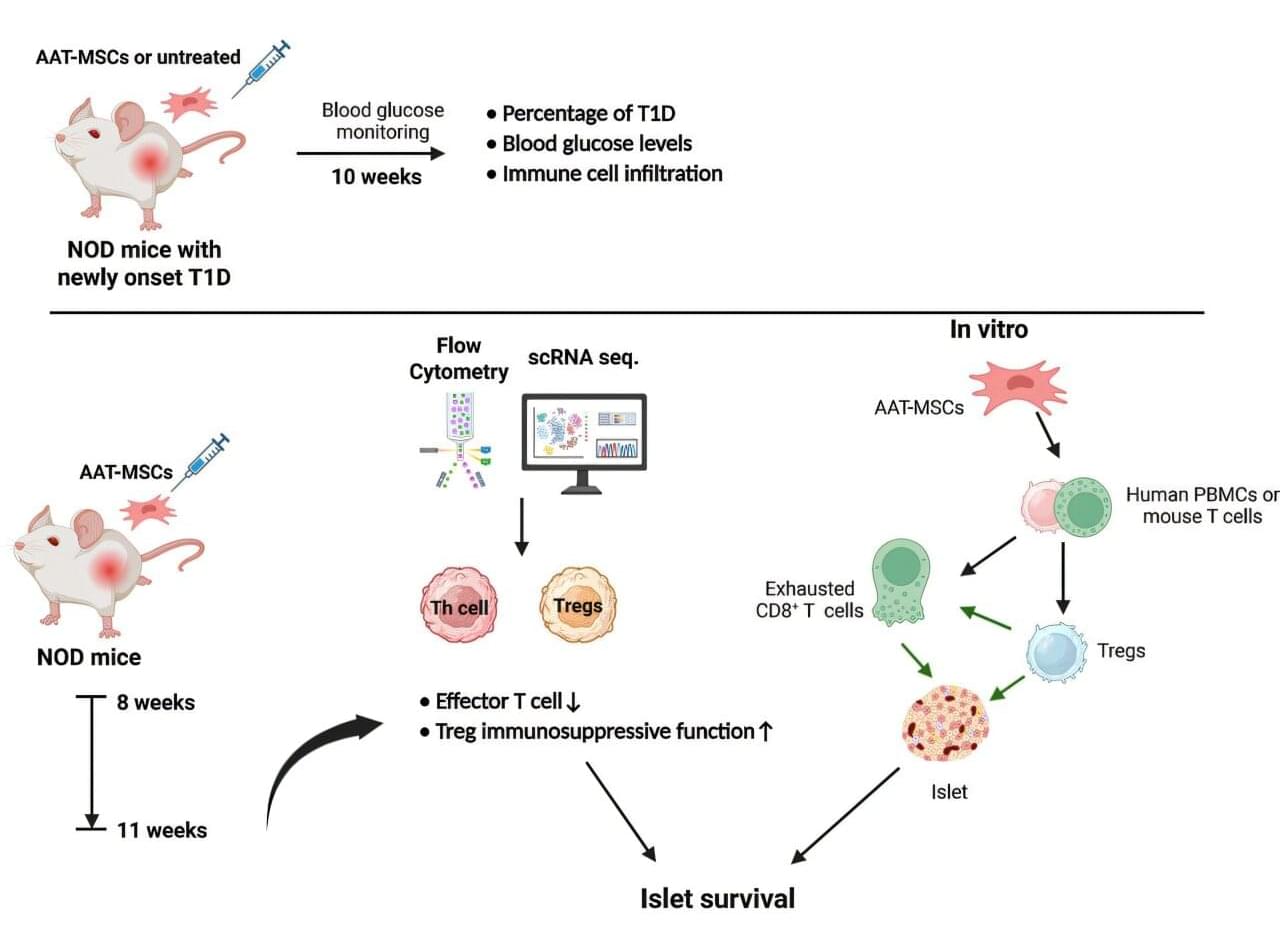

Researchers at the Medical University of South Carolina (MUSC) have developed a stem cell therapy that reversed new-onset type 1 diabetes (T1D) in a mouse model of the disease. The work is published in the journal Molecular Therapy.

Hongjun Wang, Ph.D., associate director of the South Carolina Clinical & Translational Research (SCTR) Institute Pilot Program and co-scientific director for the Center for Cellular Therapy, led the team. Co-first authors Hua Wei, Ph.D.; Judong Kim, Ph.D.; and Wenyu Gou, Ph.D., together with other collaborators, carried out most of the work behind these findings.

The study marks a potential shift away from the current standard of managing blood sugar with multiple daily insulin injections and toward a lasting way to reprogram the immune system itself. If the results hold up in people, this could be a game-changer for the millions currently living with T1D.