White matter pathways allow distant parts of the brain to communicate, supporting memory, emotion, and language. One such pathway, the uncinate fasciculus, connects the front of the temporal lobe with regions of the frontal cortex involved in decision-making and social behavior. Despite its importance, little is known about the microscopic structure of this tract in the human brain.

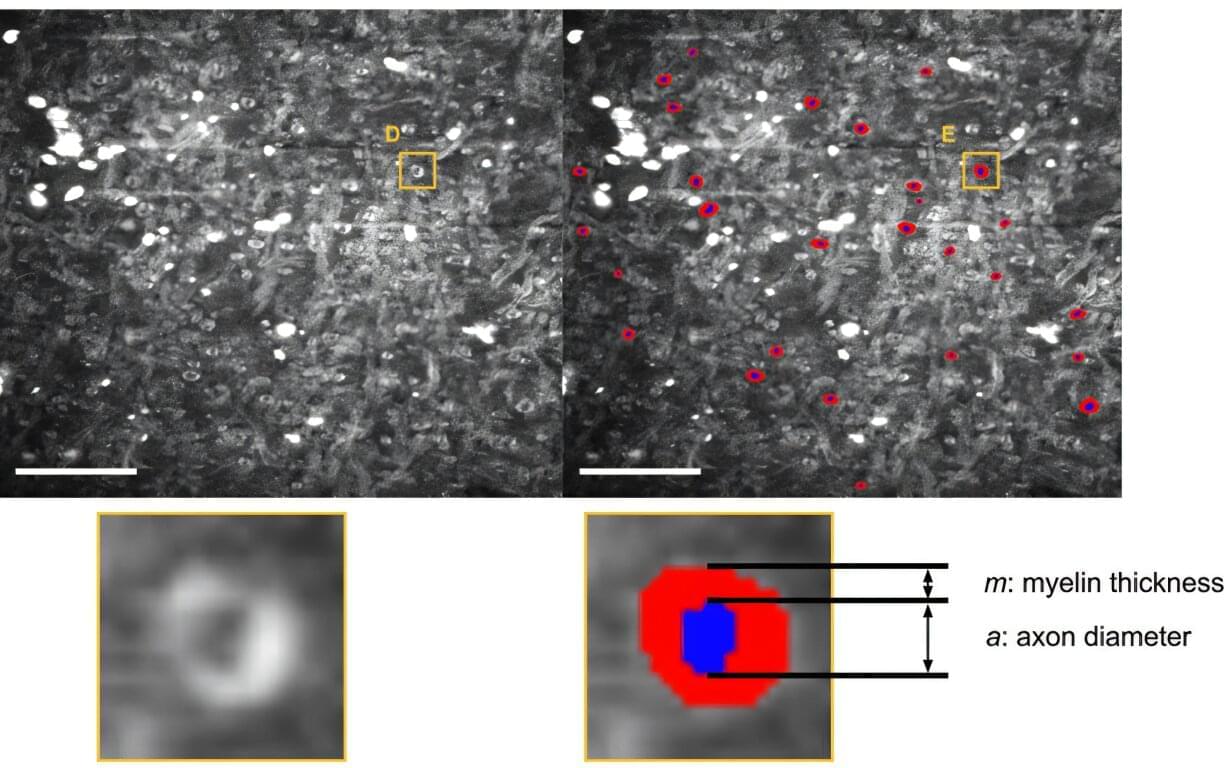

Traditional techniques such as electron microscopy can reveal fine details, but they often fail when applied to postmortem human tissue, which is frequently degraded.

In a study published in Biophotonics Discovery, researchers report a new way to examine white matter structure in postmortem human brains.