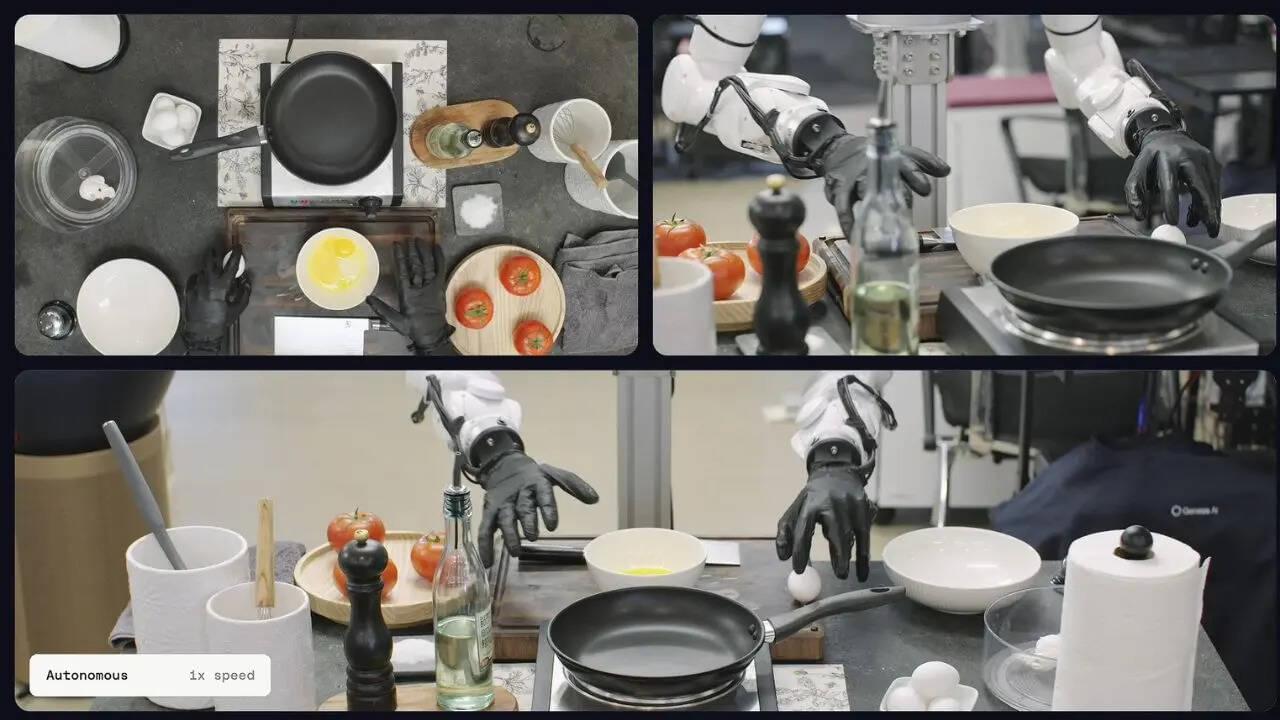

Most robots today are excellent at repetitive factory work. They can move boxes, weld metal parts or follow pre-programmed routines without getting tired. But ask them to crack an egg without crushing it or cut vegetables evenly, and things usually fall apart quickly. That is the challenge Genesis AI says it is trying to solve. The robotics company has introduced a new robotic foundation model called ‘GENE-26.5’, which it says can help robots perform highly delicate, human-like tasks with impressive precision.

According to Genesis AI, its robotic system can handle surprisingly complex activities including cooking meals, solving Rubik’s cubes, wiring cables, lab pipetting and even playing the piano in real time. The company showcased these capabilities using a robotic hand called ‘Genesis Hand 1.0’, which has been designed to mimic the movement and flexibility of a human hand. It reportedly features 20 degrees of freedom along with soft-contact surfaces to improve grip and precision…

…These gloves reportedly capture how human hands move, grip objects and apply pressure during everyday tasks. That data is then used to train the robot so it can imitate those same actions more naturally. According to a Business Insider interview, Genesis AI CEO Zhou Xian claimed his team managed to teach the robot a new piano song in roughly one hour. He also explained that a ‘30-second complex skill,’ like certain cooking tasks shown in demos, can be learned using a few hours of human data combined with less than 30 minutes of robot training data. ‘I think these are probably the most complex tasks ever being performed by a robot in a very human-like way at the efficiency, speed, and performance similar to a human,’ Xian said. He added that the robot currently performs at roughly 60 to 70 percent of human speed.

Genesis AI has introduced a new robotic foundation model, “GENE-26.5,” designed to enable robots to perform intricate, human-like tasks with precision. Technology & Science, Times Now.