Google DeepMind, Anthropic and Meta study whether AI could become conscious — and what follows for humans if it does

Humanity has long regarded intelligence as an ability unique to human beings. The capacities to think, remember, reason, and solve problems were considered central to the human mind itself. To understand language, anticipate the future, and engage in creative thought was believed to belong exclusively to humanity.

Yet today, humanity stands before an entirely new kind of presence.

Hackers are ruthless. They can take control of your computer, delete files and disappear without a trace. However, FIU cybersecurity researcher Weidong Zhu has discovered a way to transform a computer’s storage chip into an additional tool for cyber defense. Working with collaborators at the University of Florida, Zhu created a system that makes data on these chips last longer, extending the lifespan of your files in the critical window after your computer is compromised. The work is published in the Proceedings of the 2025 ACM SIGSAC Conference on Computer and Communications Security.

“Our system extends recoverable data history up to 126 days,” said Zhu, an assistant professor at FIU’s Knight Foundation School of Computing & Information Sciences whose work is part of the Center for Integrated Security, Privacy, and Trustworthy AI (CIERTA). “Even if your computer is infected, your data can survive on your drive.”

Storage chips, known as solid-state drives (SSDs), have intrigued cybersecurity researchers for years. Because they are hardware, not software, they offer unique safety benefits during an attack.

Dutch authorities have announced the takedown of a botnet that enslaved millions of infected devices, including computers, tablets, smartphones, and IoT devices, to carry out malicious attacks.

The bot network, per the Dutch Politie and the National Cyber Security Center (NCSC), consisted of at least 17 million infected devices. More than 200 servers located in the Netherlands acted as the platform’s backend infrastructure.

According to a statement issued by the NCSC, police officials seized a subset of these servers from the hosting provider that supplied the infrastructure. The provider is said to have subsequently taken the botnet offline following its use for criminal purposes.

Researchers interviewed almost 30 religious leaders from all six major faiths in the UK to find out how AI was affecting them.

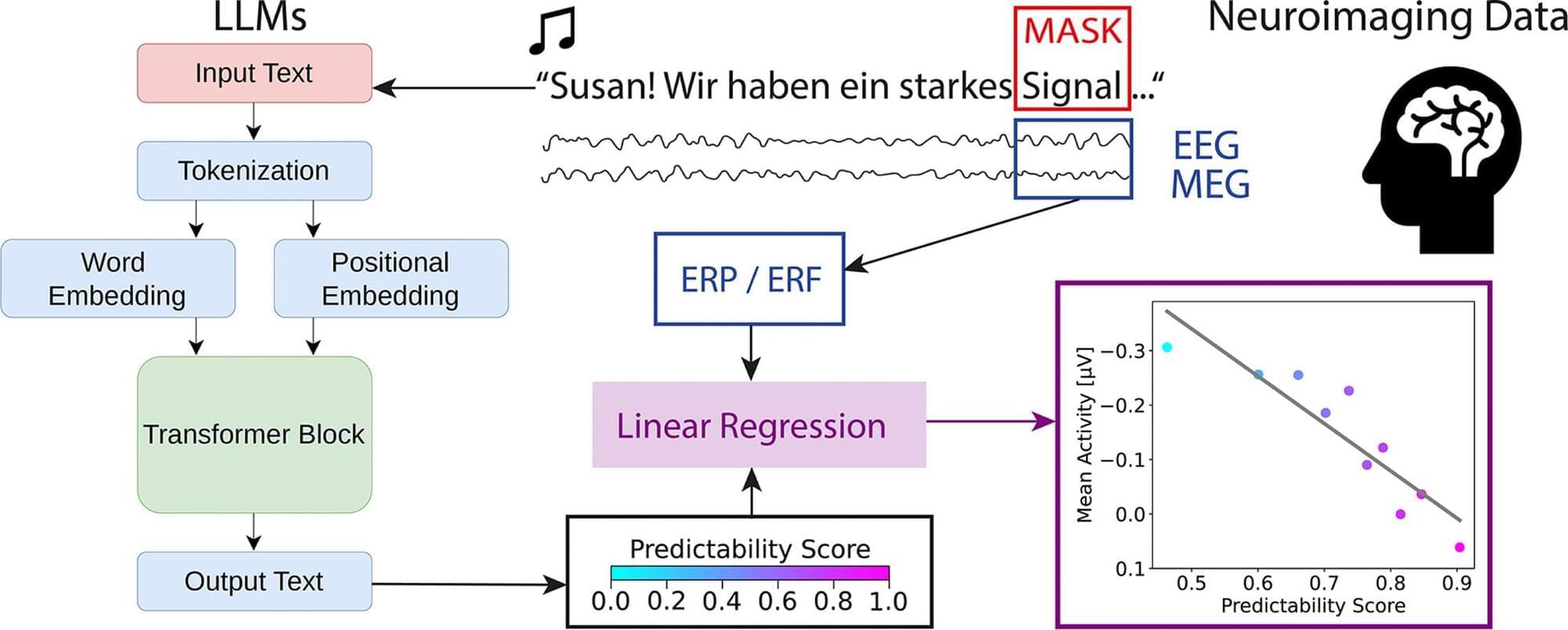

Even while listening, the brain attempts to anticipate the next words. This is the conclusion of a recent study conducted by an interdisciplinary team of researchers led by PD Dr. Patrick Krauss, Friedrich-Alexander-Universität Erlangen-Nürnberg (FAU), and PD Dr. Achim Schilling, Heidelberg University. The researchers combined three methods: a natural listening situation, high-resolution measurements of brain activity, and an AI language model as a reference.

The higher the probability of a certain word occurring in the relevant context, the weaker the neural reaction during processing. At the same time, the data indicate a rise in pre-onset activity before the word begins, suggesting the brain works with predictions. The work is published in the journal NeuroImage.
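The link between a word's probability and how unexpected it is can be illustrated with surprisal, a standard measure defined as the negative log of the word's probability in context. The sketch below is only an illustration: it uses a toy bigram counter in place of an AI language model, and the function names and the tiny corpus are hypothetical. The excerpt does not say which measure or model the researchers used.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    for prev, word in zip(tokens, tokens[1:]):
        counts[prev][word] += 1
    return counts

def surprisal(counts, prev, word):
    """Return -log2 P(word | prev) in bits; higher means less expected."""
    total = sum(counts[prev].values())
    if total == 0 or counts[prev][word] == 0:
        return math.inf  # never seen in this context
    return -math.log2(counts[prev][word] / total)

# Hypothetical toy corpus, standing in for a real language model.
corpus = "the dog chased the cat and the dog barked".split()
model = train_bigram(corpus)

# "dog" follows "the" more often than "cat" does, so it is less surprising.
print(surprisal(model, "the", "dog"))
print(surprisal(model, "the", "cat"))
```

In this toy setting, the more probable continuation gets the lower surprisal value, which mirrors the direction of the reported effect: more predictable words, weaker response.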

Are humans born with innate grammatical scaffolding, or does language develop on the basis of use and experience? The question is still debated among the various linguistic schools of thought. Recently, powerful AI language models (large language models, or LLMs), which process language by predicting subsequent words, have fueled this debate.

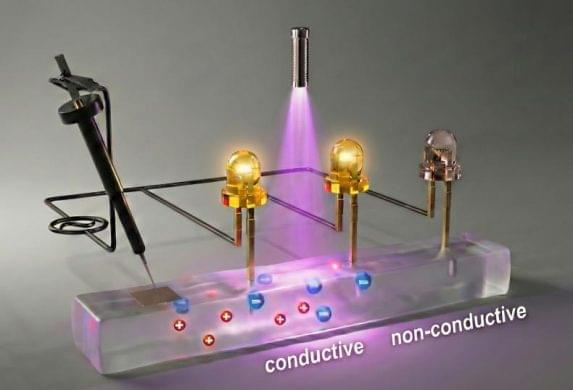

Consider the chief difference between living systems and electronics: the former are generally soft and squishy, while the latter are hard and rigid. Now, in work that could impact human-machine interfaces, biocompatible devices, soft robotics, and more, MIT engineers and colleagues have developed a soft, flexible gel that dramatically changes its conductivity upon the application of light.

Enter the growing field of ionotronics, which involves transferring data through ions, or charged atoms and molecules. Electronics does the same with electrons. But while electronics is well established, ionotronics is still being developed, with one huge exception: living systems. The cells in our bodies communicate using a variety of ions, from potassium to sodium.

Ionotronics, in turn, can provide a bridge between electronics and biological tissues. Potential applications range from soft wearable technology to human-machine interfaces.