Two investigations underscore the role of orbital instabilities in accounting for the diversity of planetary systems.

Statistical physics is shedding light on how network architecture and data structure shape the effectiveness of neural-network learning.

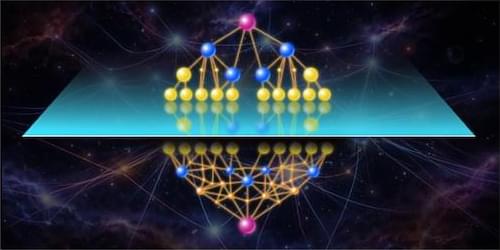

Machine-learning technologies have profoundly reshaped many technical fields, with sweeping applications in medical diagnosis, customer service, drug discovery, and beyond. Central to this transformation are neural networks (NNs), models that learn patterns from data by combining many simple computational units, or neurons, linked by weighted connections. Acting collectively, these neurons can process data to learn complex input–output relationships. Despite their practical success, the fundamental mechanisms by which NNs learn remain poorly understood at a theoretical level. Statistical physics offers a promising framework for exploring central questions in machine-learning theory, potentially clarifying how learning depends on the layout of the network—the NN architecture—and on statistics of the data—the data structure (Fig. 1).

Three recent papers in a special Physical Review E collection (See Collection: Statistical Physics Meets Machine Learning — Machine Learning Meets Statistical Physics) provide significant insights into these questions. Francesca Mignacco of City University of New York and Princeton University and Francesco Mori of the University of Oxford in the UK derived analytical results on the optimal fraction of neurons that should be active at a given time [1]. Abdulkadir Canatar and SueYeon Chung of the Flatiron Institute in New York and New York University investigated the influence of the precision with which a network is “trained” on the amount of data the NN can reliably decode [2]. Francesco Cagnetta at the International School for Advanced Studies in Italy and colleagues showed that NNs whose structure mirrors that of the data learn faster [3].

The world has far more bees than anyone realized. Scientists have, for the first time, estimated just how many species of bees are out there on a global scale, offering a clearer look at how these vital pollinators are distributed around the planet. The landmark study, led by University of Wollongong (UOW) evolutionary biologist Dr. James Dorey, provides the most comprehensive count to date—broken down by continent and country—calculating there are, at a minimum, between 3,700 and 5,200 more bee species buzzing around the world than currently recognized.

The research, outlined in a new paper published Tuesday, February 24, in Nature Communications, lifts global estimates to between 24,705 and 26,164 bee species and reveals a richer and more complex picture of the world’s bees than ever before. The findings highlight how many bee species remain unclassified or overlooked, showing that even our much-loved pollinators are not fully understood, and that closing these knowledge gaps is crucial for conservation and food security.

“Knowing how many species exist in a place, or within a group like bees, really matters. It shapes how we approach conservation, land management, and even big-picture science questions about evolution and ecosystems,” Dr. Dorey said. “Bees are a perfect example. They’re keystone species; their diversity underpins healthy environments and resilient agriculture. If we don’t understand how many bee species there are, we’re missing a key part of the puzzle for protecting both nature and farming.”

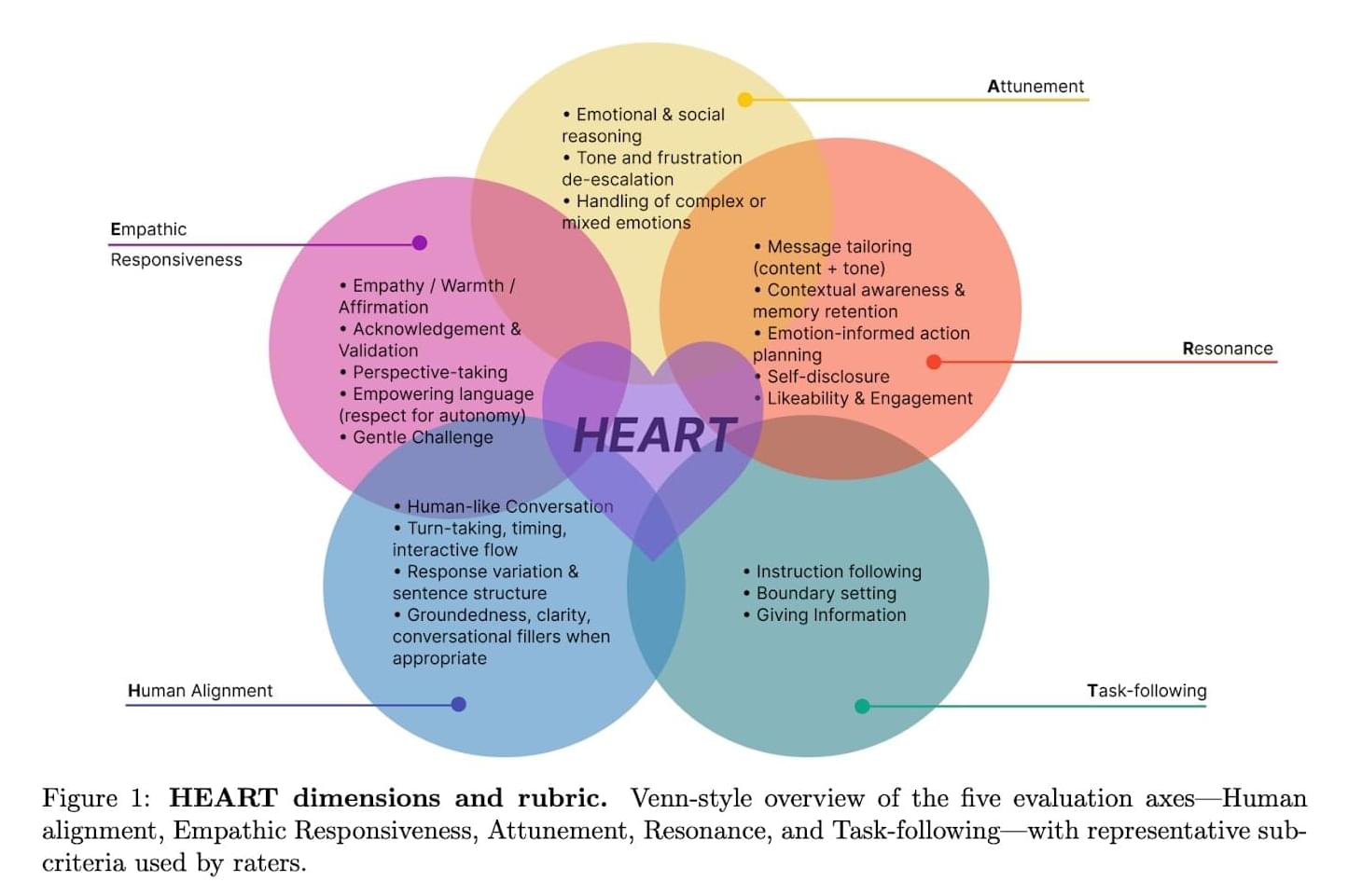

Large language models (LLMs), artificial intelligence (AI) systems that can process human language and generate texts in response to specific user queries, are now used daily by a growing number of people worldwide. While initially these models were primarily used to quickly source information or produce texts for specific uses, some people have now also started approaching the models with personal issues or concerns.

This has given rise to various debates about the value and limitations of LLMs as tools for providing emotional support. For humans, offering emotional support in dialogue typically entails recognizing what another person is feeling and adjusting one’s tone, words and communication style accordingly.

Researchers at Hippocratic AI, Stanford University, University of California San Diego and University of Texas at Austin recently developed a new structured method to evaluate the ability of both LLMs and humans to offer emotional support during dialogues marked by several back-and-forth exchanges. This framework, dubbed HEART, was introduced in a paper published on the arXiv preprint server.

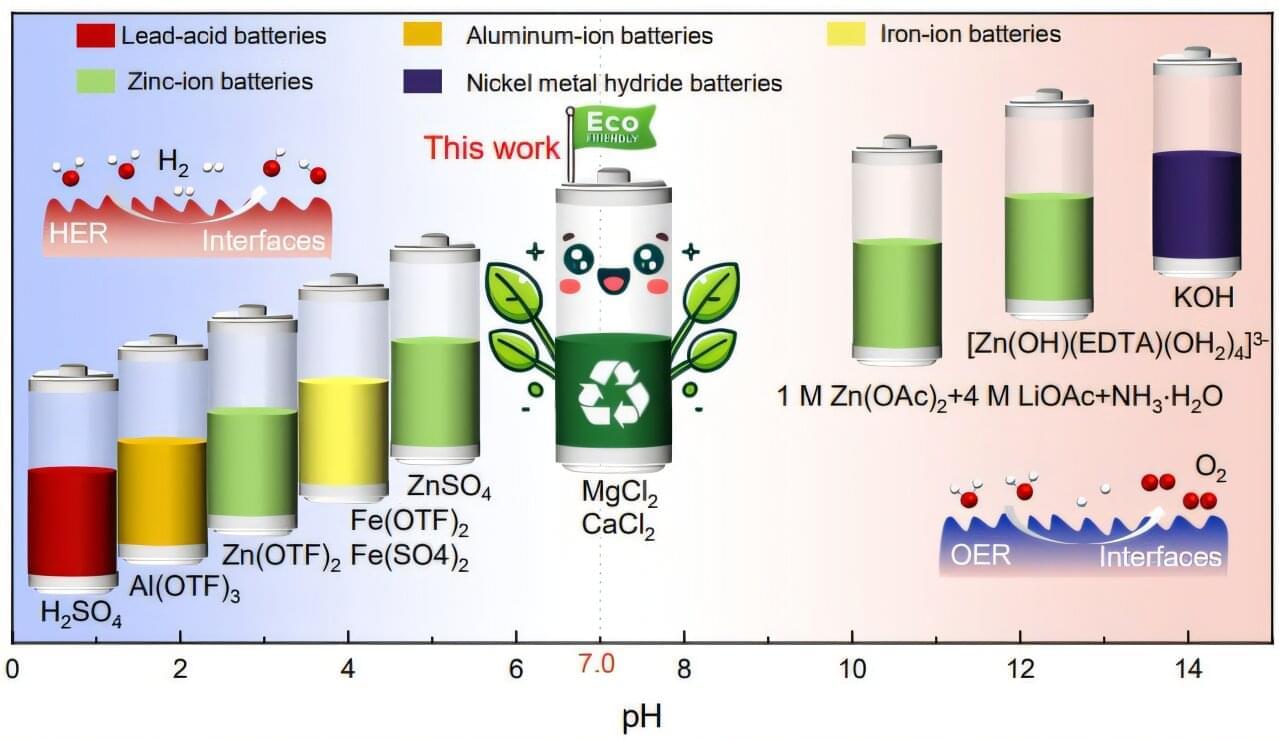

The problem with many types of modern batteries is that they rely on harsh chemicals to work. Not only can these corrosive liquids damage internal parts over time, but they can also leach into soil and water when the batteries are disposed of, contaminating both. But researchers from the City University of Hong Kong and Southern University of Science and Technology have developed an alternative: a new kind of eco-friendly battery that runs on a solution of minerals like those used in tofu brine.

The team describes their work in a paper published in the journal Nature Communications.

The scientists replaced traditional acids and alkalis with neutral salts of magnesium and calcium to create the electrolyte. These are the same minerals used as brine in tofu production. Keeping this liquid at a neutral pH of 7.0 prevents the type of corrosive reactions that can destroy a battery from the inside out.

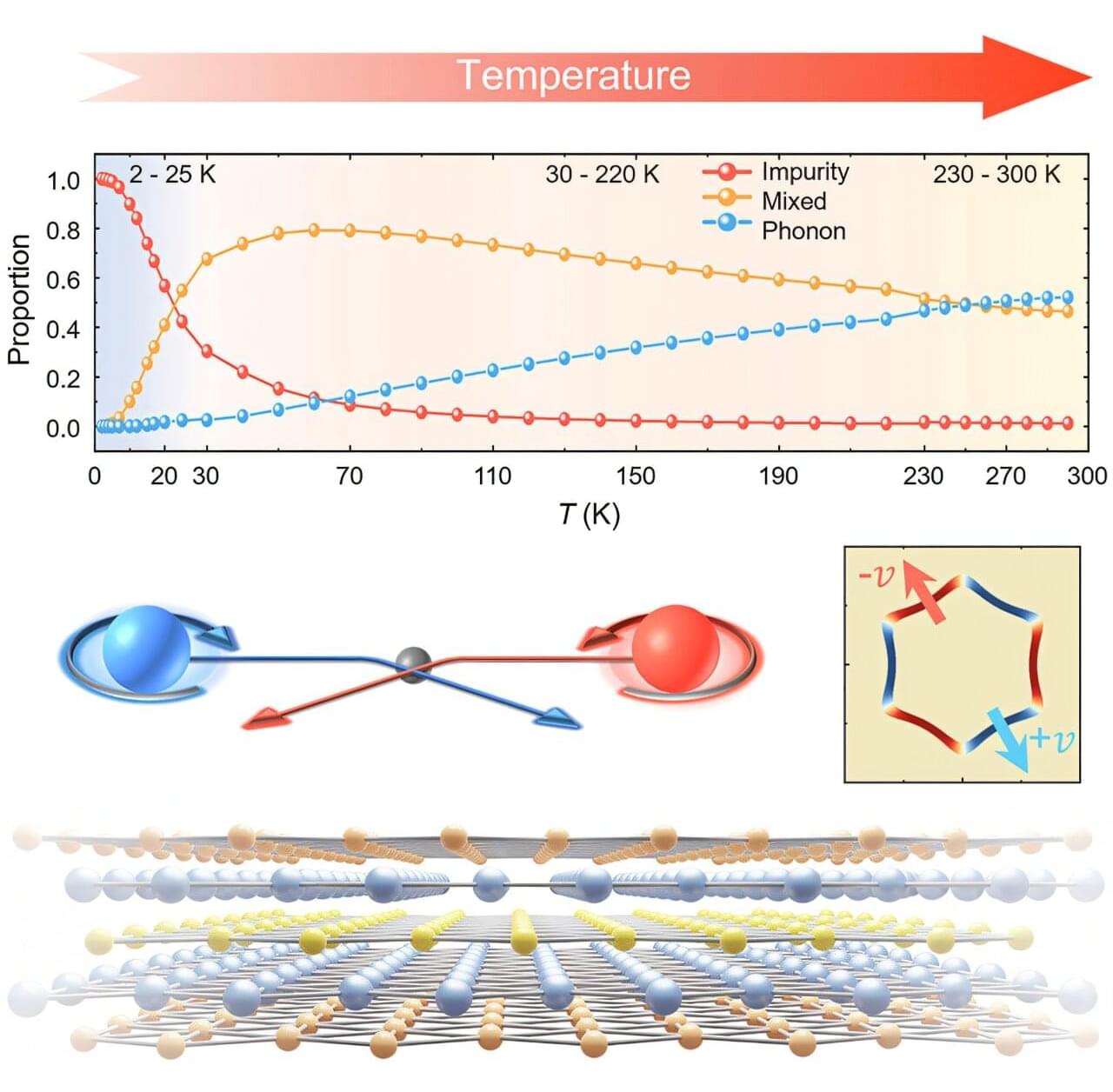

A new study has revealed how tiny imperfections and vibrations inside a promising quantum material could be used to control an unusual quantum effect, opening new possibilities for smaller, faster, and more efficient energy-harvesting devices.

The international team, led by Professor Dongchen Qi from the QUT School of Chemistry and Physics and Professor Xiao Renshaw Wang from Nanyang Technological University in Singapore, studied the mechanism governing the so-called nonlinear Hall effect (NLHE). The research is published in the journal Newton.

Unlike the classical Hall effect, this quantum version allows alternating electrical signals, like those found in wireless or ambient energy sources, to be converted directly into usable direct current without the need for traditional diodes or bulky components.
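The rectification described above can be illustrated with a toy calculation. This is a minimal sketch, not the model used in the study: it assumes only that the nonlinear Hall response scales with the square of the driving field, and the amplitude `E0` and the coefficients `CHI1` and `CHI2` are hypothetical values chosen for illustration. Averaged over one period, a linear response to an alternating drive cancels out, while a quadratic response leaves a constant (direct-current) offset.

```python
import math


def time_average(signal, n_samples=10_000):
    """Average signal(phase) over one full drive period, phase in [0, 2*pi)."""
    total = 0.0
    for k in range(n_samples):
        phase = 2.0 * math.pi * k / n_samples
        total += signal(phase)
    return total / n_samples


# Illustrative values only (arbitrary units); none come from the study.
E0 = 2.0    # amplitude of the alternating drive
CHI1 = 1.0  # hypothetical linear response coefficient
CHI2 = 0.5  # hypothetical second-order response coefficient


def drive(phase):
    """Alternating driving field E(t) = E0 * cos(omega * t)."""
    return E0 * math.cos(phase)


def linear_response(phase):
    """Ordinary linear response: follows the drive and averages to zero."""
    return CHI1 * drive(phase)


def quadratic_response(phase):
    """Second-order response: proportional to the square of the drive."""
    return CHI2 * drive(phase) ** 2


dc_linear = time_average(linear_response)
dc_quadratic = time_average(quadratic_response)

print(f"DC part of linear response:    {dc_linear:.6f}")
print(f"DC part of quadratic response: {dc_quadratic:.6f}")
print(f"Analytic value CHI2*E0^2/2:    {CHI2 * E0**2 / 2:.6f}")
```

The nonzero average follows from the identity cos²(x) = (1 + cos 2x)/2: squaring the alternating signal produces a constant term plus a component at twice the drive frequency.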

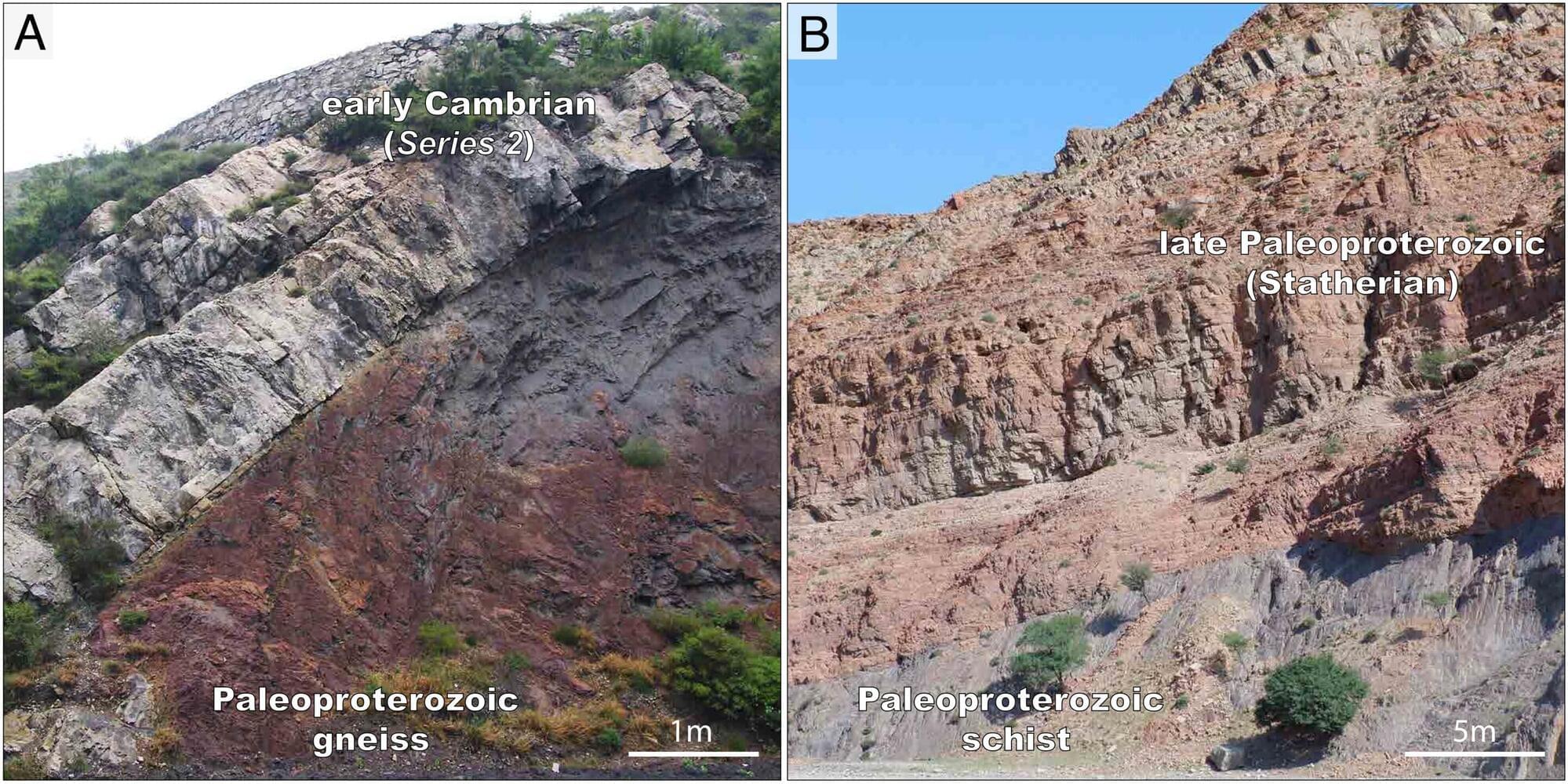

The Great Unconformity is a major gap in Earth’s geologic record. The missing layer between Precambrian and Cambrian rocks represents around a billion years of history. Amid much debate over the cause of the gap, a new study published in the Proceedings of the National Academy of Sciences indicates that the timing of the erosion leading to the Great Unconformity aligns with the assembly of the Columbia supercontinent, and that glaciation contributed only minimally.

The origin of the Great Unconformity is debated among geologists. Some believe that the ancient glaciation associated with “snowball Earth,” which occurred around 700 million years ago, is to blame. Others think tectonic processes associated with the Columbia and Rodinia supercontinent cycles are the main cause.

The Great Unconformity was first recognized in the layers of the Grand Canyon, and many subsequent studies took place there to attempt to determine a cause. Those studies showed variable timing and mechanisms. The authors of the new study think that the evidence for Neoproterozoic-period snowball Earth glaciation causing the unconformity at such large scales is weak.

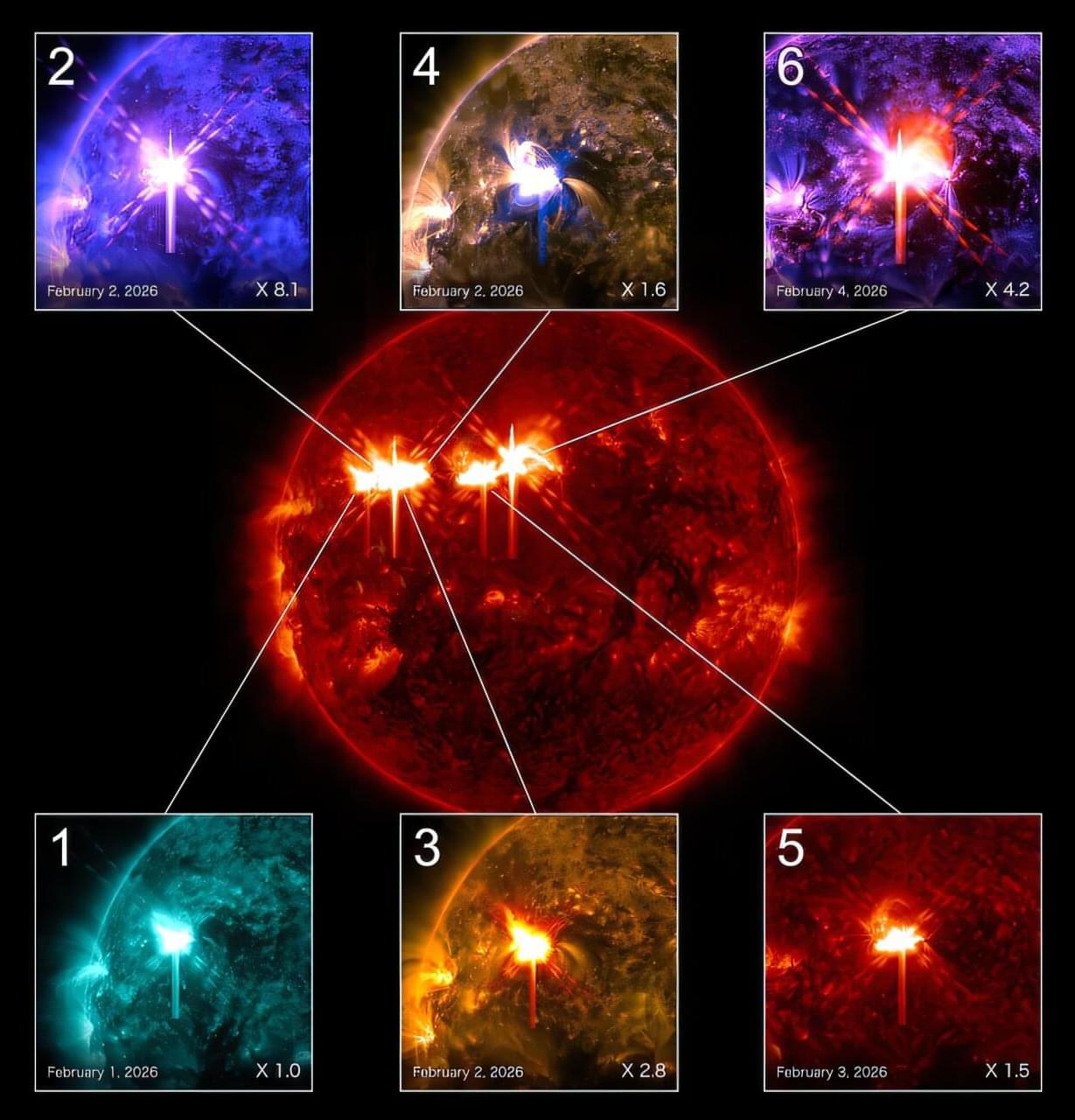

Co-author Dr. Willie Soon, from the Center for Environmental Research and Earth Sciences (CERES), added, “Nature gave us the perfect test. These far-side discoveries essentially validated our method in real time, proving that the underlying patterns we identified are reliable and work everywhere on the sun’s surface.”

Solar superflares are the most powerful eruptions the sun can produce. A direct hit from one of these storms could cause widespread power outages, damage satellites, disrupt GPS navigation, interfere with radio communications, and create radiation hazards for astronauts and airline passengers at high altitudes.

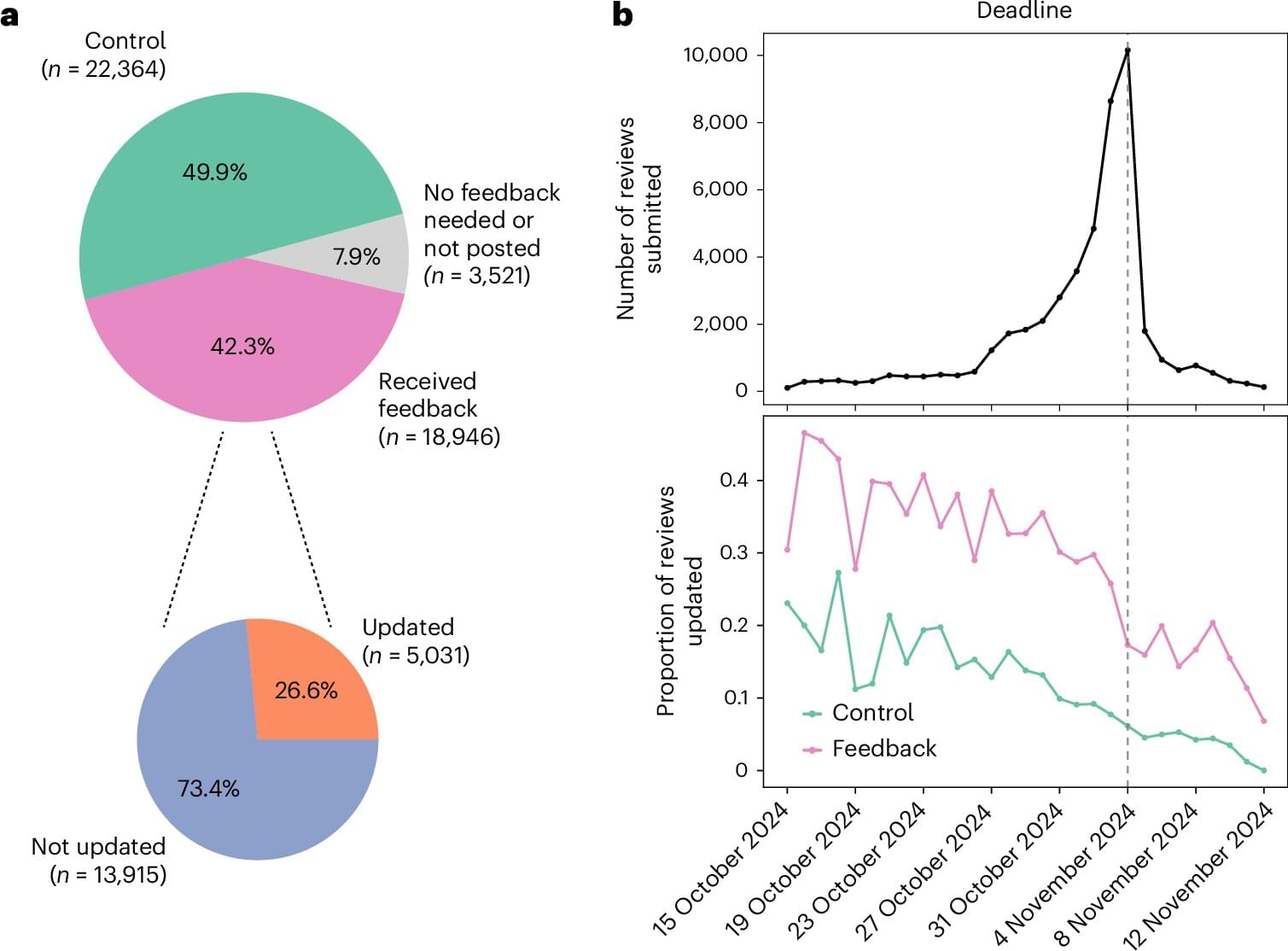

A new AI coach for scientists has been shown to significantly improve the quality of peer reviews, making them clearer and more helpful for authors. Peer review is essential to ensuring the integrity of scientific publications, but many researchers are dissatisfied with the quality of the feedback they receive. Common complaints include vague, short, and unhelpful reviews. For example, in a survey of 11,800 researchers, only 55.4% of respondents reported being satisfied with the quality of the feedback. The problem is exacerbated by the sheer volume of papers, which has left reviewers feeling overwhelmed.

But help for stressed-out reviewers may be at hand. A team of researchers has developed the Review Feedback Agent, a system that uses five large language models to scan reviews and provide private feedback to reviewers before the authors see them. They trained their AI reviewer by carefully prompting existing large language models, as they explain in a paper published in Nature Machine Intelligence.

The researchers tested their system in the paper review cycle before ICLR 2025, a leading conference in deep learning and machine learning. They randomly assigned around 20,000 reviews to receive AI feedback shortly after they were written. These automated “reviews of the reviews” were then sent back to the human reviewers as private feedback. Another 20,000 were placed in a control group that received no feedback at all.

We have known for several decades that the universe is expanding. Scientists use multiple techniques to measure the present-day expansion rate of the universe, known as the Hubble constant. These methods are internally consistent and based on the same physics, so all observed values of the Hubble constant should agree. But those that come from early-universe datasets disagree with those that come from late-universe datasets. This problem is known as the Hubble tension and is considered to be one of the most significant open questions in cosmology.

Now a team of astrophysicists, cosmologists, and physicists at The Grainger College of Engineering at the University of Illinois Urbana-Champaign and at the University of Chicago has developed a novel way to compute the Hubble constant using gravitational waves—tiny ripples in the spacetime fabric. The researchers were able to improve upon the accuracy of prior gravitational-wave methods of measuring the Hubble constant. As our capability to observe gravitational waves improves in the future, this new method can be used to make even more accurate measurements of the Hubble constant, bringing scientists closer to resolving the Hubble tension.

Illinois Physics Professor Nicolás Yunes said, “This result is very significant—it’s important to obtain an independent measurement of the Hubble constant to resolve the current Hubble tension. Our method is an innovative way to enhance the accuracy of Hubble constant inferences using gravitational waves.” Yunes is the founding director of the Illinois Center for Advanced Studies of the Universe (ICASU) on the Urbana campus.