Large language models (LLMs), artificial intelligence (AI) systems that can process human language and generate text in response to user queries, are now used daily by a growing number of people worldwide. While these models were initially used mainly to quickly source information or produce text for specific purposes, some people have now also started approaching them with personal issues or concerns.

This has given rise to various debates about the value and limitations of LLMs as tools for providing emotional support. For humans, offering emotional support in dialogue typically entails recognizing what another person is feeling and adjusting one's tone, words and communication style accordingly.

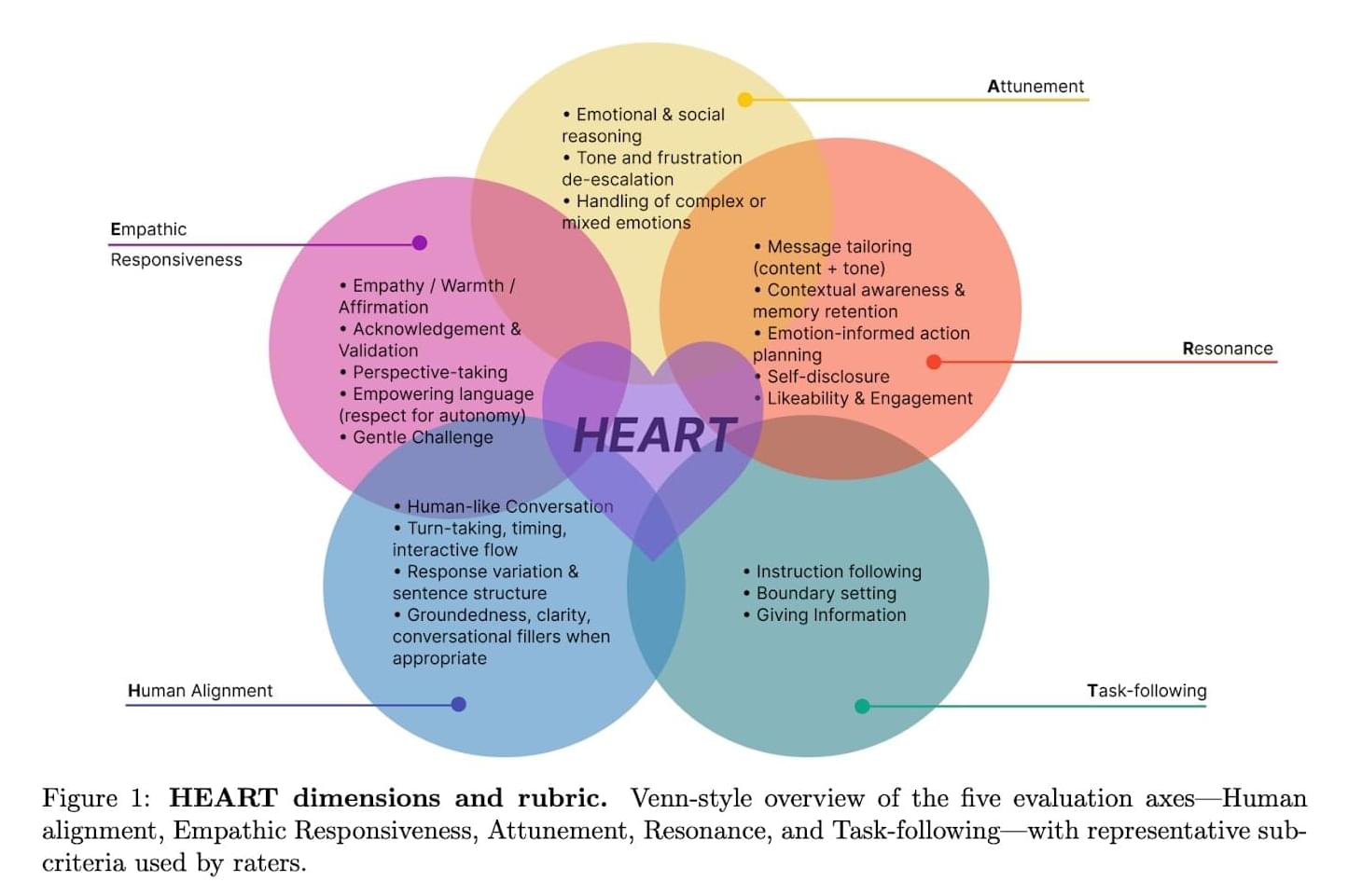

Researchers at Hippocratic AI, Stanford University, University of California San Diego and University of Texas at Austin recently developed a new structured method to evaluate the ability of both LLMs and humans to offer emotional support during multi-turn dialogues, that is, conversations involving several back-and-forth exchanges. This framework, dubbed HEART, was introduced in a paper published on the arXiv preprint server.