The ethics of clinical trials that deliberately infect people with a disease aren’t clear-cut – but there’s a strong case for doing more of them

AI companies are explicitly working toward AGI and are likely to succeed soon, possibly within years. Keep the Future Human explains how unchecked development of smarter-than-human, autonomous, general-purpose AI systems will almost inevitably lead to human replacement. But it doesn’t have to. Learn how we can keep the future human and experience the extraordinary benefits of Tool AI…

https://keepthefuturehuman.ai.

Chapters:

0:00 – The AI Race.

1:31 – Extinction Risk.

2:35 – AGI When?

4:08 – AI Will Take Power.

5:12 – Good News.

6:52 – Bad News.

8:11 – More Money, More Capability.

10:10 – AI-Assisted Alignment?

11:32 – Obedient vs Sovereign AI.

12:53 – AI Is a New Lifeform.

Original Videos:

• Keep the Future Human (with Anthony Aguirre)

• AI Ethics Series | Ethical Horizons in Adv…

Editing:

https://zeino.tv/

The journey “Up from Eden” could involve humanity’s growth in understanding, comprehending, and appreciating with greater love, truth, and wisdom, shaping a future worth living for.

AI is accelerating faster than human biology. What happens to humanity when the future moves faster than we can evolve?

Oxford philosopher Nick Bostrom, author of Superintelligence, says we are entering the biggest turning point in human history — one that could redefine what it means to be human.

In this talk, Bostrom explains why AI might be the last invention humans ever make, and how the next decade could bring changes that once took thousands of years in health, longevity, and human evolution. He warns that digital minds may one day outnumber biological humans — and that this shift could change everything about how we live and who we become.

Superintelligence will force us to choose what humanity becomes next.

Follow Closer To Truth on Instagram for daily videos, updates, and announcements: https://www.instagram.com/closertotruth/

AI consciousness, its possibility or probability, has burst into public debate, raising issues that range from AI ethics and rights to AI going rogue and harming humanity. We explore diverse views; we argue that AI consciousness depends on theories of consciousness.

Make a tax-deductible donation of any amount to help Closer To Truth continue making content like this: https://shorturl.at/OnyRq.

Philip Goff is a British author, panpsychist philosopher, and professor at Durham University whose research focuses on philosophy of mind and consciousness. Specifically, it focuses on how consciousness can be part of the scientific worldview.

Closer To Truth, hosted by Robert Lawrence Kuhn and directed by Peter Getzels, presents the world’s greatest thinkers exploring humanity’s deepest questions. Discover fundamental issues of existence. Engage new and diverse ways of thinking. Appreciate intense debates. Share your own opinions. Seek your own answers.

The Lifeboat Foundation Guardian Award is annually bestowed upon a respected scientist or public figure who has warned of a future fraught with dangers and encouraged measures to prevent them.

This year’s winner is Professor Roman V. Yampolskiy. Roman coined the term “AI safety” in a 2011 publication titled *Artificial Intelligence Safety Engineering: Why Machine Ethics Is a Wrong Approach*, presented at the Philosophy and Theory of Artificial Intelligence conference in Thessaloniki, Greece, and is recognized as a founding researcher in the field.

Roman is known for his groundbreaking work on AI containment, AI safety engineering, and the theoretical limits of artificial intelligence controllability. His research has been cited by over 10,000 scientists and featured in more than 1,000 media reports across 30 languages.

Watch his interview on *The Diary of a CEO* at [https://www.youtube.com/watch?v=UclrVWafRAI](https://www.youtube.com/watch?v=UclrVWafRAI), which has already received over 11 million views on YouTube alone. The Singularity has begun; please pay attention to what Roman has to say about it!

Professor Roman V. Yampolskiy, who coined the term “AI safety,” is the winner of the 2025 Guardian Award.

Today UNESCO’s Member States took the final step towards adopting the first global normative framework on the ethics of neurotechnology. The Recommendation, which will enter into force on November 12, establishes essential safeguards to ensure that neurotechnology contributes to improving the lives of those who need it the most, without jeopardizing human rights.

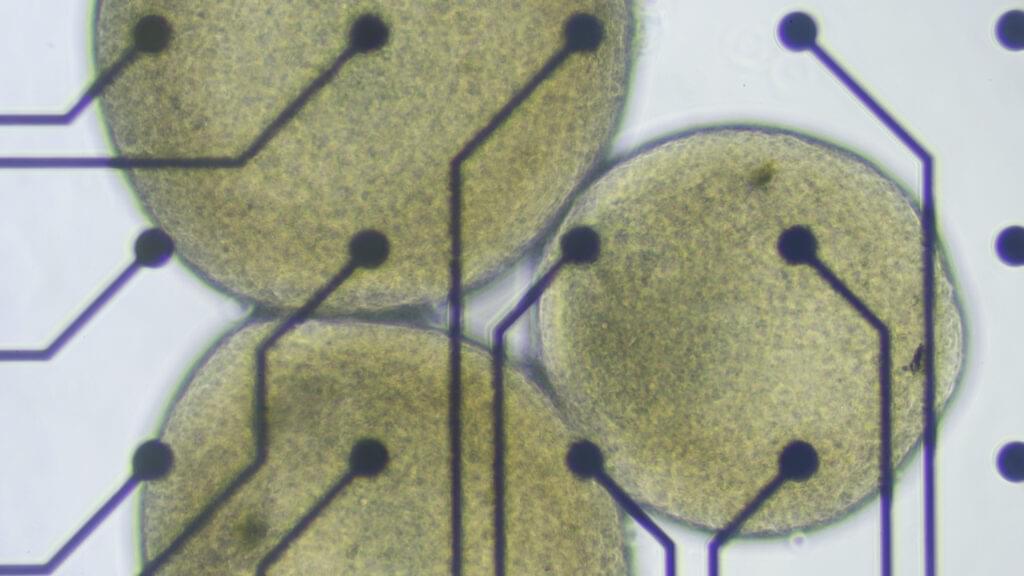

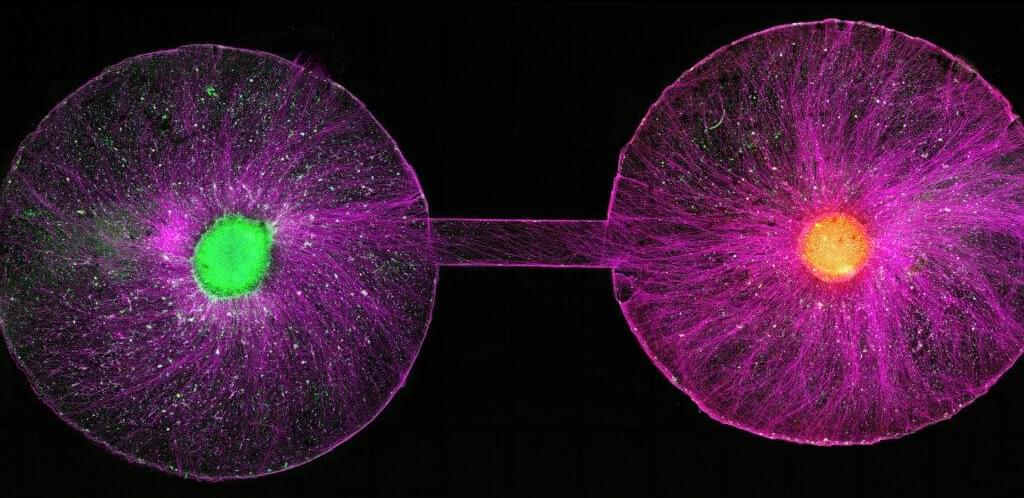

For the brain organoids in Lena Smirnova’s lab at Johns Hopkins University, there comes a time in their short lives when they must graduate from the cozy bath of the bioreactor, leave the warm, salty broth behind, and be plopped onto a silicon chip laced with microelectrodes. From there, these tiny white spheres of human tissue can simultaneously send and receive electrical signals that, once decoded by a computer, will show how the cells inside them are communicating with each other as they respond to their new environments.

More and more, it looks like these miniature lab-grown brain models are able to do things that resemble the biological building blocks of learning and memory. That’s what Smirnova and her colleagues reported earlier this year. It was a step toward establishing something she and her husband and collaborator, Thomas Hartung, are calling “organoid intelligence.”


Another would be to leverage those functions to build biocomputers — organoid-machine hybrids that do the work of the systems powering today’s AI boom, but without all the environmental carnage. The idea is to harness some fraction of the human brain’s stunning information-processing superefficiencies in place of building more water-sucking, electricity-hogging, supercomputing data centers.

Despite widespread skepticism, it’s an idea that’s started to gain some traction. Both the National Science Foundation and DARPA have invested millions of dollars in organoid-based biocomputing in recent years. And there are a handful of companies claiming to have built cell-based systems already capable of some form of intelligence. But to the scientists who first forged the field of brain organoids to study psychiatric and neurodevelopmental disorders and find new ways to treat them, this has all come as a rather unwelcome development.

At a meeting last week at the Asilomar conference center in California, researchers, ethicists, and legal experts gathered to discuss the ethical and social issues surrounding human neural organoids, which fall outside of existing regulatory structures for research on humans or animals. Much of the conversation circled around how and where the field might set limits for itself, which often came back to the question of how to tell when lab-cultured cellular constructs have started to develop sentience, consciousness, or other higher-order properties widely regarded as carrying moral weight.

The study points to a few other benefits: Modern antivenoms don’t address the tissue damage that can be wrought by snake venom, but the nanobodies in the new product seemed to decrease tissue injury in mice, even with delayed treatment. And since nanobodies are less likely to cause serious immune reactions, per the statement, clinicians could theoretically administer the new antivenom before the appearance of clear symptoms, instead of waiting in an attempt to avoid severe side effects.

Still, because the experiments were performed on lab animals, not humans, the new antivenom is nowhere near commercial availability—first, the concept must be proven in human subjects. As such, the team is working on improving the antivenom and securing more funding.

“We have both a moral and global responsibility to contribute to solving this problem,” Laustsen-Kiel says in the statement. “Our antivenom has the potential to fundamentally change how snakebites are treated around the world.”

In an effort to address these ethical grey areas, 17 leading scientists and bioethicists from five countries are urging the establishment of an international oversight body to monitor advances in the rapidly expanding field of human neural organoids and to provide ethical and policy guidance as the science continues to evolve. The call to action, published Thursday in Science, comes as U.S. government agencies are making new investments in organoid science aimed at accelerating drug discovery and reducing reliance on animal models of disease.

In September, the National Institutes of Health announced $87 million in initial contracts to establish a new center dedicated to standardizing organoid research. The move followed an earlier pledge by both the NIH and the Food and Drug Administration to reduce, and possibly replace, testing on mice, primates, and other animals with other methods — including organoids and organ-on-a-chip technologies — for developing certain medicines.

Government promotion of human stem cell models more broadly will only increase the recruitment of new researchers into the field of neural organoids, which has seen an explosion from a few dozen labs a decade ago to hundreds around the world now, said Sergiu Pasca, a pioneering neuroscientist and stem cell biologist at Stanford University who co-authored the Science commentary.

“Over the last 10 or 15 years, scientists have really started to understand the fundamental underlying biology of the aging process. And they broke this down into 12 hallmarks of aging.”


We track age by the number of birthdays we’ve had, but scientists are arguing that our cells tell a different, more truthful story. Our biological age reveals how our bodies are actually aging, from our muscle strength to the condition of our DNA.

The gap between these two numbers may hold the key to treating aging – which could help save 100,000 lives per day and win us $38 trillion.

00:00 Rethinking longevity.

01:27 Understanding aging.

02:58 Biological age and epigenetics.

04:29 New frontiers in longevity science.

08:04 Future possibilities and ethical questions.

10:24 The moral debate around living longer.