Are minds just processes? Can AI become conscious, morally wiser, or even part of a larger collective intelligence? Anders Sandberg and Joscha Bach discuss consciousness, AGI, hybrid minds, moral uncertainty, collective agency and the future of the cyborg Leviathan. It’s a deep and winding discussion with so many interesting topics covered!

0:00 Intro.

0:37 What is consciousness? Phenomenology — functionalism & panpsychism.

1:54 Causal boundaries — the mind is a causally organised process with a non-arbitrary functional boundary, sustained through time by feedback, control, and internal continuity.

3:20 Minds are not states — they are processes. We don’t see causal filtering in tables.

5:54 Epiphenomenalism is self-undermining if it has no causal role, and taking causation seriously pushes towards functionalism.

9:49 Methodological humility about armchair philosophy of mind.

12:41 Putnam-style brain-in-a-vat, and why standard objections to AI minds fall flat.

16:37 Is sentience required (or desirable) not just for moral competence in AI, but for moral motivation as well?

22:35 Why stepping outside yourself is powerful — seeing.

25:12 Are AIs born enlightened?

26:25 Are LLMs AGI yet? What’s still missing.

28:16 AI, hybrid minds, and the limits of human augmentation.

32:32 Can minds be extended — in humans, dogs, and cats?

36:19 Why human language may not be open-ended enough.

39:41 Why AI is so data-hungry — and why better algorithms must exist.

43:39 Why better representations matter more than raw compute (grokking was surprising).

48:46 How babies build a world model from touch and perception.

51:05 What comes after copilots: agent teams, multimodality and new AI workflows.

55:32 Can AI help us discover new forms of taste and aesthetics?

59:49 Using AI to learn art history and invent a transhumanist aesthetic.

1:01:47 When AI helps everyone look professional, what still counts as real skill?

1:03:56 What happens when the self starts to merge with AI.

1:05:43 How AI changes the way we think and create.

1:08:10 What happens when AI starts shaping human relationships.

1:11:18 Why feeling in control can matter more than being right.

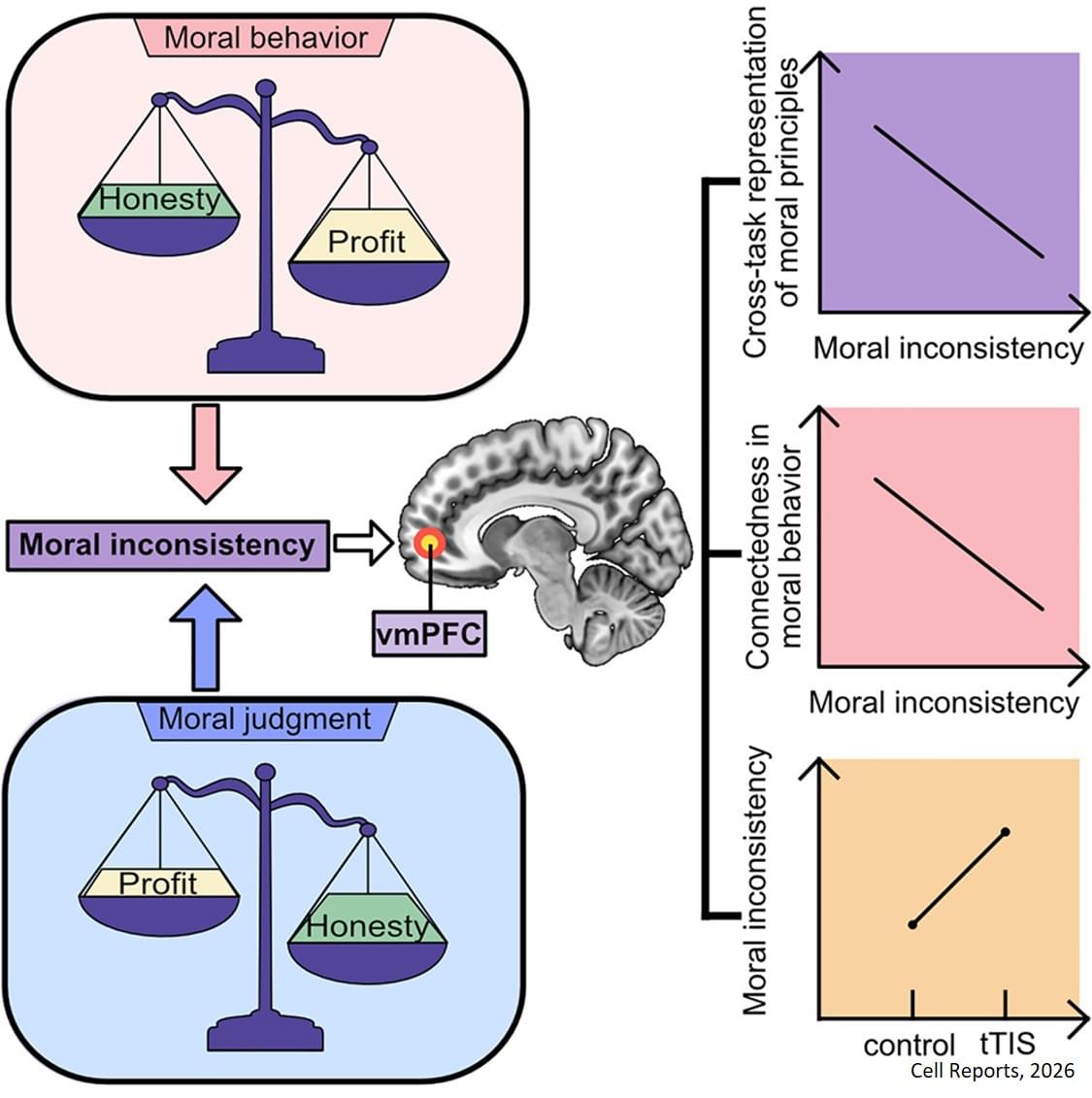

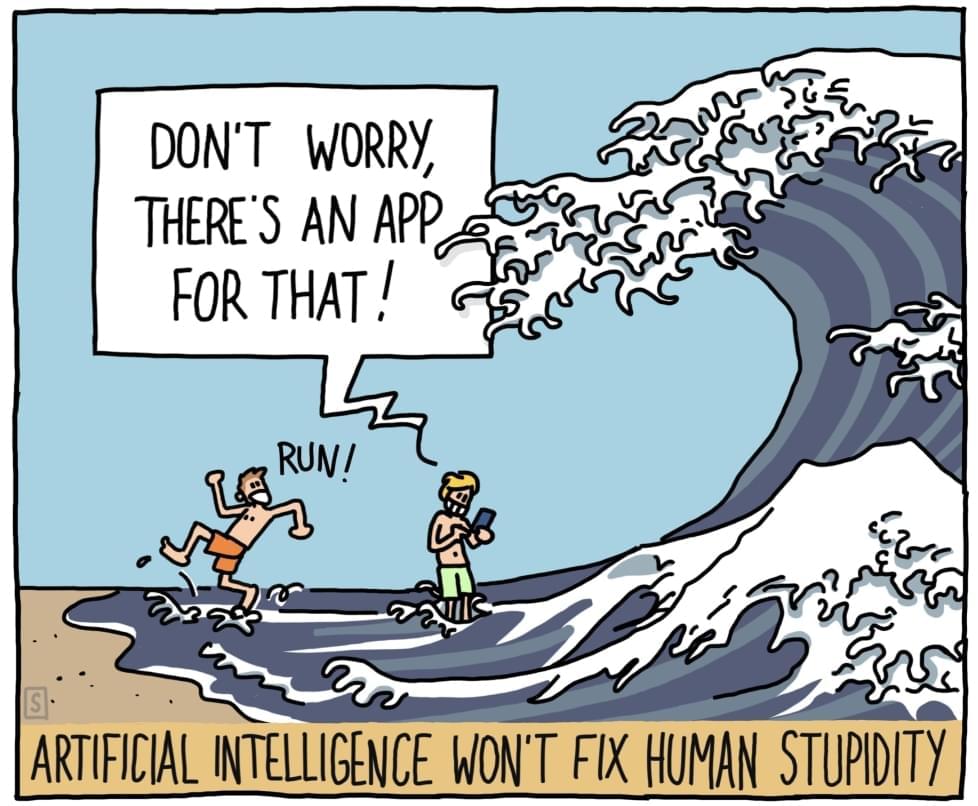

1:12:58 Why intelligence without wisdom is very dangerous.

1:17:45 AI via scaling statistical pattern matching vs symbolic (& causal) reasoning. Can LLMs learn causality or just correlation?

1:23:00 Will multimodal AI replace LLMs or use them as glue everywhere?

1:24:02 10 years to the singularity?

1:25:27 AI, coordination and the corruption problem.

1:29:47 Can AI become more moral than us (humans)? And if so, should it?

1:34:31 Why pluralism still leaves moral collisions unresolved.

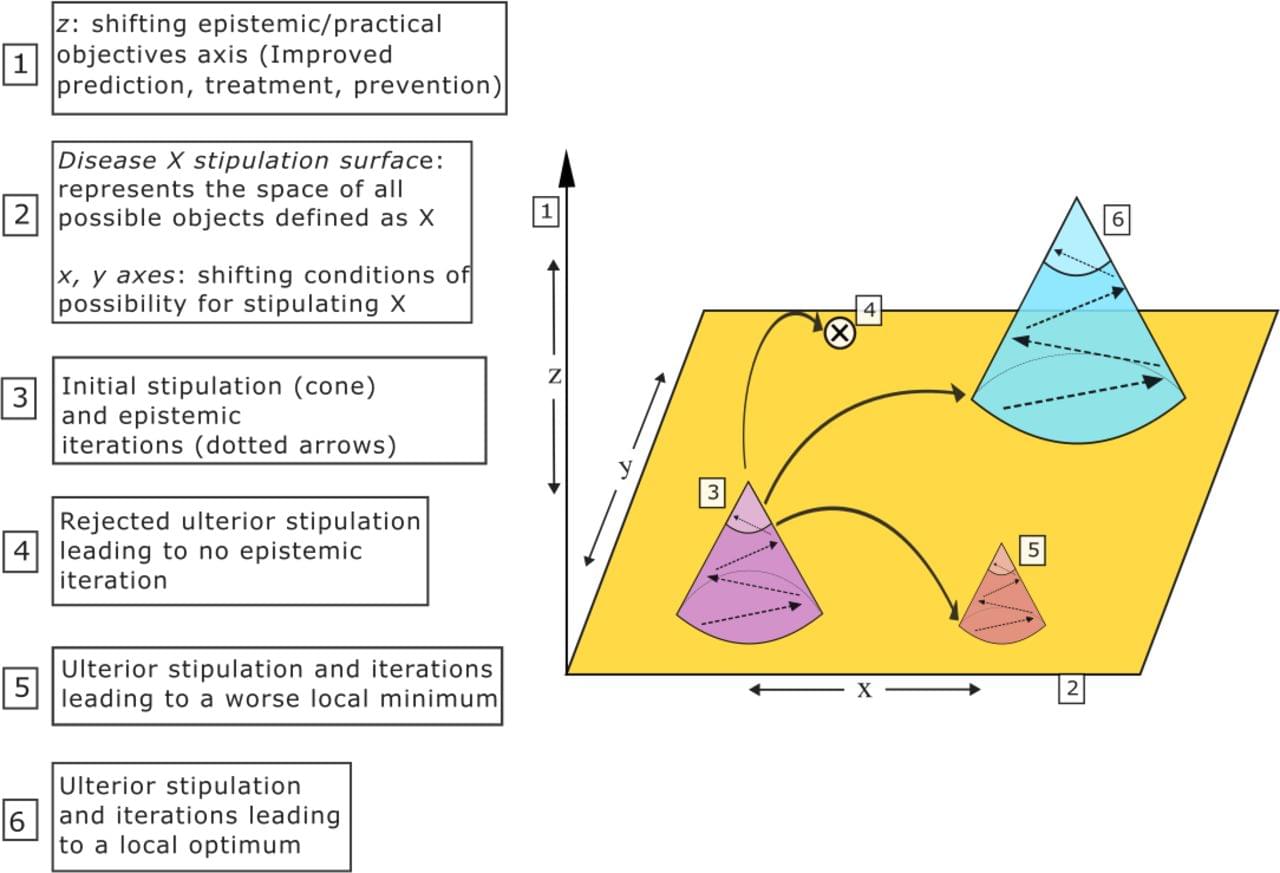

1:34:31 Traversing the landscape of norms (value).

1:38:14 Can ethics work across nested levels of existence? (from the person-affecting view to the matrioshka-affecting view)

1:43:08 Moral realism, evolution & game-theoretic symmetries.

1:48:01 Is there a global optimum of moral coordination? Is that god?

1:55:12 Metaphors of the body-politic, the body of Christ, Omega Point theory, Leviathan.

1:59:36 Will superintelligences converge into a cosmic singleton?

Post: https://www.scifuture.org/minds-in-th…

Thanks for tuning in! Please support SciFuture by subscribing and sharing!

Buy me a coffee? https://buymeacoffee.com/tech101z

Have any ideas about people to interview? Want to be notified about future events? Any comments about the STF series? Please fill out this form: https://docs.google.com/forms/d/1mr9P…

Kind regards,
Adam Ford

- Science, Technology & the Future — #SciFuture — http://scifuture.org
