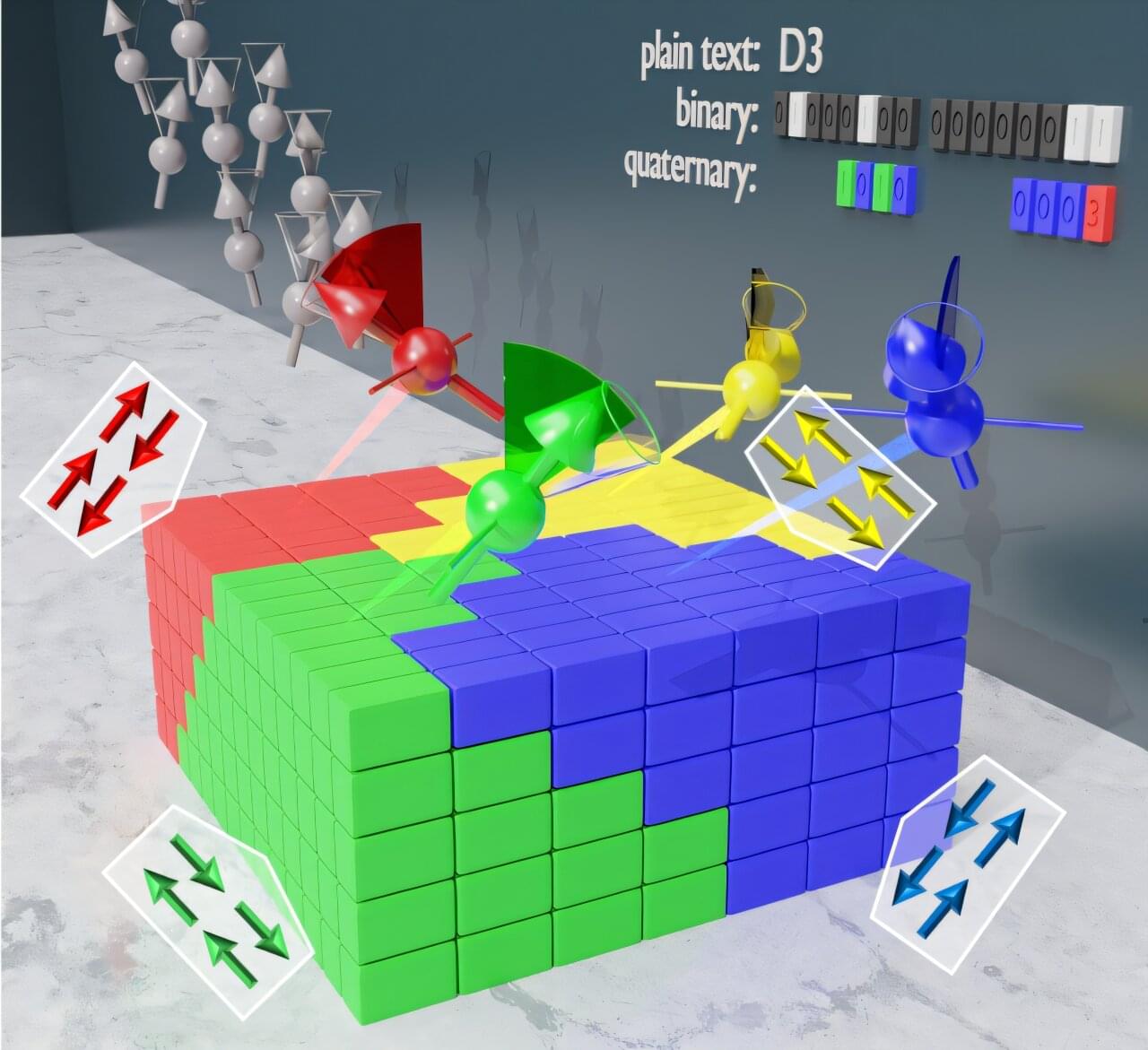

Today’s computers store information using only two values: 0 and 1. But as electronic devices shrink toward their physical limits, scientists are searching for new ways to pack more information into the same space. One idea is to use magnetism. In some materials, atoms behave like tiny magnets that can arrange themselves in different patterns. If each pattern represents a different value, a single memory element could hold more than two values.

In a study recently published in Nature Communications, researchers report a material in which these atomic magnets can form four different magnetic states. They showed that these states can be controlled using electric and magnetic fields and that they remain stable once created.

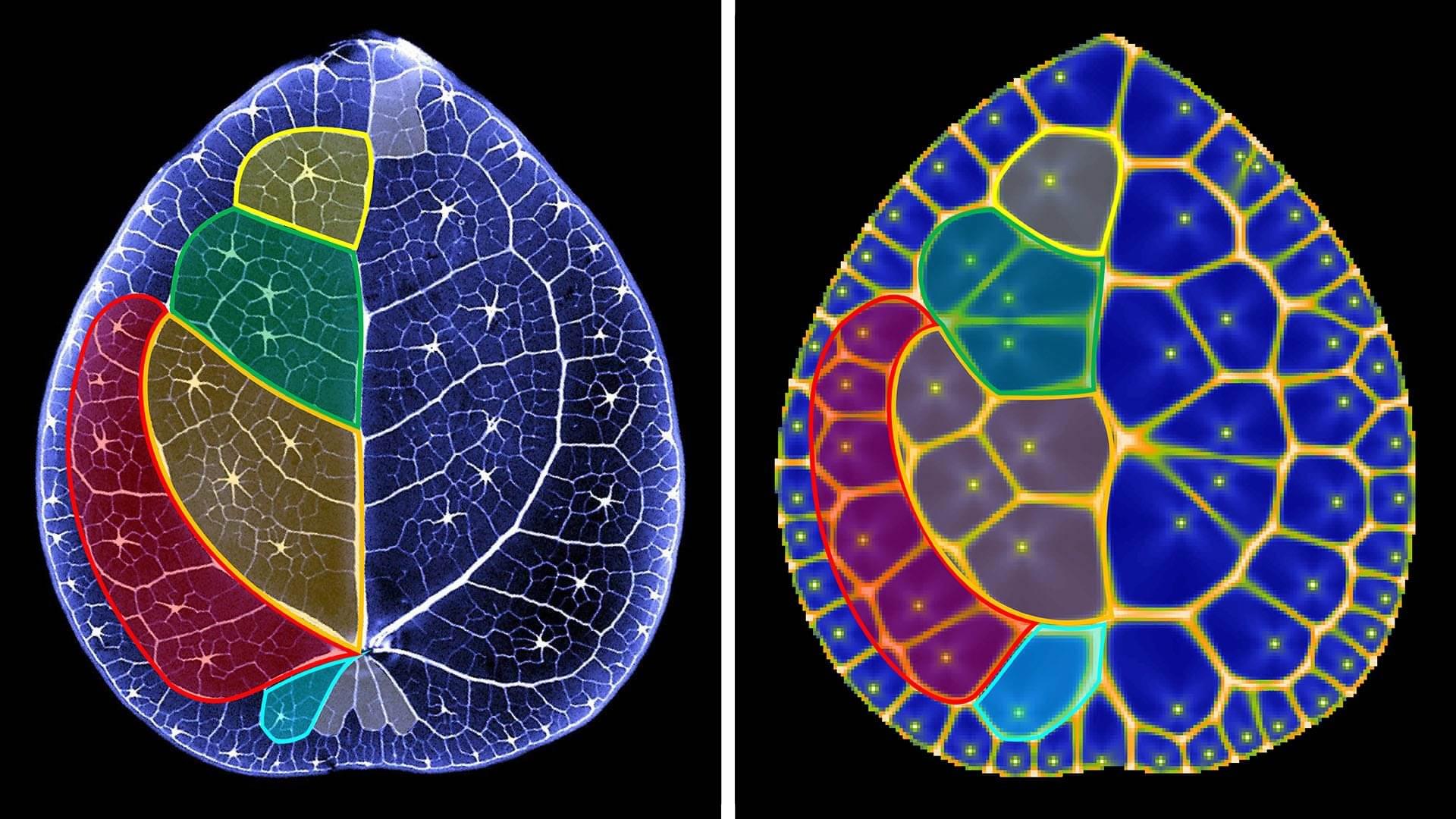

Using neutron experiments at the Institut Laue-Langevin, the scientists observed each of the four magnetic states created by applying electric and magnetic fields. The discovery hints at a future in which computer memory might store more information per element than today’s binary technologies.
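
As a rough illustration of what four states could mean for storage (a back-of-the-envelope estimate, not a figure from the study): an idealized memory element with $N$ distinguishable, stable states can hold at most $\log_2 N$ bits.

$$
\log_2 2 = 1 \ \text{bit (binary element)}, \qquad \log_2 4 = 2 \ \text{bits (four-state element)}
$$

In this idealized picture, a four-state element would hold twice as much information as a binary one in the same space. Whether a practical device could reach that figure is a separate question.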