As part of efforts to raise young developers across the Urhobo Nation, the Urhobo Innovation Hub has completed the training of 40 youths in website design, the Internet of Things (IoT), robotics and virtual reality.

The boot camp, which drew its participants from Urhobo youths aged 13 to 38, was held at the Michael and Cecilia Ibru University, Agbarha-Otor, Delta State.

The Hub is the brainchild of the Urhobo Economic and Investment Summit (Ekpobaro). It was initiated to raise young entrepreneurs of Urhobo extraction who will key into the reality of the new normal, and to groom seasoned developers who will make the Urhobo Nation proud.

It seeks to raise about 200 young developers, each with projects to show, before the end of the first quarter of 2024.

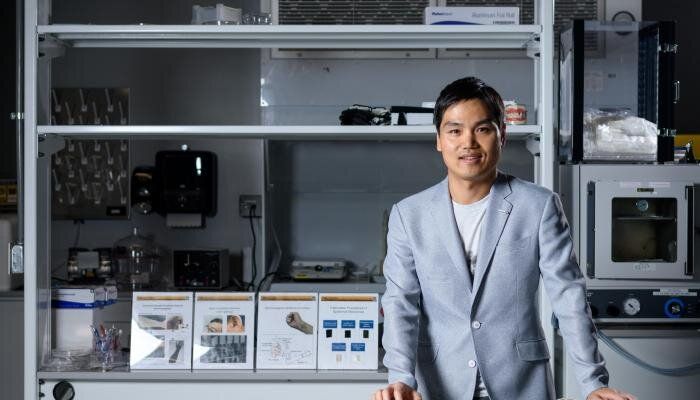

The Principal Partner of the Hub and Convener of the Urhobo Economic and Investment Group, Mr. Kingsley Ubiebi, enjoined all stakeholders to support young developers across the Urhobo Nation, as they are the problem solvers and leaders of tomorrow.

The boot camp ended with the issuance of certificates to participants, who were advised to embark on relevant projects before the second edition of the training.