This is an interesting story in one of Italy’s top 3 papers/sites about life extension science and millennials living beyond 100 years of age. It also features transhumanism: http://numerus.corriere.it/2015/08/06/i-millennials-camperan…ermettere/ and the English translation: https://translate.google.com/translate?hl=en&sl=it&u=http://…rev=search

Statistical trends tell us that people who are twenty today have an excellent chance of reaching one hundred in good health. Perhaps even much longer, if the Transhumanist Party wins its battles; the party is running in the 2016 US elections with the aim of directing more resources toward the fight against aging. But what does a world of such long-lived people look like? Will longer life benefit all populations or only a privileged few? Already today, life expectancy in the poorest countries is on average 18 years lower than in the richest countries, and even in Italy there is a three-year gap between Milan and Naples. And how do you redesign a social system in which people will live twenty or thirty years longer than they do today?

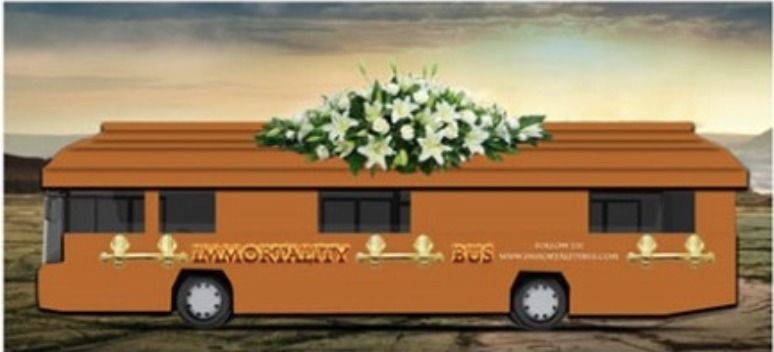

A red bus shaped like a coffin, complete with fake flowers on the roof, is traveling the roads of the United States. It is the idea of Zoltan Istvan, leader of the Transhumanist Party and candidate in the 2016 presidential election. Istvan will not become president, but his message is not trivial: with his campaign tour, he wants to draw attention to the battle against aging. He is calling for more funding for research and health care, and for more careers in technology, artificial intelligence, and medicine.
