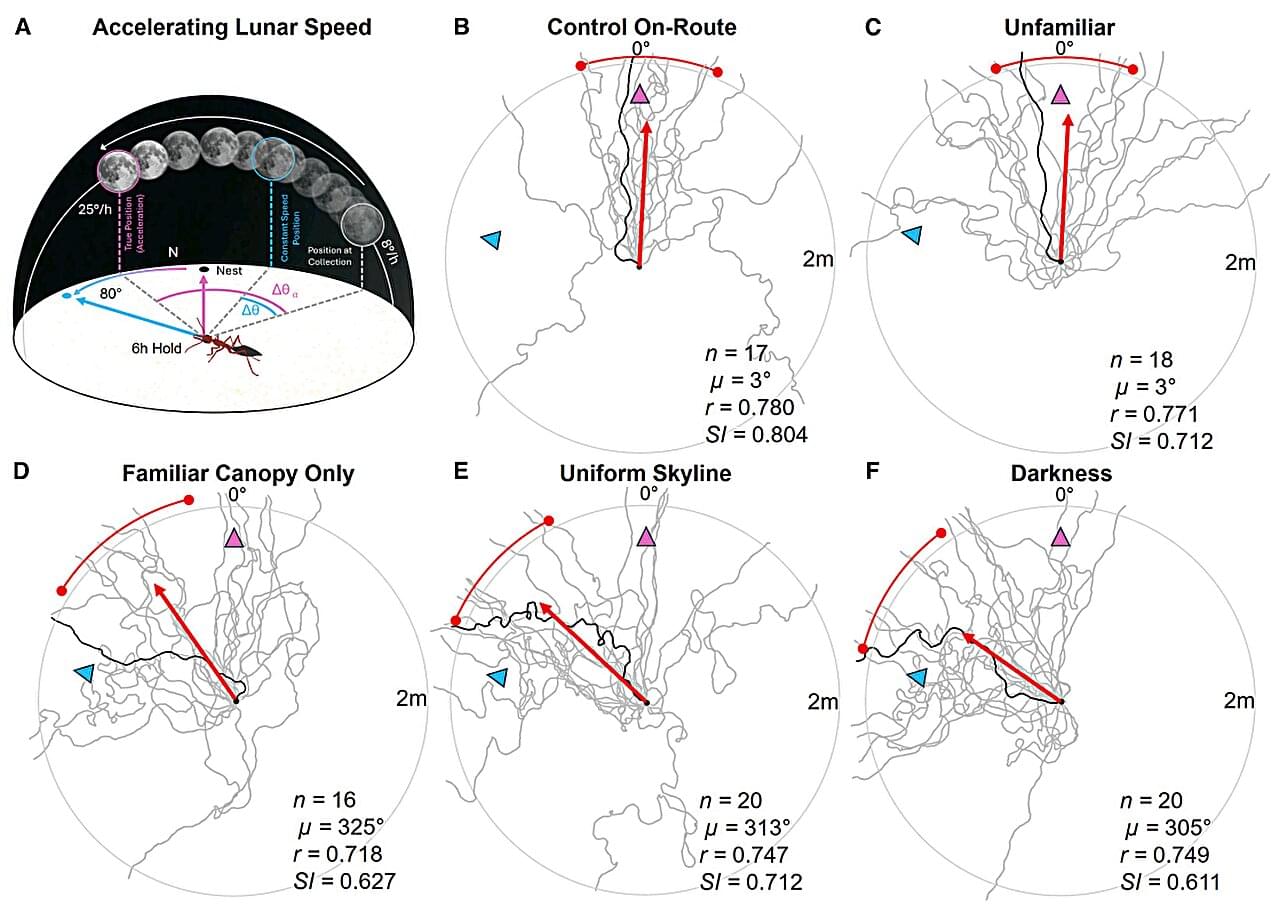

It’s well known that many animals, including migratory birds, butterflies, and even fish, use the sun for navigational purposes. Nocturnal animals are dealt a more difficult hand, however, as the moon’s path is far more variable. But a new study, published in Current Biology, has shown that nocturnal bull ants (Myrmecia midas) not only use the moon as a compass, but are also capable of accounting for speed variations in its movement.

Although it shifts slightly every day, the sun’s path across the sky is much more stable than the moon’s. As most people know, the moon goes through monthly phases; its position in the sky varies dramatically over the month, and it is entirely absent from the night sky for part of each cycle. Variations in the speed of its apparent movement across the sky further complicate things. Because of this, it was unclear whether nocturnal animals could accurately predict the moon’s complex movement for navigation.

Some diurnal insects, like certain ants and bees, use time-compensated sun compasses that adjust for the sun’s daily movement. Past studies have shown that some nocturnal insects use lunar or polarized light cues, but they did not show whether these cues were used for time-compensated lunar navigation.