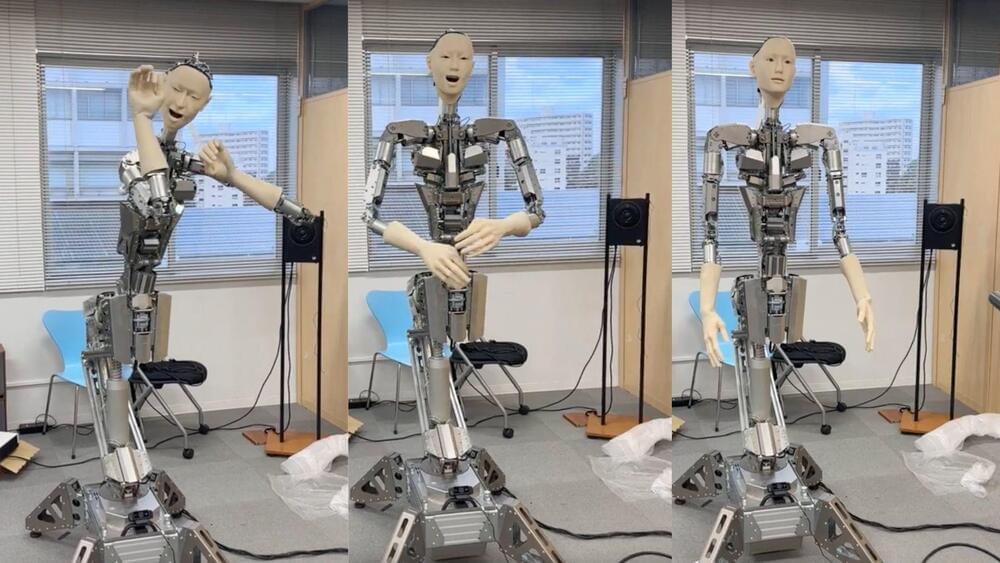

Google has released the Pro version of its latest AI model, Gemini, and company sources say it has outperformed GPT-3.5 (the model behind the free version of ChatGPT) in broad testing. According to performance reports, the flagship Gemini Ultra exceeds current state-of-the-art results on 30 of the 32 widely used academic benchmarks in large language model (LLM) research and development. Google has been accused of lagging behind OpenAI, whose ChatGPT is widely regarded as the most popular and powerful chatbot in the AI space. Google says Gemini was trained to be multimodal, meaning it can process different types of media such as text, images, video, and audio.

Insider also reports that, with a score of 90.0%, Gemini Ultra is the first model to outperform human experts on MMLU (Massive Multitask Language Understanding), a benchmark that spans 57 subjects, including math, physics, history, law, medicine, and ethics, to test both world knowledge and problem-solving ability.

Gemini comes in three sizes: Ultra, the flagship model; Pro; and Nano, which is designed for mobile devices. According to TechCrunch, the company says it will make Gemini Pro available to enterprise customers through Vertex AI, and to developers in AI Studio, on December 13. Reports indicate that the Pro version can also be accessed via Bard, the company’s chatbot interface.