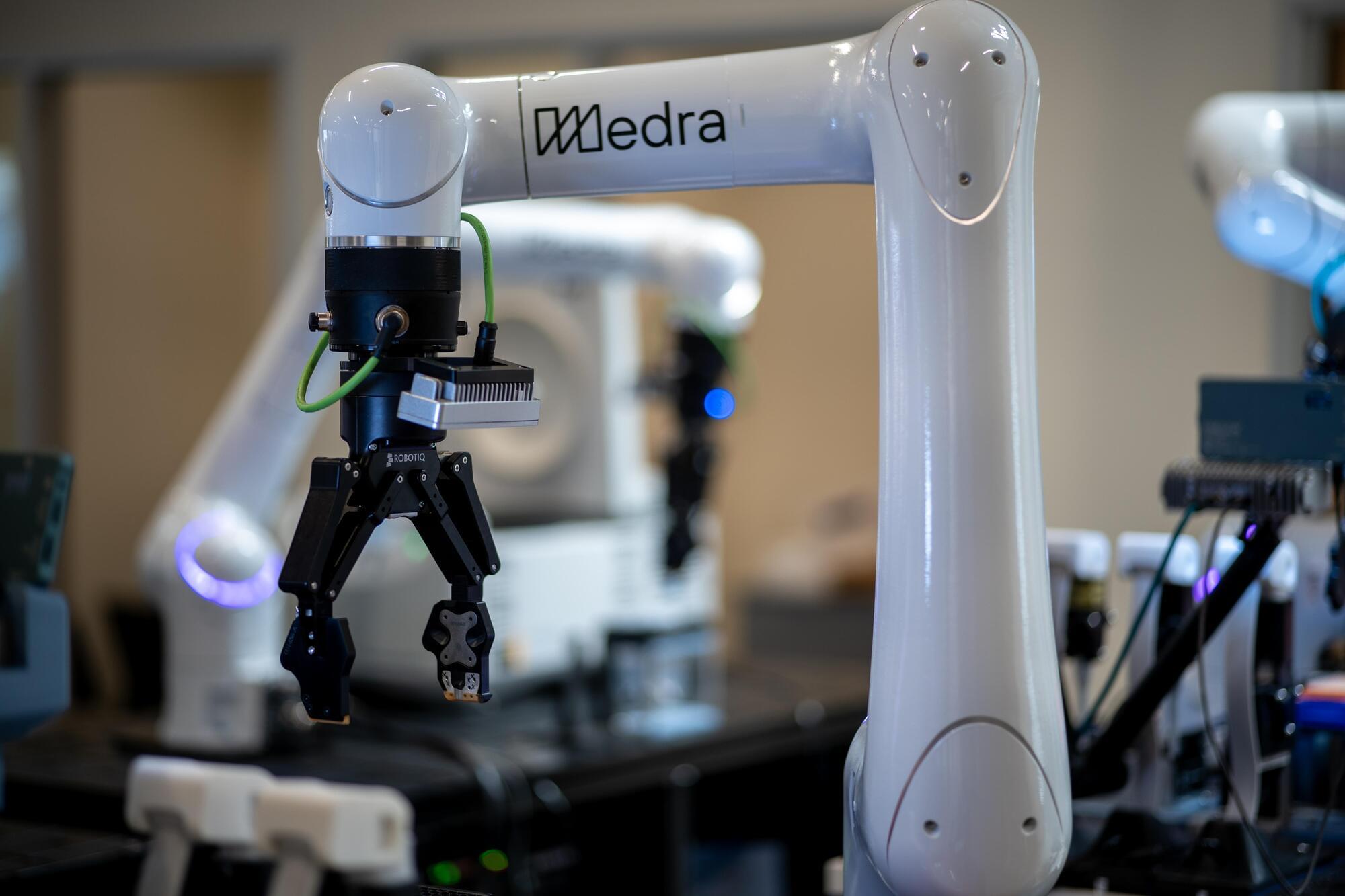

Over the past few decades, technological advances have fueled innovation across a wide range of fields. Emerging and rapidly developing technologies, such as artificial intelligence (AI) systems, three-dimensional (3D) and four-dimensional (4D) printing, digital twins (i.e., virtual representations of physical objects, systems or processes) and advanced robots, are set to further transform many industries and sectors.

Researchers at London South Bank University explored the idea of metaverse manufacturing, an industrial ecosystem that would blend technology-enhanced physical production processes with immersive virtual environments. In a paper published in the Journal of the Royal Society Interface, they set out to envision how this ecosystem could work and which technologies it would rely on, while also considering its possible advantages for sustainability and productivity.

The study was conducted within the Mechanical Intelligence (MI) Research Group at London South Bank University, which focuses on bioinspired design and adaptive engineering systems.