
A reader asked me a question this week that I have been thinking about ever since.

She did not ask whether AI could malfunction. She did not ask whether bad actors could misuse it. She asked something sharper:

Can a system produce bad outcomes systematically, even when intent is good, and nothing is broken?

The answer is yes. And it is the most dangerous category of bad outcome, because nobody is at fault and nothing is broken.

We have all the evidence we need. Amazon ran into it. YouTube ran into it. Hospitals are running into it now. AI labs are about to run into it at a planetary scale. And almost nobody is talking about why.

A 1975 economic principle explains it cleanly. A reader’s question forced me to refine an argument I have been making for years.
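The 1975 principle is Goodhart's law (the essay's URL names it), usually paraphrased as "when a measure becomes a target, it ceases to be a good measure." The toy sketch below is an illustration, not the essay's own model. `true_value` and `proxy_metric` are hypothetical functions: the proxy tracks the goal at first, then keeps rising after the goal has peaked. The same well-intentioned optimizer, with no bug anywhere, ends up in very different places depending on which one it is pointed at.

```python
from collections.abc import Callable

def true_value(x: float) -> float:
    """Hypothetical goal we actually care about; it peaks at x = 5."""
    return x * (10.0 - x)

def proxy_metric(x: float) -> float:
    """Hypothetical measurable proxy; it rises with the goal early but never peaks."""
    return x

def greedy_climb(metric: Callable[[float], float], step: float = 0.5, limit: float = 20.0) -> float:
    """Keep stepping right while the metric improves; the optimizer itself is correct."""
    x = 0.0
    while x + step <= limit and metric(x + step) > metric(x):
        x += step
    return x

x_proxy = greedy_climb(proxy_metric)
x_goal = greedy_climb(true_value)
print(f"optimize the proxy: x = {x_proxy}, true value = {true_value(x_proxy)}")  # x = 20.0, true value = -200.0
print(f"optimize the goal:  x = {x_goal}, true value = {true_value(x_goal)}")    # x = 5.0, true value = 25.0
```

Both runs use identical optimizer code; only the objective differs, which is the sense in which nothing is broken.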

New essay: [https://www.singularityweblog.com/goodharts-law-ai/](https://www.singularityweblog.com/goodharts-law-ai/)

Aggregated AI represents a fundamental rezoning of the digital landscape. It is the architectural foundation of the ultimate digital 15-minute city.

Modern urban planners are increasingly rallying around a transformational concept known as the “15-minute city.” The philosophy is simple but profound: a neighborhood should be designed so that everything a resident needs for daily life—work, groceries, healthcare, education, and leisure—is accessible within a 15-minute walk or bike ride. It is a direct rejection of the sprawling, car-centric metropolis that forces people to spend large fractions of their lives commuting from one isolated zone to another.

When you look at the architecture of the modern internet, it becomes painfully obvious that we are living in the digital equivalent of urban sprawl.

For the past two decades, we have built a digital environment defined by vast distances and fragmented zones. We have distinct destinations for every conceivable task. You commute to one platform to analyze data, travel to another to manage client relationships, drive over to a different interface to write code, and navigate a maze of disparate chat windows to communicate. The modern knowledge worker spends an inordinate amount of their day stuck in digital traffic, constantly context-switching, moving data between incompatible silos, and navigating a sprawling ecosystem that was built for the benefit of the platforms, not the people who inhabit them.

Until a few years ago, no one had heard of bixonimania. Then, in 2024, a group of scientists posted findings online announcing the condition, which they claimed affected the eyes after computer use. However, the scientists had made it up: not just the work, but the authors' names, affiliations, locations and funding, which they attributed to the University of Fellowship of the Ring and the Galactic Triad.

Large language models like ChatGPT and Gemini treated it as real anyway, and in doing so, helped turn a fictional disease into a legitimate-sounding health concern.

Bixonimania is not an isolated case. Being deceived—whether you are a person or an AI model—is concerningly common, in science and beyond. Whether we’re talking about AI hallucinations, state-backed disinformation or just everyday lies, humans have a remarkable knack for naivety, owing to our biases and increasing need to outsource learning to others. These are problems we—individually and collectively—urgently need to better understand and overcome.

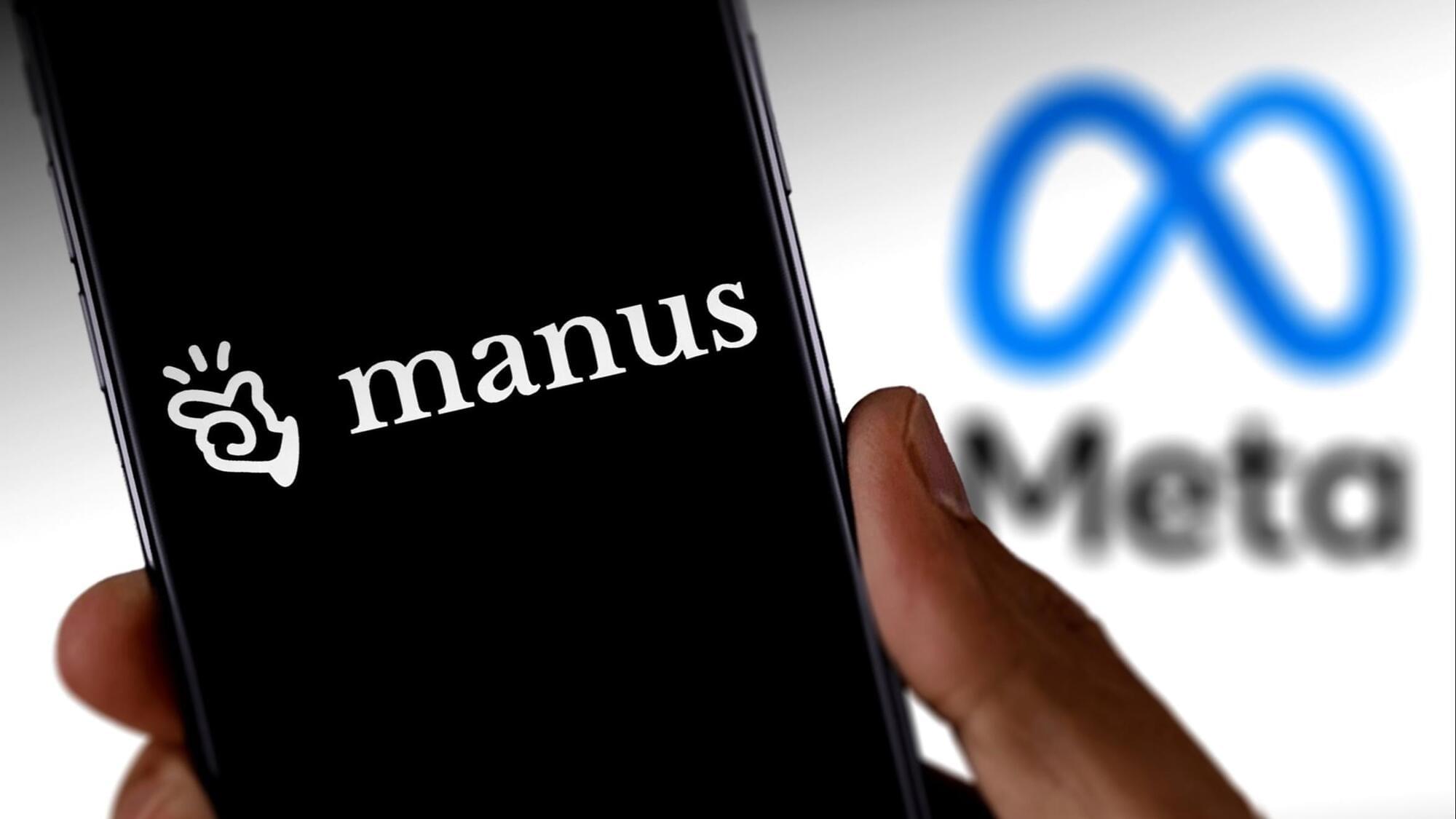

Ultimately, QIML shows that we don't need a fully fault-tolerant quantum computer to see results. By using quantum processors to learn the complex "rules" of chaos, we can give classical computers the boost they need to make reliable, long-term predictions about the most turbulent environments in the natural world.

Modeling high-dimensional dynamical systems remains one of the most persistent challenges in computational science. Partial differential equations (PDEs) provide the mathematical backbone for describing a wide range of nonlinear, spatiotemporal processes across scientific and engineering domains (1–3). However, chaotic systems are notoriously sensitive to initial conditions and to the floating-point precision used to compute them (4–7), making it highly challenging to extract stable, predictive models from data. Modern machine learning (ML) techniques often struggle in this regime: While they may fit short-term trajectories, they fail to learn the invariant statistical properties that govern long-term system behavior. These challenges are compounded in high-dimensional settings, where data are highly nonlinear and contain complex multiscale spatiotemporal correlations.
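The gap between fitting short-term trajectories and capturing invariant statistics shows up even in a one-dimensional chaotic map. The sketch below uses the logistic map x -> 4x(1 - x), a textbook example chosen here for illustration (it is not one of the systems the paper studies). Two orbits that start 1e-12 apart disagree completely within a few dozen steps, yet both histograms match the map's known invariant density, 1/(π√(x(1 - x))), to within sampling noise.

```python
import math

def logistic_orbit(x0: float, n: int) -> list[float]:
    """Return n points of the chaotic logistic map x -> 4x(1 - x), starting at x0."""
    xs = [x0]
    for _ in range(n - 1):
        x = xs[-1]
        xs.append(4.0 * x * (1.0 - x))
    return xs

def bin_fractions(xs: list[float], bins: int = 10) -> list[float]:
    """Fraction of points in each of `bins` equal-width bins on [0, 1]."""
    counts = [0] * bins
    for x in xs:
        counts[min(int(x * bins), bins - 1)] += 1
    return [c / len(xs) for c in counts]

def invariant_fractions(bins: int = 10) -> list[float]:
    """Exact bin masses of the invariant density 1 / (pi * sqrt(x * (1 - x)))."""
    def cdf(x: float) -> float:
        return (2.0 / math.pi) * math.asin(math.sqrt(x))
    return [cdf((k + 1) / bins) - cdf(k / bins) for k in range(bins)]

n = 50_000
a = logistic_orbit(0.2, n)
b = logistic_orbit(0.2 + 1e-12, n)  # same start, perturbed in the 12th decimal

# Short term: first step at which the two orbits differ by more than 0.1.
divergence_step = next(i for i in range(n) if abs(a[i] - b[i]) > 0.1)

# Long term: both orbits reproduce the same invariant statistics.
exact = invariant_fractions()
gap_a = max(abs(p - q) for p, q in zip(bin_fractions(a), exact))
gap_b = max(abs(p - q) for p, q in zip(bin_fractions(b), exact))

print(f"orbits separate by > 0.1 after {divergence_step} steps")
print(f"largest bin error vs. invariant density: {gap_a:.4f} and {gap_b:.4f}")
```

A pointwise forecast of this map is useless after a few dozen steps, while the histogram is stable; a model trained only on short-horizon error has no particular reason to learn the latter.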

ML has seen transformative success in domains such as large language models (8, 9), computer vision (10, 11), and weather forecasting (12–15), and it is increasingly being adopted in scientific disciplines under the umbrella of scientific ML (16). In fluid mechanics, in particular, ML has been used to model complex flow phenomena, including wall modeling (17, 18), subgrid-scale turbulence (19, 20), and direct flow field generation (21, 22). Physics-informed neural networks (23, 24) attempt to inject domain knowledge into the learning process, yet even these models struggle to deliver the long-term stability and generalization that high-dimensional dynamical systems demand. To address this, generative models such as generative adversarial networks (25) and operator-learning architectures such as DeepONet (26) and Fourier neural operators (FNO) (27) have been proposed. While neural operators offer discretization invariance and strong representational power for PDE-based systems, they still suffer from error accumulation and prediction divergence over long horizons, particularly in turbulent and other chaotic regimes (28, 29). Recent work, such as DySLIM (30), enhances stability by leveraging invariant statistical measures. However, these methods depend on estimating such measures from trajectory samples, which can be computationally intensive and inaccurate for chaotic systems, especially in high-dimensional cases. These limitations have prompted exploration into alternative computational paradigms. Quantum machine learning (QML) has emerged as a possible candidate due to its ability to represent and manipulate high-dimensional probability distributions in Hilbert space (31).
Quantum circuits can exploit entanglement and interference to express rich, nonlocal statistical dependencies using fewer parameters than their classical counterparts, which makes them well suited for capturing invariant measures in high-dimensional dynamical systems, where long-range correlations and multimodal distributions frequently arise (32). QML and quantum-inspired ML have already demonstrated potential in fields such as quantum chemistry (33, 34), combinatorial optimization (35, 36), and generative modeling (37, 38). However, the field is constrained on two fronts: Fully quantum approaches are limited by noisy intermediate-scale quantum (NISQ) hardware noise and scalability (39), while quantum-inspired algorithms, being classical simulations, cannot natively leverage crucial quantum effects such as entanglement to efficiently represent the complex, nonlocal correlations found in such systems. These challenges limit the standalone utility of QML in scientific applications today. Instead, hybrid quantum-classical models provide a promising compromise, where quantum submodules work together with classical learning pipelines to improve expressivity, data efficiency, and physical fidelity. In quantum chemistry, this hybrid paradigm has proven feasible, notably through quantum mechanical/molecular mechanical coupling (40, 41), where classical force fields are augmented with quantum corrections. Within such frameworks, techniques such as quantum-selected configuration interaction (42) have been used to enhance accuracy while keeping the quantum resource requirements tractable. In the broader landscape of quantum computational fluid dynamics, progress has been made toward developing full quantum solvers for nonlinear PDEs. Recent works by Liu et al. (43) and Sanavio et al. (44, 45) have successfully applied Carleman linearization to the lattice Boltzmann equation, offering a promising pathway for simulating fluid flows at moderate Reynolds numbers.
These approaches, typically using algorithms such as Harrow-Hassidim-Lloyd (HHL) (46), promise exponential speedups but generally necessitate deep circuits and fault-tolerant hardware.
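The phrase "rich, nonlocal statistical dependencies" above can be made concrete with a two-qubit statevector simulation in plain Python (an illustration chosen here, not the paper's circuit). A Hadamard on the first qubit followed by a CNOT turns |00⟩ into the Bell state (|00⟩ + |11⟩)/√2. Measuring it gives 00 or 11 with probability 1/2 each: each qubit on its own is a fair coin, but the two outcomes always agree, a joint distribution that no product of single-qubit states can produce, since product states always give independent outcomes.

```python
import math

# Amplitudes indexed by basis state |q0 q1> -> index 2*q0 + q1.
State = list[complex]

def apply_hadamard_q0(state: State) -> State:
    """Hadamard on qubit 0 (the high-order bit)."""
    h = 1.0 / math.sqrt(2.0)
    out = [0j] * 4
    for q1 in (0, 1):
        a0, a1 = state[q1], state[2 + q1]  # amplitudes with q0 = 0 and q0 = 1
        out[q1] = h * (a0 + a1)
        out[2 + q1] = h * (a0 - a1)
    return out

def apply_cnot_q0_to_q1(state: State) -> State:
    """CNOT with control q0 and target q1: swaps the |10> and |11> amplitudes."""
    return [state[0], state[1], state[3], state[2]]

def born_probabilities(state: State) -> dict[str, float]:
    """Measurement probabilities |amplitude|^2 keyed by bit string 'q0q1'."""
    return {f"{i >> 1}{i & 1}": abs(a) ** 2 for i, a in enumerate(state)}

bell = apply_cnot_q0_to_q1(apply_hadamard_q0([1 + 0j, 0j, 0j, 0j]))
probs = born_probabilities(bell)
print(probs)

# Each qubit alone is a fair coin ...
p_q0_is_1 = probs["10"] + probs["11"]
p_q1_is_1 = probs["01"] + probs["11"]
# ... but P(11) is far from the product of the marginals, so the bits are not independent.
print(probs["11"], p_q0_is_1 * p_q1_is_1)
```

An n-qubit register carries 2^n such amplitudes, which is the sense in which a circuit manipulates a high-dimensional probability distribution.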

Quantum-enhanced machine learning (QEML) combines the representational richness of quantum models with the scalability of classical learning. By leveraging uniquely quantum properties such as superposition and entanglement, QEML can explore richer feature spaces and capture complex correlations that are challenging for purely classical models. Recent successes in quantum-enhanced drug discovery (37), where hybrid quantum-classical generative models have produced experimentally validated candidates rivaling state-of-the-art classical methods, demonstrate the practical potential of QEML even before full quantum advantage is achieved. Despite these strengths, practical barriers remain. QEML pipelines require repeated quantum-classical communication during training and rely on costly quantum data-embedding and measurement steps, which slow computation and limit accessibility across research institutions.
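The measurement cost is easiest to see on a single qubit. The sketch below assumes angle encoding (a classical feature x is loaded with the rotation RY(x), giving outcome 0 with probability cos²(x/2)) and simulates measurement shots with Python's `random` module instead of real hardware. The exact Z expectation value is cos x, but a processor only returns individual ±1 outcomes, so the estimate's standard error shrinks like |sin x|/√shots: each extra decimal digit of precision costs roughly 100 times more circuit executions.

```python
import math
import random

def estimate_z(x: float, shots: int, rng: random.Random) -> float:
    """Estimate the Z expectation value of RY(x)|0> from simulated shots.

    Assumed angle encoding: outcome 0 (recorded as +1) has probability
    cos^2(x / 2); outcome 1 (recorded as -1) has probability sin^2(x / 2).
    """
    p0 = math.cos(x / 2.0) ** 2
    total = sum(1 if rng.random() < p0 else -1 for _ in range(shots))
    return total / shots

rng = random.Random(0)   # fixed seed so the run is reproducible
x = 1.0                  # classical feature value to embed
exact = math.cos(x)      # exact expectation value for RY(x)|0>

results = {}
for shots in (100, 10_000, 100_000):
    results[shots] = estimate_z(x, shots, rng)
    expected_error = abs(math.sin(x)) / math.sqrt(shots)  # one standard error
    print(f"{shots:>7} shots: estimate {results[shots]:+.4f}, "
          f"error {abs(results[shots] - exact):.4f}, "
          f"typical error ~{expected_error:.4f}")
```

In a hybrid training loop this cost is paid for every expectation value at every optimization step, on top of the quantum-classical round trips.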

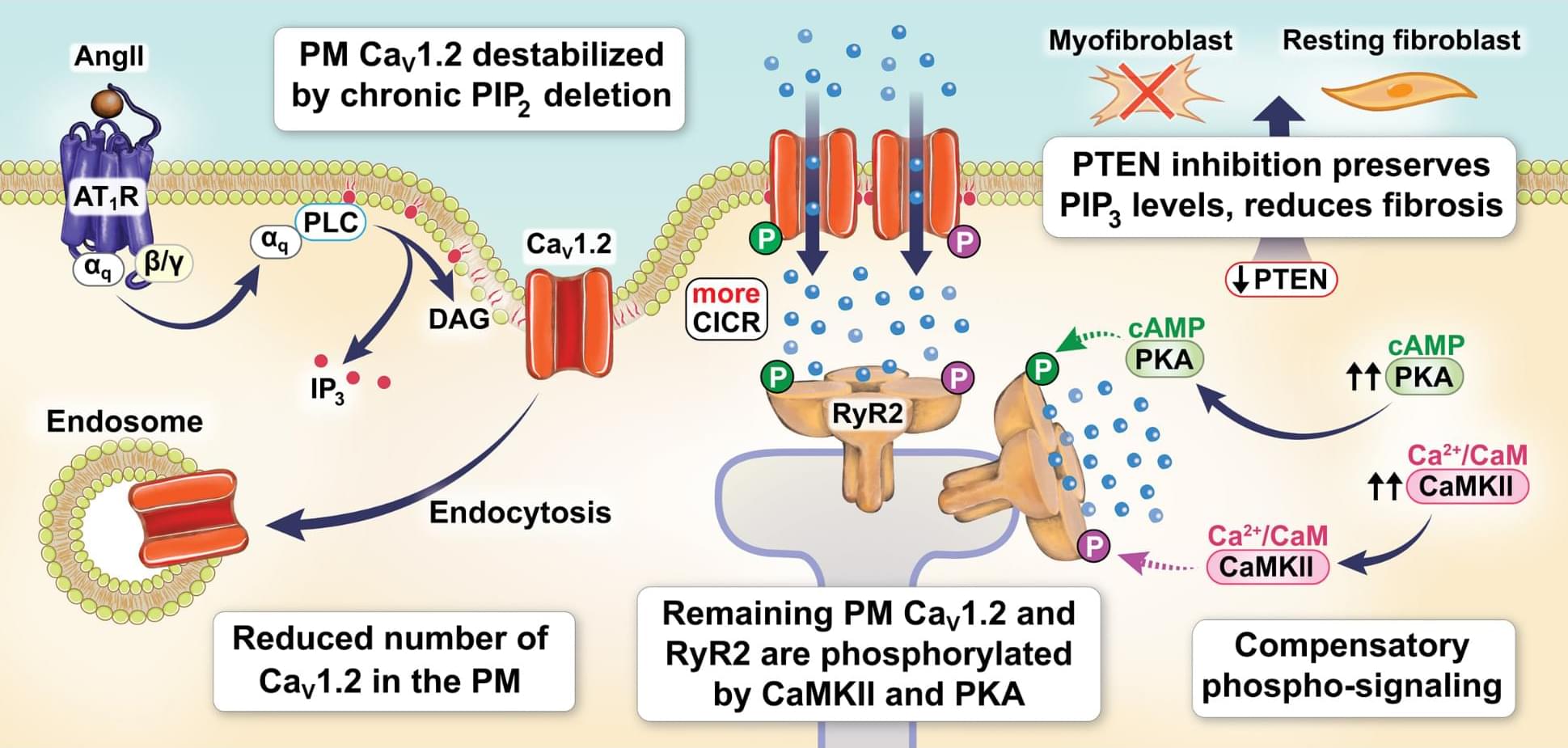

Westhoff and colleagues found that PTEN inhibition reduces cardiac fibrosis caused by angiotensin II (AngII), a hormone that raises blood pressure.


Hepatocellular carcinoma (HCC) diagnosis relies heavily on well‑timed arterial phase MRI, yet single arterial phase scans often miss the optimal late arterial phase, especially with hepatobiliary contrast agents, which are prone to motion artifacts and have narrow timing windows. These limitations can compromise image quality and reduce detection of key features such as arterial phase hyperenhancement.

In a study recently published in Radiology: Imaging Cancer, researchers led by Kai Liu, BS, of Zhongshan Hospital, Fudan University, in Shanghai, compared conventional single phase imaging with an ultrafast, deep learning-based multiphase MRI technique that can rapidly acquire six high-resolution arterial phases in a single breath hold.

In a cohort of 236 participants, the deep learning–based multiphase MRI technique markedly improved late arterial capture, boosted overall image quality and enhanced detection of lesions and HCC for both extracellular and hepatobiliary agents. The method achieved a late arterial capture rate of 98% (vs. 81% to 85% with single phase imaging) and showed strong performance in identifying small tumors.

“These findings support the potential of deep learning-based multiphase arterial MRI to streamline HCC diagnosis,” the authors conclude.

Read the full article, “Clinical Utility of Deep Learning–based Multiple Arterial Phase MRI in Hepatocellular Carcinoma.”

Technology promised simplicity. It delivered complexity.

AI promised resolution. It is delivering acceleration.

The paradox is not a bug. It is the feature. And the question is what we choose to do about it.

This week I published a new essay. It is the argument I have been circling for a decade, finally in one place.

The short version: as AI’s capabilities grow, so do the risks. They are not separate variables. They climb the same curve. A more powerful model can cure more diseases and design more weapons. A smarter agent can book your travel and drain your bank account. Capability is leverage. Leverage is indifferent to ethics.

Every time we raise the ceiling of what AI can do, we raise the floor of what can go wrong.

We still have the how. We are drowning in the what. What we have neglected, almost completely, is the why.

Designing molecules is one of chemistry’s most complex challenges. From life-saving drugs to advanced materials, each compound requires a precise sequence of reactions. Planning these steps demands both technical knowledge and strategic insight, making it a task that often relies on years of experience.

Two problems plague much of modern chemistry. The first is retrosynthesis: Chemists start from a target molecule and work backward to identify simpler building blocks and viable reaction pathways. Retrosynthesis involves countless decisions, from choosing starting materials to determining when to form rings or protect sensitive functional groups. While computers can explore vast “chemical spaces,” they often struggle to capture the strategic reasoning used by human experts.
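The backward search described here can be sketched as a recursive AND-OR search: a molecule is solved if it can be bought, or if some disconnection splits it into precursors that are all solved. Everything in the sketch is hypothetical: the molecule names, the `DISCONNECTIONS` table, and the `PURCHASABLE` set stand in for the reaction templates and building-block catalogs real planners use. It returns the first route it finds, with no notion of strategy, which is precisely the gap the paragraph points to.

```python
from typing import Optional

# Hypothetical building blocks that can be bought.
PURCHASABLE = {"A", "B", "C", "D"}

# Hypothetical disconnections: product -> alternative sets of precursors.
DISCONNECTIONS: dict[str, list[tuple[str, ...]]] = {
    "target": [("X", "Y"), ("Z",)],
    "X": [("A", "B")],
    "Y": [("C", "W")],  # "W" is neither purchasable nor disconnectable
    "Z": [("C", "D")],
}

Route = dict[str, tuple[str, ...]]

def plan(molecule: str, depth: int = 5) -> Optional[Route]:
    """Return one route as {product: precursors}, or None if none is found.

    OR node: a molecule is solved if it is purchasable or if ANY of its
    disconnections works. AND node: a disconnection works only if ALL of
    its precursors are solved. `depth` bounds the search and stops cycles.
    """
    if molecule in PURCHASABLE:
        return {}
    if depth == 0:
        return None
    for precursors in DISCONNECTIONS.get(molecule, []):
        route: Route = {molecule: precursors}
        for precursor in precursors:
            sub_route = plan(precursor, depth - 1)
            if sub_route is None:
                break  # one unsolvable precursor sinks this disconnection
            route.update(sub_route)
        else:
            return route  # every precursor was solved
    return None

found_route = plan("target")
print(found_route)  # {'target': ('Z',), 'Z': ('C', 'D')}
```

The first disconnection of `target` fails only after `X` has been fully solved, because `Y` leads to the dead end `W`; real planners add scoring and pruning to avoid exactly this kind of wasted exploration.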

The second problem is reaction mechanisms. These describe how chemical reactions unfold step by step through the movements of electrons. Mechanistic insight helps scientists predict new reactions, improve efficiency, and reduce costly trial and error. Existing computational methods can generate many possible pathways, but often lack the chemical intuition needed to identify the most plausible ones.
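One simple, admittedly crude way to rank generated pathways is by their rate-limiting step: under a basic kinetic picture, the pathway whose highest barrier is lowest is the most plausible. The sketch below enumerates every path through a small network with entirely hypothetical species (`R`, `I1`, `I2`, `I3`, `P`) and made-up barriers in kcal/mol, then ranks the paths by that criterion. It ignores the relative energies of intermediates, solvent effects, and everything else a real mechanistic analysis weighs.

```python
# Hypothetical elementary steps: (from_species, to_species) -> barrier in kcal/mol.
STEPS: dict[tuple[str, str], float] = {
    ("R", "I1"): 18.0,
    ("R", "I2"): 12.0,
    ("I1", "P"): 10.0,
    ("I2", "I3"): 22.0,
    ("I2", "P"): 25.0,
    ("I3", "P"): 8.0,
}

def enumerate_paths(start: str, goal: str) -> list[list[str]]:
    """All simple paths from start to goal, found by depth-first search."""
    paths: list[list[str]] = []

    def dfs(node: str, path: list[str]) -> None:
        if node == goal:
            paths.append(path)
            return
        for a, b in STEPS:
            if a == node and b not in path:  # skip species already visited
                dfs(b, path + [b])

    dfs(start, [start])
    return paths

def highest_barrier(path: list[str]) -> float:
    """Barrier of the rate-limiting (highest-barrier) step along the path."""
    return max(STEPS[(a, b)] for a, b in zip(path, path[1:]))

ranked = sorted(enumerate_paths("R", "P"), key=highest_barrier)
for path in ranked:
    print(" -> ".join(path), f"(rate-limiting barrier {highest_barrier(path)} kcal/mol)")
```

A greedy choice would take the easiest first step (R to I2, 12 kcal/mol), yet both routes through I2 hit a higher bottleneck (22 and 25 kcal/mol) than the route through I1 (18 kcal/mol); ranking whole pathways rather than single steps avoids that trap.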