Agentic GRC automates workflows, forcing teams to rethink their role beyond operations. Anecdotes explains why the biggest challenge is shifting from execution to risk leadership.

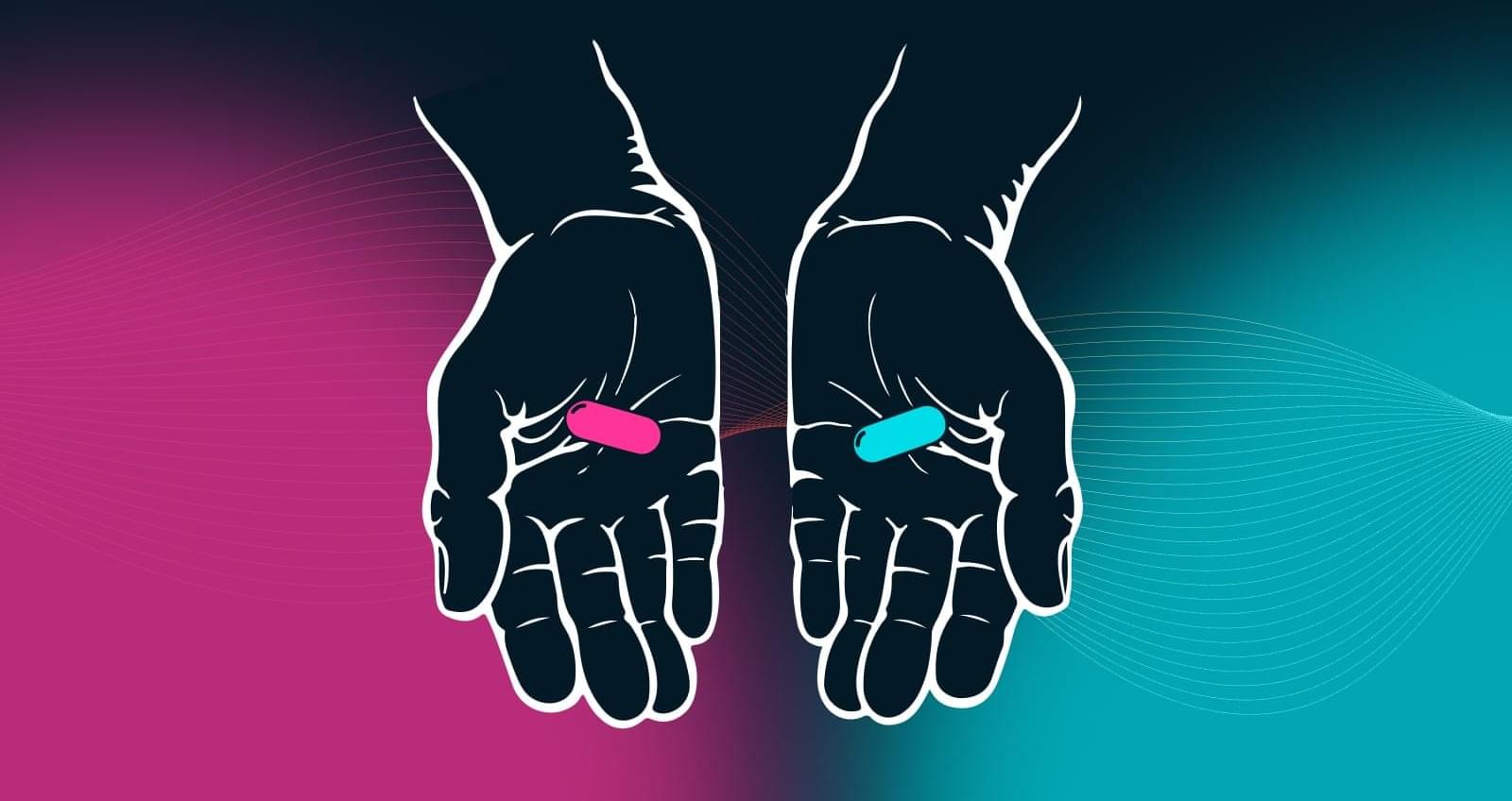

Massachusetts Institute of Technology (MIT) engineers have developed an ultrasound wristband that precisely tracks hand movements in real time for robotics and virtual reality control.

The next time you’re scrolling your phone, take a moment to appreciate the feat: The seemingly mundane act is possible thanks to the coordination of 34 muscles, 27 joints, and over 100 tendons and ligaments in your hand. Indeed, our hands are the most nimble parts of our bodies. Mimicking their many nuanced gestures has been a longstanding challenge in robotics and virtual reality.

Now, MIT engineers have designed an ultrasound wristband that precisely tracks a wearer’s hand movements in real time. The wristband produces ultrasound images of the wrist’s muscles, tendons, and ligaments as the hand moves, and is paired with an artificial intelligence algorithm that continuously translates the images into the corresponding positions of the five fingers and palm.

Northwestern University engineers have developed the first modular robots with athletic intelligence. They can be combined and recombined in the wild, recover from injury and keep moving no matter what’s thrown at them.

Called “legged metamachines,” the creations are made from autonomous, Lego-like modules that snap together into an endless number of configurations. Each module by itself is a complete robot with its own motor, battery and computer. Alone, a module can roll, turn and jump. But the real agility and indestructibility emerge when the modules combine.

The study was published in the Proceedings of the National Academy of Sciences.

Specifically, the XSS vulnerability enables the execution of arbitrary JavaScript code in the context of “a-cdn.claude[.]ai.” A threat actor could leverage this behavior to inject JavaScript that issues a prompt to the Claude extension.

The extension, for its part, allows the prompt to land in Claude’s sidebar as if it’s a legitimate user request simply because it comes from an allow-listed domain.

“The attacker’s page embeds the vulnerable Arkose component in a hidden iframe, sends the XSS payload via postMessage, and the injected script fires the prompt to the extension,” Yomtov explained. “The victim sees nothing.”
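The weakness described above is that trust is decided by origin alone. A minimal sketch of that kind of check follows, in Python for illustration only. The allow-list contents and the names `ALLOWED_HOSTS`, `is_allowed_origin` and `handle_message` are hypothetical and are not taken from the extension's code.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list; the extension's real list is not given in the report.
ALLOWED_HOSTS = {"claude.ai", "a-cdn.claude.ai"}


def is_allowed_origin(origin: str) -> bool:
    """Return True when the origin is HTTPS and its host is allow-listed."""
    parts = urlsplit(origin)
    return parts.scheme == "https" and parts.hostname in ALLOWED_HOSTS


def handle_message(origin: str, prompt: str) -> str:
    """Accept or reject a prompt based only on where it came from."""
    if not is_allowed_origin(origin):
        return "rejected"
    # An origin check cannot tell first-party code from a script injected
    # through XSS: both run under the same allow-listed origin.
    return f"accepted: {prompt}"
```

Under these assumptions, a prompt sent by injected script on an allow-listed host passes exactly the same check as a legitimate one, which is why an XSS flaw on any trusted subdomain is enough.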

Threat actors are targeting TikTok for Business accounts in a phishing campaign that prevents security bots from analyzing malicious pages.

TikTok Business accounts may be targeted due to their high potential for abuse in malvertising campaigns, ad fraud, and the distribution of malicious content.

Browser threat detection and response company Push Security links the campaign to one documented last year, which targeted Google Ad Manager accounts.

WhatsApp is rolling out multiple features designed to make the app easier to use, including AI-powered message replies and photo retouching, support for two accounts on iOS, and chat history transfer between iOS and Android devices.

Meta said that after the new updates, users will be able to touch up images in the chat before sharing them with contacts or in groups using Meta AI.

The Writing Help feature enables users to quickly draft a response based on the active conversation, with Meta saying it uses Private Processing to ensure messages are completely private.

A breakthrough holographic system uses AI and multidimensional light to store vastly more data in less space.

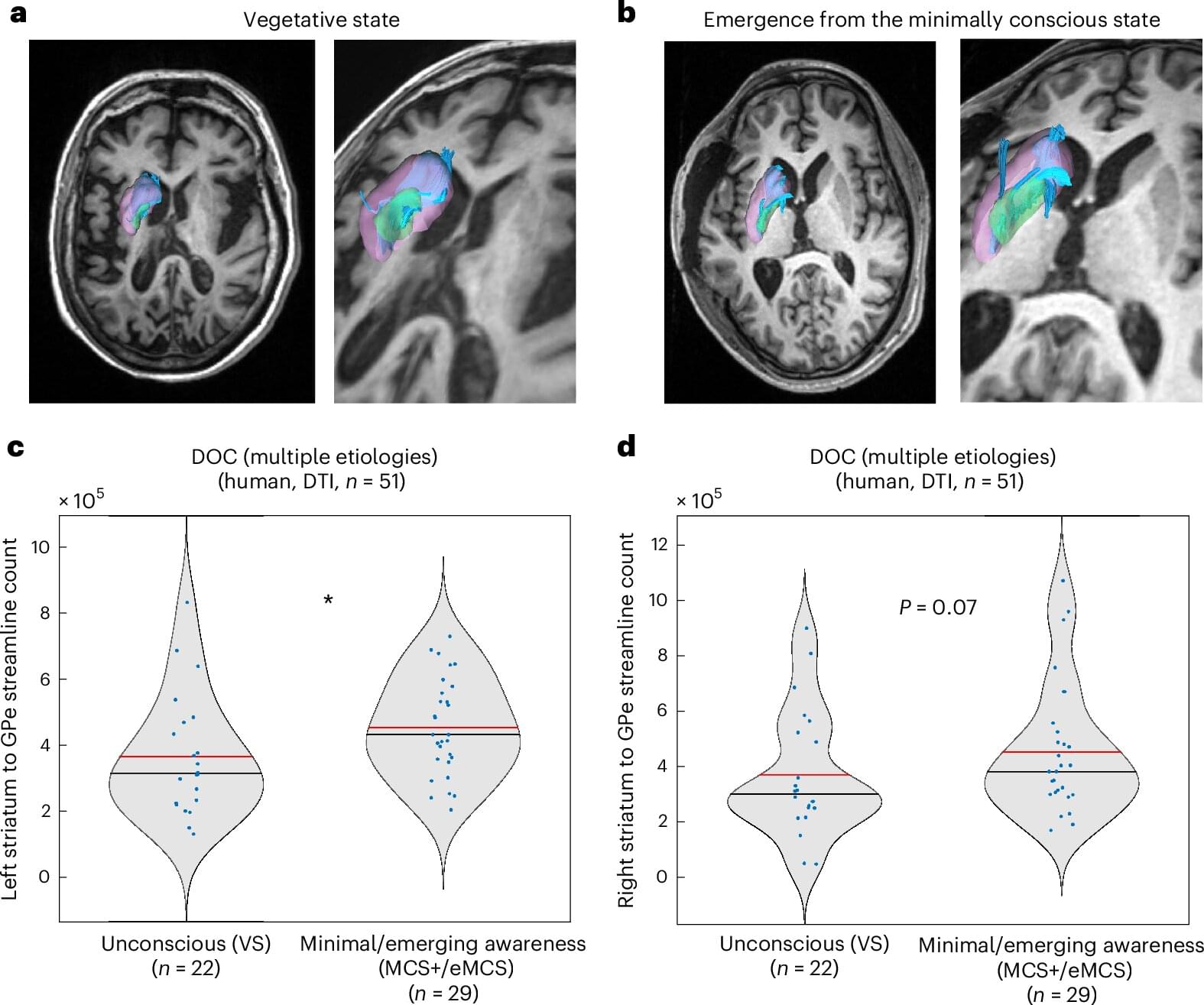

Consciousness, and the ways in which it can become impaired after certain brain injuries, are not well understood, making disorders of consciousness (DOC), like coma, vegetative states and minimally conscious states, difficult to treat. But a new study, published in Nature Neuroscience, indicates that AI might be able to help researchers gain some traction with this problem. The research team involved in the new study has developed an adversarial AI framework to help them determine what exactly is going on in states of reduced consciousness and how to approach a solution.

To better understand the mechanisms behind impaired consciousness, the researchers developed two types of AI models and had them play a kind of game where one model determined different levels of consciousness based on EEGs simulated to look like those of real unconscious and conscious brains. The AI agents guessing consciousness levels, called deep convolutional neural networks (DCNNs), were first trained on 680,000 ten-second recordings of brain activity from conscious and unconscious humans, monkeys, bats and rats to detect which neural signals related to differing levels of consciousness. The AI showing EEG data was a biologically plausible simulation of the human brain.

“To decode consciousness from these signals, we trained three separate DCNNs, each specialized for a different brain region, to output a continuous score from 0 (unconscious) to 1 (fully conscious): a cortical consciousness detector (ctx-DCNN), a thalamic consciousness detector (th-DCNN) and a pallidal consciousness detector (pal-DCNN). The ctx-DCNN was trained on continuous consciousness levels derived from clinical scales (GCS and CRS-R), enabling it to recognize graded states of consciousness,” the study authors explain.
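To make the idea of a continuous 0-to-1 target concrete, here is a small sketch of rescaling a clinical score onto that interval. The linear min-max mapping is an assumption made for illustration; the excerpt does not say how the authors derived their labels. The score ranges used are the standard ones for the Glasgow Coma Scale (3 to 15) and the Coma Recovery Scale-Revised (0 to 23), and the function name `scale_to_unit` is hypothetical.

```python
# Standard total-score ranges for the two clinical scales.
GCS_RANGE = (3, 15)
CRSR_RANGE = (0, 23)


def scale_to_unit(score: float, lowest: float, highest: float) -> float:
    """Linearly rescale a clinical score to the interval [0, 1].

    This min-max mapping is an illustrative assumption, not the study's method.
    """
    if not lowest <= score <= highest:
        raise ValueError(f"score {score} is outside {lowest}..{highest}")
    return (score - lowest) / (highest - lowest)


# A mid-range GCS of 9 lands halfway between the two extremes.
mid_gcs_label = scale_to_unit(9, *GCS_RANGE)
```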

‘The present results strengthen the possibility of a paradigm shift in neuroscience… moving from the fragmented mapping of isolated cognitive tasks toward the use of unified, predictive foundation models of brain and cognitive functions. By aligning the representations of AI systems to those of the human brain, we demonstrate that a single architecture can integrate a vast range of fMRI responses across hundreds of individuals, extending the framework that led the 2025 Algonauts competition. The observed log-linear scaling of encoding accuracy mirroring power laws in both artificial intelligence and neuroscience suggests that the ceiling for predicting human brain activity is yet to be reached.’
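One way to read "log-linear scaling" is that accuracy grows by a roughly constant amount each time the amount of training data is multiplied by ten. The sketch below fits that relationship with ordinary least squares. It assumes the quantity on the x-axis is hours of training data, which the quote does not state, and the numbers are made up for illustration rather than taken from the study. The function name `fit_log_linear` is hypothetical.

```python
import math


def fit_log_linear(sizes, scores):
    """Least-squares fit of score = a + b * log10(size); returns (a, b)."""
    xs = [math.log10(n) for n in sizes]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(scores) / len(scores)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, scores))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Made-up illustrative numbers, not results from the study.
hours = [10, 100, 1000]
accuracy = [0.10, 0.15, 0.20]
intercept, slope = fit_log_linear(hours, accuracy)
```

A positive slope that shows no sign of flattening at the largest data size is what would support the claim that the ceiling has not yet been reached.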

Cognitive neuroscience is fragmented into specialized models, each tailored to specific experimental paradigms, hence preventing a unified model of cognition in the human brain. Here, we introduce TRIBE v2, a tri-modal (video, audio and language) foundation model capable of predicting human brain activity in a variety of naturalistic and experimental conditions. Leveraging a unified dataset of over 1,000 hours of fMRI across 720 subjects, we demonstrate that our model accurately predicts high-resolution brain responses for novel stimuli, tasks and subjects, superseding traditional linear encoding models, delivering several-fold improvements in accuracy. Critically, TRIBE v2 enables in silico experimentation: tested on seminal visual and neuro-linguistic paradigms, it recovers a variety of results established by decades of empirical research.