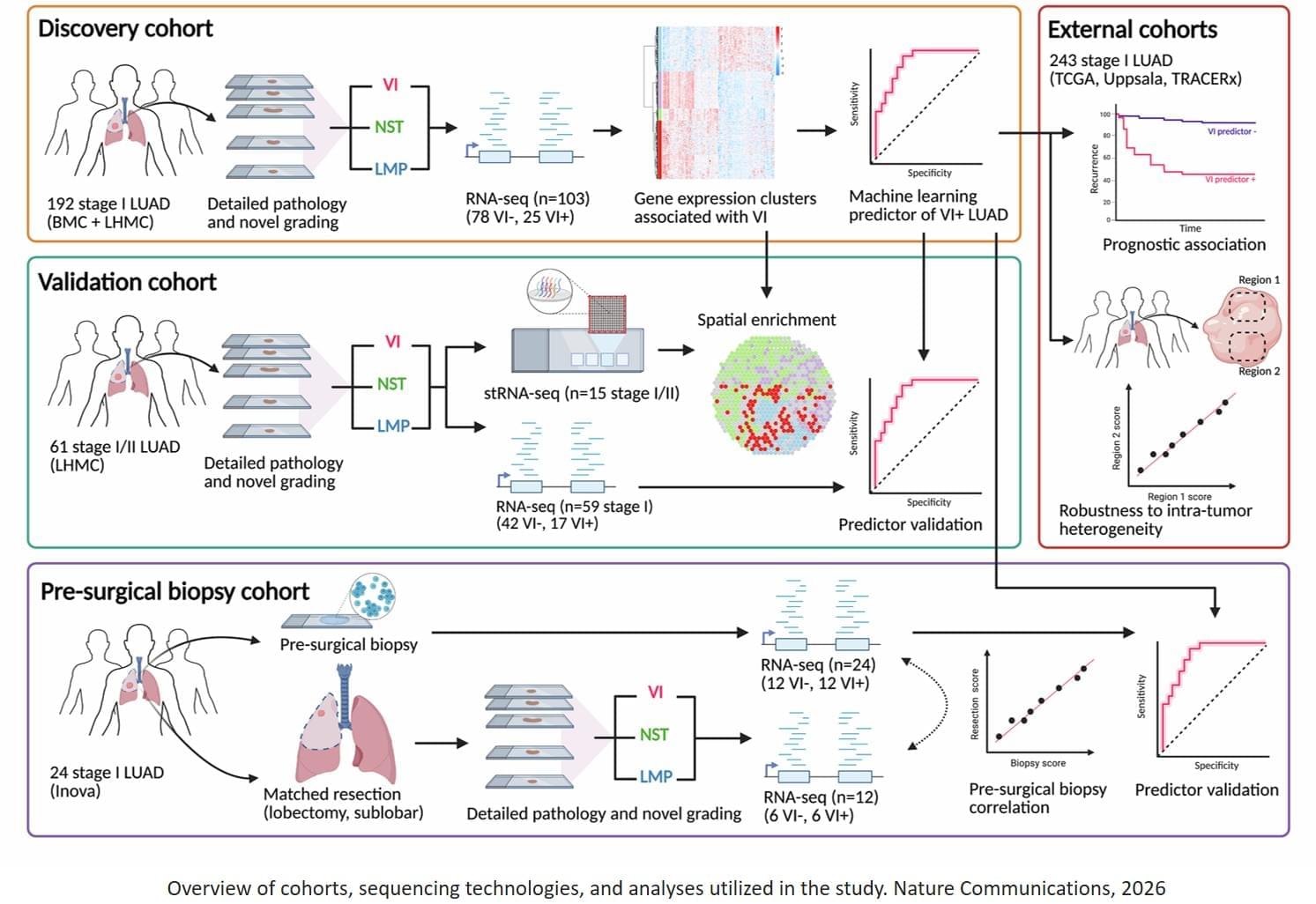

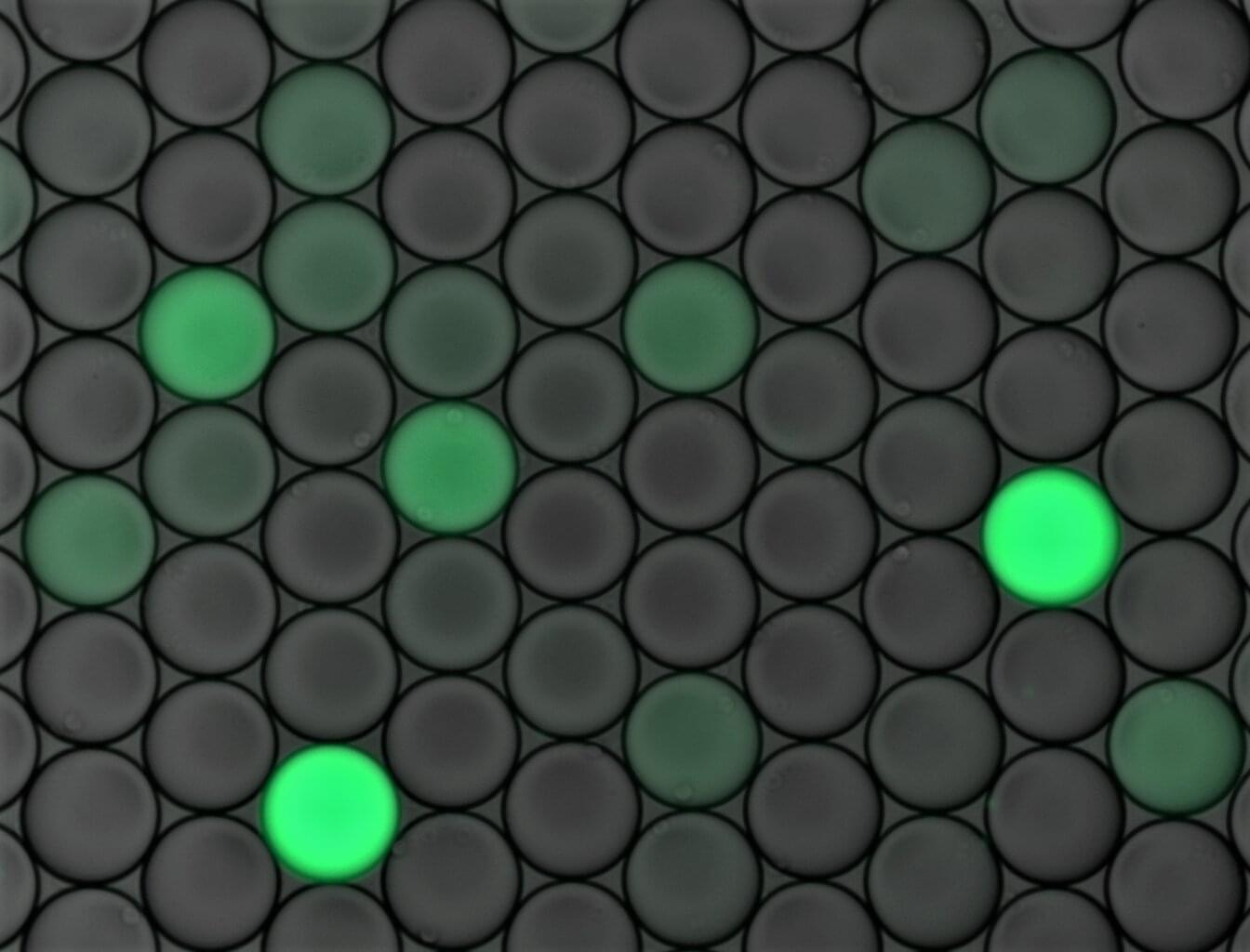

Using gene activity measurements, the researchers found more than 400 genes whose activity differs between tumors with and without vascular invasion and confirmed these patterns in an independent cohort. They then developed and validated a machine-learning predictor of whether vascular invasion is present. The predictor also performed well at predicting tumor recurrence in other datasets and, crucially, accurately identified vascular invasion when applied to tiny biopsy samples taken before surgery.

The researchers believe this predictor will play an important role in picking a treatment matched to how aggressive the tumor is.

According to the researchers, there is growing evidence that vascular invasion is associated with poor prognosis in other kinds of cancer, such as breast, liver and gastric cancer. The researchers need to determine if the same genes that are active in vascular-invasive lung adenocarcinoma are altered in other cancers.

Lung cancer is the leading cause of death from cancer. It kills more people in the U.S. than breast, prostate and colon cancer combined. When lung adenocarcinoma, the most common primary lung cancer in the U.S., grows into nearby blood vessels (a process called vascular invasion), the tumor is more likely to recur even after it has been surgically removed. Pathologists can identify areas of vascular invasion post-operatively, but if surgeons could predict beforehand which tumors were likely to have vascular invasion, they could perform more extensive surgery to lower the risk of recurrence.

Researchers believe they have, for the first time, identified genes whose activity changes in lung tumors with vascular invasion. They also discovered that they could detect these changes in small pieces of the tumor collected during a presurgical biopsy procedure.

“We think this is a potential game changer for patients with early-stage lung cancer,” says the corresponding author. “Our findings suggest a simple biopsy-based test could help doctors better identify patients at higher risk of recurrence and guide treatment decisions.”