A double whammy for systematic education: the need for knowledge workers will decrease at the same time as AI fundamentally questions the need for, and uses of, “Knowledge Gatekeepers” such as establishment academia, lawyers, and even actors, the chatterbot classes.

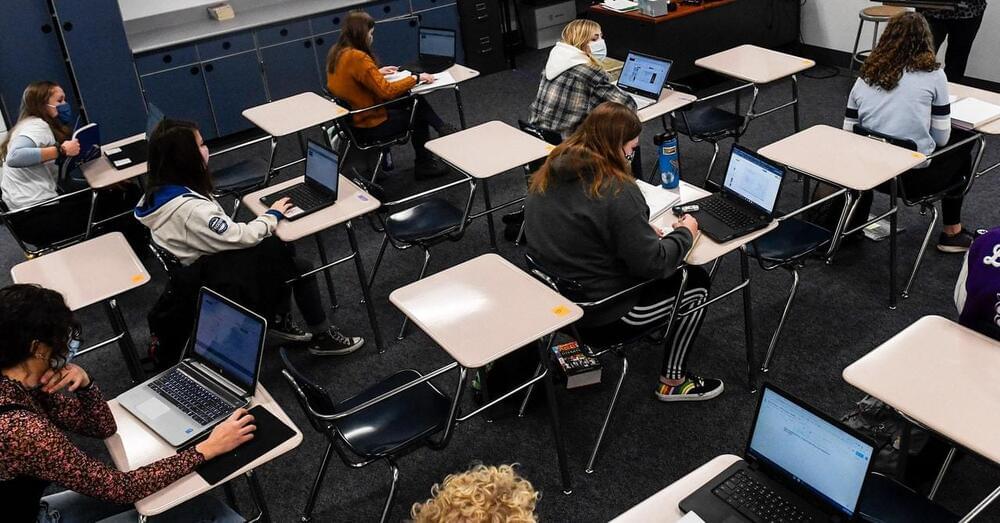

When high school English teacher Kelly Gibson first encountered ChatGPT in December, the existential anxiety kicked in fast. While the internet delighted in the chatbot’s superficially sophisticated answers to users’ prompts, many educators were less amused. If anyone could ask ChatGPT to “write 300 words on what the green light symbolizes in The Great Gatsby,” what would stop students from feeding their homework to the bot? Speculation swirled about a new era of rampant cheating and even a death knell for essays, or education itself. “I thought, ‘Oh my god, this is literally what I teach,’” Gibson says.

But amid the panic, some enterprising teachers see ChatGPT as an opportunity to redesign what learning looks like—and what they invent could shape the future of the classroom. Gibson is one of them. After her initial alarm subsided, she spent her winter vacation tinkering with ChatGPT and figuring out ways to incorporate it into her lessons. She might ask kids to generate text using the bot and then edit it themselves to find the chatbot’s errors or improve upon its writing style. Gibson, who has been teaching for 25 years, likened it to more familiar tech tools that enhance, not replace, learning and critical thinking. “I don’t know how to do it well yet, but I want AI chatbots to become like calculators for writing,” she says.

Gibson’s view of ChatGPT as a teaching tool, not the perfect cheat, brings up a crucial point: ChatGPT is not intelligent in the way people are, despite its ability to spew humanlike text. It is a statistical machine that can sometimes regurgitate or create falsehoods and often needs guidance and further edits to get things right.