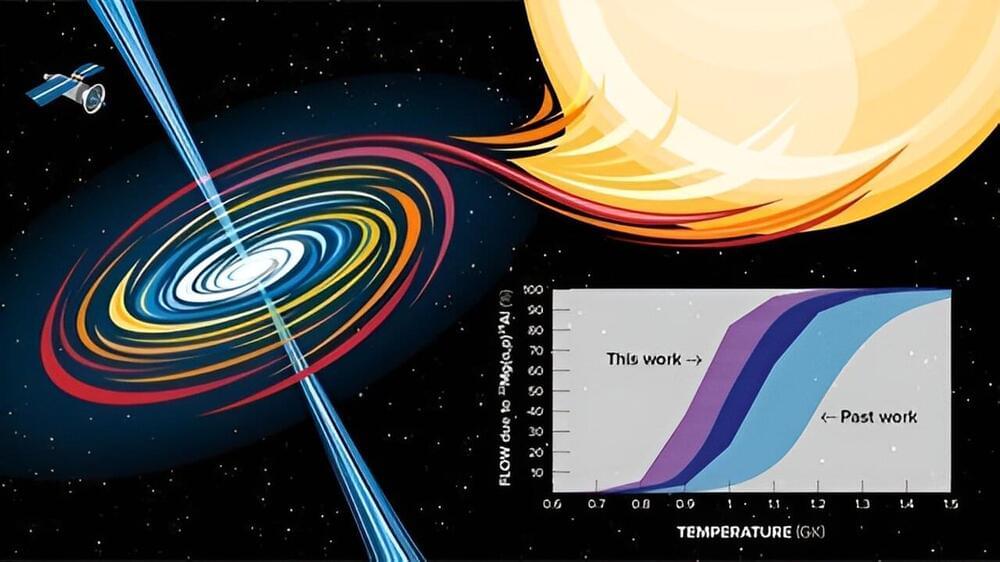

Astronomy Magazine — Project Lyra is the cover feature!

A big thank you to Maciej Rebisz for the images and the entire Project Lyra team for the research work!

Project Lyra develops concepts for reaching interstellar objects such as 1I/‘Oumuamua and 2I/Borisov with a spacecraft based on near-term technologies. But what is an interstellar object?

On October 19, 2017, the University of Hawaii’s Pan-STARRS 1 telescope on Haleakala discovered a fast-moving object near the Earth, initially named A/2017 U1. It is now designated 1I/‘Oumuamua. The object was found not to be bound to the solar system. It has a velocity at infinity of ~26 km/s and an incoming radiant (direction of motion) near the solar apex in the constellation Lyra. Because no tail was observed while it was close to the Sun, the object appears to be an asteroid rather than a comet. More recent observations from the Palomar Observatory indicate that the object is reddish, similar to Kuiper belt objects. This is a sign of space weathering.
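To make "not bound" concrete, here is a minimal sketch using the standard two-body energy relation v² = v∞² + 2μ/r. The constants for the Sun's gravitational parameter and the astronomical unit, and the function names, are assumptions chosen for this illustration and do not come from the Project Lyra work itself. The point is that any object with a positive velocity at infinity is faster than the local solar escape speed at every distance from the Sun.

```python
import math

# Assumed constants for this illustration (standard reference values).
MU_SUN_KM3_S2 = 1.32712440018e11  # Sun's gravitational parameter GM, km^3/s^2
AU_KM = 1.495978707e8             # one astronomical unit, km


def escape_speed(r_km: float) -> float:
    """Solar escape speed (km/s) at heliocentric distance r_km."""
    return math.sqrt(2.0 * MU_SUN_KM3_S2 / r_km)


def hyperbolic_speed(v_inf_km_s: float, r_km: float) -> float:
    """Heliocentric speed (km/s) at distance r_km for an object whose
    velocity at infinity is v_inf_km_s (two-body approximation)."""
    return math.sqrt(v_inf_km_s**2 + 2.0 * MU_SUN_KM3_S2 / r_km)


V_INF_KM_S = 26.0  # approximate value quoted in the text

v_esc = escape_speed(AU_KM)
v_obj = hyperbolic_speed(V_INF_KM_S, AU_KM)

print(f"escape speed at 1 AU: {v_esc:.1f} km/s")
print(f"object speed at 1 AU: {v_obj:.1f} km/s")
```

Under these assumptions, the object's speed at 1 AU exceeds the escape speed there, which is another way of saying that the Sun cannot capture it.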

When will such an object visit us again? In 2019, a second interstellar object, the comet 2I/Borisov, was discovered. As 1I/‘Oumuamua and 2I/Borisov are the nearest macroscopic samples of interstellar material, the scientific returns from sampling them are hard to overstate. Detailed study of interstellar materials at interstellar distances is likely decades away, even if Breakthrough Initiatives’ Project Starshot, for example, is vigorously pursued. Hence, an interesting question is whether there is a way to exploit this unique opportunity by sending a spacecraft to 1I/‘Oumuamua to make observations at close range.