Learn the basics of neural networks and backpropagation, one of the most important algorithms for the modern world.

Category: information science

Direct Solar Power Prediction from Machine Learning

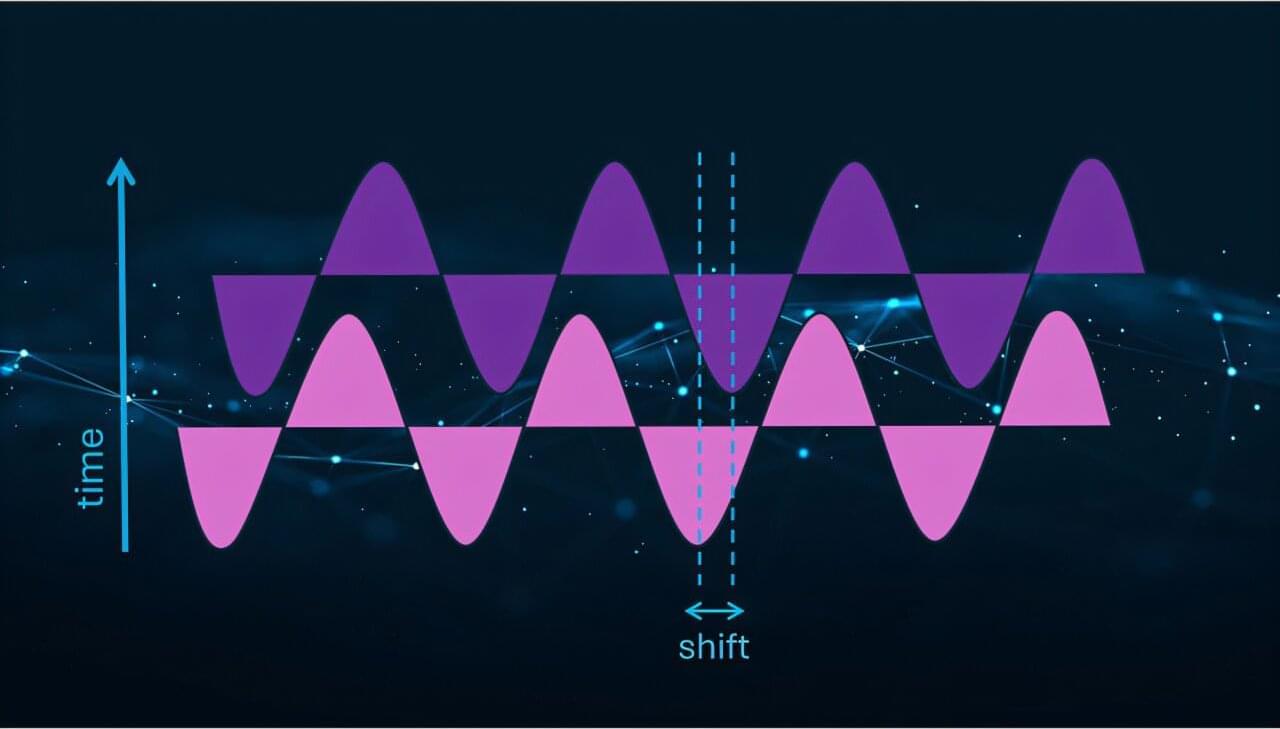

How can machine learning help determine the best times and ways to use solar energy? A recent study published in Advances in Atmospheric Sciences hopes to address this question. A team of researchers from the Karlsruhe Institute of Technology investigated how machine learning algorithms can be used to predict and forecast weather patterns to enable more cost-effective approaches for using solar energy. The study has the potential to help enhance renewable energy technologies by fixing errors that are often found in current weather prediction models, leading to more efficient use of solar power through better predictions of when the weather will make sunlight available for solar energy needs.

For the study, the researchers used a combination of statistical methods and machine learning algorithms to help predict the times of day at which photovoltaic (PV) power generation will achieve maximum production output. Their methods used what’s known as post-processing, which involves correcting weather forecasting errors before that data enters PV models. The corrected inputs change the PV model predictions, and the machine learning algorithms in turn produce more accurate forecasts.

“One of our biggest takeaways was just how important the time of day is,” said Dr. Sebastian Lerch, who is a professor at the Karlsruhe Institute of Technology and a co-author on the study. “We saw major improvements when we trained separate models for each hour of the day or fed time directly into the algorithms.”
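The point about time of day can be illustrated with a minimal sketch. This is not the study's method: it assumes a toy linear correction, fully synthetic data, and a made-up hour-dependent forecast bias, and all names (`fit_per_hour`, `predict_per_hour`, `hour_bias`) are hypothetical. It compares one global correction with a separate correction fitted for each hour of the day.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumption): 200 days, daylight hours 6..18.
n_days = 200
hours = np.tile(np.arange(6, 19), n_days)
clear_sky = 800.0 * np.sin(np.pi * (hours - 5) / 14.0)  # toy diurnal curve
cloud = rng.uniform(0.3, 1.0, size=hours.size)          # toy cloud attenuation
observed = clear_sky * cloud

# Raw forecast = truth + an arbitrary hour-dependent bias + small noise.
hour_bias = 60.0 * np.cos(hours)
forecast = observed + hour_bias + rng.normal(0.0, 5.0, size=hours.size)

# Chronological split: first 150 days for training, last 50 for testing.
split = 150 * 13
train, test = slice(0, split), slice(split, None)


def rmse(pred, target):
    """Root-mean-square error between two arrays."""
    return float(np.sqrt(np.mean((pred - target) ** 2)))


def fit_per_hour(hours, forecast, observed):
    """Fit one linear correction (slope, intercept) per hour of day."""
    models = {}
    for h in np.unique(hours):
        mask = hours == h
        slope, intercept = np.polyfit(forecast[mask], observed[mask], deg=1)
        models[int(h)] = (slope, intercept)
    return models


def predict_per_hour(models, hours, forecast):
    """Apply each hour's correction to the forecasts made for that hour."""
    corrected = np.empty(forecast.shape, dtype=float)
    for h, (slope, intercept) in models.items():
        mask = hours == h
        corrected[mask] = slope * forecast[mask] + intercept
    return corrected


# Baseline: a single correction shared by all hours.
g_slope, g_intercept = np.polyfit(forecast[train], observed[train], deg=1)
rmse_global = rmse(g_slope * forecast[test] + g_intercept, observed[test])

# Alternative: separate corrections for each hour of the day.
models = fit_per_hour(hours[train], forecast[train], observed[train])
rmse_hourly = rmse(
    predict_per_hour(models, hours[test], forecast[test]), observed[test]
)

print(f"global correction RMSE:   {rmse_global:.1f}")
print(f"per-hour correction RMSE: {rmse_hourly:.1f}")
```

In this toy setup the per-hour corrections can absorb a bias that changes over the day, which a single shared correction cannot. Feeding the hour into one model as an extra input feature would be the other option mentioned in the quote.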

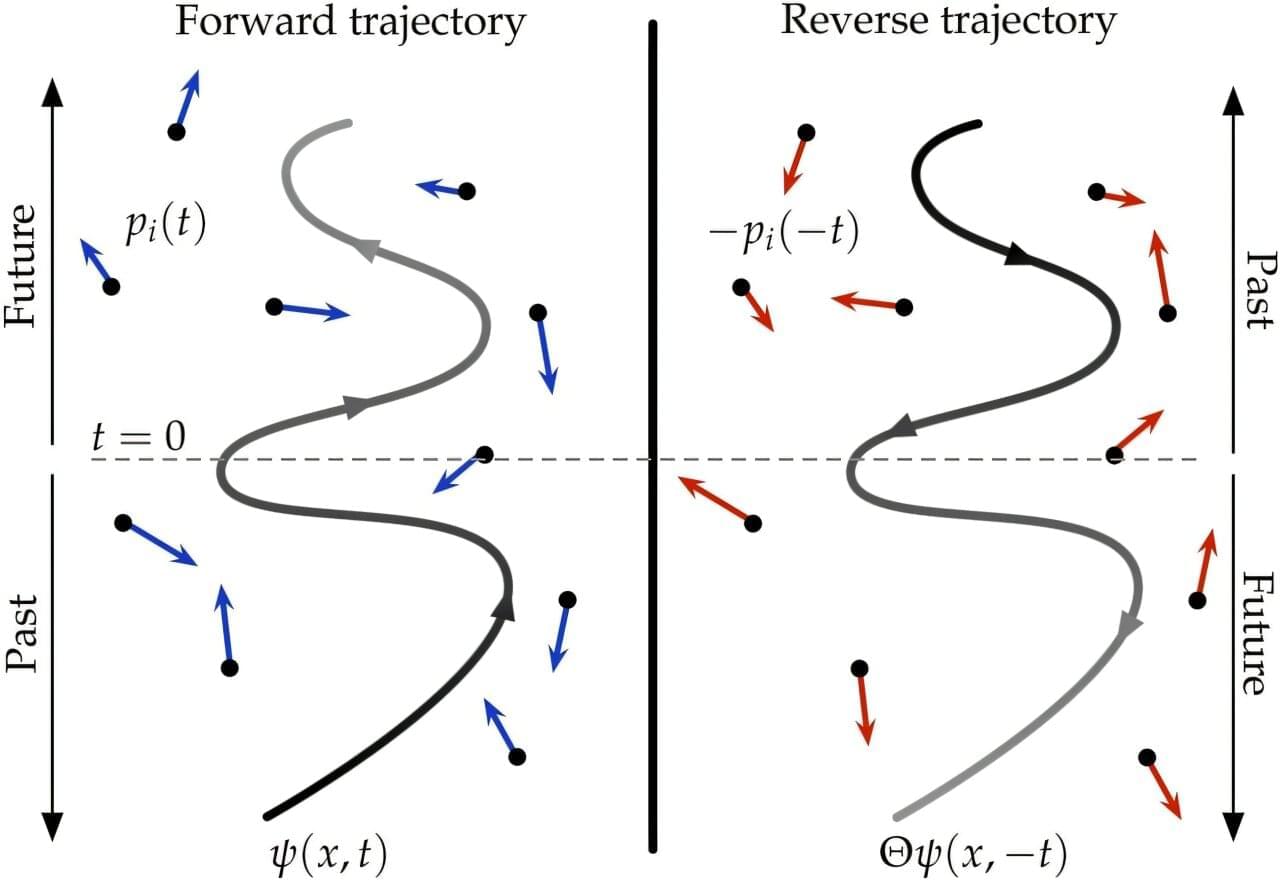

Physicists uncover evidence of two arrows of time emerging from the quantum realm

What if time is not as fixed as we thought? Imagine that instead of flowing in one direction—from past to future—time could flow forward or backwards due to processes taking place at the quantum level. This is the thought-provoking discovery made by researchers at the University of Surrey, as a new study reveals that opposing arrows of time can theoretically emerge from certain quantum systems.

For centuries, scientists have puzzled over the arrow of time—the idea that time flows irreversibly from past to future. While this seems obvious in our experienced reality, the underlying laws of physics do not inherently favor a single direction. Whether time moves forward or backwards, the equations remain the same.

Dr. Andrea Rocco, Associate Professor in Physics and Mathematical Biology at the University of Surrey and lead author of the study, said, “One way to explain this is when you look at a process like spilled milk spreading across a table, it’s clear that time is moving forward. But if you were to play that in reverse, like a movie, you’d immediately know something was wrong: it would be hard to believe milk could just gather back into a glass.”

AI program plays the long game to solve decades-old math problems

A game of chess requires its players to think several moves ahead, a skill that computer programs have mastered over the years. Back in 1996, an IBM supercomputer famously beat the then world chess champion Garry Kasparov. Later, in 2017, an artificial intelligence (AI) program developed by Google DeepMind, called AlphaZero, triumphed over the best computerized chess engines of the time after training itself to play the game in a matter of hours.

More recently, some mathematicians have begun to actively pursue the question of whether AI programs can also help in cracking some of the world’s toughest math problems. But, whereas an average game of chess lasts about 30 to 40 moves, these research-level math problems require solutions that take a million or more steps, or moves.

In a paper appearing on the arXiv preprint server, a team led by Caltech’s Sergei Gukov, the John D. MacArthur Professor of Theoretical Physics and Mathematics, describes developing a new type of machine-learning algorithm that can solve math problems requiring extremely long sequences of steps. The team used their new algorithm to solve families of problems related to an overarching decades-old math problem called the Andrews–Curtis conjecture. In essence, the algorithm can think farther ahead than even advanced programs like AlphaZero.

IEEE CIS Webinar: Quantum Computational Intelligence

Quantum computing is an alternative computing paradigm that exploits the principles of quantum mechanics to enable intrinsic and massive parallelism in computation. This potential quantum advantage could have significant implications for the design of future computational intelligence systems, where the increasing availability of data will necessitate ever-increasing computational power. However, in the current NISQ (Noisy Intermediate-Scale Quantum) era, quantum computers face limitations in qubit quality, coherence, and gate fidelity. Computational intelligence can play a crucial role in mitigating these limitations by enhancing error correction, guiding quantum circuit design, and developing hybrid classical-quantum algorithms that maximize the performance of NISQ devices. This webinar aims to explore the intersection of quantum computing and computational intelligence, focusing on efficient strategies for using NISQ-era devices in the design of quantum-based computational intelligence systems.

Speaker Biography:

Prof. Giovanni Acampora is a Professor of Artificial Intelligence and Quantum Computing at the Department of Physics “Ettore Pancini,” University of Naples Federico II, Italy. He earned his M.Sc. (cum laude) and Ph.D. in Computer Science from the University of Salerno. His research focuses on computational intelligence and quantum computing. He is Chair of the IEEE-SA 1855 Working Group and Founder and Editor-in-Chief of Quantum Machine Intelligence. Acampora has received multiple awards, including the IEEE-SA Emerging Technology Award, the IBM Quantum Experience Award, and the Fujitsu Quantum Challenge Award, for his contributions to computational intelligence and quantum AI.

Pioneering the Aging Frontier with AI Models

David Furman, an immunologist and data scientist at the Buck Institute for Research on Aging and Stanford University, uses artificial intelligence to parse big data to identify interventions for healthy aging.

David Furman uses computational power, collaborations, and cosmic inspiration to tease apart the role of the immune system in aging.

New algorithm improves how AI can independently learn and uncover patterns in data

KAIST researchers have discovered a molecular switch that can revert cancer cells back to normal by capturing the critical transition state before full cancer development. Using a computational gene network model based on single-cell RNA

Ribonucleic acid (RNA) is a polymeric molecule similar to DNA that is essential in various biological roles in coding, decoding, regulation and expression of genes. Both are nucleic acids, but unlike DNA, RNA is single-stranded. An RNA strand has a backbone made of alternating sugar (ribose) and phosphate groups. Attached to each sugar is one of four bases: adenine (A), uracil (U), cytosine (C), or guanine (G). Different types of RNA exist in the cell: messenger RNA (mRNA), ribosomal RNA (rRNA), and transfer RNA (tRNA).

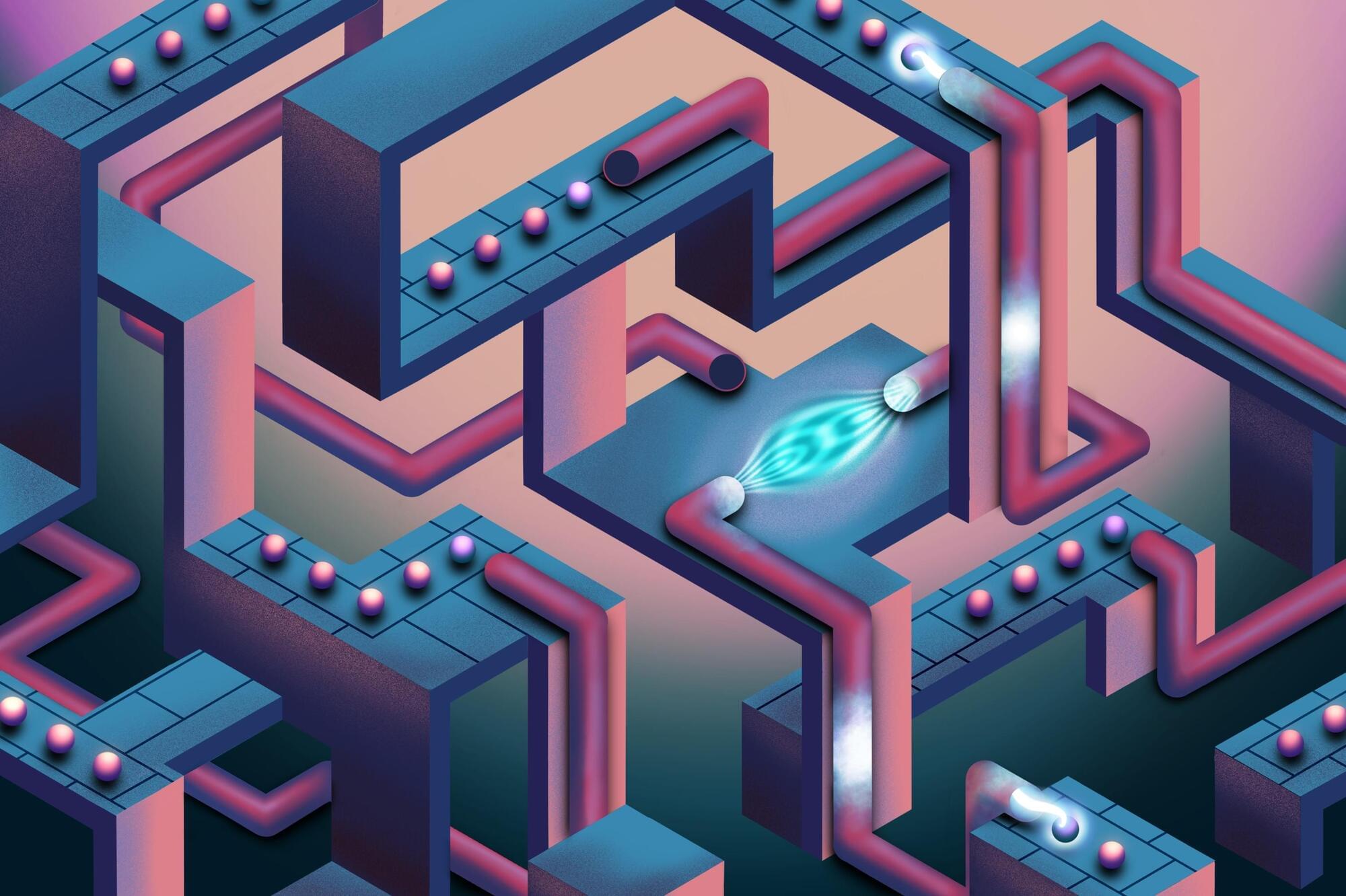

First distributed quantum algorithm brings quantum supercomputers closer

In a milestone that brings quantum computing tangibly closer to large-scale practical use, scientists at Oxford University’s Department of Physics have demonstrated the first instance of distributed quantum computing. Using a photonic network interface, they successfully linked two separate quantum processors to form a single, fully connected quantum computer, paving the way to tackling computational challenges previously out of reach. The results have been published in Nature.

Quantum computers successfully model particle scattering

Scattering takes place across the universe at large and minuscule scales. Billiard balls clank off each other in bars, the nuclei of atoms collide to power the stars and create heavy elements, and even sound waves deviate from their original trajectory when they hit particles in the air.

Understanding such scattering can lead to discoveries about the forces that govern the universe. In a recent publication in Physical Review C, researchers from Lawrence Livermore National Laboratory (LLNL), the InQubator for Quantum Simulations and the University of Trento developed an algorithm for a quantum computer that accurately simulates scattering.

“Scattering experiments help us probe fundamental particles and their interactions,” said LLNL scientist Sofia Quaglioni. “The scattering of particles in matter [materials, atoms, molecules, nuclei] helps us understand how that matter is organized at a microscopic level.”

CERN Physicists Use AI To Understand the ‘God Particle’

To identify signs of particles like the Higgs boson, CERN researchers work with mountains of data generated by LHC collisions.

Hunting for evidence of an object whose behavior is predicted by existing theories is one thing. But now that the elusive boson has been successfully observed, identifying new and unexpected particles and interactions is an entirely different matter.

To speed up their analysis, physicists feed data from the billions of collisions that occur in LHC experiments into machine learning algorithms. These models are then trained to identify anomalous patterns.
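As a rough illustration of what "trained to identify anomalous patterns" can mean, here is a minimal sketch under stated assumptions. It is not CERN's pipeline: the event features are synthetic and hypothetical, and a simple Mahalanobis-distance score stands in for whatever models the physicists actually use. The idea is to learn what ordinary "background" events look like, then flag events that sit far from that learned distribution.

```python
import numpy as np

rng = np.random.default_rng(1)


def fit_background(X):
    """Estimate the mean and inverse covariance of background events."""
    mean = X.mean(axis=0)
    cov = np.cov(X, rowvar=False)
    return mean, np.linalg.inv(cov)


def anomaly_score(X, mean, cov_inv):
    """Squared Mahalanobis distance of each event from the background."""
    d = X - mean
    return np.einsum("ij,jk,ik->i", d, cov_inv, d)


# Hypothetical training set: 5000 ordinary events with 4 features each.
background = rng.normal(0.0, 1.0, size=(5000, 4))
mean, cov_inv = fit_background(background)

# Threshold chosen so that about 0.1% of background events are flagged.
threshold = np.quantile(anomaly_score(background, mean, cov_inv), 0.999)

# New data: 1000 ordinary events plus 20 injected, clearly unusual ones.
new_background = rng.normal(0.0, 1.0, size=(1000, 4))
unusual = rng.normal(6.0, 0.5, size=(20, 4))
events = np.vstack([new_background, unusual])

flags = anomaly_score(events, mean, cov_inv) > threshold
print("ordinary events flagged:", int(flags[:1000].sum()), "of 1000")
print("unusual events flagged: ", int(flags[1000:].sum()), "of 20")
```

The threshold trades off false alarms against missed signals. Here it is set from the training scores alone, so no labeled examples of the unusual events are needed, which is the appeal of anomaly detection when the thing being searched for is not known in advance.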