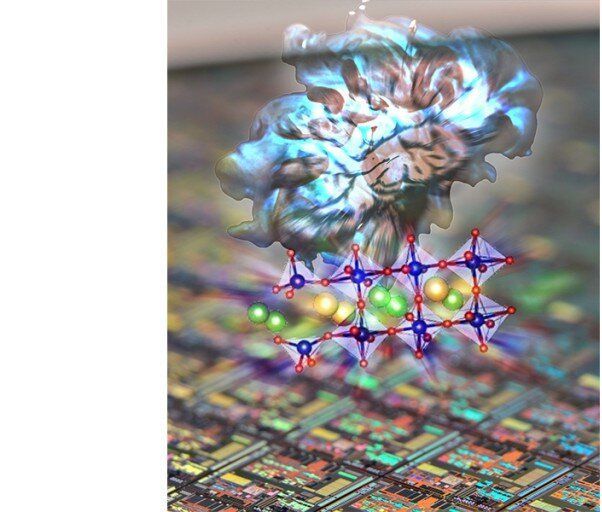

Nanographene is a material that could radically improve solar cells, fuel cells, LEDs and more. Typically, the synthesis of this material has been imprecise and difficult to control. For the first time, researchers have discovered a simple way to gain precise control over the fabrication of nanographene. In doing so, they have shed light on the previously unclear chemical processes involved in nanographene production.

Graphene, a one-atom-thick sheet of carbon atoms, could revolutionize future technology. Units of graphene are known as nanographene; these are tailored to specific functions, and as such, their fabrication process is more complicated than that of generic graphene. Nanographene is made by selectively removing hydrogen atoms from organic molecules made of carbon and hydrogen, a process called dehydrogenation.

“Dehydrogenation takes place on a metal surface such as that of silver, gold or copper, which acts as a catalyst, a material that enables or speeds up a reaction,” said Assistant Professor Akitoshi Shiotari from the Department of Advanced Materials Science. “However, this surface is large relative to the target organic molecules. This contributes to the difficulty in crafting specific nanographene formations. We needed a better understanding of the catalytic process and a more precise way to control it.”