Intelligent Machines

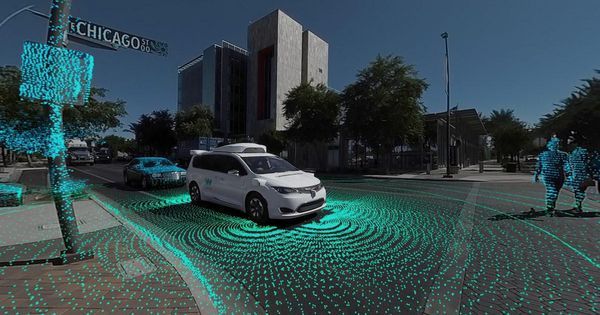

I rode in a car in Las Vegas that was controlled by a guy in Silicon Valley.

A startup thinks autonomous cars will need remote humans as backup drivers. For now, it’s kind of nerve-racking.

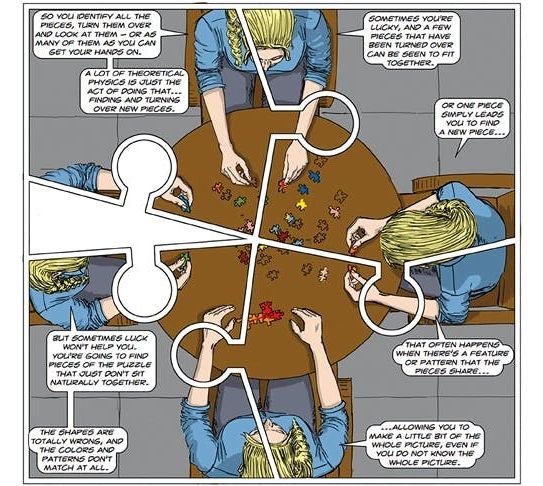

We all know that physics and maths can be pretty weird, but these three books tackle their mind-bending subjects in markedly contrasting ways. Clifford V. Johnson’s The Dialogues is a graphic novel, seeking to visualise cosmic ideas in comic-book style. Darling and Banerjee’s Weird Maths is a miscellany of fun oddities, ranging from chess-playing computers to prime-counting insects. Philip Ball’s Beyond Weird argues that we’ve got quantum mechanics all wrong: it’s not so weird actually, but quite sensible. All three books do a fine job for their respective audiences. Just make sure you know which target group you’re in.

The Dialogues is a sequence of illustrated conversations, often between pairs of youthful and attractive characters, scrupulously diverse in race and gender, who happen to meet in a café, gallery or train carriage, and find themselves talking about physics. Perhaps ‘The Lectures’ would be a better title, since one interlocutor is the expert, while the other is an interested lay person whose role is to feed questions at appropriate intervals.

The author shows himself to be a highly talented graphic artist as well as being a distinguished theoretician, and while the ping-pong chats may be somewhat lacking in narrative drive, they do provide a platform for some admirably lucid explanations of topics such as Maxwell’s equations or Einstein’s cosmological constant. Not the kind of comic book you roll up in your pocket, but a weighty hardback that would grace any coffee table.

Kitty Hawk, an aeronautics firm funded by Alphabet CEO and Google co-founder Larry Page, is inching closer to its plan of creating an Uber for flights: it’s unveiled Cora, a fully electric self-flying air taxi that can cover 100 km (62 miles) on a single charge – and you’ll soon be able to hail one with your phone.

Cora has been in the works for a while now, and it’s just been cleared to begin tests in New Zealand. The goal is for Kitty Hawk to launch a fleet of its flying taxis within the next three years. You can watch a clip of the vehicle in action here.

Powered by batteries and driven by a dozen rotors, the vehicle has a wingspan of 3.6m (12 feet), and can seat two passengers. It can take off vertically, which means it doesn’t need a runway to land on – a parking lot or rooftop will do. Kitty Hawk is already managing operations in New Zealand through Zephyr Airworks, a company it set up in the country in 2016, and is also building an app that will let you hail flights.

But hyperloops are no longer quite so hypothetical. A handful of firms are now competing to develop the necessary technology. And in addition to designing the magnetically levitated pods and testing them on small-scale tracks, the firms are taking preliminary steps to set up hyperloop routes in the U.S. and abroad.

“It’s happening far faster than I would have ever expected, and it’s happening all over the world,” said Dr. David Goldsmith, a transportation researcher at Virginia Tech.

One of the biggest players is Musk himself. His whimsically named Boring Company is planning to dig a hyperloop tunnel that would make it possible to travel from Washington, D.C. to New York City in half an hour (the fastest Amtrak trains make the trip in just under three hours). Meanwhile, a pair of California-based startups, Virgin Hyperloop One and Hyperloop Transportation Technologies, are developing routes in North America, Asia, and Europe.

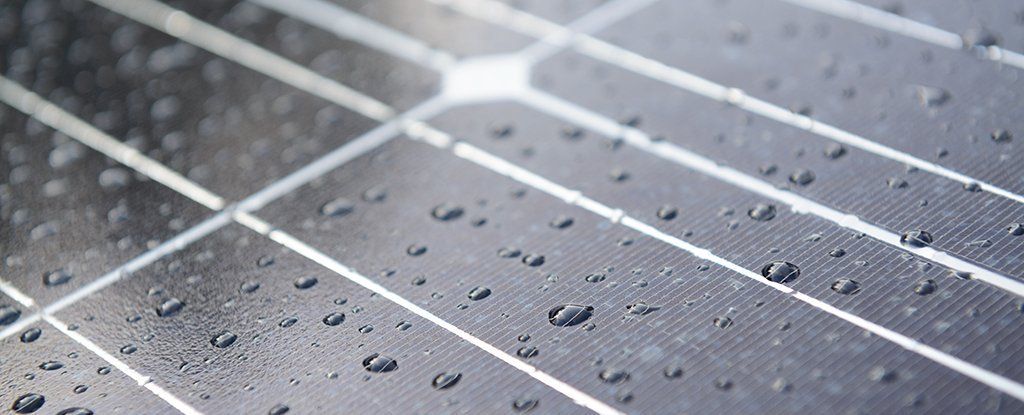

As advanced and efficient as our solar panels are becoming, they’re still pretty much useless when rain clouds arrive overhead. That could soon change thanks to a hybrid cell that can harvest energy from both sunlight and raindrops.

The key part of the system is a triboelectric nanogenerator or TENG, a device which creates electric charge from the friction of two materials rubbing together, as with static electricity – it’s all about the shifting of electrons.

TENGs can draw power from car tyres hitting the road, clothing materials rubbing up against each other, or in this case the rolling motion of raindrops across a solar panel. The end result revealed by scientists from Soochow University in China is a cell that works come rain or shine.

Kind of funny, but probably a sign of what will come in the mid-2020s.

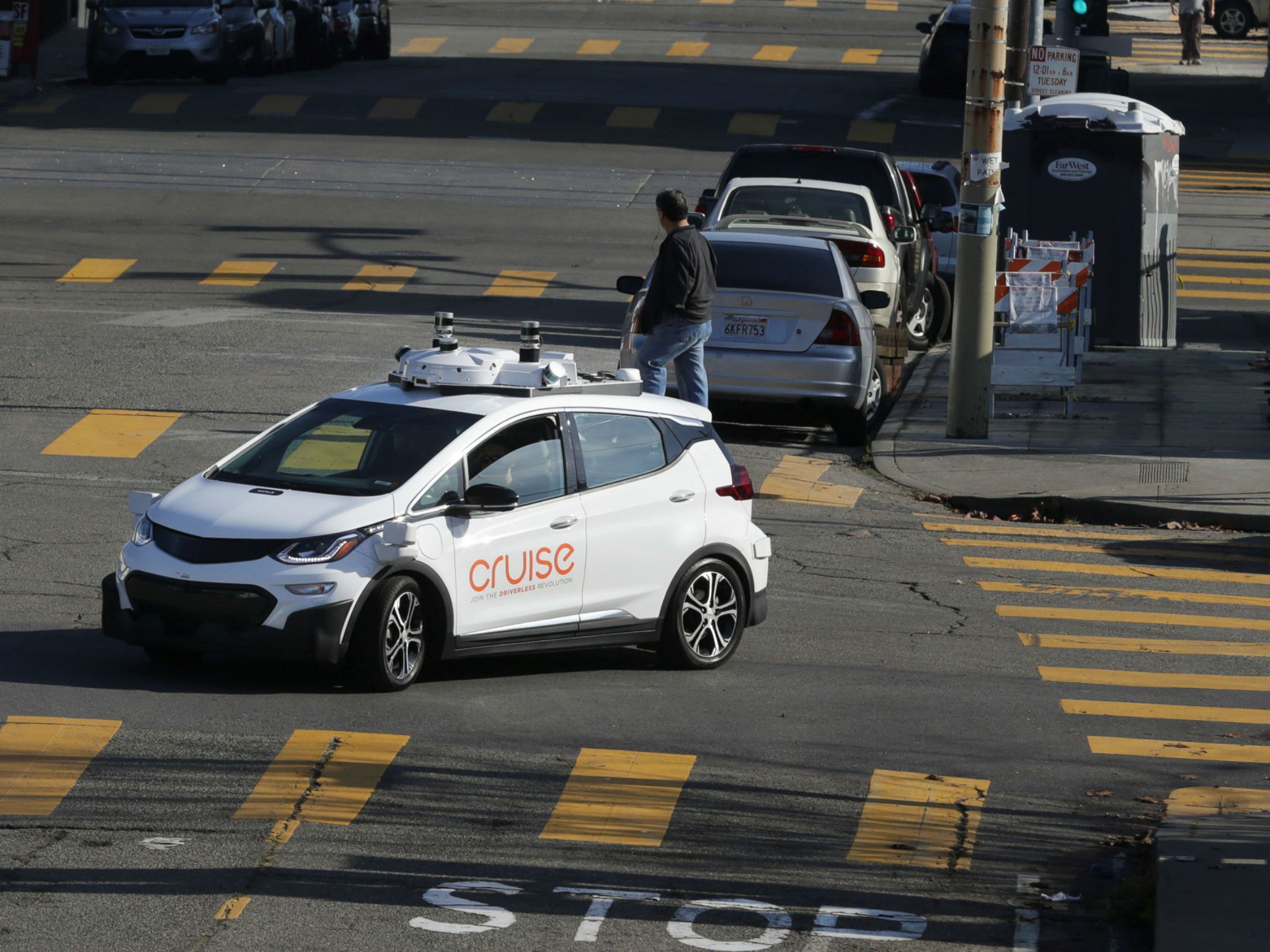

Technology and automotive companies touting self-driving cars as the future of transportation may have some work to convince San Franciscans, who keep attacking the vehicles.

A third of traffic collisions involving autonomous vehicles in 2018 so far featured humans physically confronting the cars, according to data released by California.

In one case, a taxi driver exited his cab and slapped the front passenger window of a General Motors Cruise parked behind him. No one was hurt, though the car sustained a scratch.

Tesla made a significant change to its electric motor strategy with the introduction of the Model 3, switching from an AC induction motor to a permanent magnet motor.

Now, Tesla’s principal motor designer, Konstantinos Laskaris, explains the logic behind the move.

The state of California will now allow driverless cars without safety drivers to be tested on public roads for the first time. Is a world full of driverless cars about to kick into gear?

Via NBC News MACH

Sometimes a buzzword gets so overhyped that it deserves some light-hearted mockery. That seems to be the case with “blockchain.” While it’s true that not every industry can benefit from a distributed-ledger technology, the trucking industry most certainly can. In fact, a new consortium called the Blockchain in Transport Alliance (BiTA) is working to apply blockchain to solve some of the most intransigent problems in trucking.

Trucking is a massive industry that affects virtually every American. Trucks move roughly 70 percent of the nation’s freight by weight, according to the American Trucking Associations. The Association also found that in 2015, gross freight revenues from trucking were $726.4 billion, representing 81.5 percent of the nation’s freight bill.

Companies hailing from each piece of the trucking supply chain have joined BiTA, including UPS, Salesforce, McLeod Software, DAT, Don Hummer Trucking and about 1,000 more applicants. [Full disclosure: Our company, Transfix, is also a member.]