Researchers at the Nanoscience Center and the Faculty of Information Technology at the University of Jyväskylä, Finland, have demonstrated that new distance-based machine learning methods developed at the university can reliably predict the structures and atomic dynamics of nanoparticles. The new methods are significantly faster than the traditional simulation methods used in nanoparticle research and will enable more efficient exploration of particle-particle reactions and of how particles function in their environment. The study was published in a Special Issue devoted to machine learning in the Journal of Physical Chemistry on May 15, 2020.

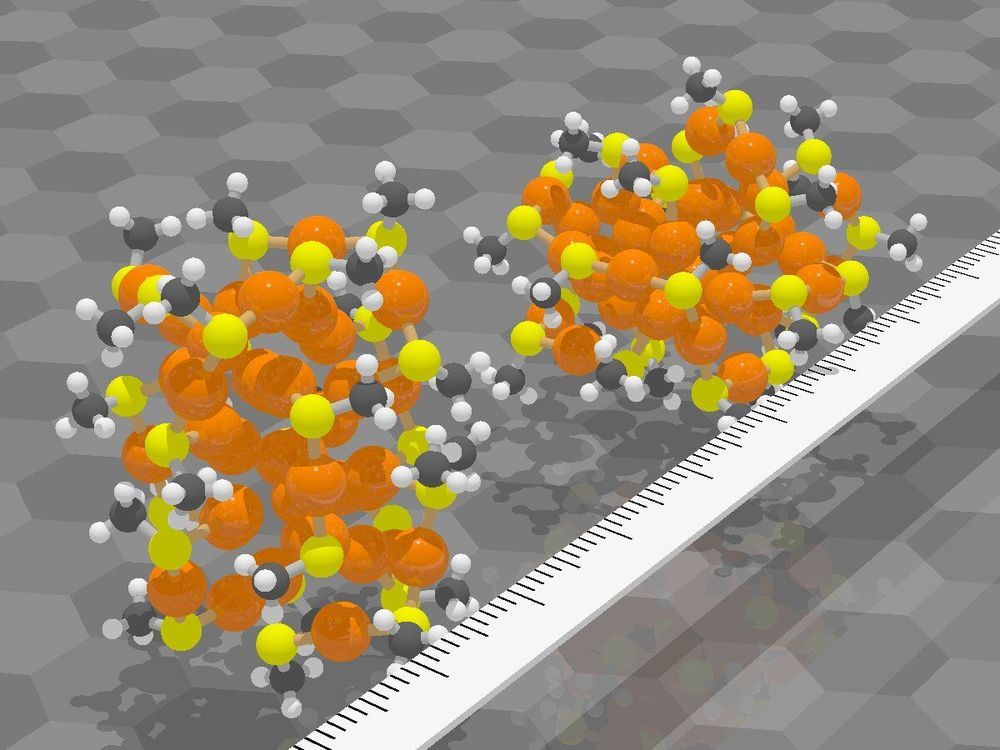

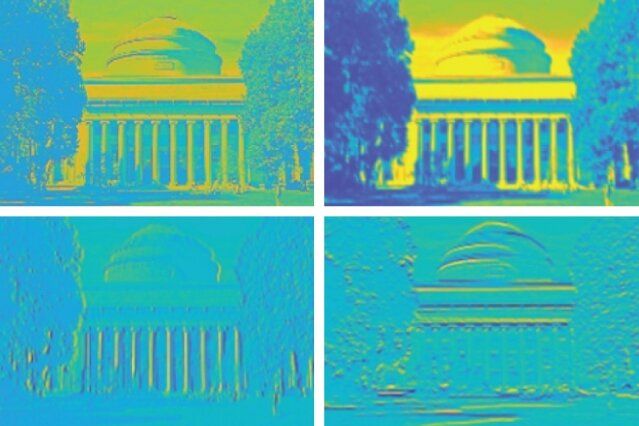

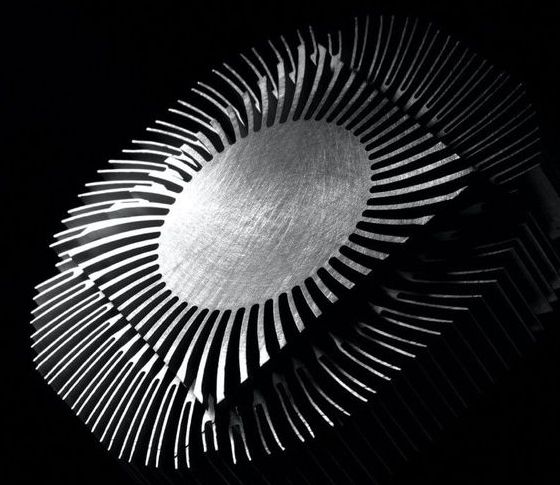

The new methods were applied to ligand-stabilized metal nanoparticles, which have long been studied at the Nanoscience Center at the University of Jyväskylä. Last year, the researchers published a method that successfully predicts the binding sites of the stabilizing ligand molecules on the nanoparticle surface. Now they have created a new tool that reliably predicts a particle's potential energy from its atomic structure, without the need for numerically heavy electronic structure computations. The tool makes it practical to run Monte Carlo simulations of the particles' atomic dynamics at elevated temperatures.
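To illustrate how a fast energy predictor fits into a Monte Carlo simulation, here is a minimal sketch of the standard Metropolis algorithm in Python. It is not the researchers' code: `surrogate_energy` is a hypothetical stand-in (a simple Lennard-Jones pair sum in reduced units) for a trained machine learning model, and the function names, step size, and temperature are all assumptions chosen for illustration.

```python
import math
import random


def surrogate_energy(positions):
    """Hypothetical stand-in for a trained ML energy model.

    Uses a Lennard-Jones pair sum in reduced units so the example runs
    on its own; a real model would be trained on reference energies.
    """
    energy = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = math.dist(positions[i], positions[j])
            inv6 = (1.0 / r) ** 6
            energy += 4.0 * (inv6 * inv6 - inv6)
    return energy


def metropolis(positions, energy_fn, temperature, n_steps, max_step=0.1, seed=0):
    """Metropolis Monte Carlo: move one random atom per step.

    A trial move is always accepted if it lowers the energy, and accepted
    with probability exp(-dE / T) otherwise (T in reduced units, k_B = 1).
    """
    rng = random.Random(seed)
    current = [tuple(p) for p in positions]
    e_current = energy_fn(current)
    accepted = 0
    for _ in range(n_steps):
        i = rng.randrange(len(current))
        trial = list(current)
        trial[i] = tuple(c + rng.uniform(-max_step, max_step) for c in current[i])
        e_trial = energy_fn(trial)
        delta = e_trial - e_current
        if delta <= 0.0 or rng.random() < math.exp(-delta / temperature):
            current, e_current = trial, e_trial
            accepted += 1
    return current, e_current, accepted / n_steps


# Four atoms in a loose cluster, sampled at a reduced temperature of 0.1.
start = [(0.0, 0.0, 0.0), (1.2, 0.0, 0.0), (0.0, 1.2, 0.0), (0.0, 0.0, 1.2)]
final, final_energy, acceptance = metropolis(start, surrogate_energy, 0.1, 200)
```

Every step calls the energy function once, so the cost of a long simulation is dominated by how quickly that function can be evaluated. This is why replacing an electronic structure calculation with a fast predictor matters for this kind of sampling.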

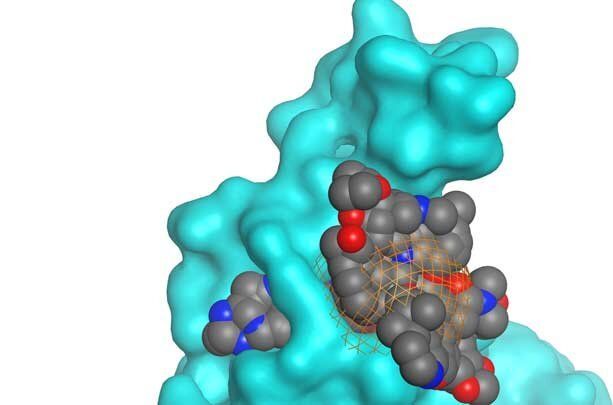

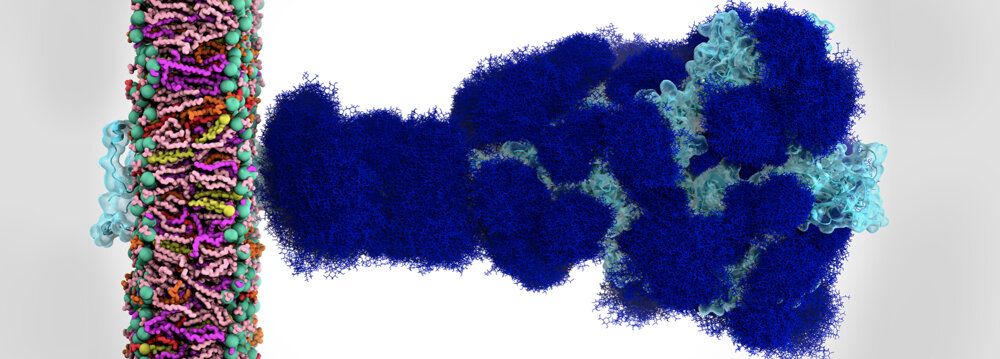

The potential energy of a system is a fundamental quantity in computational nanoscience, since it allows quantitative evaluation of the system's stability, the rates of chemical reactions and the strengths of interatomic bonds. Ligand-stabilized metal nanoparticles have many types of interatomic bonds of varying strength, and their energies have traditionally been evaluated with density functional theory (DFT), which often leads to numerically heavy computations that require supercomputers. This has precluded efficient simulations aimed at understanding how nanoparticles function, e.g., as catalysts, or how they interact with biological objects such as proteins, viruses, or DNA. Machine learning methods, once trained to model the systems reliably, can speed up the simulations by several orders of magnitude.
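The general idea of learning energies from distances can be sketched in a few lines of Python. The example below is only an illustration under stated assumptions and is not the method used in the study: it describes each structure by its sorted interatomic distances and predicts the energy as an inverse-distance-weighted average over the most similar training structures. The `reference_energy` function is a hypothetical stand-in (a Lennard-Jones pair sum) for an expensive calculation such as DFT, and all names are invented for this example.

```python
import math


def reference_energy(positions):
    """Hypothetical stand-in for an expensive reference calculation (e.g. DFT)."""
    energy = 0.0
    for i, a in enumerate(positions):
        for b in positions[i + 1:]:
            inv6 = (1.0 / math.dist(a, b)) ** 6
            energy += 4.0 * (inv6 * inv6 - inv6)
    return energy


def descriptor(positions):
    """Sorted interatomic distances: unchanged by rotation, translation,
    or reordering of the atoms."""
    return tuple(sorted(
        math.dist(a, b)
        for i, a in enumerate(positions)
        for b in positions[i + 1:]
    ))


def predict_energy(positions, training_set, k=2):
    """Weighted average of the energies of the k most similar training structures.

    training_set is a list of (descriptor, energy) pairs for structures
    with the same number of atoms as the query.
    """
    query = descriptor(positions)
    neighbours = sorted((math.dist(query, d), e) for d, e in training_set)[:k]
    if neighbours[0][0] == 0.0:
        # Exact match with a training structure: return its energy directly.
        return neighbours[0][1]
    weights = [1.0 / dist for dist, _ in neighbours]
    return sum(w * e for w, (_, e) in zip(weights, neighbours)) / sum(weights)


def dimer(r):
    """Two atoms separated by distance r along the x axis."""
    return [(0.0, 0.0, 0.0), (r, 0.0, 0.0)]


# "Training": run the expensive reference once per structure, up front.
training_set = [
    (descriptor(dimer(r)), reference_energy(dimer(r)))
    for r in (1.0, 1.1, 1.2, 1.3)
]

# Prediction: only cheap distance comparisons, no reference calculation.
predicted = predict_energy(dimer(1.15), training_set)
```

The expensive calculation is paid for once, when the training set is built. Each later prediction needs only distance comparisons, which is where the speed-up of a trained model comes from. A production model would need far more training data and careful validation before its energies could be trusted.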