Microsoft develops a lightweight scanner that detects backdoors in open-weight LLMs using three behavioral signals, improving AI model security and tr

Multiple critical vulnerabilities in the popular n8n open-source workflow automation platform allow attackers to escape the confines of the environment and take complete control of the host server.

Collectively tracked as CVE-2026-25049, the issues can be exploited by any authenticated user who can create or edit workflows on the platform to perform unrestricted remote code execution on the n8n server.

Researchers at several cybersecurity companies reported the problems, which stem from n8n’s sanitization mechanism and bypass the patch for CVE-2025-68613, another critical flaw addressed on December 20.

A landmark study suggests that this daily habit may reshape the way your mind activates.

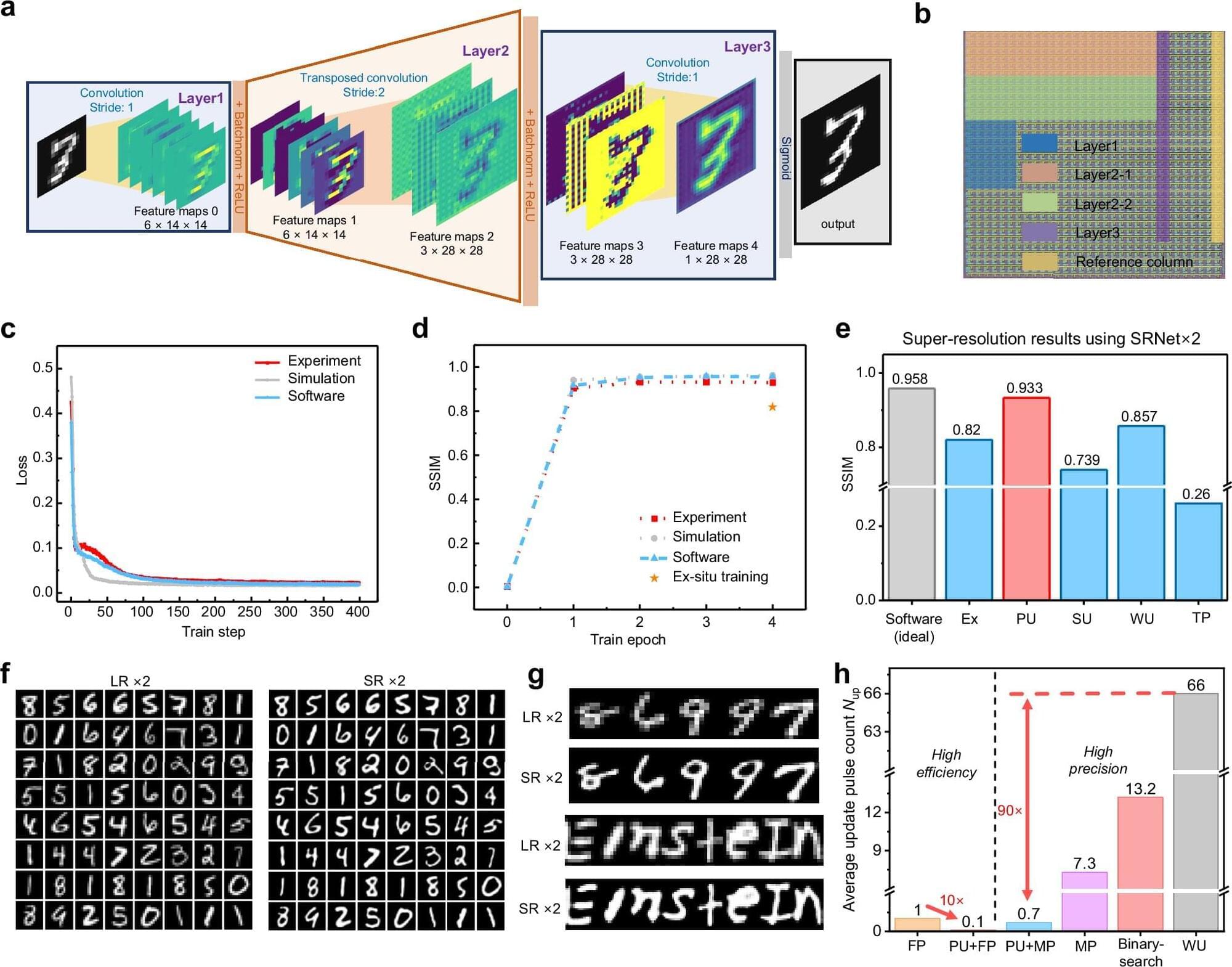

In a Nature Communications study, researchers from China have developed an error-aware probabilistic update (EaPU) method that aligns memristor hardware’s noisy updates with neural network training, slashing energy use by nearly six orders of magnitude versus GPUs while boosting accuracy on vision tasks. The study validates EaPU on 180 nm memristor arrays and large-scale simulations.

Memristors are devices that combine memory and processing, much like synapses in the brain. Analog in-memory computing with memristors promises to overcome the energy bottlenecks of digital chips by performing matrix operations directly through physical laws.

Inference on these systems works well, as shown by IBM and Stanford chips. But training deep neural networks hits a snag: “writing” errors when setting memristor weights.
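To make the "writing" problem concrete, here is a minimal sketch of how a memristor crossbar computes a matrix-vector product and how write errors disturb it. It relies on the standard picture of analog crossbars: each cell passes a current equal to its conductance times the applied voltage (Ohm's law), and the currents in a column add up (Kirchhoff's current law). The function names, the example values, and the Gaussian write-error model are illustrative assumptions, not details from the study, and the sketch does not implement EaPU itself.

```python
import random


def crossbar_mvm(conductances, voltages):
    """Ideal crossbar read: column current I_j = sum_i G[i][j] * V_i."""
    n_rows = len(voltages)
    n_cols = len(conductances[0])
    return [
        sum(conductances[i][j] * voltages[i] for i in range(n_rows))
        for j in range(n_cols)
    ]


def write_with_noise(target, sigma, rng):
    """Program each cell toward its target conductance.

    Assumption: the write error is additive Gaussian noise with standard
    deviation `sigma`. Real devices can behave differently.
    """
    return [[g + rng.gauss(0.0, sigma) for g in row] for row in target]


rng = random.Random(0)

# Hypothetical 3x2 weight matrix (arbitrary conductance units) and input voltages.
target = [[1.0, 0.5], [0.2, 0.8], [0.4, 0.1]]
voltages = [0.1, 0.2, 0.3]

ideal = crossbar_mvm(target, voltages)
noisy = crossbar_mvm(write_with_noise(target, 0.05, rng), voltages)

# Per-column deviation caused purely by imperfect weight programming.
deviation = [abs(a - b) for a, b in zip(ideal, noisy)]
```

During inference the weights are written once, so this deviation is a fixed offset. During training every weight update is another noisy write, which is why the errors become the bottleneck the paragraph above describes.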

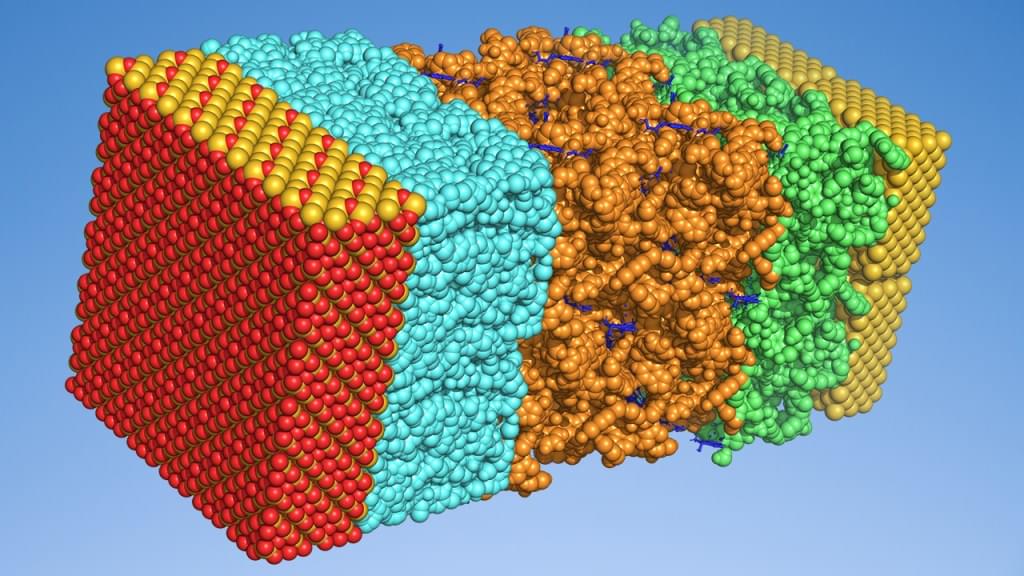

Many advanced electronic devices – such as OLEDs, batteries, solar cells, and transistors – rely on complex multilayer architectures composed of multiple materials. Optimizing device performance, stability, and efficiency requires precise control over layer composition and arrangement, yet experimental exploration of new designs is costly and time-intensive. Although physics-based simulations offer insight into individual materials, they are often impractical for full device architectures due to computational expense and methodological limitations.

Schrödinger has developed a machine learning (ML) framework that enables users to predict key performance metrics of multilayered electronic devices from simple, intuitive descriptions of their architecture and operating conditions. The approach integrates automated ML workflows with physics-based simulations in the Schrödinger Materials Science suite, using the simulation outputs to improve model accuracy and predictive power. It provides a scalable way to rapidly explore novel device design spaces, enabling targeted evaluations such as modifying layer composition, adding or removing layers, and adjusting layer dimensions or morphology. Users can efficiently predict device performance and uncover interpretable relationships between functionality, layer architecture, and materials chemistry. While this webinar focuses on single-unit and tandem OLEDs, the approach is readily adaptable to a wide range of electronic devices.