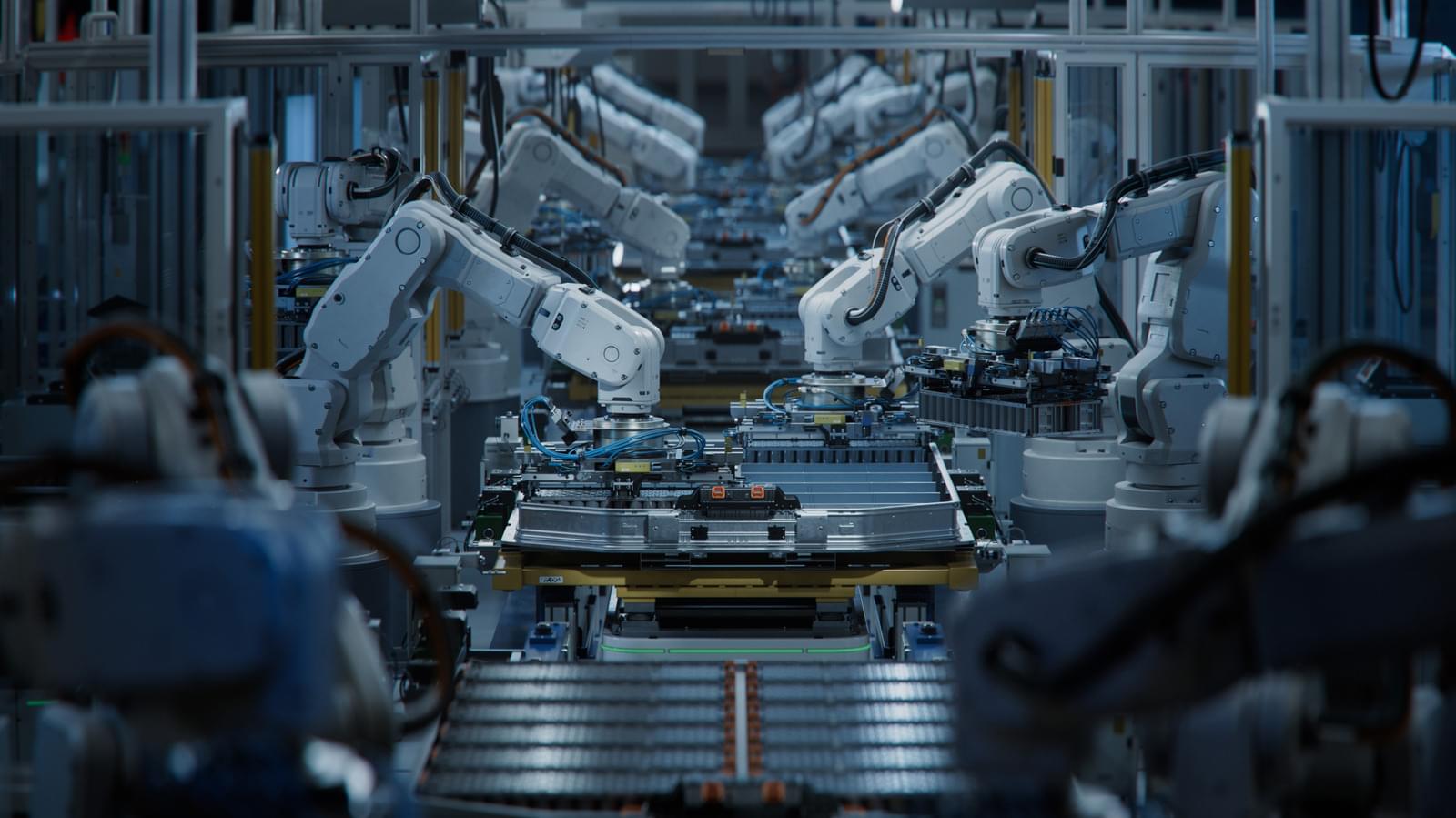

This fully automated Xiaomi smartphone factory operates with the lights off because no one works on the assembly line, thanks to AI and robotics.

Please see my LinkedIn article: “Securing the Neural Frontier.”

We are poised to witness one of the most significant technological advancements in human history: the direct interaction between human brains and machines. Brain-computer interfaces (BCIs), neurotechnology, and brain-inspired computing have already arrived and need to be secured.

NVIDIA CEO Jensen Huang discusses how artificial intelligence is advancing and how to handle competition with China on ‘Maria Bartiromo’s Wall Street.’

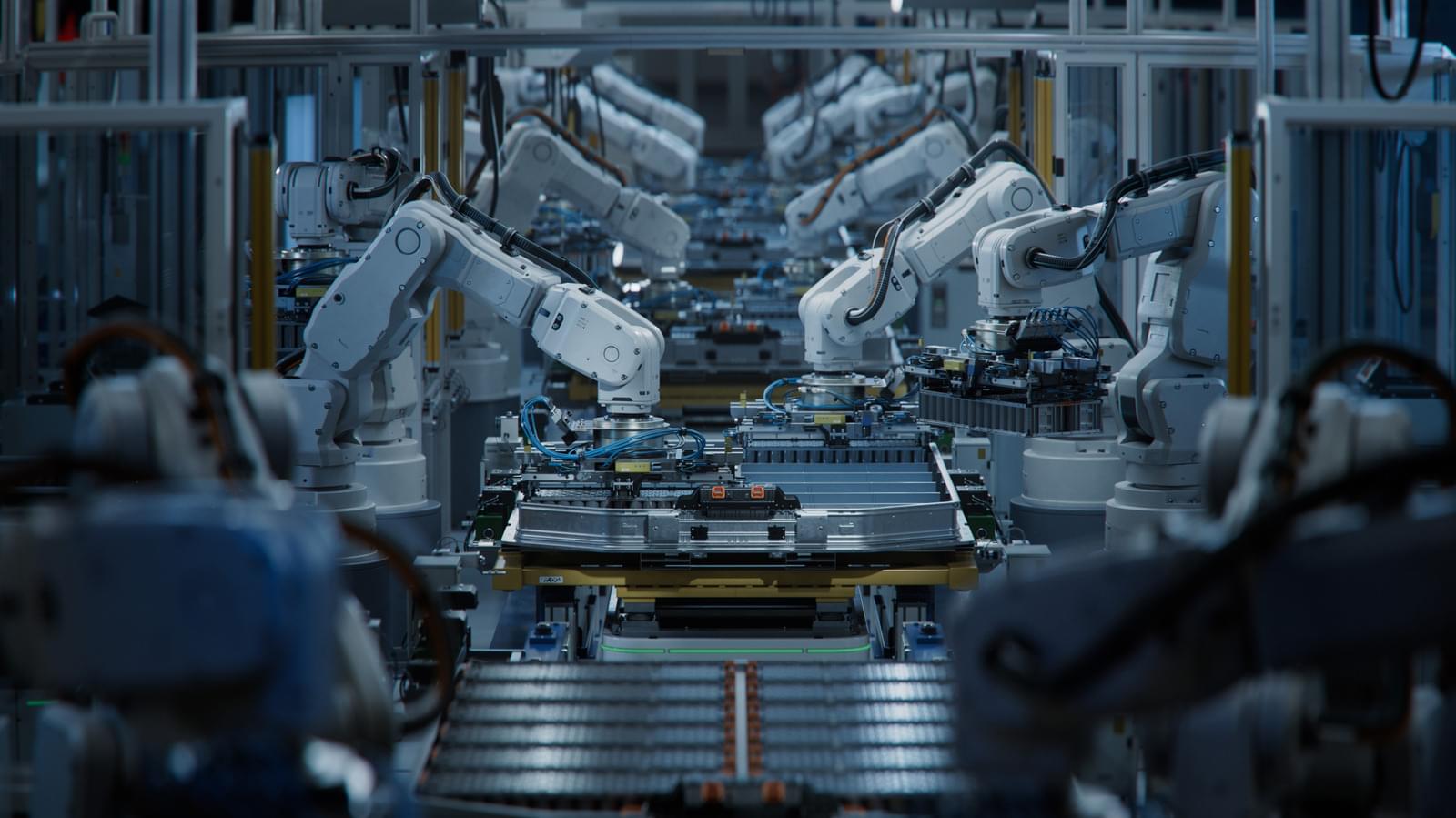

Scientific Reports volume 16, Article number: 1279 (2026).

Apple’s blockbuster holiday quarter was impressive — but it shouldn’t give cover to avoid an AI reckoning. Also: A new MacBook Pro is planned for the macOS 26.3 release cycle; the company explores a clamshell follow-up to its upcoming foldable phone; and an updated AirTag finally rolls out.

Last week in Power On: Inside Apple’s AI shake-up and its plans for two new versions of Siri powered by Gemini.

On stage at Imagination In Action’s AI Summit in Davos with John Werner, founder and CEO of Imagination In Action, Yann LeCun discusses the inevitable shift from current large language models to a new paradigm of “physical AI” based on world models. LeCun opens up about the importance of maintaining open-source research to mitigate the geopolitical risks of concentrated AI power.

The future won’t be built on one breakthrough. It will be shaped by how well we mesh AI, quantum, 5G, IoT, and human intelligence into secure, resilient systems. Technology advantage now comes from orchestration, not adoption.

My latest Forbes article explores why this convergence will define the next decade.

The next decade of innovation will not be defined by a single breakthrough technology. Instead, it will be shaped by the convergence of multiple emerging technologies.

…Q.ai is tight-lipped in public about its technology, but patents it has filed describe technology for headphones or glasses that uses ‘facial skin micro movements’ for nonverbal communication, according to the FT.

Apple’s senior vice president of hardware technologies, Johny Srouji, said in a statement that the startup is ‘pioneering new and creative ways to use imaging and machine learning.’

The move may be a component of Apple’s strategy for ‘wearable’ products, such as smart glasses. Software that reads facial expressions could potentially make way for a hands-free user interface that doesn’t require talking out loud, reports noted.

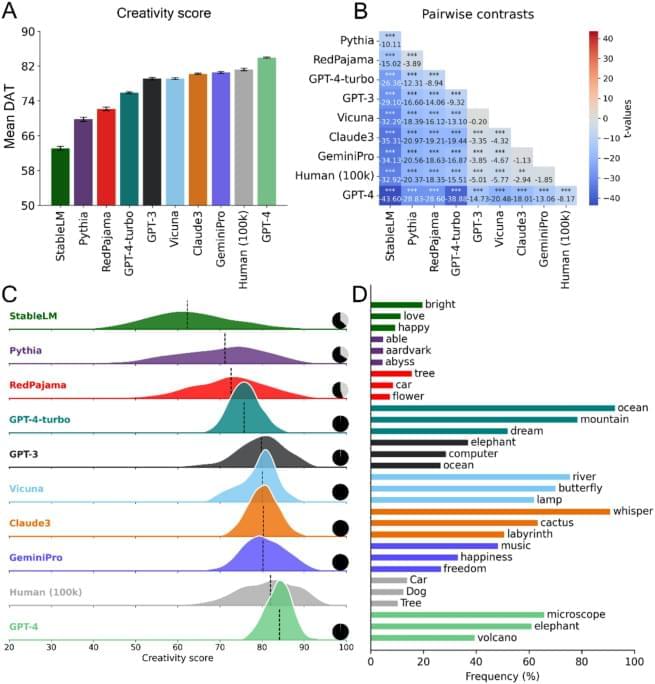

A new study from the University at Albany shows that artificial intelligence systems may organize information in far more intricate ways than previously thought. The study, “Exploring the Stratified Space Structure of an RL Game with the Volume Growth Transform,” has been published online through arXiv.

For decades, scientists assumed that neural networks encoded data on smooth, low-dimensional surfaces known as manifolds. But UAlbany researchers found that a transformer-based reinforcement-learning model instead organizes its internal representations in stratified spaces—geometric structures composed of multiple interconnected regions with different dimensions. Their findings mirror recent results in large language models, suggesting that stratified geometry might be a fundamental feature of modern AI systems.

“These models are not living on simple surfaces,” said Justin Curry, associate professor in the Department of Mathematics and Statistics in the College of Arts and Sciences. “What we see instead is a patchwork of geometric layers, each with its own dimensionality. It’s a much richer and more complex picture of how AI understands the world.”
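
To make the idea of "layers with different dimensionality" concrete, here is a minimal toy sketch. It is not the study's Volume Growth Transform; it only illustrates the general intuition that the number of points inside a ball grows roughly like the radius raised to the local dimension. The function name `local_dimension`, the toy data, and the radii are all assumptions chosen for illustration.

```python
import numpy as np

def local_dimension(points, center, r_small, r_large):
    """Estimate the local dimension at `center` from neighbor-count growth.

    If the count of points within radius r scales like r**d, then
    d is approximately log(N(r_large) / N(r_small)) / log(r_large / r_small).
    """
    dists = np.linalg.norm(points - center, axis=1)
    n_small = np.count_nonzero(dists <= r_small)
    n_large = np.count_nonzero(dists <= r_large)
    return np.log(n_large / n_small) / np.log(r_large / r_small)

rng = np.random.default_rng(0)

# Toy stratified set in 3-D: a flat 2-D sheet (z = 0) with a 1-D segment
# attached along the z-axis. Neither piece comes from the study.
sheet = np.column_stack([rng.uniform(-1, 1, (20000, 2)), np.zeros(20000)])
segment = np.column_stack([np.zeros((2000, 2)), rng.uniform(0, 2, 2000)])
points = np.vstack([sheet, segment])

# Probe one location on each piece, away from edges and from the junction.
dim_sheet = local_dimension(points, np.array([0.5, 0.5, 0.0]), 0.1, 0.2)
dim_segment = local_dimension(points, np.array([0.0, 0.0, 1.5]), 0.1, 0.2)

print(f"estimated dimension on the sheet:   {dim_sheet:.2f}")
print(f"estimated dimension on the segment: {dim_segment:.2f}")
```

On a single smooth manifold the estimate would be about the same everywhere; in this toy set it comes out near 2 on the sheet and near 1 on the segment, which is the kind of region-to-region variation the researchers describe.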