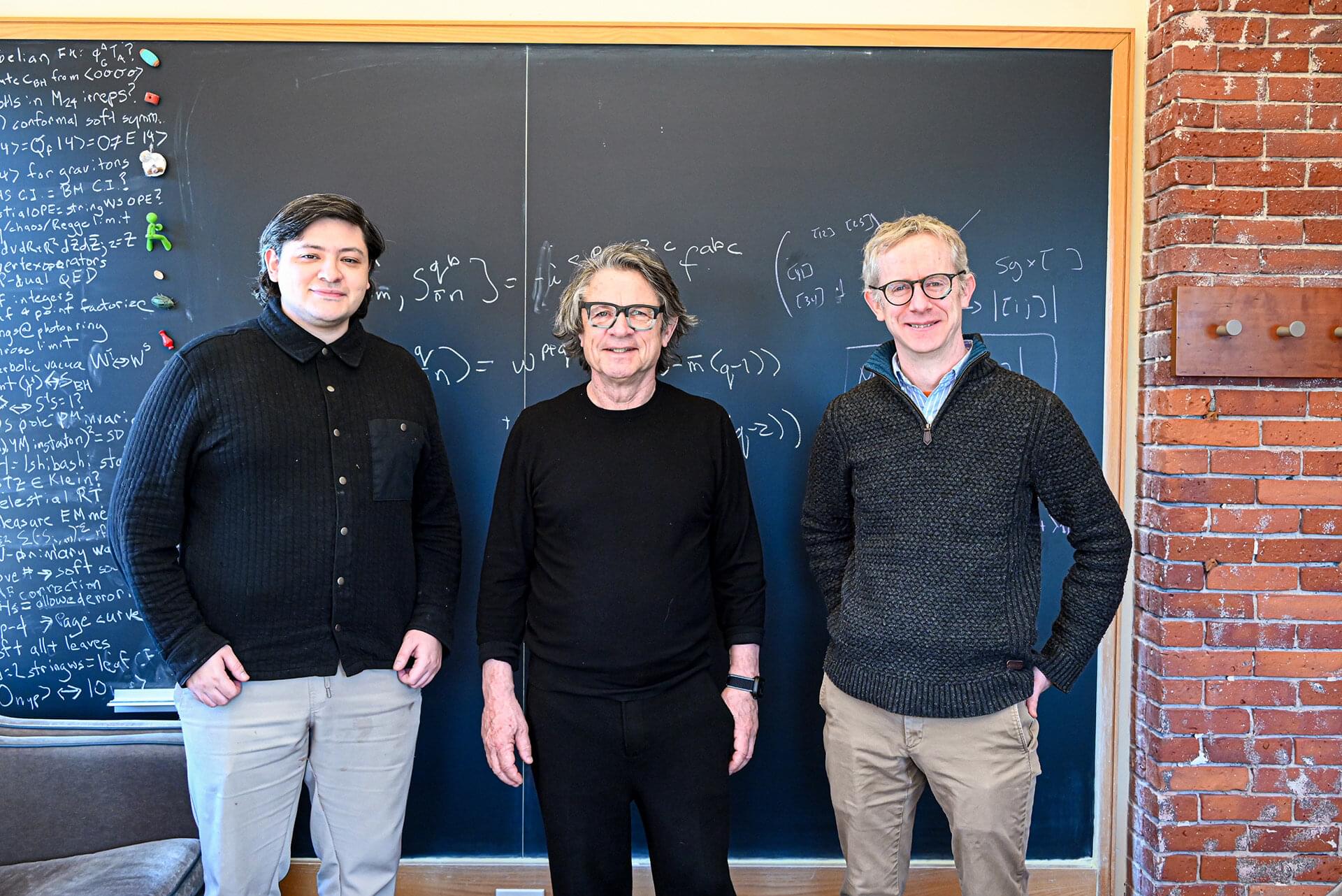

Like many scientists, theoretical physicist Andrew Strominger was unimpressed by his early attempts at probing ChatGPT, which returned clever-sounding answers that didn’t stand up to scrutiny. So he was skeptical when a talented former graduate student paused a promising academic career to take a job with OpenAI. Strominger told him physics needed him more than Silicon Valley did.

Still, Strominger, the Gwill E. York Professor of Physics, was intrigued enough by AI that he agreed when the former student, Alex Lupsasca, Ph.D., invited him to visit OpenAI last month to pose a thorny problem to the firm’s powerful in-house version of ChatGPT.

Strominger came away with much more than he expected—and the field of theoretical physics appears to have gained a little something too.