Globally, approximately 139 million people are expected to have Alzheimer’s disease (AD) by 2050. Magnetic resonance imaging (MRI) is an important tool for identifying changes in brain structure that precede cognitive decline and disease progression; however, its cost limits widespread use.

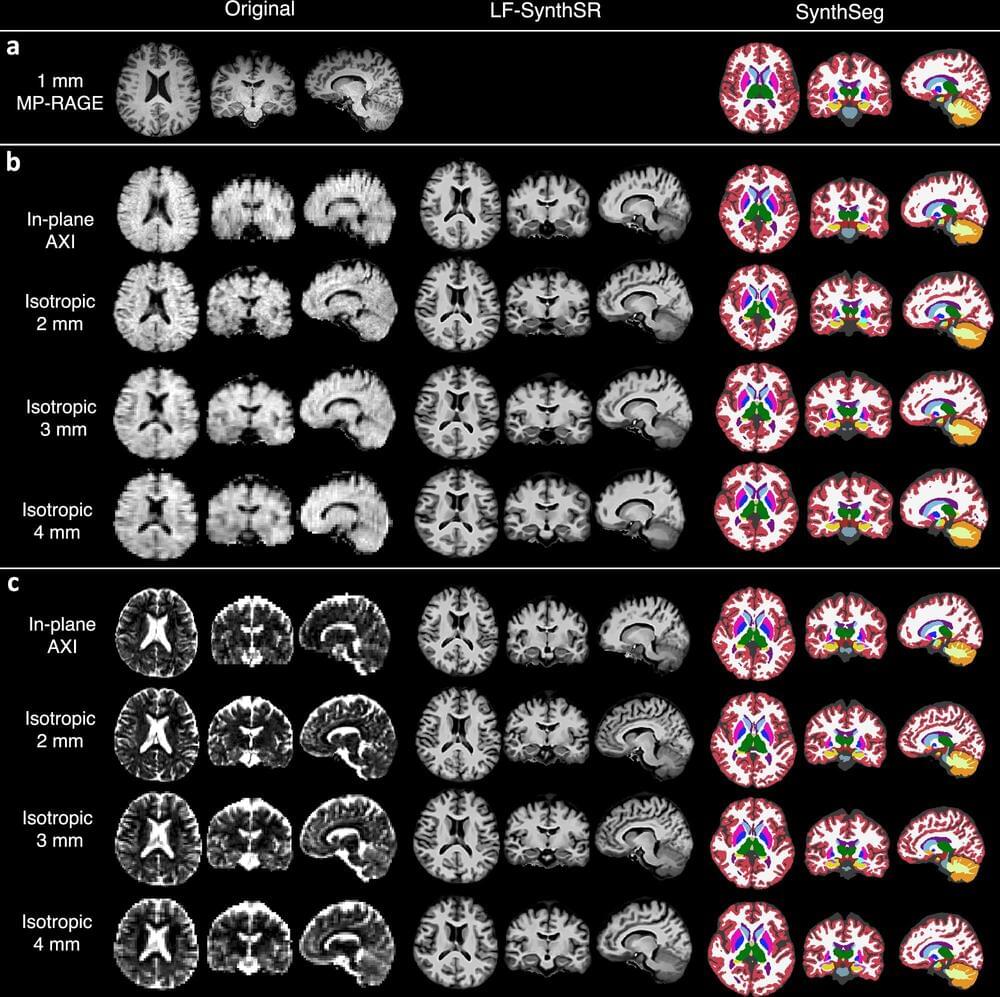

A new study by investigators from Massachusetts General Hospital (MGH), a founding member of the Mass General Brigham health care system, demonstrates that a simplified, low-magnetic-field (LF) MRI machine, augmented with machine learning tools, matches conventional MRI in measuring brain characteristics relevant to AD. The findings, published in Nature Communications, highlight the potential of LF-MRI to help evaluate people with cognitive symptoms.

“To tackle the growing, global health challenge of dementia and cognitive impairment in the aging population, we’re going to need simple, bedside tools that can help determine patients’ underlying causes of cognitive impairment and inform treatment,” said senior author W. Taylor Kimberly, MD, PhD, chief of the Division of Neurocritical Care in the Department of Neurology at MGH.