A team of AI researchers at Alibaba Group’s Tongyi Lab has debuted a new approach to training LLMs, one that costs much less than the methods currently in use. Their paper is posted on the arXiv preprint server.

As LLMs such as ChatGPT have become mainstream, the resources needed to run them, and the associated costs, have skyrocketed, forcing AI makers to look for ways to get the same or better results using other techniques. To this end, the team at Tongyi Lab has found a way to train LLMs that uses far fewer resources.

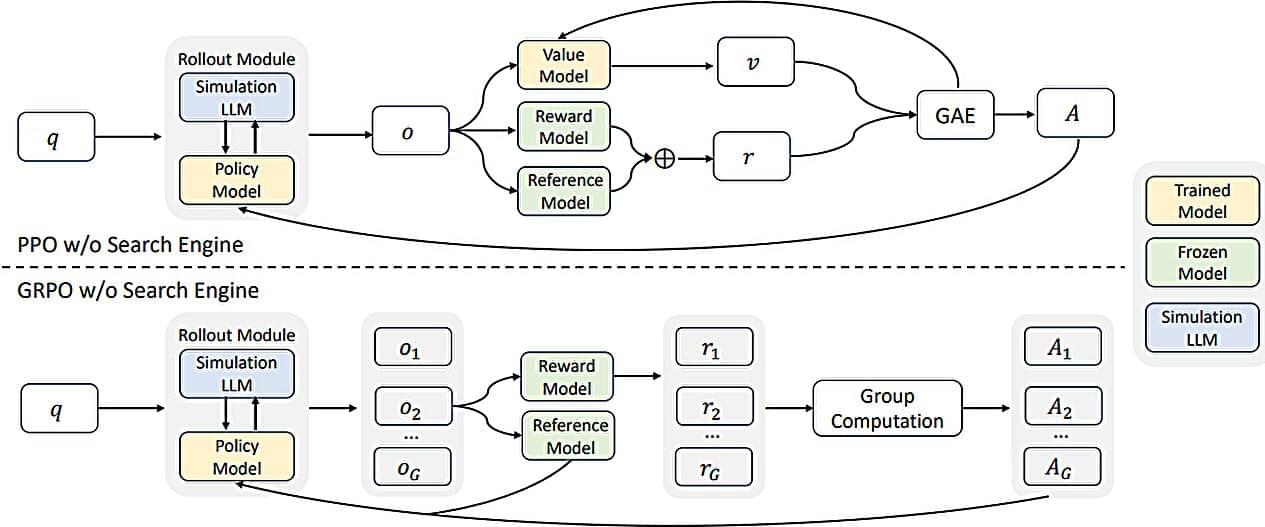

The idea behind the new approach, called ZeroSearch, is to stop using API calls to search engines to amass search results for training an LLM. Instead, the method uses simulated, AI-generated documents to mimic the output of traditional search engines such as Google.
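
To make the idea concrete, here is a minimal Python sketch of swapping a search-engine API for a simulated one. It is an illustration only, not the team's implementation: the names `SimulatedSearch`, `Document`, and `toy_generator` are hypothetical, and the generator is a placeholder that stands in for a real language model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Document:
    title: str
    text: str


class SimulatedSearch:
    """Stands in for a search-engine API by asking a text generator for documents.

    Hypothetical class for illustration; it makes no network calls.
    """

    def __init__(self, generate: Callable[[str], str], num_docs: int = 3):
        self.generate = generate  # assumed LLM call: prompt -> generated text
        self.num_docs = num_docs

    def search(self, query: str) -> List[Document]:
        docs = []
        for i in range(self.num_docs):
            prompt = f"Write search result {i + 1} for the query: {query}"
            docs.append(Document(title=f"Result {i + 1}", text=self.generate(prompt)))
        return docs


def toy_generator(prompt: str) -> str:
    # Placeholder for a real language model; echoes the prompt so the sketch runs offline.
    return f"[simulated document] {prompt}"


if __name__ == "__main__":
    engine = SimulatedSearch(toy_generator, num_docs=2)
    for doc in engine.search("capital of France"):
        print(doc.title, "->", doc.text)
```

Because the training loop only ever calls `search(query)`, the same code could in principle be pointed at a real search API or at a simulated one. In this sketch, the cost saving would come from never issuing the paid API call.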