Artificial intelligence is getting smarter every day, but it still has its limits. One of the biggest challenges has been teaching advanced AI models to reason, which means solving problems step by step. But in a new paper published in the journal Nature, a team from DeepSeek AI, a Chinese artificial intelligence company, reports that it taught its R1 model to reason on its own, without human input.

When many of us try to solve a problem, we typically don't get the answer straight away. We follow a methodical process that may involve gathering information and taking notes until we reach a solution. Traditionally, AI models have been trained to reason by copying this approach: people show a model countless worked examples of how to solve a problem. This is a slow, labor-intensive process. It also means the AI is only as good as the examples it is given, and it can pick up human biases along the way.

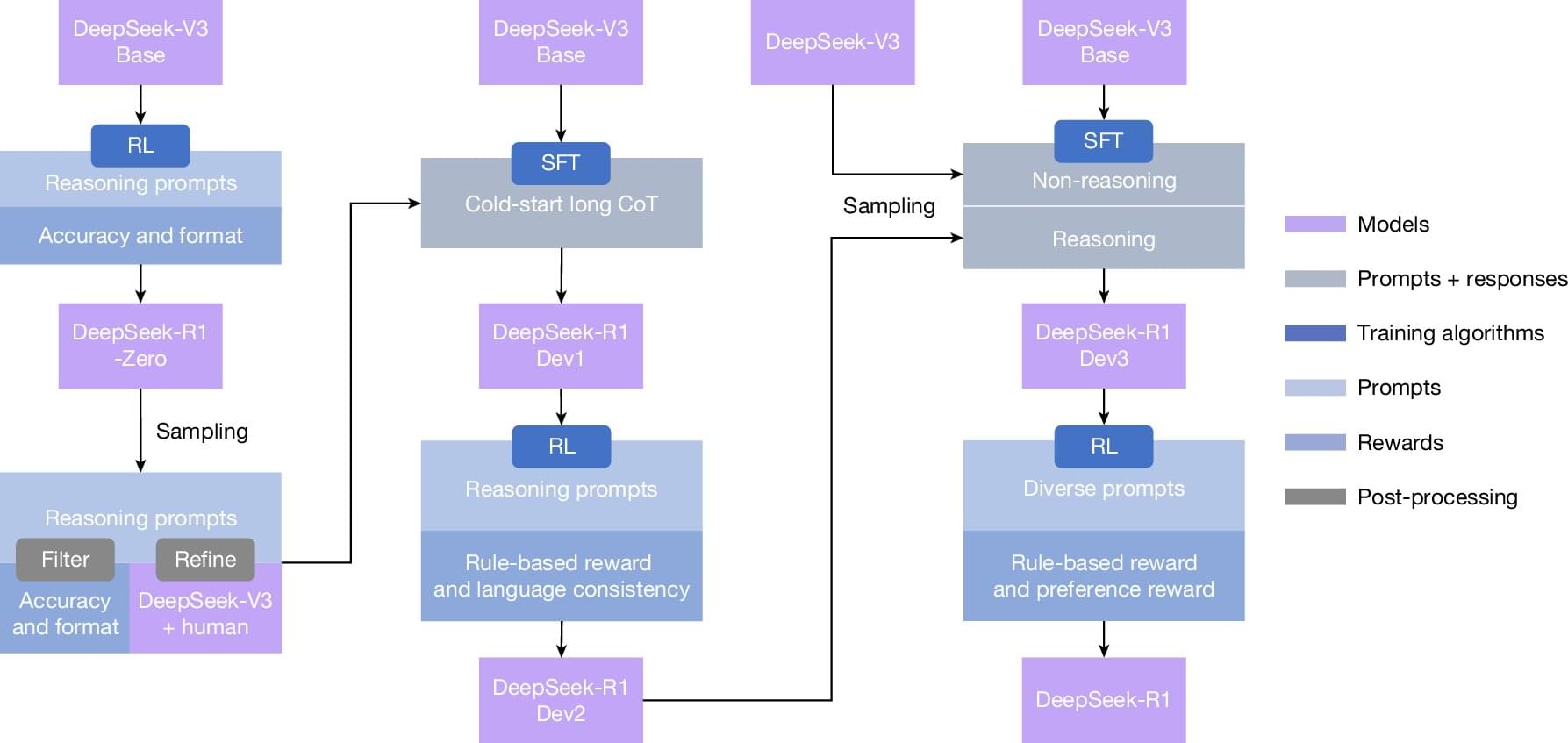

Instead of showing the R1 model every step, researchers at DeepSeek AI used a technique called reinforcement learning. This trial-and-error approach, which rewards the model for reaching correct answers, encouraged it to work out how to reason for itself.
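To make the idea of "rewards for correct answers" concrete, here is a deliberately tiny sketch in Python. It is a toy, not DeepSeek's method: a hypothetical "model" can either guess or work a simple addition problem out step by step, it is never told which approach is better, and it only receives a reward of 1 when its final answer is right. The task, the two strategies (`guess`, `work_it_out`) and the learning settings are all illustrative assumptions; the update rule is a basic policy-gradient (REINFORCE) step, one of the simplest forms of reinforcement learning.

```python
import math
import random

# Hypothetical toy task: add two single-digit numbers.
def make_problem(rng):
    a, b = rng.randint(0, 9), rng.randint(0, 9)
    return (a, b), a + b

# Two hypothetical strategies the toy "model" can choose between.
def guess(problem, rng):
    # Answers without working anything out: correct only by luck (1 in 19).
    return rng.randint(0, 18)

def work_it_out(problem, rng):
    # Works through the problem step by step: always correct on this task.
    a, b = problem
    return a + b

STRATEGIES = [guess, work_it_out]

def softmax(prefs):
    # Turn preference scores into probabilities of picking each strategy.
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]

def train(steps=2000, lr=0.1, seed=0):
    rng = random.Random(seed)
    prefs = [0.0, 0.0]  # starts with no preference between strategies
    baseline = 0.0      # running average reward, used to reduce noise
    for _ in range(steps):
        probs = softmax(prefs)
        # Trial: pick a strategy at random according to current preferences.
        i = rng.choices(range(len(STRATEGIES)), weights=probs)[0]
        problem, answer = make_problem(rng)
        # Reward depends only on whether the final answer is correct.
        reward = 1.0 if STRATEGIES[i](problem, rng) == answer else 0.0
        advantage = reward - baseline
        # REINFORCE update for a softmax policy: gradient of log pi(i)
        # with respect to each preference is onehot(i) - probs.
        for j in range(len(prefs)):
            grad = (1.0 if j == i else 0.0) - probs[j]
            prefs[j] += lr * advantage * grad
        baseline += 0.05 * (reward - baseline)
    return softmax(prefs)

if __name__ == "__main__":
    probs = train()
    print(f"P(guess) = {probs[0]:.3f}, P(work it out) = {probs[1]:.3f}")
```

Nobody tells the toy model that working step by step is better; it drifts toward that strategy simply because it is the one that keeps earning rewards. Real systems like R1 apply the same basic principle to a large language model, at a vastly greater scale and with more sophisticated algorithms.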