The tool, called Wendy’s FreshAI, can understand customization requests, respond to common customer questions and offer upsell suggestions.

A new study focuses on the role of the hippocampus, a brain region important for memory, and its place cells, which “replay” sequences of neuronal activity.

The researchers built an artificial intelligence model to better understand these processes, discovering that sequences of experiences are prioritized during replay based on familiarity and rewards.

The AI agent was found to learn spatial information more effectively when replaying these prioritized sequences, offering valuable insight into the way our brains learn and process information.
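To make the idea of prioritized replay concrete, here is a minimal Python sketch that samples stored experience sequences in proportion to a priority score. It is not the study’s model: the `Experience` class, the `priority` rule (familiarity multiplied by a reward bonus) and the `reward_weight` value are hypothetical choices made only for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Experience:
    path: tuple         # sequence of positions visited, e.g. ((0, 0), (1, 0))
    familiarity: int    # hypothetical measure: how often this sequence was experienced
    reward: float       # reward collected along the sequence


def priority(exp: Experience, reward_weight: float = 2.0) -> float:
    # Hypothetical scoring rule (an assumption, not the study's formula):
    # more familiar and more rewarded sequences get a higher priority.
    return exp.familiarity * (1.0 + reward_weight * exp.reward)


def sample_replay(memory, k, rng=None):
    """Draw k sequences for replay with probability proportional to priority."""
    if rng is None:
        rng = random.Random()
    weights = [priority(e) for e in memory]
    return rng.choices(memory, weights=weights, k=k)
```

For example, a sequence seen once with no reward (priority 1.0) would be replayed far less often than a rewarded sequence seen five times (priority 15.0), so replay concentrates on the experiences this scoring rule marks as most useful.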

Invertebrates like jellyfish are inspiring flexible ‘soft’ robot designs. Such robots could be used in tight and delicate settings, for example as surgical tools.

Scientific work often involves sifting through enormous amounts of data, a task that’s overwhelmingly mundane for humans but a piece of cake for artificial intelligence. A new platform dubbed BacterAI can conduct as many as 10,000 experiments per day to teach itself – and us – more about bacteria.

The human body is home to trillions of microbes, covering almost every surface inside and out. Many of them are vital to specific bodily functions, while many others make you sick. Research continues to uncover how inextricably linked our overall health is to our microbiomes, but managing and exploring the data involved remains a daunting task.

“We know almost nothing about most of the bacteria that influence our health,” said Paul Jensen, corresponding author of the new study. “Understanding how bacteria grow is the first step toward reengineering our microbiome.”

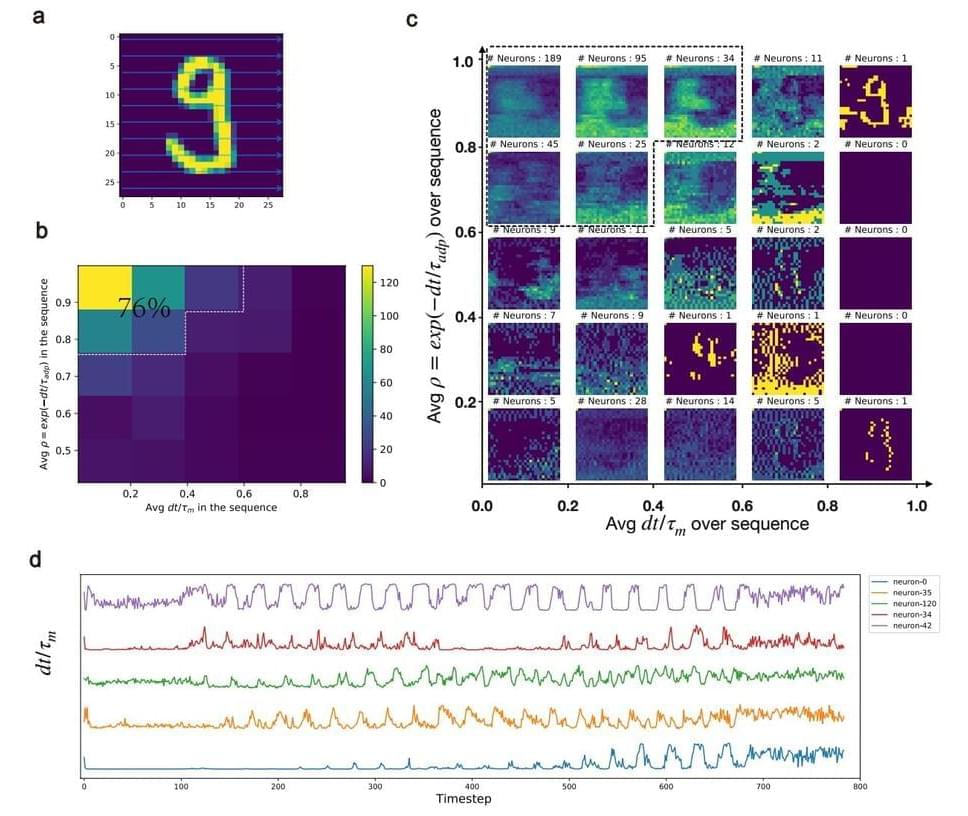

In a new study in Nature Machine Intelligence, researchers Bojian Yin and Sander Bohté of the Dutch National Research Institute for Mathematics and Computer Science (CWI), a Human Brain Project (HBP) partner, demonstrate a significant step towards artificial intelligence that can run on local devices such as smartphones and in VR-like applications while protecting privacy.

They show how brain-like neurons, combined with novel learning methods, make it possible to train fast and energy-efficient spiking neural networks on a large scale. Potential applications range from wearable AI to speech recognition and augmented reality.

While modern artificial neural networks are the backbone of the current AI revolution, they are only loosely inspired by networks of real, biological neurons such as those in our brain. The brain, however, is a much larger network, far more energy-efficient, and can respond ultra-fast when triggered by external events. Spiking neural networks are a special type of neural network that more closely mimics the working of biological neurons: the neurons of our nervous system communicate by exchanging electrical pulses, and they do so only sparingly.
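The sparse, pulse-based signalling described above can be illustrated with a leaky integrate-and-fire (LIF) neuron, a standard textbook spiking model. This is a minimal Python sketch with assumed, arbitrary parameters (`tau`, `threshold`, `resistance` and the time step `dt`); it is not the neuron model or the learning method used by Yin and Bohté. The neuron integrates its input, leaks back toward rest, and emits a pulse only when its membrane potential crosses a threshold.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0, v_rest=0.0,
                 v_reset=0.0, threshold=1.0, resistance=1.0):
    """Simulate one leaky integrate-and-fire neuron (Euler steps).

    Returns the list of time-step indices at which the neuron spiked.
    All parameter defaults are illustrative assumptions.
    """
    v = v_rest
    spikes = []
    for t, current in enumerate(input_current):
        # The membrane potential leaks toward v_rest while integrating the input.
        v += (dt / tau) * (-(v - v_rest) + resistance * current)
        if v >= threshold:
            spikes.append(t)  # emit a spike (an "electrical pulse")
            v = v_reset       # reset the potential after spiking
    return spikes


regular = simulate_lif([1.5] * 200)   # strong input: fires at regular intervals
silent = simulate_lif([0.5] * 1000)   # weak input: never reaches threshold
```

With a constant input of 1.5 the potential would settle above the threshold, so the neuron fires periodically; with 0.5 it settles below the threshold and stays silent. Output is produced only when something happens, which is the event-driven sparsity that makes spiking networks attractive for energy-efficient hardware.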

Discover the fascinating world of digital immortality and the pivotal role artificial intelligence plays in bringing this concept to life. In this captivating video, we delve into the intriguing idea of preserving our consciousness, memories, and personalities in a digital realm, potentially allowing us to live forever in a virtual environment. Unravel the cutting-edge ideas and technologies, such as mind uploading, AI-powered avatars, and advanced brain-computer interfaces, that are pushing the boundaries of what it means to be alive.

Join us as we explore the ethical considerations, current progress, and future prospects of digital immortality. Learn about the ongoing advancements in brain-computer interfaces such as Neuralink, AI-powered virtual assistants like ChatGPT, and the challenges and opportunities that lie ahead. Will digital immortality redefine humanity’s relationship with life, death, and existence itself? Watch now to uncover the possibilities.


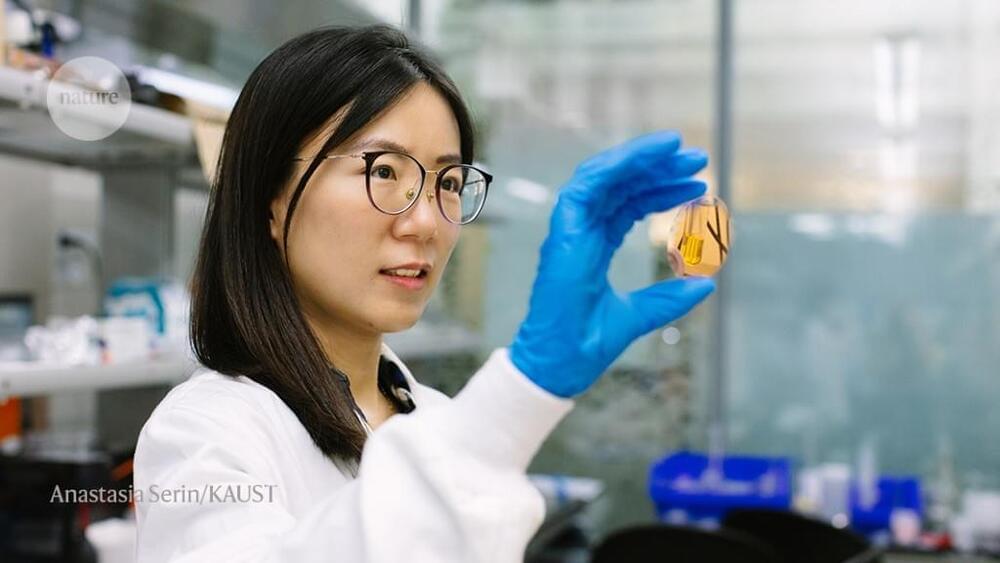

New research by biotech Integrated Biosciences and scientists from MIT and the Broad Institute of MIT and Harvard has demonstrated the potential of AI in discovering novel senolytic compounds.

Longevity.Technology: Senolytics are small molecules that suppress age-related processes such as fibrosis, inflammation and cancer. They target senescent cells – the so-called ‘zombie’ cells that have stopped dividing, emit toxic chemicals and are a hallmark of aging. Senescent cells have been linked to various age-related diseases, including cancer, cardiovascular disease, diabetes and Alzheimer’s disease, but senolytic compounds can tackle them by selectively inducing apoptosis, or programmed cell death, in these zombie cells. In the new research, treatment reduced the number of senescent cells and lowered the expression of senescence-associated genes in aged mice, results that, the authors say, “underscore the promise of leveraging deep learning to discover senotherapeutics” [1].

The AI-guided screening of more than 800,000 compounds led to the identification of three drug candidates, which, when compared with senolytics currently under investigation, were found to have comparable efficacy and superior medicinal chemistry properties [1].

AI is poised to address many of the hazards of manufacturing workplaces. The technology can help limit employees’ exposure to loud environments, unwieldy machinery and dangerous tasks by streamlining processes and letting workers focus on less physically risky activities.

In manufacturing, many of AI’s potential benefits come from taking over the tasks behind the most common workplace injuries. These include “musculoskeletal disorders, mainly from overexertion in lifting and lowering, and being struck by powered industrial trucks and other materials handling equipment,” according to an OSHA spokesperson.

There are several ways to reduce those points of risk, with the most dangerous manufacturing tasks standing to benefit the most.