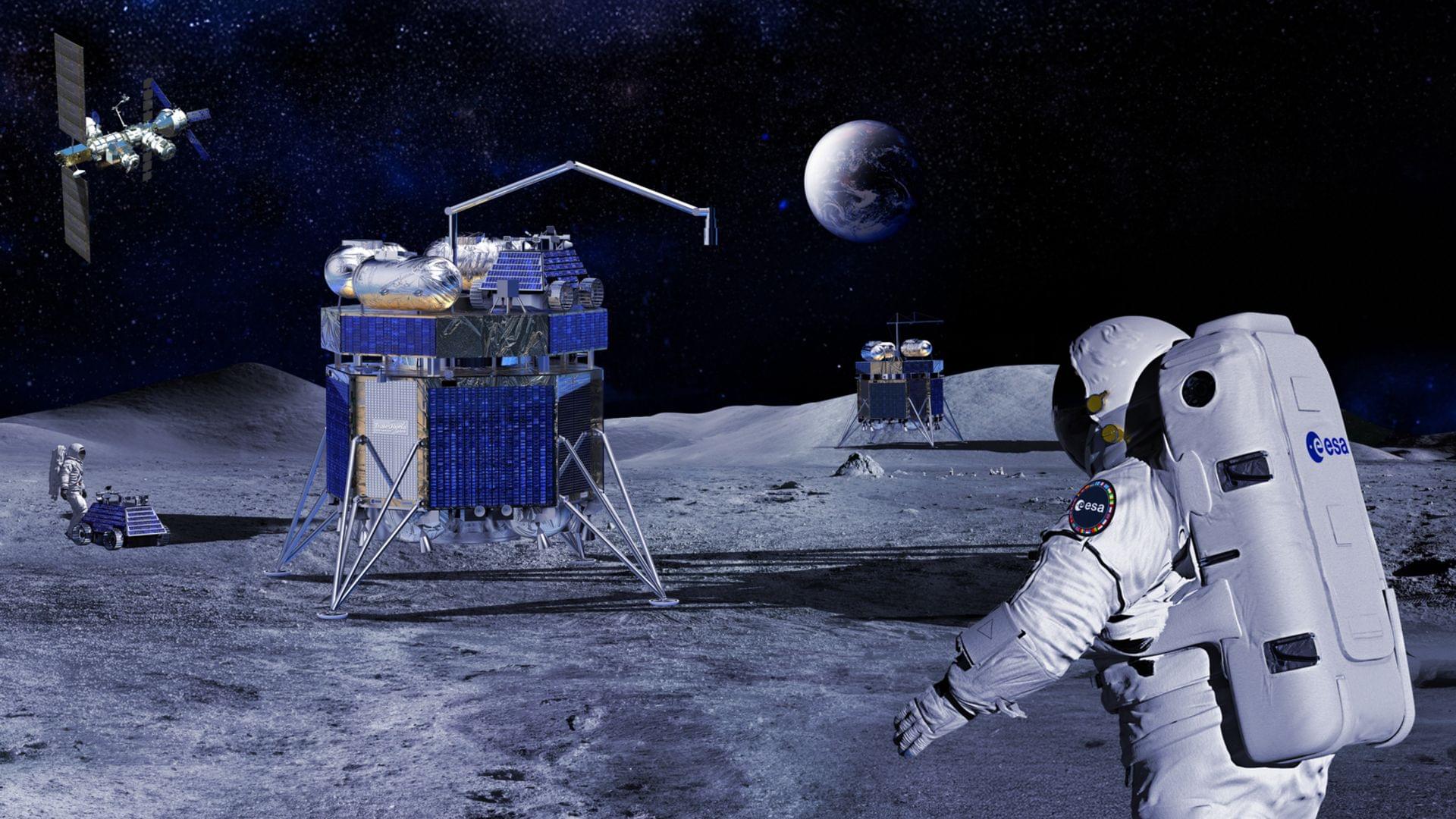

The robotic Argonaut is scheduled to fly its first mission in 2030.

Tesla has released a new clip of its Optimus humanoid robot running inside a lab. The video comes from the official Optimus account on X, and Elon Musk shared it with his followers soon after. The robot jogs past other units in the background and keeps a steady pace on the lab floor.

The team also said it had set a new personal record in the lab. Musk stressed that the internal record had been broken, which puts fresh attention on how far the project has moved in a short time.

Just set a new PR in the lab pic.twitter.com/8kJ2om7uV7 — Tesla Optimus (@Tesla_Optimus) December 2, 2025

Imagine having a continuum soft robotic arm bend around a bunch of grapes or broccoli, adjusting its grip in real time as it lifts the object. Unlike traditional rigid robots that generally aim to avoid contact with the environment as much as possible and stay far away from humans for safety reasons, this arm senses subtle forces, stretching and flexing in ways that mimic more of the compliance of a human hand. Its every motion is calculated to avoid excessive force while achieving the task efficiently.

At the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) and Laboratory for Information and Decision Systems (LIDS), these seemingly simple movements are the culmination of complex mathematics, careful engineering, and a vision for robots that can safely interact with humans and delicate objects.

Soft robots, with their deformable bodies, promise a future where machines move more seamlessly alongside people, assist in caregiving, or handle delicate items in industrial settings. Yet that very flexibility makes them difficult to control. Small bends or twists can produce unpredictable forces, raising the risk of damage or injury. This motivates the need for safe control strategies for soft robots.

#cybersecurity #artificialintelligence #criticalinfrastructure #risks

By Chuck Brooks, president of Brooks Consulting International

Federal agencies and their industry counterparts are moving at a breakneck pace to modernize in this fast-changing digital world. Artificial intelligence, automation, behavioral analytics, and autonomous decision systems have become integral to mission-critical operations. This includes everything from managing energy and securing borders to delivering healthcare, supporting defense logistics, and verifying identities. These technologies are undeniably enhancing capabilities. However, they are also subtly altering the landscape of risk.

The real concern isn’t any one technology in isolation, but rather the way these technologies now intersect and rely on each other. We’re leaving behind a world of isolated cyber threats. Now, we’re facing convergence risk, a landscape where cybersecurity, artificial intelligence, data integrity, and operational resilience are intertwined in ways that often remain hidden until a failure occurs. We’re no longer just securing networks. We’re safeguarding confidence, continuity, and the trust of society.

Addressing the staggering power and energy demands of artificial intelligence, engineers at the University of Houston have developed a revolutionary new thin-film material that promises to make AI devices significantly faster while dramatically cutting energy consumption.

The breakthrough, detailed in the journal ACS Nano, introduces a specialized two-dimensional (2D) thin-film dielectric, or electric insulator, designed to replace traditional, heat-generating components in integrated circuit chips. This new thin-film material, which does not store electricity, will help reduce the significant energy cost and heat produced by the high-performance computing necessary for AI.

“AI has made our energy needs explode,” said Alamgir Karim, Dow Chair and Welch Foundation Professor at the William A. Brookshire Department of Chemical and Biomolecular Engineering at UH.

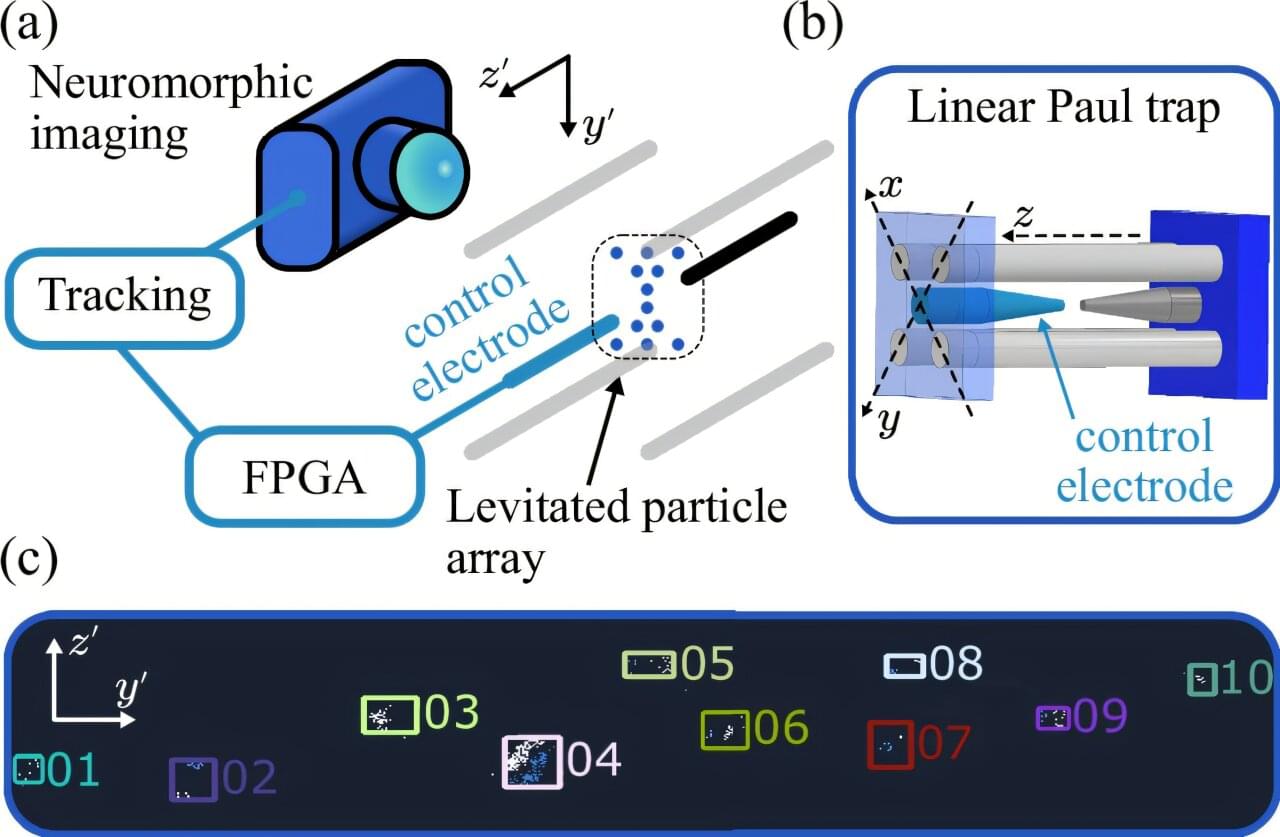

A new type of sensor that levitates dozens of glass microparticles could revolutionize the accuracy and efficiency of sensing, laying the foundation for better autonomous vehicles, navigation and even the detection of dark matter.

Using a camera inspired by the human eye, scientists from King’s College London believe they could track upwards of 100 floating particles in what could be one of the most sensitive sensors to date.

Levitating sensors typically isolate small particles to observe and quantify the impact of outside forces, such as acceleration, on them. The more particles that can be disturbed and the greater their isolation from their environment, the more accurate the sensor can be.

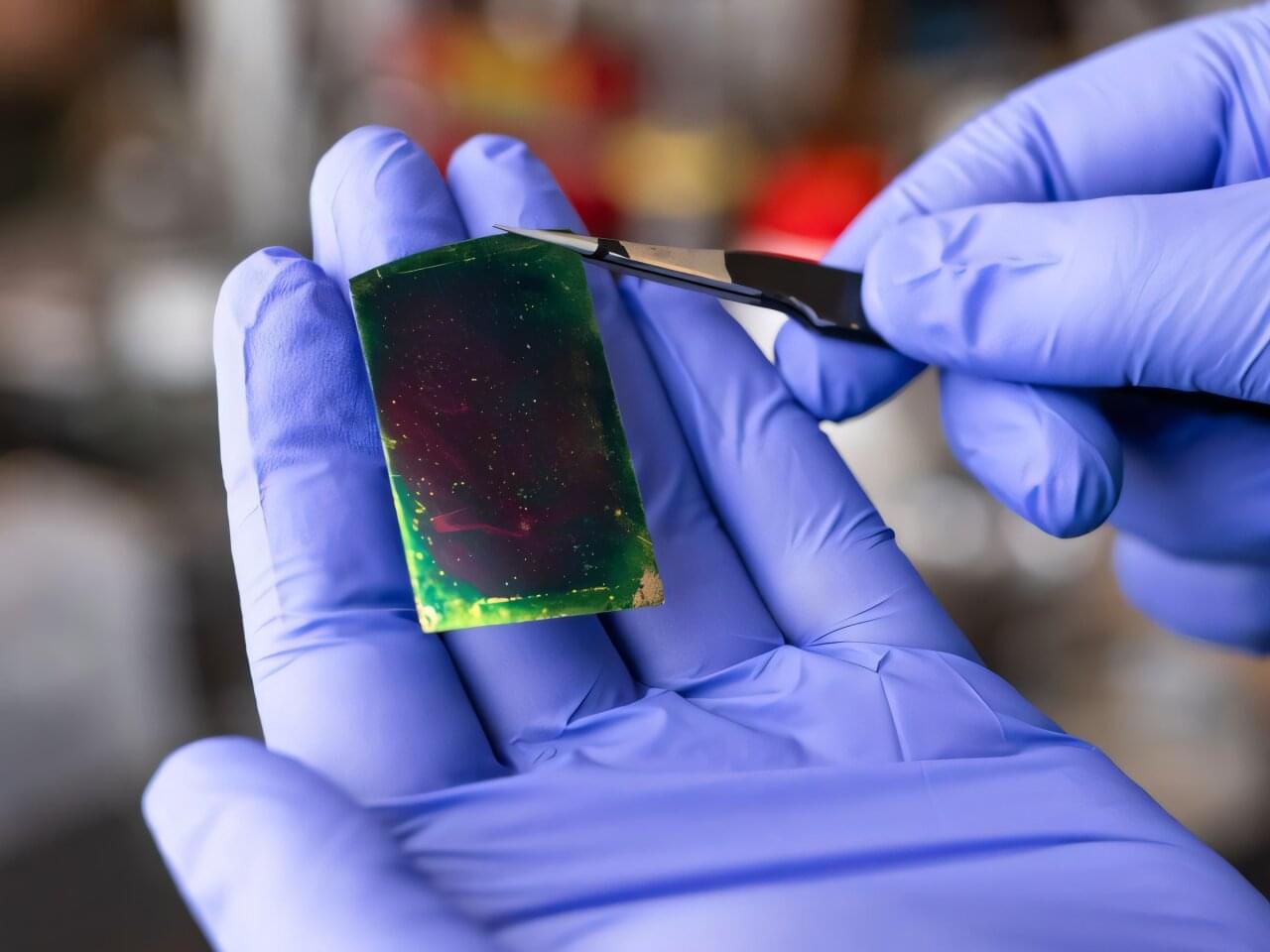

A tiny device that entangles light and electrons without super-cooling could revolutionize quantum tech in cryptography, computing, and AI.

Present-day quantum computers are big, expensive, and impractical, operating at temperatures near -459 degrees Fahrenheit, or “absolute zero.” In a new paper, however, materials scientists at Stanford University introduce a nanoscale optical device that works at room temperature to entangle the spin of photons (particles of light) and electrons to achieve quantum communication, an approach that uses the laws of quantum physics to transmit and process data. The technology could usher in a new era of low-cost, low-energy quantum components able to communicate over great distances.

“The material in question is not really new, but the way we use it is,” says Jennifer Dionne, a professor of materials science and engineering and senior author of the paper just published in Nature Communications describing the novel device. “It provides a very versatile, stable spin connection between electrons and photons that is the theoretical basis of quantum communication. Typically, however, the electrons lose their spin too quickly to be useful.”

OpenAI’s AI-powered ChatGPT is down worldwide, with users receiving errors when attempting to access chats. No reason has currently been given.

If you are affected, you will see “something seems to have gone wrong” errors, with ChatGPT adding that “There was an error generating a response” to your queries.

In our tests, BleepingComputer observed that ChatGPT keeps loading, and the response never comes.