Circa 2015

The disease is linked to genetic changes on the evolutionary road from ape to human.

Circa 2018.

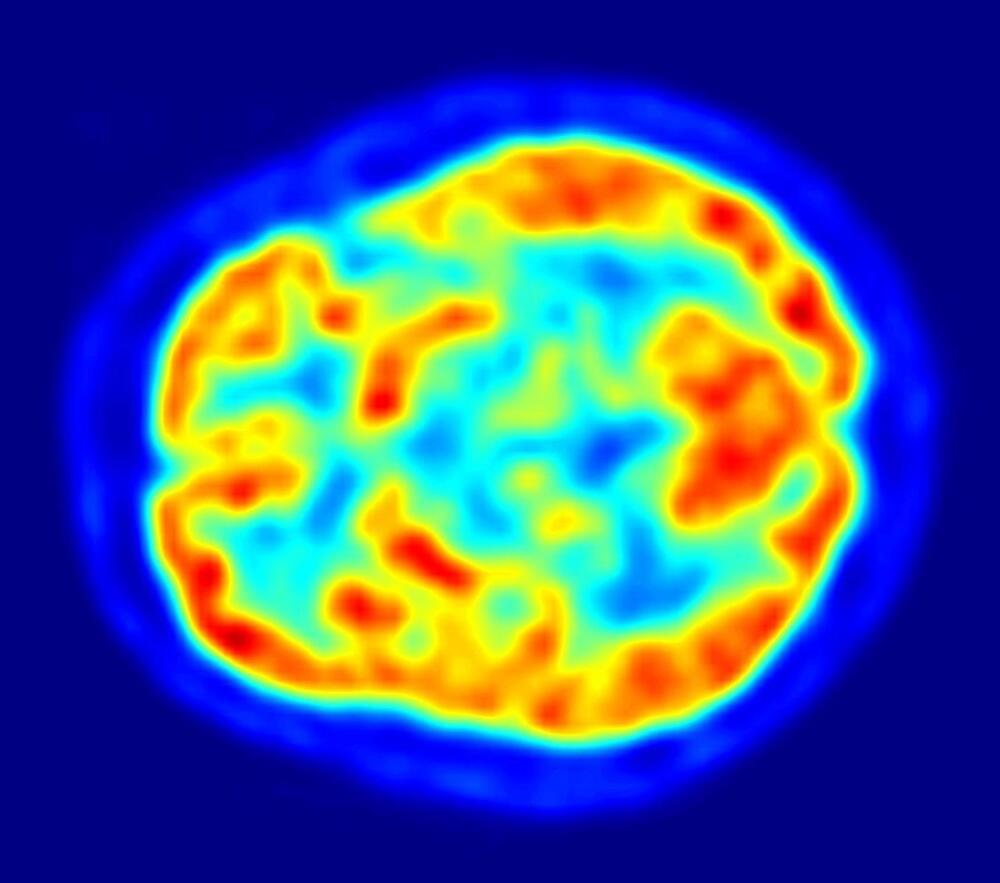

Understanding the fundamental constituents of the universe is tough. Making sense of the brain is another challenge entirely. Each cubic millimetre of human brain contains around 4 km of neuronal “wires” carrying millivolt-level signals, connecting innumerable cells that define everything we are and do. The ancient Egyptians already knew that different parts of the brain govern different physical functions, and a couple of centuries have passed since physicians entertained crowds by passing currents through corpses to make them seem alive. But only in recent decades have neuroscientists been able to delve deep into the brain’s circuitry.

On 25 January, speaking to a packed audience in CERN’s Theory department, Vijay Balasubramanian of the University of Pennsylvania described a physicist’s approach to solving the brain. Balasubramanian did his PhD in theoretical particle physics at Princeton University and also worked on the UA1 experiment at CERN’s Super Proton Synchrotron in the 1980s. Today, his research ranges from string theory to theoretical biophysics, where he applies methodologies common in physics to model the neural topography of information processing in the brain.

“We are using, as far as we can, hard mathematics to make real, quantitative, testable predictions, which is unusual in biology.” — Vijay Balasubramanian

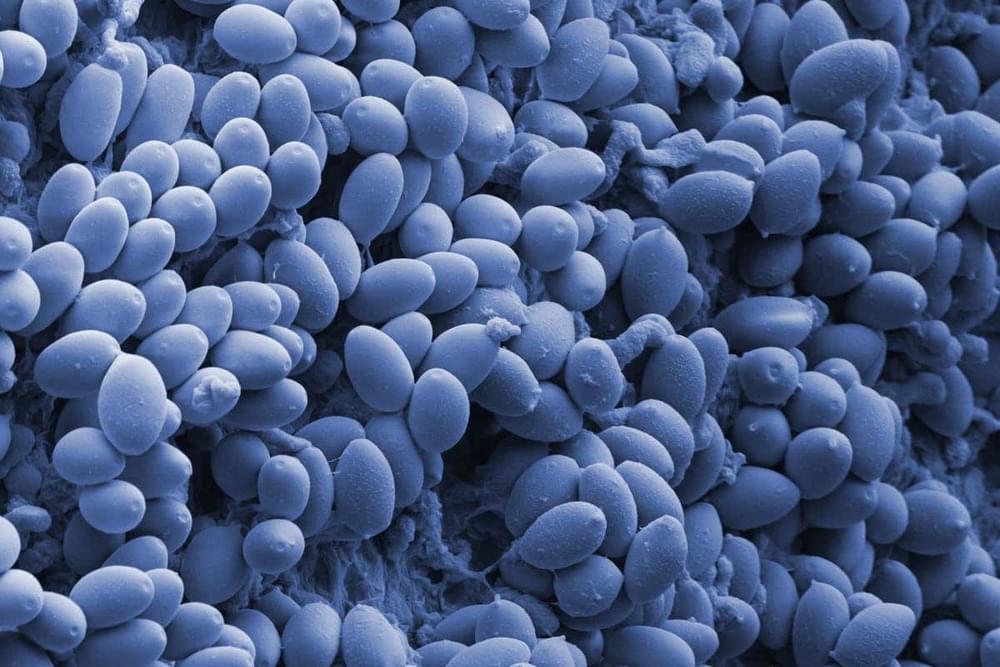

Psilocybin, the psychedelic compound in magic mushrooms, may help people with alcohol dependence abstain from drinking. Nearly half of those who took the drug as part of a 12-week therapy programme were no longer drinking more than eight months later, according to results from the largest trial to date on psilocybin and addiction.

Michael Bogenschutz at NYU Langone Health in New York and his colleagues recruited 95 adults who were diagnosed with alcohol dependence. None of the participants had any major psychiatric conditions or had used psychedelics in the past year.

Everyone in the group went through a 12-week therapy programme. Most weeks, they had a session of roughly one hour with a therapist and a psychiatrist, in which they received cognitive behavioural therapy for alcohol use disorder.

Axolotls Can Regenerate Their Own Brains: new research maps out the different cell types involved, in the hope of paving the way to regenerative medicine.

This lecture was recorded on February 3, 2003, as part of the Distinguished Science Lecture Series (1992–2015), hosted by Michael Shermer and presented by The Skeptics Society in California.

Can there be freedom and free will in a deterministic world? Renowned philosopher and public intellectual Dr. Dennett, drawing on evolutionary biology, cognitive neuroscience, economics and philosophy, argues that free will exists in a deterministic world for humans only, and that this gives us morality, meaning, and moral culpability. Weaving a richly detailed narrative, Dennett explains in a series of strikingly original arguments that, far from being an enemy of traditional explorations of freedom, morality, and meaning, the evolutionary perspective can be an indispensable ally. In Freedom Evolves, Dennett seeks to place ethics on the foundation it deserves: a realistic, naturalistic, potentially unified vision of our place in nature.

Dr. Daniel Dennett — Freedom Evolves: Free Will, Determinism, and Evolution

Watch some of the past lectures for free online.

https://www.skeptic.com/lectures/


A new study has identified an association between receiving an influenza vaccine and a reduced risk of stroke. The research is published in the journal Neurology.

Risk factors for stroke

A stroke occurs when the blood supply to part of the brain is cut off, damaging neurons and in turn impairing the physiological functions they control. There are different types of stroke: ischemic stroke, in which a blockage prevents blood from reaching the brain; hemorrhagic stroke, caused by bleeding in or around the brain; and transient ischemic attack (TIA), a brief interruption of blood flow whose symptoms last only a short time. It’s estimated that one in four people aged 25 and over will have a stroke in their lifetime.