Our aim is to harness the unrivalled computing power of the human brain to dramatically increase the ability of computers to help us solve complex problems.

Virtual reality isn’t just for gaming. Researchers can use virtual reality, or VR, to assess participants’ attention, memory and problem-solving abilities in real-world settings. By using VR technology to examine how folks complete daily tasks, like making a grocery list, researchers can better help clinical populations that struggle with executive functioning to manage their everyday lives.

The many different sensations our bodies experience are accompanied by deeply complex exchanges of information within the brain, and the feeling of pain is no exception. So far, research has shown how pain intensity can be directly related to specific patterns of oscillation in brain activity, which are altered by the activation and deactivation of the ‘interneurons’ connecting different regions of the brain. However, it remains unclear how the process is affected by ‘inhibitory’ interneurons, which prevent chemical messages from passing between these regions. Through new research published in EPJ B, researchers led by Fernando Montani at Instituto de Física La Plata, Argentina, show that inhibitory interneurons make up 20% of the circuitry in the brain required for pain processing.

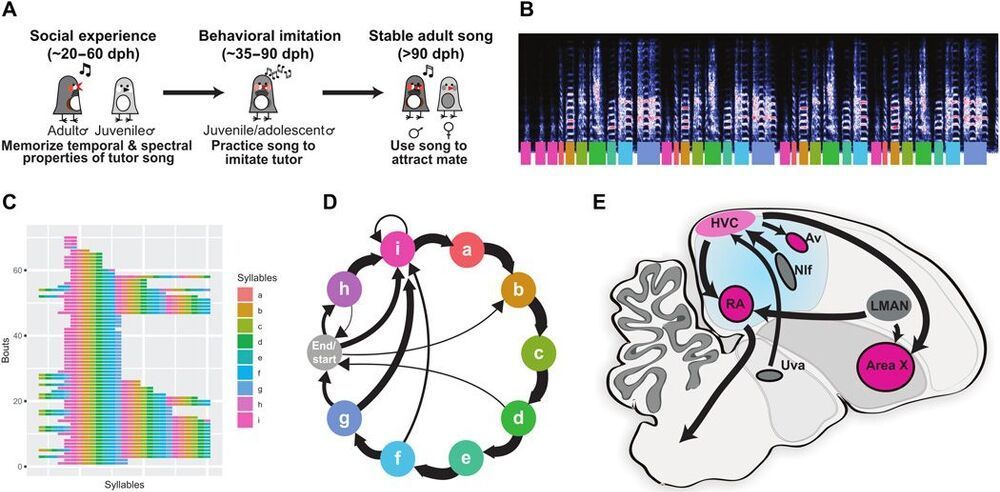

Autism spectrum disorders (ASDs) are characterized by impaired learning of social skills and language. Memories of how parents and other social models behave are used to guide behavioral learning. How ASD-linked genes affect the intertwined aspects of observational learning and behavioral imitation is not known. Here, we examine how disrupted expression of the ASD gene FOXP1, which causes severe impairments in speech and language learning, affects the cultural transmission of birdsong between adult and juvenile zebra finches. FoxP1 is widely expressed in striatal-projecting forebrain mirror neurons. Knockdown of FoxP1 in this circuit prevents juvenile birds from forming memories of an adult song model but does not interrupt learning how to vocally imitate a previously memorized song.