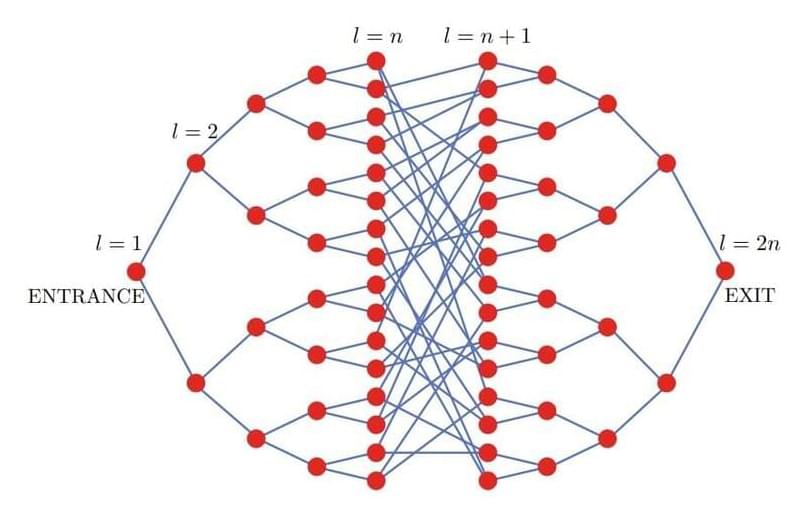

A proposed model unites quantum theory with classical gravity by assuming that states evolve in a probabilistic way, like a game of chance.

Physicists’ best theory of matter is quantum mechanics, which describes the discrete (quantized) behavior of microscopic particles via wave equations. Their best theory of gravity is general relativity, which describes the continuous (classical) motion of massive bodies via space-time curvature. These two highly successful theories appear fundamentally at odds over the nature of space-time. Quantum wave equations are defined on a fixed space-time, but general relativity says that space-time is dynamic, curving in response to the distribution of matter. Most attempts to resolve this tension have focused on quantizing gravity; the two leading proposals are string theory and loop quantum gravity. But new theoretical work by Jonathan Oppenheim at University College London proposes an alternative: leave gravity as a classical theory and couple it to quantum theory through a probabilistic mechanism [1].