So-called neuromorphic networks could be a much more efficient way to train and run machine learning algorithms.

The future of smart glasses is about to change drastically with the release of the DigiLens ARGO range next year. These smart glasses will be powered by Phantom Technology’s spatial AI assistant, CASSI. The partnership between DigiLens, based in Silicon Valley, and Phantom Technology, located at St John’s Innovation Centre, brings together the expertise of both companies to create a game-changing wearable device.

Phantom Technology, an AI start-up founded by a group of brilliant minds, has been working diligently over the years to develop advanced human interface technologies for AI wearables. Their breakthrough 3D imaging technology allows users to identify objects in their environment, enhancing their overall experience. With DigiLens incorporating Phantom’s patented optical platform into their consumer product, customers can expect a new era of smart glasses with unparalleled features.

CASSI, the novel spatial AI assistant designed by Phantom Technology, aims to boost productivity and awareness in enterprise settings. This innovative assistant combines computer vision algorithms with a large language model, enabling users to receive step-by-step instructions and assistance for various tasks. Imagine effortlessly locating any physical object or destination in the real world with 3D precision, using your voice to generate instructions, and seamlessly managing tasks using augmented reality.

In recent years, the field of artificial intelligence has witnessed remarkable advancements, with researchers exploring innovative ways to utilize existing technology in groundbreaking applications. One such intriguing concept is the use of WiFi routers as virtual cameras to map a home and detect the presence and locations of individuals, akin to an MRI machine. This revolutionary technology harnesses the power of AI algorithms and WiFi signals to create a unique, non-intrusive way of monitoring human presence within indoor spaces. In this article, we will delve into the workings of this technology, its potential capabilities, and the implications it may have on the future of smart homes and security.

The Foundation of WiFi Imaging: WiFi imaging, also known as radio frequency (RF) sensing, revolves around leveraging the signals emitted by WiFi routers. These signals interact with the surrounding environment, reflecting off objects and people within their range. AI algorithms then process the alterations in these signals to form an image of the indoor space, thus providing a representation of the occupants and their movements. Unlike traditional cameras, WiFi imaging is capable of penetrating walls and obstructions, making it particularly valuable for monitoring people without compromising their privacy.

AI Algorithms in WiFi Imaging: The heart of this technology lies in the powerful AI algorithms that interpret the fluctuations in WiFi signals and translate them into meaningful data. Machine learning techniques, such as neural networks, play a pivotal role in recognizing patterns, identifying individuals, and discerning between static objects and moving entities. As the AI model continuously learns from the WiFi data, it enhances its accuracy and adaptability, making it more proficient in detecting and tracking people over time.
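
To make the idea of distinguishing static surroundings from moving people more concrete, here is a minimal sketch. It is not the method described above: the amplitude data are synthetic, a hand-picked variance threshold stands in for the neural network, and the names `simulate_amplitudes` and `motion_detected` are hypothetical.

```python
import math
import random
import statistics

def simulate_amplitudes(n_samples, motion, rng):
    """Synthetic received-signal amplitudes (made-up data, not real WiFi measurements)."""
    samples = []
    for t in range(n_samples):
        noise = rng.gauss(0.0, 0.05)  # small measurement noise
        # Assumption: a moving body shows up as a slow oscillation in amplitude.
        sway = 0.5 * math.sin(2 * math.pi * t / 20) if motion else 0.0
        samples.append(1.0 + sway + noise)
    return samples

def motion_detected(amplitudes, threshold=0.05):
    """Flag motion when the amplitude variance exceeds a hand-picked threshold."""
    return statistics.pvariance(amplitudes) > threshold

rng = random.Random(0)
empty_room = simulate_amplitudes(200, motion=False, rng=rng)
occupied_room = simulate_amplitudes(200, motion=True, rng=rng)

print("empty room:", motion_detected(empty_room))
print("occupied room:", motion_detected(occupied_room))
```

A learned model would replace the fixed threshold with patterns extracted from many labelled recordings, but the underlying signal is the same: people moving through the room change how the radio waves arrive at the receiver.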

A scientist claims to have developed an inexpensive system for using quantum computing to crack RSA, which is the world’s most commonly used public key algorithm.

The response from multiple cryptographers and security experts is: Sounds great if true, but can you prove it? “I would be very surprised if RSA-2048 had been broken,” Alan Woodward, a professor of computer science at England’s University of Surrey, told me.

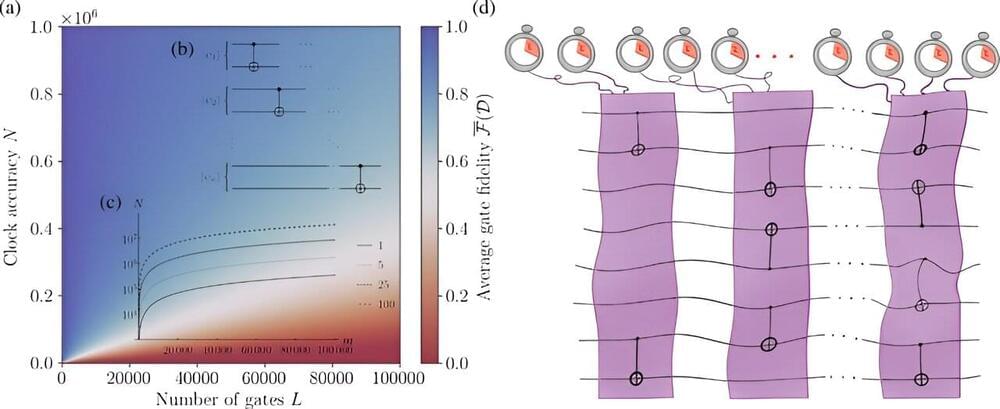

New research from a consortium of quantum physicists, led by Trinity College Dublin’s Dr. Mark Mitchison, shows that imperfect timekeeping places a fundamental limit on quantum computers and their applications. The team claims that even tiny timing errors add up to have a significant impact on any large-scale algorithm, posing another problem that must eventually be solved if quantum computers are to fulfill the lofty aspirations that society has for them.

The paper is published in the journal Physical Review Letters.

It is difficult to imagine modern life without clocks to help organize our daily schedules; with a digital clock in every person’s smartphone or watch, we take precise timekeeping for granted—although that doesn’t stop people from being late.

Even as toddlers, we have an uncanny ability to turn what we learn about the world into concepts. With just a few examples, we form an idea of what makes a “dog” or what it means to “jump” or “skip.” These concepts are effortlessly mixed and matched inside our heads, resulting in a toddler pointing at a prairie dog and screaming, “But that’s not a dog!”

Last week, a team from New York University created an AI model that mimics a toddler’s ability to generalize language learning. In a nutshell, generalization is a sort of flexible thinking that lets us use newly learned words in new contexts—like an older millennial struggling to catch up with Gen Z lingo.

When pitted against adult humans in a language task for generalization, the model matched their performance. It also beat GPT-4, the AI algorithm behind ChatGPT.

Seventy-five years ago, Claude Shannon, the “father of information theory,” showed how information transmission can be quantified mathematically, namely via the so-called information transmission rate.

Yet, until now this quantity could only be computed approximately. AMOLF researchers Manuel Reinhardt and Pieter Rein ten Wolde, together with a collaborator from Vienna, have now developed a simulation technique that—for the first time—makes it possible to compute the information rate exactly for any system. The researchers have published their results in the journal Physical Review X.

To calculate the information rate exactly, the AMOLF researchers developed a novel simulation algorithm. It works by representing a complex physical system as an interconnected network that transmits the information via connections between its nodes. The researchers hypothesized that by looking at all the different paths the information can take through this network, it should be possible to obtain the information rate exactly.
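
The idea of summing over all paths can be illustrated with a toy example. The sketch below is not the researchers' algorithm. It assumes a short binary input signal that flips at random, an output equal to the input plus Gaussian noise, and a trajectory short enough that every input path can be enumerated. The parameters `T`, `FLIP` and `SIGMA` and all function names are hypothetical. The code estimates the mutual information between input and output trajectories as the average of ln P(y|x) − ln P(y) and divides by the trajectory length.

```python
import itertools
import math
import random

T = 5        # trajectory length in time steps (assumed)
FLIP = 0.2   # per-step flip probability of the binary input (assumed)
SIGMA = 1.0  # standard deviation of the output noise (assumed)

def log_p_input(x):
    """Log-probability of a binary input path under a symmetric Markov chain."""
    logp = math.log(0.5)
    for prev, cur in zip(x, x[1:]):
        logp += math.log(FLIP if cur != prev else 1.0 - FLIP)
    return logp

def log_p_output_given_input(y, x):
    """Log-likelihood of output path y given input path x (Gaussian noise)."""
    return sum(
        -0.5 * ((yt - xt) / SIGMA) ** 2 - math.log(SIGMA * math.sqrt(2 * math.pi))
        for yt, xt in zip(y, x)
    )

# Every possible input path; feasible only because T is tiny.
ALL_PATHS = list(itertools.product((-1.0, 1.0), repeat=T))

def log_p_output(y):
    """Marginal log-probability of y, summed over every input path (log-sum-exp)."""
    terms = [log_p_input(x) + log_p_output_given_input(y, x) for x in ALL_PATHS]
    peak = max(terms)
    return peak + math.log(sum(math.exp(t - peak) for t in terms))

def sample_pair(rng):
    """Draw one input path and the noisy output path it produces."""
    x = [rng.choice((-1.0, 1.0))]
    for _ in range(T - 1):
        x.append(-x[-1] if rng.random() < FLIP else x[-1])
    y = [xt + rng.gauss(0.0, SIGMA) for xt in x]
    return x, y

def estimate_information_rate(n_samples=2000, seed=0):
    """Monte Carlo average of ln P(y|x) - ln P(y), in nats per time step."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x, y = sample_pair(rng)
        total += log_p_output_given_input(y, x) - log_p_output(y)
    return total / n_samples / T

rate = estimate_information_rate()
print(f"estimated information per time step: {rate:.3f} nats")
```

For a finite trajectory this gives the mutual information per time step rather than the true long-time rate, and brute-force enumeration stops being practical once the number of paths grows, which is the regime a dedicated simulation technique has to handle.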

Welcome to my meme complication video lolz rolf!!1! if you laugh you lose and you have to start the video over!! or if you blink or utilize any facial muscles! failing to restart or share the video will result in infinite suffering, success will result in infinite bliss.

AI has exploded onto the scene in recent years, bringing both promise and peril. Systems like ChatGPT and Stable Diffusion showcase the tremendous potential of AI to enhance productivity and creativity. Yet they also reveal a dark reality: the algorithms often reflect the same systemic prejudices and societal biases present in their training data.

While the corporate world has quickly capitalized on integrating generative AI systems, many experts urge caution, considering the critical flaws in how AI represents diversity. Whether it’s text generators reinforcing stereotypes or facial recognition exhibiting racial bias, the ethical challenges cannot be ignored.

From generating text that furthers stereotypes to producing discriminatory facial recognition results, biased AI poses ethical and social challenges.

Hussam Amrouch has developed an AI-ready architecture that is twice as powerful as comparable in-memory computing approaches. As reported in the journal Nature Communications (“First demonstration of in-memory computing crossbar using multi-level Cell FeFET”), the professor at the Technical University of Munich (TUM) applies a new computational paradigm using special circuits known as ferroelectric field effect transistors (FeFETs). Within a few years, this could prove useful for generative AI, deep learning algorithms and robotic applications.