SpaceX’s newest drone ship is on its way out into the Atlantic Ocean for a Starlink mission that will break the company’s record for annual launch cadence.

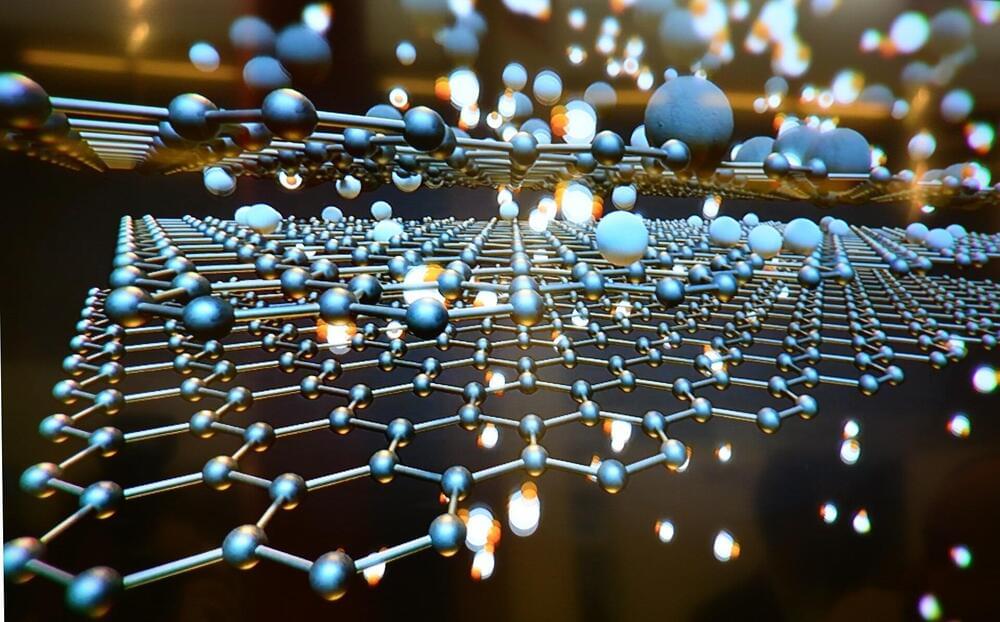

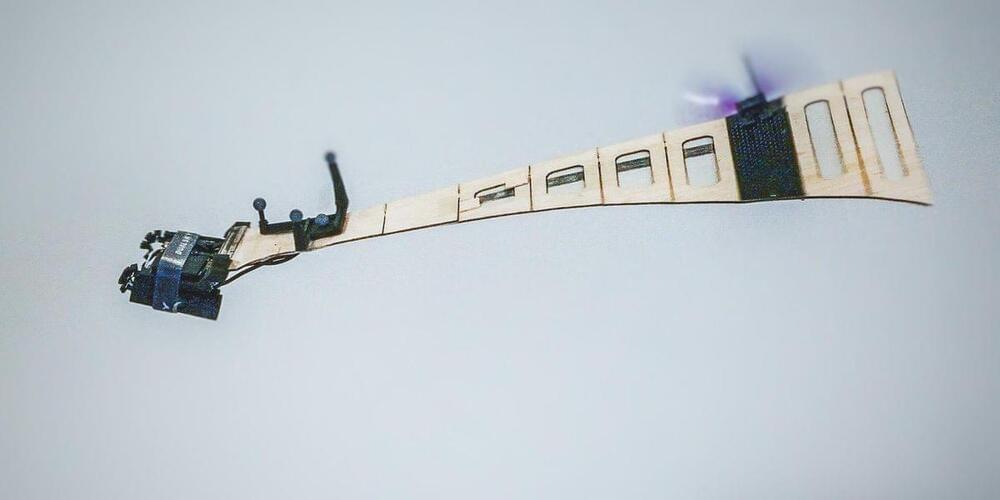

Somewhat confusingly known as Starlink Shell 4 Launch 3 or Starlink 4–3, the batch of 53 laser-linked V1.5 satellites is scheduled to fly before Starlink 4–2 for unknown reasons, even as Starlink 2–3 is likewise scheduled to fly before Starlink 2–2 on the West Coast. Regardless of the seemingly unstable launch order, perhaps related to the recent introduction of Starlink’s new V1.5 satellite design, drone ship A Shortfall of Gravitas’ (ASOG) November 27th departure from Port Canaveral confirms that SpaceX is more or less on track to launch Starlink 4–3 no earlier than (NET) 6:20 pm EST (23:20 UTC) on Wednesday, December 1st.

In a bit of a return to stride after launching 20 times in the first six months but only three times in the entire third quarter of 2021, Starlink 4–3 is currently the first of four or even five SpaceX launches scheduled in the last month of the year. More importantly, if Starlink 4–3 is successful, it will also set SpaceX up to cross a milestone unprecedented in the history of satellite launches.