Work on the planned Dallas-to-Houston high-speed rail line continues to advance slowly, despite years of pushback.

SparkLabs — an early-stage venture capital firm that has made a name for itself for backing OpenAI as well as a host of other AI startups such as Vectara, Allganize, Kneron, Anthropic, xAI, Glade (YC S23) and Lucidya AI — is gearing up to double down on more startups in the space. The VC firm announced Tuesday that it has closed a new $50 million fund, AIM AI Fund, which will back AI startups out of its own AIM-X accelerator in Saudi Arabia as well as other AI startups across the globe.

SparkLabs’ new fund and its wider investment aims underscore the bigger trends that have swirled around artificial intelligence for the last few years. The explosion of interest in generative AI in particular has led to a surge of startups in the space, as well as a rush of investors looking for the next OpenAI or, at the very least, a startup that a bigger company might snap up as it looks to sharpen its own AI edge.

It also points to how the AI opportunity continues to widen beyond Silicon Valley. AIM-X is an AI-focused startup accelerator that SparkLabs launched earlier this year in the kingdom as part of its AI Mission, a national initiative to bolster AI technology over the next five years.

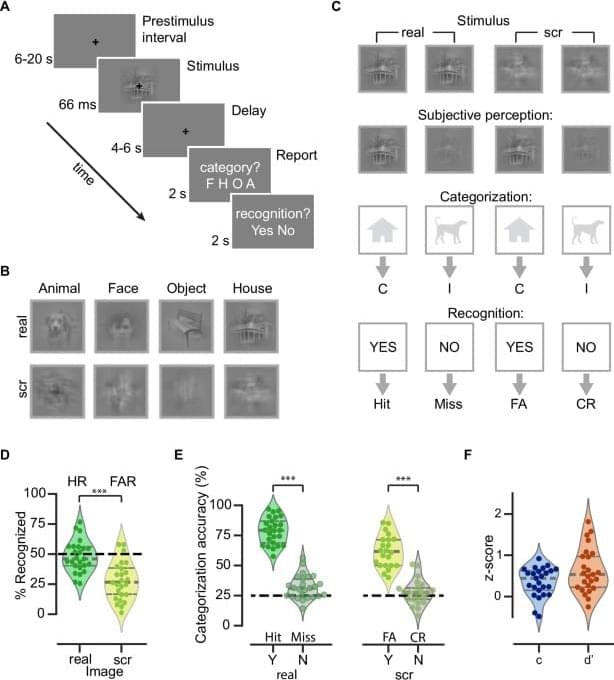

‘When sensory input meets spontaneous brain activity’

https://cell.com/trends/neurosciences/fulltext/S0166-2236(24)00153-X

https://nature.com/articles/s41467-024-50102-9

Imagine your brain as a bustling city that never sleeps, constantly active even when you’re resting or not paying attention.

It is not fully understood how spontaneous brain activity contributes to sensory processing. Here the authors find a number of influences of spontaneous brain activity on conscious perception and further illuminate the underlying mechanisms.

Here the notorious but eloquent transhumanism critic Wesley J. Smith takes a swipe at the quickly growing movement to overcome death with science. New story in Merion West!

“Utopians often produce evil because their movement’s aspirations become paramount —that is, more important than avoiding acts ‘traditionally perceived as immoral.’ If enough people follow Istvan on the transhuman roller coaster, people could eventually get hurt.”

“I’m not afraid of dying. I just don’t want to be there when it happens.” – Woody Allen.

In 2016, transhumanism proselytizer Zoltan Istvan ran for president promising to defeat death while touring the country in a bus redesigned to look like a coffin. It was a great gimmick that made him, perhaps, the most famous transhumanist in the world.

I know and like Istvan. I admire his indefatigable work ethic that has him writing hundreds of transhumanist-boosting columns and engaging in countless interviews (including by opponents like me). But his recent piece in Merion West, “When We’re Overly Optimistic about the Pace of Life Extension Research,” took a dark and disturbing turn. He warns that at the current pace of life-extending research, the transhumanist goal of living indefinitely will not be attained during his lifetime (based on a formula he concocted that he calls “the senescence inference”). In 2131, he moans, our expected lifespan will “only” be 165 years, and it will take “well over a millennium to attain Methuselah-like lifespans nearing 1,000 years.”

A real stinker.

The trial, conducted by Amazon Web Services, was commissioned by the government regulator as a proof of concept for generative AI’s capabilities, and in particular its potential to be used in business settings.

That potential, the trial found, is not looking promising.

In a series of blind assessments, the generative AI summaries of real government documents scored a dire 47 percent on aggregate based on the trial’s rubric, and were decisively outdone by the human-made summaries, which scored 81 percent.

A Space Force officer will command a mission later this month to safely bring home two astronauts who have been unexpectedly stuck aboard the International Space Station, or ISS, marking the first time a Guardian will launch into space for such a high-profile operation.

Col. Nick Hague, an active-duty Space Force Guardian, will be joined by Roscosmos cosmonaut Aleksandr Gorbunov aboard the SpaceX Dragon spacecraft for NASA’s Crew-9 mission. Originally, Hague and Gorbunov were to be joined by two other astronauts, but problems with the Boeing Starliner spacecraft, which have left astronauts Barry Wilmore and Sunita Williams stuck aboard the space station for months longer than anticipated, shifted the mission’s objective, date and staffing.

Hague and Gorbunov will launch no earlier than Sept. 24, NASA said in a Friday news release, and will return to Earth with Wilmore and Williams in February 2025. The Guardian and the cosmonaut were chosen for their particular experience and skill sets, the agency said.

Scientists have grown blood stem cells in the laboratory for the first time in a move that could potentially end the need for stem cell transplants.

A stem cell (or bone marrow) transplant replaces damaged blood cells with healthy ones and can be used to treat conditions such as leukaemia.

However, finding a donor match can be difficult and some patients die before a donor is found.