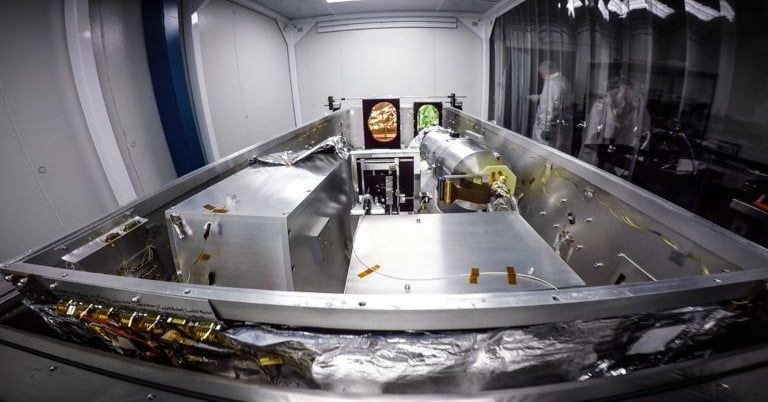

Astronomers have a new tool to help them find habitable planets in our galaxy: the Habitable Zone Planet Finder (HPF), a high-precision spectrograph. The HPF can be used to detect worlds that have certain key qualities, such as being a rocky planet orbiting a red dwarf. A red dwarf, also known as an M-dwarf, is a type of star that is relatively cool, small, and dim compared with our Sun (which is classified as a yellow dwarf). Red dwarfs, such as the nearby Barnard’s star, are common in the Milky Way, making them good hunting grounds for exoplanets.

“About 70 percent of the stars in our galaxy are M-dwarfs like Barnard’s star, but the near-infrared light they emit has made it difficult for astronomers to see their planets with ordinary optical telescopes,” Paul Robertson, assistant professor of physics and astronomy at the University of California, Irvine, said in a statement. “With the HPF, it’s now open season for exoplanet hunting on a greatly expanded selection of stellar targets.”

The HPF measures subtle changes in the color of light given off by stars, which can indicate the gravitational influence of an orbiting planet. In particular, it searches for low-mass planets located within the “habitable zone” of their stars, where liquid water can exist on the surface. During its commissioning, the spectrograph already demonstrated its usefulness by confirming the existence of a super-Earth orbiting Barnard’s star, and it should be able to detect many more planets similar in size to Earth in the future.
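
To give a sense of how subtle those color changes are, here is a minimal sketch of the standard non-relativistic Doppler relation, in which the wavelength shift equals the rest wavelength times the star's line-of-sight velocity divided by the speed of light. The 1,000 nm wavelength and 1 m/s velocity are illustrative assumptions chosen for this example, not HPF specifications, and the function name is hypothetical.

```python
C = 299_792_458.0  # speed of light in m/s


def doppler_shift_nm(rest_wavelength_nm: float, radial_velocity_m_s: float) -> float:
    """Non-relativistic Doppler shift in nanometers.

    A positive velocity means the star is moving away from the observer,
    which stretches the light toward the red (a positive shift).
    """
    return rest_wavelength_nm * radial_velocity_m_s / C


# Assumed values for illustration only: a near-infrared spectral line at
# 1,000 nm and a star wobbling at 1 m/s along the line of sight.
shift = doppler_shift_nm(1000.0, 1.0)
print(f"{shift:.2e} nm")  # prints "3.34e-06 nm"
```

Under these assumed numbers the line moves by only a few millionths of a nanometer, which is why a high-precision spectrograph is needed to pick up a planet's pull on its star.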