“Waves of virtual reality and augmented reality startups have their funding. Venture capitalists invested $1.7 billion in the AR/VR sector in the 12 months ended March 2016, and $1.2 billion of that was invested in the first quarter of this year alone, according to Digi-Capital.”

“These days every company is a tech company, but some have better niches, faster growth, more attractive offerings or more favorable share prices than others.”

With only 9 days left on the SENS cancer fundraiser, here is an article from Fight Aging! that explains why finding novel solutions to treating cancer is critical in the roadmap to longer, healthier lives.

This year’s SENS rejuvenation research crowdfunding event puts the spotlight on the SENS Research Foundation’s cancer program. So far more than 300 people have donated, and more than $26,000 has been raised; with ten days left to go, it won’t take much more of an effort to match the number of donors and the level of support given to last year’s fundraiser, which led to success in that research program. As with all of the SENS research initiatives in the science of aging, the SENS Research Foundation’s work on cancer aims to support a big, bold goal in medicine: to build a single type of therapy that can be used to effectively treat all forms of cancer. When achieved, that will greatly increase the pace of progress towards control of cancer, the goal of finally ending cancer as a threat to health. At present the cancer research community spends much of its time and funding on approaches that are highly specific to only one or only a few of the hundreds of subtypes of cancer. That is no way to win any time soon, as even with the vast funding devoted to cancer research, there are just too many forms of cancer and too few researchers. What is needed is to change the strategy, to focus on approaches to the treatment of cancer that are no more expensive to develop, but that far more patients can benefit from.

The most promising approach to a universal cancer therapy is to block telomere lengthening in cancerous tissues. Telomeres are a part of the mechanism that limits cell division in all human cells other than stem cells, repeating DNA sequences at the ends of chromosomes that shorten every time a cell divides. In order to achieve unfettered growth all cancers must bypass this limit by continually lengthening their telomeres, a goal that is achieved through mutations that allow cancer cells to use telomerase or the alternative lengthening of telomeres (ALT) processes. If both telomerase and ALT can be blocked in cancer tissue, then the cancer will wither; this is such a fundamental piece of cellular machinery that there is no expectation that cancer cells could find a way around it. Block only one of these two methods of telomere lengthening, however, and the cancer will probably switch to use the other. This has been observed in mice.

Thus it is very important that the research community deploy both telomerase and ALT blockades as a part of a prospective universal cancer therapy. Unfortunately while a number of groups are working on telomerase interdiction, and telomerase is very well studied these days, ALT is still poorly characterized, at the frontiers of what is known of cell biology. ALT doesn’t occur in normal cells, and thus despite the fact that 10% of cancers make use of it, only recently have the necessary tools been developed to work towards understanding and intervention. The SENS Research Foundation is picking up the slack in this overlooked area of development, and with our support is working towards ensuring that the first universal cancer therapies can in fact target both telomerase and ALT, and therefore succeed.

Now, there is a question that must be asked when it comes to athletes and CRISPR. As we have seen over the years with doping and athletic-enhancing drugs, how will we know for sure that an athlete from China, Russia, or even the US was not enhanced as an embryo with CRISPR to be a superior athlete? Sure, we can claim to set up a worldwide database; however, as with all things done before, not everyone plays by the rules.

The future of sport, and how technology and genetics may change it, and the lesson for business.

Although I shared this news yesterday, this article provides some added details.

Efforts towards realising a practical quantum computer have been given a huge boost after a team of researchers made successful advances in qubit miniaturisation.

Qubits or quantum bits are the basic building blocks behind quantum computing and hold the capacity to process enormous computational tasks in real-time. However, working out how to miniaturise the technology remains a huge obstacle to quantum computer development.

One approach to the challenge involves trapped ions, but this requires large and complicated hardware components. Now, researchers at MIT are developing a prototype chip which is able to trap ions in an electric field and control them using targeted laser technology.

Glad folks realize and admit to the risks; however, I stand by my argument: as long as the underpinning technology is still tied to dated digital technology, it will be hacked by folks like China, who are planning to be on new quantum networks and platforms. That is why all countries must never lose sight of replacing their infrastructure and the net with quantum technology.

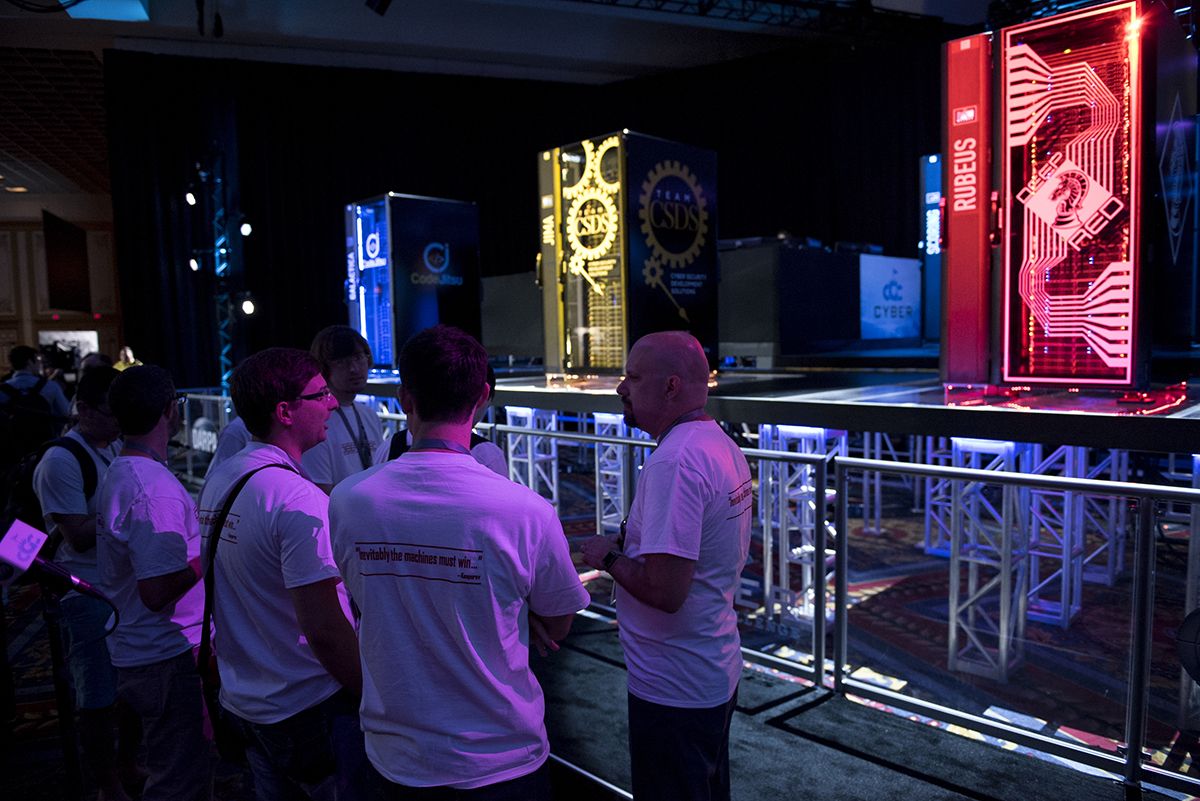

A team of researchers from Pittsburgh has won DARPA’s Cyber Grand Challenge – a competition billed as the ‘world’s first automated network defense tournament.’ The implications for the Internet of Things (IoT) are grave, as the machines on display threaten to ravage the already flaky state of IoT security.

DARPA, the Department of Defense’s (DoD) Defense Advanced Research Projects Agency, is a wing of the US military that investigates how the latest technological breakthroughs can be put to use on the battlefield. With nation-state cybersecurity now declared a new front in conventional warfare, militaries around the world will be flocking to gather the tools needed to exert force in this new medium.

So while the DARPA competition presents itself as a smiley affair, it does represent the new face of an emerging weapon – and the most promising weapon on display came from the aforementioned ForAllSecure, a startup with close ties to Pittsburgh’s Carnegie Mellon University.

Many folks have voiced concerns over autonomous autos for many legitimate reasons, including hacking and weak satellite signals for navigation, especially when you consider the mountain ranges of the East Coast.

The world has witnessed enormous advances in autonomous passenger vehicle technologies over the last dozen years.

The performance of microprocessors, memory chips and sensors needed for autonomous driving has greatly increased, while the cost of these components has decreased substantially.

Software for controlling and navigating these systems has similarly improved.

Nice.

This summer, more than a million tons of chardonnay grapes are plumping on manicured vineyards around the world. The grapes make one of the most popular white wines, but their juicy fruit and luscious leaves are also targets for diseases such as downy mildew, a stubborn fungus-like parasite.

Tags: Wine, Pesticides, CRISPR

LAS VEGAS, Nev. — Mayhem ruled the day when seven AIs clashed here last week — a bot named Mayhem that, along with its competitors, proved that machines can now quickly find many types of security vulnerabilities hiding in vast amounts of code.

Sponsored by the Defense Advanced Research Projects Agency, or DARPA, the first-of-its-kind contest sought to explore how artificial intelligence and automation might help find security and design flaws that bad actors use to penetrate computer networks and steal data.

Mayhem, built by the ForAllSecure team out of Carnegie Mellon University, so outclassed its competition that it won even though it was inoperable for about half of the contest’s 96 rounds, each 270 seconds long. Mayhem pivoted between two autonomous methods of finding bugs and developing ways to exploit them.