Sep 15, 2014

Robots Aren’t Out to Get You. You Should Be Terrified of Them Anyway.

Posted by Seb in category: robotics/AI

By Nick Bostrom — Slate

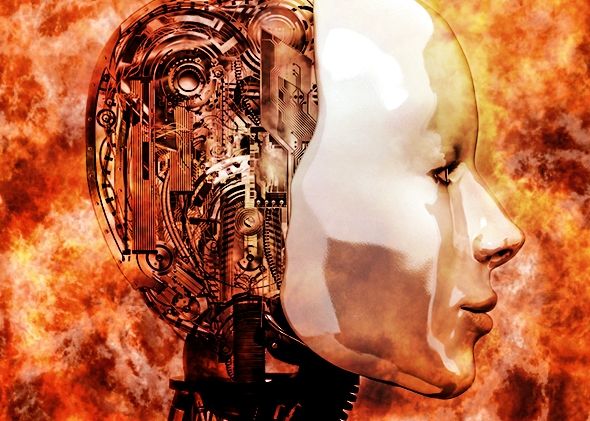

In the recent discussion over the risks of developing superintelligent machines (that is, machines with general intelligence greater than that of humans), two narratives have emerged. One side argues that if a machine ever achieved advanced intelligence, it would automatically know and care about human values and wouldn’t pose a threat to us. The opposing side argues that artificial intelligence would “want” to wipe humans out, either out of revenge or an intrinsic desire for survival.

As it turns out, both of these views are wrong. We have little reason to believe a superintelligence will necessarily share human values, and no reason to believe it would place intrinsic value on its own survival either. These arguments make the mistake of anthropomorphising artificial intelligence, projecting human emotions onto an entity that is fundamentally alien.