Flying train, circa 2015.

Amazon announced Thursday that it plans to develop new technology for its autonomous delivery vehicles in Helsinki, Finland.

The Seattle-headquartered tech giant said in a blog post that it is setting up a new “Development Center” to support Amazon Scout, which is a fully electric autonomous delivery robot that is being tested in four U.S. locations.

Two dozen engineers will be based at the Amazon Scout Development Center in Helsinki initially, the company said, adding that they will be focused on research and development.

It costs $199.99 and includes monitor and tripod mounts.

Dell has launched a high-end UltraSharp webcam that costs $199.99 and is available now worldwide. Its cylindrical design is reminiscent of Apple’s old but iconic iSight external webcam, but its features are meant to compete with Logitech’s Brio and other modern 4K-ready webcams. In addition, it aims to serve as a more affordable and easier-to-set-up alternative to mounting a DSLR camera behind your monitor.

The UltraSharp is a USB-C webcam that houses a Sony STARVIS CMOS 8.3-megapixel sensor. It’s capable of recording or streaming in 4K at 30 or 24 frames per second and in 1080p or 720p at 24, 30, or 60 frames per second. You can tweak the field of view (FOV) between 65 degrees for a close crop, 78 degrees, or 90 degrees for the widest crop available. The webcam has a bevy of auto-light correction features that aim to make your picture look good regardless of your lighting. It supports up to 5x digital zoom and has autofocus. Dell claims the UltraSharp offers the best image quality in its class.

This webcam can work without drivers on Windows 10 or macOS computers, but many of its features are accessible only in Dell’s Peripheral Manager software. One of the most appealing features that the software unlocks is the AI auto-framing mode that lets it follow your movements to keep you centered in the frame. The webcam doesn’t actually move, but the video feed appeared to pan and deliver smooth, seamless motion tracking during a live demo shown to The Verge. A similar feature has appeared recently in Amazon’s new Echo Show smart displays and the latest iPad Pro, and it’s a perk that currently sets Dell’s webcam apart from the rest.
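The idea of a feed that pans while the camera stays still can be illustrated with a digital crop that follows the subject. The sketch below is not Dell's method, which the article does not describe. It assumes a 3840×2160 frame (consistent with the 8.3-megapixel figure), a 1920×1080 output window, and a subject position supplied by some hypothetical detector. The names `Crop`, `frame_subject`, and `smooth` are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Crop:
    x: int  # left edge of the crop window, in full-frame pixels
    y: int  # top edge of the crop window
    w: int  # crop width
    h: int  # crop height


def frame_subject(cx, cy, frame_w=3840, frame_h=2160, crop_w=1920, crop_h=1080):
    """Center a crop window on the subject at (cx, cy), clamped to the frame.

    The frame and crop sizes are assumptions, not values from Dell.
    """
    x = min(max(cx - crop_w // 2, 0), frame_w - crop_w)
    y = min(max(cy - crop_h // 2, 0), frame_h - crop_h)
    return Crop(x, y, crop_w, crop_h)


def smooth(prev, target, alpha=0.2):
    """Move a fraction alpha of the way toward the target each frame.

    Applying this to the crop position over successive frames gives a
    gradual pan instead of a jump when the subject moves.
    """
    return prev + alpha * (target - prev)
```

Calling `frame_subject` once per frame and easing the crop origin with `smooth` would produce the panning effect from a fixed sensor. The clamping keeps the window inside the frame when the subject nears an edge.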

The US power grid needs all the support it can get. Sad that some would stand in the way of progress.

There is no love lost between the notorious Koch brothers and the nation’s railroad industry, and the relationship is about to get a lot unlovelier. A massive new, first-of-its-kind renewable energy transmission line is taking shape in the Midwest, which will cut into the Koch family’s fossil energy business. It has a good chance of succeeding where others have stalled, because it will bury the cables along existing railroad rights-of-way and avoid stirring up the kind of opposition faced by conventional above-ground lines.

The Koch brothers and their family-owned company, Koch Industries, have earned a reputation for attempting to throttle the nation’s renewable energy sector. That makes sense, considering that the diversified, multinational firm owns thousands of miles of oil, gas, and chemical pipelines criss-crossing the US (and sometimes breaking down) in addition to other major operations that depend on rail and highway infrastructure.

Koch Industries owns fleets of rail cars, but one thing it doesn’t have is its own railroad right-of-way. That’s a bit ironic, considering that railroads provided the initial kickstart for the family business back in the 1920s, but that is where trouble has been brewing today.

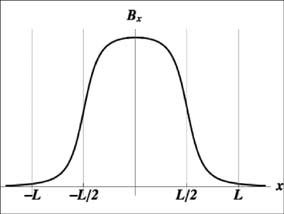

Now, researchers are homing in on an artificial photosynthesis device that could let us do the same trick, turning sunlight and water into clean-burning hydrogen fuel for our cars, homes, and more.

Solar cells already let us convert sunlight into electricity. Artificial photosynthesis devices, however, use sunlight to turn water or carbon dioxide into fuels such as hydrogen or ethanol.

These can be stored more easily than electricity and used in different ways, allowing them to substitute for fossil fuels like oil and gas.

The Q engineer, who likes to fiddle around with bicycle tires, decided to remove the entire internal hub (spokes and frame) from the wheel of his bicycle. He then crafted, molded, and welded an entire external system to ensure the ride would be smooth.

I doubt they were the first to use artificial intelligence in war, but this does discuss the AI technologies used in the recent conflict.

They used AI technology to identify targets for air strikes, specifically to counter the extensive tunnel network of their opponents.

The Israel Defense Forces (IDF) has claimed the world’s first use of artificial intelligence (AI) and supercomputing in its recent conflict with Hamas.

The IDF said it relied heavily on machine learning and data gathering for a period of over two years during its Operation Guardian of the Walls.

An outstanding idea: for one, there is a video, TV show, or movie showing every conceivable action a human can do; and second, the AI could watch all of these at very high speed.

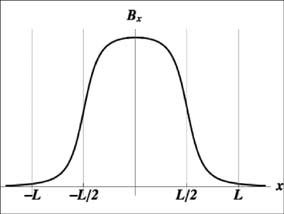

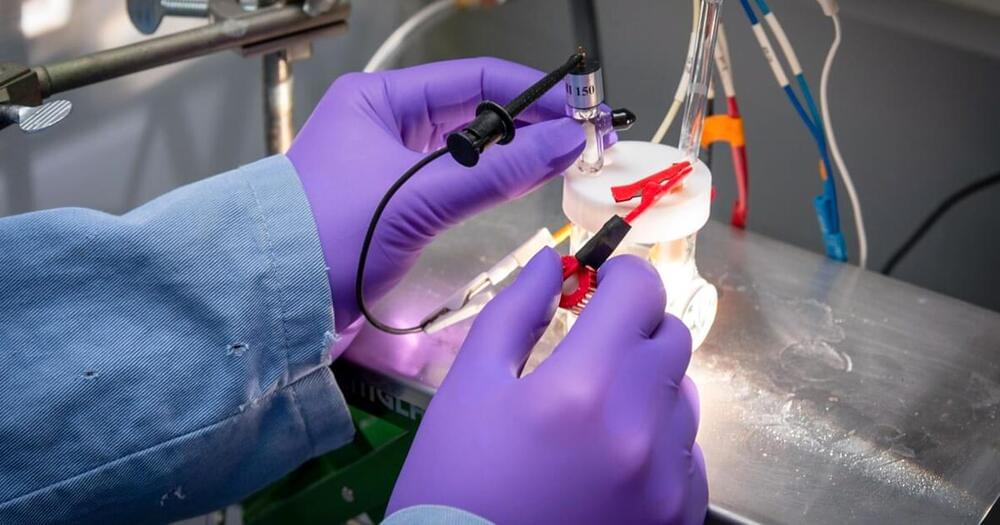

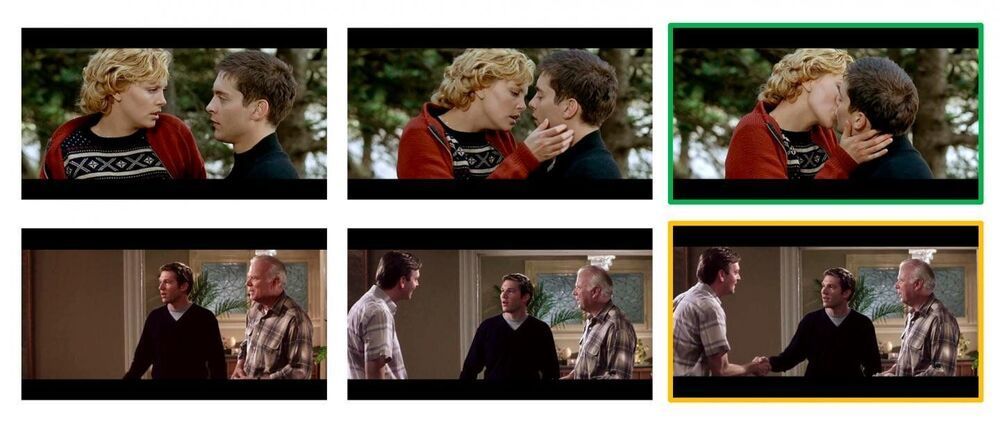

Predicting what someone is about to do next based on their body language comes naturally to humans but not so for computers. When we meet another person, they might greet us with a hello, handshake, or even a fist bump. We may not know which gesture will be used, but we can read the situation and respond appropriately.

In a new study, Columbia Engineering researchers unveil a computer vision technique for giving machines a more intuitive sense for what will happen next by leveraging higher-level associations between people, animals, and objects.

“Our algorithm is a step toward machines being able to make better predictions about human behavior, and thus better coordinate their actions with ours,” said Carl Vondrick, assistant professor of computer science at Columbia, who directed the study, which was presented at the International Conference on Computer Vision and Pattern Recognition on June 24, 2021. “Our results open a number of possibilities for human-robot collaboration, autonomous vehicles, and assistive technology.”

Electric motors have a lot of power, and it is available instantly on demand.

When Tesla unveiled its new Plaid Model S earlier this month, it took the world by storm, fueling dreams of speed, racing, and fun. As a kind of grand finale to Tesla’s event, another event was talked about in the weeks that followed: the annual race to the summit of Pikes Peak. The race is also known as “The Race to the Clouds,” and its course winds through 156 turns over 12.42 miles on the way to the summit.