Lawrence Livermore National Laboratory (LLNL) scientists, in collaboration with the University of Nevada, Las Vegas (UNLV), have discovered a previously unknown pressure-induced phase transition in TATB that can help predict the detonation performance and safety of the explosive. The research appears in the May 13 online edition of Applied Physics Letters, where it is highlighted as a cover and featured article.

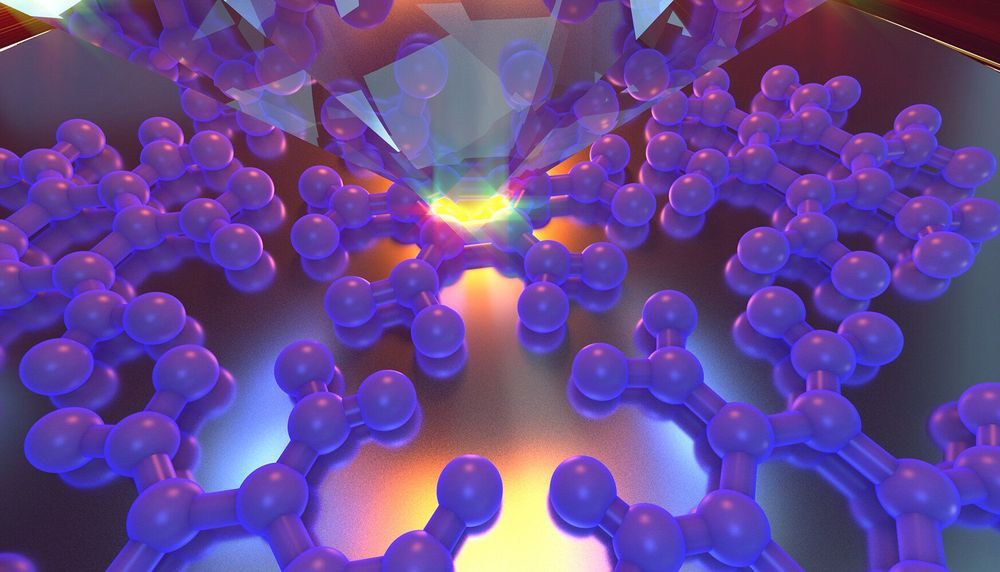

1,3,5-Triamino-2,4,6-trinitrobenzene (TATB), the industry standard for an insensitive high explosive, stands out as the optimum choice when safety (insensitivity) is of utmost importance. Among materials with comparable explosive energy release, TATB is remarkably difficult to shock-initiate and has a low friction sensitivity. The causes of this unusual behavior are hidden in the high-pressure structural evolution of TATB. Supercomputer simulations of detonating explosives, run on the world’s most powerful machines at LLNL, depend on knowing the exact locations of the atoms in the crystal structure of an explosive. Accurate knowledge of the atomic arrangement under pressure is the cornerstone for predicting the detonation performance and safety of an explosive.

The team performed experiments using a diamond anvil cell, which compressed TATB single crystals to a pressure of more than 25 GPa (about 250,000 times atmospheric pressure). All previous experimental and theoretical studies had indicated that the atomic arrangement in the crystal structure of TATB remains the same under pressure. The project team challenged this consensus, aiming to clarify the high-pressure structural behavior of TATB.
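
The pressure comparison quoted above can be checked with a short calculation. The sketch below assumes "atmospheric pressure" means one standard atmosphere (101,325 Pa); the function name `gpa_to_atmospheres` is purely illustrative and does not come from the research.

```python
# Assumption: "atmospheric pressure" is one standard atmosphere, 101,325 Pa.
STANDARD_ATMOSPHERE_PA = 101_325.0


def gpa_to_atmospheres(pressure_gpa: float) -> float:
    """Convert a pressure in gigapascals to standard atmospheres."""
    return pressure_gpa * 1e9 / STANDARD_ATMOSPHERE_PA


# 25 GPa is the pressure reached in the diamond anvil cell experiments.
ratio = gpa_to_atmospheres(25.0)
print(f"25 GPa is about {ratio:,.0f} standard atmospheres")
```

The result is a little under 250,000, so the article's figure is a rounded value.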
