The future of cancer care should mean more cost-effective treatments, a greater focus on prevention, and a new mindset: A Surgical Oncologist’s take

Multidisciplinary team management of many types of cancer has led to significant improvements in median and overall survival. Unfortunately, there are still other cancers on which we have had little impact. In patients with pancreatic adenocarcinoma and hepatocellular cancer, we have been able to improve median survival by only a few months, and at the cost of toxicity associated with the treatments. Speaking as a surgical oncologist, I believe there will be rapid advances over the next several decades.

Robotic Surgery

There is already one surgical robot system on the market and another will soon be available. Advances in robotics and imaging have allowed for improved 3-dimensional spatial recognition of anatomy, and the range of movement of instruments will continue to improve. Real-time haptic feedback may become possible with enhanced neural network systems. It is already possible to perform some operations with greater facility, such as very low sphincter-sparing operations for rectal adenocarcinoma in patients who previously would have required a permanent colostomy. As surgeons’ ability and experience with new robotic equipment grow, the number and types of operations performed will increase, and patient recovery time, length of hospital stay, and return to full functional status will improve. Competition may drive down the exorbitant cost of current equipment.

More Cost-Effective Screening

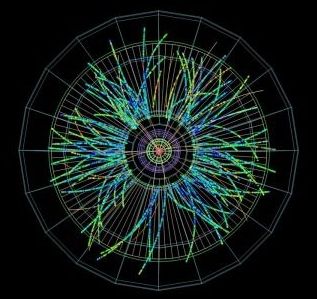

The mapping of the human genome was a phenomenal project and achievement. However, we still do not understand the function of all of the genes identified or the complex interactions with other molecules in the nucleus. We also forget that cancer is a perfect experiment in evolutionary biology. Once cancer has developed, we begin treatments with cytotoxic chemotherapy drugs, targeted agents, immunotherapies, and ionizing radiation. Many of the treatments are themselves mutagenic, and they place selection pressure that favors cells with mutations allowing them to evade the treatment or repair the damage it causes, and then to survive, multiply, and metastasize. In some patients who are seeming success stories, new cancers develop years or decades later, induced by the therapies used to treat their initial cancer. Currently, we place far too little emphasis on screening and prevention of cancer. Hopefully, in the not-too-distant future, screening of patients with simple, readily available, and inexpensive blood tests looking at circulating cells and free DNA may allow us to recognize patients at high risk of developing certain malignancies, or to detect cancer at far earlier stages when surgical and other therapies have a higher probability of success.

Changing the Mindset

A diagnosis of cancer incites fear and uncertainty in patients and their family members. Many feel they are receiving a certain death sentence. While we have improved the probability of long-term success with some cancers, there are others where we have simply shifted the survival curve to produce a few more months of survival before the patient succumbs. We need to adopt strategies that allow us to contain and control malignant disease without necessarily eradicating it. If a tumor or tumors are in a dormant or senescent state and not causing symptoms or problems, minimally toxic treatments that stop tumor growth and progression and allow the patient to live a normal and productive life would be a success. Patients with a diagnosis of diabetes are never “cured” of their diabetes, but with proper medical management their disease can be controlled and they can survive and function without any of the negative consequences and sequelae of the disease. If we can understand genetic signaling and aberrations sufficiently, perhaps we can control cancer for long periods while maintaining a high quality of life for our patients.

Taking on Tough Political Issues

I am often asked by patients if I believe there will ever be a “cure” for cancer. I invariably reply that it is unlikely if we continue to engage in activities and behaviors which increase the likelihood of developing cancer. Cigarette smoking, smokeless tobacco use, excess alcohol or food intake, lack of exercise, and pollution of the environment around us produce carcinogens or conditions increasing the risk of cancer development. Unless we find the courage and strength to limit access to or ban substances that are known carcinogens, like cigarettes, and begin, as thoughtful citizens of the planet, behaving in a more responsible fashion to eliminate air, ground, and water pollution, we will not make a significant impact on the incidence of cancer. We must also be willing to develop greater and more far-reaching population education programs about things as simple as proper ultraviolet light protection during sun exposure, and to recognize that tanning beds and excessive, unprotected natural sunlight exposure increase the risk of a particularly difficult and vicious malignancy, melanoma. Whether we like to admit it or not, humans respond to societal pressures and images displayed or touted by media, marketing firms, or so-called beauty and glamor outlets that may actually be harmful to the health of the populace. People do and should have free will, but they should also be given understandable, honest, and rational information on the potential consequences of their choices. There should also be a higher level of personal accountability and responsibility for negative outcomes based on an individual’s choices.

Global Cancer Care

It is estimated that between half and two-thirds of the world’s population, particularly in poor or developing countries, have limited or no access to cancer prevention, screening, or care. The improved outcomes we report in medical and surgical journals from advanced countries assume the treatment can be paid for and access is available to all. Nothing is further from the truth. Meaningful efforts must be made to rein in the rampant increases in cancer drug costs, to reduce the prohibitively long and expensive process to develop and approve a novel treatment, and to provide training and education for practitioners in developing countries. The disparities even within the United States are great, and it is well known and documented that disadvantaged populations are often diagnosed with later-stage disease and generally have reduced chances of long-term success with the treatments available. We must become inclusive, not exclusive, in our worldview and, through outreach and development programs, begin to build infrastructure and access to affordable care worldwide.

Thinking Outside the Box

Personalized or individualized patient cancer care is a popular buzz phrase these days. In reality, we currently have very few drugs or targeted agents to act upon the numerous genetic or epigenetic abnormalities present in the average cancer. To search for drugs for new targets or abnormal pathways, we must create a system where there is rapid assessment, cost-effectiveness, and streamlined regulatory approval for patients with lethal diseases. Personalized cancer treatment is not affordable without major changes in policy and practice. We should recognize that malignant tumors have interesting physicochemical and electrical properties different from the normal tissues from which they arise. Therapy with electromagnetic fields specifically tailored to a given patient’s tumor properties can enhance tumor blood flow and improve delivery of drugs or agents while reducing toxicity and side effects. Developing approaches that do not produce acute and long-term side effects or an increased risk of developing second malignancies must be a priority.

Science and technology information is being produced at an incomprehensible rate. We need help from colleagues who specialize in big data management and in recognizing trends and developments that can be quickly disseminated throughout the medical community and to appropriate patient populations. All of these measures require commitment and dedication to changing the way we think and to reversing priorities based far too much on the profitability of treatments rather than their availability and affordability. Nor can we ignore the importance of programs to improve cancer prevention, screening, and early diagnosis.